Last month, Mitchell Hashimoto, HashiCorp co-founder, publicly declared that he was moving his popular open source Ghostty terminal emulator project from GitHub. GitHub runs the world’s largest service built on the Git distributed version control system, created by Linus Torvalds.

Once an enthusiastic user, Hashimoto grew disillusioned with service disruptions and increasingly slow pull requests. “This is no longer a place for serious work if it just blocks you out for hours per day, every day,” he wrote.

Hashimoto was quick to defend Git itself: “The issue isn’t Git, it’s the infrastructure we rely on around it: issues, PRs, Actions, etc.”

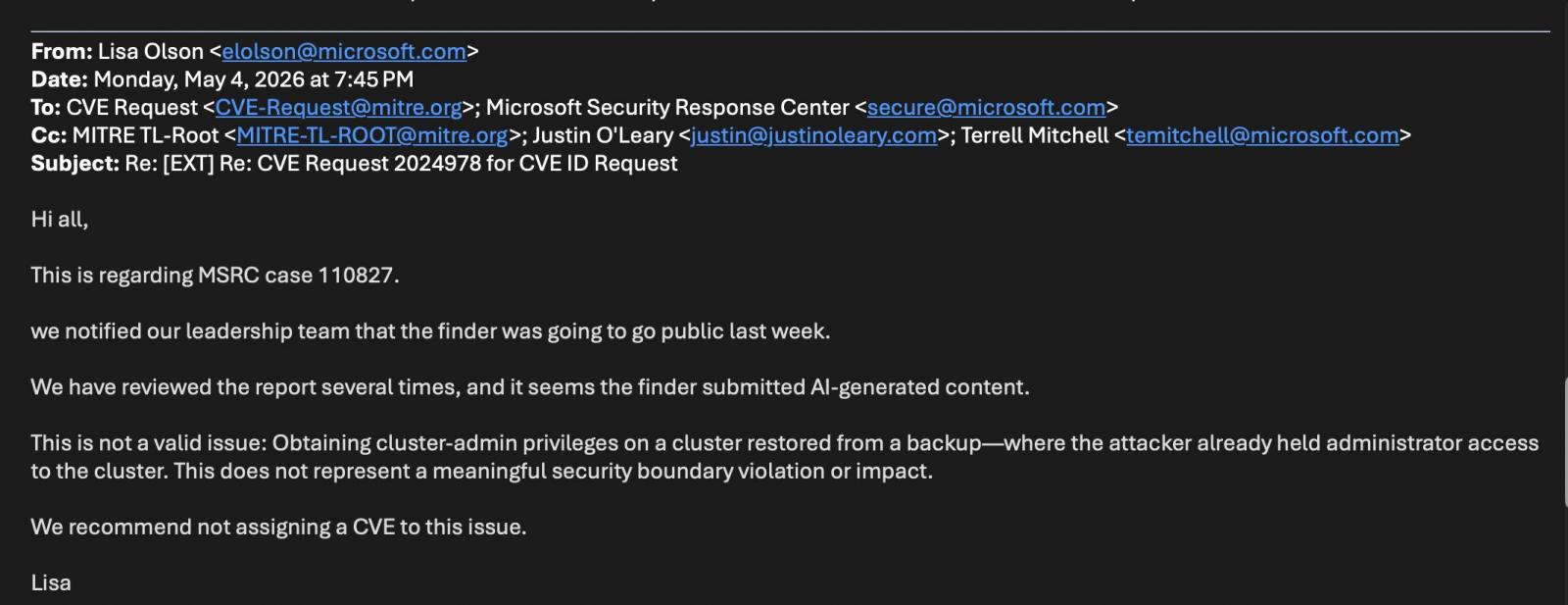

Many have blamed GitHub’s performance on Microsoft, which acquired the company in 2018. But to be fair, GitHub itself has been experiencing heavier-than-expected traffic thanks to a proliferation of AI-generated pull requests.

In 2025, GitHub saw 206 percent year-over-year growth in AI-generated projects, as measured by the use of Bash shell scripts, a widespread way of running agents. And more AI code means more bugs. Research from GitClear found that AI-generated code averaged 10.83 issues per pull request, compared to 6.45 for the old-fashioned human variety.

Our new agentic workforce is raising big questions about how the entire software development lifecycle (SDLC) should evolve, and whether Git should come along.

“Agents are nudging us toward a continuous flow,” warned Peco Karayanev, co-founder of DevOps platform provider Autoptic, which bridges Git-based deployments with observability tools for agent-based remediation.

Autoptic’s entire user base runs on some form of Git, either self-hosted or from a service provider like GitLab.

Given the volume and magnitude of changes across repos, “we need git to start operating in a more continuous mode,” Karayanev wrote in an email interview.

Git operations, especially when used in GitOps-style automated deployments, still need to be managed by people. Updates, commits, pushes, and merges are often yoked into sequences of “stop/go” episodes where someone has to hit enter on the keyboard a few times to continue the workflow, Karayanev noted. This model may not hold up once agents start getting priority.

A butler for Git

Git has always had its share of critics, especially those who use the tool daily.

There may not be another piece of software that is so widely adopted and yet so inscrutable. Torvalds and other Linux kernel developers built Git in 2005 after frustrations with trying to shoehorn Linux code into the commercial BitKeeper tool. Linux, a global group project of mammoth proportions, required a distributed version control system able to support non-linear development of thousands of parallel branches.

Like any distributed system, Git can be difficult to understand.

Scott Chacon, one of the co-founders of GitHub, co-wrote a book on using Git (2009’s Pro Git), and still he finds himself occasionally flummoxed by the version control system.

There are still “sharp edges” to Git, Chacon told The Register. “There’s a lot of stuff that it doesn’t do very well from a usability standpoint,” he said.

Chacon co-founded GitButler as a way to “rethink the porcelain” of Git, to make Git more suitable to modern workflows. (Last month, GitButler received $17 million in venture capital funding.)

Think of GitButler as a super-powered Git client. It allows the developer to work on two different branches simultaneously, using a technique called virtual branching. It reconciles the code a developer is working on with the upstream code. Developers can reorder commits or edit the message of a previous commit. It offers richer metadata about the files being worked on, and it can show which commits are unique to a given branch.

Best of all, it eliminates what many developers call “rebase hell,” where merges into an updated codebase must be checked one at a time, a problem GitButler solves by keeping the user’s code synchronized with what is upstream.

Many of the actions GitButler offers can be done through the Git command itself – although Git’s command language, and its rules, can be so obscure that “you will probably make a mistake at some point,” Chacon said.

A Git for agents

Chacon believes GitHub’s reliability issues stem from the current tsunami of agentic work.

This is “ironic” because GitHub was built to scale Git, he said. “But an influx of agents is pushing the service to the brink.”

The problem lies not with Git itself, but with everyone using one service, Chacon argued. Last year, GitHub had about 180 million users working across 630 million repositories – with 121 million created in 2025 alone, according to the company’s most recent annual Octoverse report.

“From the longer-term perspective, it doesn’t need to be like this,” he argued. Maybe Git should be run locally, mirrored globally and managed with clients … such as GitButler, Chacon suggested. Perhaps Git-based version control systems could be customized for specific industry verticals.

We need to think about how we “distribute these systems more,” he said. “Git is designed to be distributed but we’re not distributing it.”

GitButler has created a command line interface specifically for agents. It was designed to give MCP servers an integrated map of the repository, which otherwise would require stitching together multiple Git commands. The Virtual Files concept allows the agent to work on a section of code that is also being worked on by a developer, or another agent.

These are changes that point to a rethinking of how a Git workflow should run.

“I think all of these systems should fundamentally change, because all of our workflows have changed, right? There needs to be different, sort of primitives for how to deal with these problem sets,” Chacon said.

A tip from gaming development

One company that wants its platform to replace Git altogether is Diversion, which has built an eponymous distributed version control system initially pitched for large-scale game design.

“Git’s architecture is actually an issue that prevents scaling,” argues Diversion CEO Sasha Medvedovsky in an interview with The Register. “Fundamentally it’s an architecture problem that can’t be fixed and is a bottleneck for end users and hosting services.”

Git is a distributed system insofar as every user, or hosted service, requires a dedicated database (much like a blockchain). “It’s not distributed in the regular sense but rather replicated,” he wrote in an exchange with The Register on LinkedIn.

Operations run on a single thread, making concurrent operations impossible. As a result, the larger the repository, the slower the commit operations – a deadly combination for fast-paced agentic software development, Medvedovsky noted.

Of course, every CEO will have their talking points ready about a competitor’s weaknesses (Diversion is finalizing a blog post with hard numbers about Git and GitHub performance). But there is a growing number of other initiatives around prepping Git for the challenging times ahead.

Perhaps most notable is Jujutsu, a Git-compatible distributed version control system, stewarded by Google senior software engineer Martin von Zweigbergk. Like GitButler, Jujutsu (jj) aims to eliminate a lot of the annoyances that come with Git. It includes an undo button and the ability to keep committing even when there is a conflict.

And because everything written in C must be recast into Rust these days, long-time Git contributor Sebastian Thiel started a project called Gitoxide to rebuild Git in Rust. Potential benefits include significant performance improvements through multicore processing, and the much-needed memory safety that comes with Rust.

Will Git 3 solve all the problems?

Git’s chief maintainer is Junio Hamano, who took the reins from Torvalds in 2005. And he remains busy keeping Git current.

At FOSDEM this February, core Git contributor and GitLab engineering manager Patrick Steinhardt discussed some of the changes coming in the next version of Git, version 3, which is gradually being rolled out this year.

One of the chief improvements will be in the way Git manages references, the names (such as branches and tags) that point to specific commits. Surprisingly, this operation is a real bottleneck for the software. “The design is inefficient,” Steinhardt told the audience.

Git can store each reference as its own small file, but to save time and space it consolidates them into a single “packed-refs” file.

As projects grow larger, however, it takes longer for Git to amend or delete a reference in packed-refs. (One GitLab repo has a packed-refs file of more than 20 million references, Steinhardt said.)

This is especially problematic when you have multiple, simultaneous readers and writers of that file. And just forget about getting a consistent view of all the references.
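To see why simultaneous writers are awkward for a single shared file, consider the lock-then-rename pattern that Git's file-based reference storage relies on: a writer creates a `.lock` file next to the target, writes the full new contents there, and renames it into place. The Python below is only a minimal sketch of that pattern, not Git's actual code; the function names `rewrite_with_lock` and `demo_contention` and the sample file contents are made up for illustration.

```python
import os
import tempfile


def rewrite_with_lock(path: str, new_content: str) -> None:
    """Replace `path` atomically while holding `<path>.lock` (hypothetical helper)."""
    lock_path = path + ".lock"
    # O_EXCL makes creation fail if another writer already holds the lock.
    fd = os.open(lock_path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o644)
    try:
        with os.fdopen(fd, "w") as lock_file:
            # The writer must emit the complete new file, not just one entry.
            lock_file.write(new_content)
        # Renaming publishes the new contents and releases the lock in one step.
        os.replace(lock_path, path)
    except BaseException:
        if os.path.exists(lock_path):
            os.unlink(lock_path)
        raise


def demo_contention() -> dict:
    """Show that a second writer is refused while the lock file exists."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "packed-refs")
        rewrite_with_lock(path, "aaa refs/heads/main\n")

        # Simulate another writer that is still holding the lock.
        held = os.open(path + ".lock", os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o644)
        try:
            try:
                rewrite_with_lock(path, "bbb refs/heads/main\n")
                blocked = False
            except FileExistsError:
                blocked = True
        finally:
            os.close(held)
            os.unlink(path + ".lock")

        # Once the lock is gone, the next writer succeeds.
        rewrite_with_lock(path, "ccc refs/heads/main\n")
        with open(path) as handle:
            final = handle.read()
    return {"blocked": blocked, "final": final}
```

In this sketch only one writer can proceed at a time, and each one rewrites the whole file, so every update pays a cost that grows with the size of the file.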

The freshly implemented Reftable feature, which will be the default in Git 3.0, stores references in an indexable binary format. The Git folks borrowed this concept from the Eclipse Foundation’s JGit Java implementation of Git.

Reftable allows for block updates, eliminating the need to rewrite a 2 GB-sized file for a single entry. And it is much faster for reading, which would pave the way for Git supporting larger, more sprawling repositories – perfect for an ever-busy agentic workforce.
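The difference in write cost can be illustrated with a toy model. The Python sketch below is not the Reftable format, which is binary and block-structured; it simply assumes, for illustration, that a flat file must be rewritten in full on every change, while a stacked design records a change as a small new table checked before older ones. The class names `FlatRefFile` and `StackedRefTables` and the record layout are hypothetical.

```python
from typing import Dict, List, Optional


def record(name: str, oid: Optional[str]) -> bytes:
    """Serialize one reference; '-' stands in for a deletion marker."""
    return f"{oid or '-'} {name}\n".encode()


def build_refs(count: int) -> Dict[str, str]:
    """Create `count` fake branch names with fake 40-character object IDs."""
    return {f"refs/heads/branch-{i:05d}": f"{i:040x}" for i in range(count)}


class FlatRefFile:
    """Toy model of one flat file holding every reference."""

    def __init__(self, refs: Dict[str, str]) -> None:
        self.refs = dict(refs)
        self.bytes_written = 0

    def delete(self, name: str) -> None:
        del self.refs[name]
        # Removing one entry means writing out every remaining entry again.
        self.bytes_written += sum(len(record(n, o)) for n, o in self.refs.items())


class StackedRefTables:
    """Toy model of a stack of tables, newest last (an assumed design)."""

    def __init__(self, refs: Dict[str, str]) -> None:
        self.tables: List[Dict[str, Optional[str]]] = [dict(refs)]
        self.bytes_written = 0

    def update(self, name: str, oid: Optional[str]) -> None:
        # A change is recorded as a small new table; older tables stay untouched.
        self.tables.append({name: oid})
        self.bytes_written += len(record(name, oid))

    def delete(self, name: str) -> None:
        # A deletion is just an update that records "no value".
        self.update(name, None)

    def lookup(self, name: str) -> Optional[str]:
        # The newest table that mentions the name wins.
        for table in reversed(self.tables):
            if name in table:
                return table[name]
        return None
```

With 10,000 fake references, deleting a single branch costs the flat model roughly 650 KB of writes, against a few dozen bytes for the stacked model. A real implementation would also need to merge small tables periodically, which this sketch leaves out.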

For more than two decades, Git has proved to be the version control system of choice for geeks worldwide. But even with these new features and various third-party enhancements, can it retain relevance for a new generation of agentically enhanced coders?

The battle is on. ®
