When Sam Altman first told her that he’d never let OpenAI go corporate, that what he and his colleagues were building was too powerful to be driven by investors, Catherine Bracy more or less believed him.

Tech

OpenAI built a $180 billion charity. Will it do any good?

The conversation took place in 2022, when Bracy, CEO and founder of the social mobility-focused nonprofit TechEquity, was interviewing Altman for a book she was writing about the dangers of venture capital. It was before Altman’s mysterious firing and unfiring a year later, after which he mostly stopped responding to Bracy’s texts.

And ever since then, OpenAI — which was initially founded as a nonprofit in 2015 to “advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return” — has been publicly trying to escape the confines of its charitable roots. Today, OpenAI contains both a corporate arm focused on building and selling AI and a nonprofit arm with a stated mission of ensuring that AI benefits people.

During the controversial process of trying to fully sever the two in 2024, OpenAI lost about half of its AI safety staffers and much of its senior leadership. That was followed by intensified scrutiny from state attorneys general, nonprofit legal experts, competitor companies, effective altruists, Nobel Prize winners, vast swaths of California’s philanthropic community, and one of its original funders, Elon Musk. Different sides had different interests, but the overall argument was that shifting to a for-profit model would create a fiduciary duty to investors that would inherently clash with its original mission of safety and public benefit.

Is OpenAI’s new foundation a $180 billion distraction?

- Last October, OpenAI agreed to make its nonprofit arm very rich. The OpenAI Foundation is now worth about $180 billion and it has two main objectives:

  - Helping the world adapt to and benefit from AI by giving money to charity.

  - Acting as a moral compass for OpenAI the company, especially when it comes to safety and security decisions.

- The foundation has given away about $40.5 million so far, a small fraction of the billions it plans to eventually donate. But critics see the donations as a distraction.

- While OpenAI says its foundation has the final say on security and safety-related decisions, the company has come under scrutiny in recent months for striking a deal with the Pentagon, fighting against statewide AI legislation, and testing ads for free users.

- Even if the foundation does eventually give away billions of dollars, it may never be enough to make up for what the public lost in allowing OpenAI to go corporate.

Nonetheless, OpenAI did finally strike a contorted restructuring deal last October. Essentially, the for-profit arm became what is known as a public benefit corporation (PBC), called the OpenAI Group. The original nonprofit became the OpenAI Foundation, which holds a 26 percent stake in the PBC, currently worth $180 billion, plus a sliver of exclusive legal control over certain major decisions.

One effect of the transition was that it essentially required OpenAI to put a number on what it owed the public for converting what had been a project for all humanity into something that most directly benefits the company’s investors. The resulting stake of the OpenAI Foundation is big enough to instantly make it one of the wealthiest charities in the country, or in OpenAI’s words, the “best-equipped nonprofit the world has ever seen.” On paper, at least, the foundation is now significantly richer than the entire country of Luxembourg. Even the Gates Foundation has only $77.6 billion in assets, less than half of what the OpenAI Foundation can draw from, though it’s important to note that most of the wealth of the OpenAI Foundation is locked in fairly illiquid shares within the still private company, which limits how quickly any money can be given away.

Still, its sheer size means that the OpenAI Foundation stands to eventually be a transformative presence on the philanthropic stage, one way or another. But while OpenAI says the foundation will eventually give out many billions of dollars in philanthropy to ensure that “artificial general intelligence benefits all of humanity,” it’s uncertain that a socially beneficial philanthropy can exist side by side with a company that is fighting an existential battle over who will dominate the AI industry.

“The unspoken truth here is that they’re never going to make a decision that is bad for the company,” Bracy said. “These two entities cannot live under the same roof” where “the mission is in control.” (Disclosure: Vox Media is one of several publishers that have signed partnership agreements with OpenAI. Our reporting remains editorially independent.)

The foundation’s first gifts came in the form of $40.5 million in no-strings-attached grants to over 200 community nonprofits, like churches, food banks, and afterschool programs. Notably, most grantees had little to no connection to AI or technology — and just as notably, several of these early grantees just so happen to be members of EyesOnOpenAI, a coalition of California nonprofits critical of OpenAI’s privatization that formed in 2025.

But there are signs the foundation will soon pivot into grantmaking that’s more obviously relevant to the company’s original charter, which aimed to ensure that the benefits of AI are broadly distributed while also prioritizing long-term safety in the technology’s development. On Feb. 19, OpenAI — the company, not the foundation — announced a $7.5 million grant in conjunction with Microsoft, Anthropic, Amazon, and other major tech companies for a new, international project aimed at researching how to make AI systems safer.

The real questions around the OpenAI Foundation have less to do with how much it is giving and to whom than whether it is actually able to carry out its contractual oversight role. In theory, the foundation should be ensuring that OpenAI is the standard-bearer for ethical decision-making at the frontier of AI development. That would be a unique contribution to the field — and an embodiment of OpenAI’s original mission — that no amount of grantmaking could replace. Yet, a series of troubling recent decisions by the company hardly seems to bear out that vision.

OpenAI has begun its new corporate journey by debuting ads on its free-tier service; firing, on charges of sexual discrimination against a male colleague, an executive who had raised safety concerns about a soon-to-come NSFW mode for ChatGPT; and burning cash while its president funnels millions of dollars into Donald Trump’s super PAC. OpenAI President Greg Brockman has also teamed up with the venture capital firm Andreessen Horowitz and Palantir’s co-founders to fund a $125 million super PAC aimed at promoting AI-friendly policies. Along with Google, xAI, and Anthropic, OpenAI has also come under scrutiny in recent weeks for its defense contracts with the Pentagon.

When OpenAI succeeded in its campaign to wrest its foundational new technology from nonprofit control, it opened the door for many of these decisions. Even $180 billion in charity might not be enough to make up the difference.

How OpenAI shed its nonprofit skin

Corporate charity is ubiquitous in the tech world, especially among the biggest players. Microsoft plans to donate $4 billion in cash and AI cloud technology to schools and nonprofits by 2030. Google gives away some $100 million annually, often to organizations focused on artificial intelligence and technology.

But from the beginning, OpenAI was different. Rather than making money and giving some of it to charity, OpenAI was the charity. It was founded as a nonprofit research lab with about $1 billion in start-up donations, mostly from tech titans like Altman, Brockman, and Elon Musk.

There are some structural advantages to being a charity. You can’t accept investments, but you can accept donations and you don’t have to pay most taxes. What’s more, in those early days, OpenAI’s stated mission — to build safe AI without the pressures of financial incentive — gave it a major boost when it came to recruitment for rarified talent. Machine learning prodigy Ilya Sutskever told Wired in 2016 that he chose to leave Google to become OpenAI’s chief scientist “to a very large extent, because of its mission.”

But there were limits to being a fully nonprofit entity. In pursuit of financing amid the rising computing costs of cutting-edge AI, OpenAI created its capped-profit subsidiary in 2019 to manage a new $1 billion investment from Microsoft. Three years later, ChatGPT took the world by storm. In 2023, Sutskever and other members of OpenAI’s board tried and ultimately failed to oust Altman amid accusations of dishonesty. (Altman denied those accusations.) One year later, in 2024, the organization announced its intention to go fully corporate and splinter off the nonprofit into its own fully independent entity.

The transition to for-profit “just didn’t smell right,” said Orson Aguilar, head of LatinoProsperity, an economic justice nonprofit and Bracy’s co-leader at EyesOnOpenAI. He wasn’t alone: By early 2025, a dozen former OpenAI employees filed an amicus brief aimed at stopping the conversion because it would “fundamentally violate its mission.” And more than 60 nonprofit, philanthropy, and labor leaders, many of them based in OpenAI’s home state of California, agreed that the attempt to privatize felt unfair given the extent to which the company benefited from its tax-free status during its early development.

To grasp what this all means, try thinking of OpenAI’s for-profit arm as an angsty tween and the nonprofit as her well-meaning but often powerless parent. For years, the tween had been allowed to do her own thing, but only within certain limits: she still had to do her homework and get home by a certain time. Now imagine she’s sick of having a curfew. “Nobody else has one!” She still lives in her mother’s house, but she wants to follow her own rules.

That’s kind of what happened here. Up until now, OpenAI’s for-profit subsidiary had a capped-profit model, meaning there were limits on how much money investors could make. But this new deal paved the way for the for-profit to become a full-time corporate girlie, charitable bylaws be damned. And while OpenAI’s new public benefit corporation still technically exists under the original nonprofit’s control, it mostly follows its own rules. It can raise as much money as it wants and eventually, it will likely go public.

But California history did provide some hope that the public might at least get some meaningful benefit from the transition. Back in the 1990s, California’s branch of the health insurer Blue Cross Blue Shield — then a nonprofit called Blue Cross of California — decided to privatize. After some haggling with state regulators, the company agreed to forfeit all of its assets, worth $3.2 billion, to a pair of independent nonprofits in exchange for going private. The result was the California Endowment, which is now the state’s largest health foundation.

Many nonprofit leaders in California hoped that OpenAI, which is headquartered in the state, would strike a similar deal, ceding a majority of its assets to a fully independent nonprofit. And those assets were and are enormous.

Gary Mendoza, a former state official who oversaw the Blue Cross deal, estimated the OpenAI nonprofit’s rightful assets at over $250 billion, or half the company’s $500 billion worth. “Anything short of 50 percent,” he told the San Francisco Examiner last year, “is a missed opportunity.” And beyond money for the public, assuming the nonprofit kept its shares, it would add up to enough influence to really shape OpenAI’s corporate decision-making at a key moment for the future of artificial intelligence.

Given that the OpenAI Foundation ended up with little more than a quarter of the final company, this is obviously not what happened. But EyesOnOpenAI’s years-long lobbying effort was not a total bust. The criticism proved powerful enough that last May, OpenAI was forced to give up on an initial plan to restructure away its nonprofit assets into a new organization wholly disconnected from OpenAI, which would have left the nonprofit with no legal control over the for-profit arm.

On paper, the new deal includes some meaningful concessions. It contractually requires the nonprofit mission to come first on safety and security issues, with no regard to shareholder interests. The memorandum also calls on OpenAI to “mitigate risks to teens” specifically. And it makes the foundation the controlling shareholder of the corporation, affording it the right to appoint corporate directors and oversee critical decisions like a sale.

If OpenAI abided by all of its terms and eventually started giving away billions of dollars of philanthropy each year, then the world — or at least California, where many of OpenAI’s grants have been concentrated — could stand to greatly benefit from it.

Random acts of corporate kindness

And this brings us to the $40.5 million that OpenAI gave to over 200 nonprofits toward the end of last year.

Many of these charities applied for the grants with sophisticated ideas around how to help their communities integrate or adapt to AI, though they can ultimately use the grants however they see fit. Among them were public libraries, Boys and Girls Clubs, churches, food banks, and legal aid nonprofits. Coming at a moment when the majority of the country’s nonprofits face existential funding cuts, “it was just the perfect timing,” said Thomas Howard Jr., head of Kidznotes, a North Carolina nonprofit focused on music education that received $45,000 in OpenAI’s first round of grants.

Civil society’s fight over the OpenAI transition thus won at least enough concessions to help these worthy organizations and retain some semblance of nonprofit control over some of the for-profit’s activities. So why do so many people in the philanthropic community remain so negative about the foundation?

“I’m all for nonprofits getting money,” said Bracy, the head of TechEquity. “I don’t begrudge any organizations that took the money, but I don’t think it’s some indication that OpenAI is living up to the mission of the nonprofit.”

$40.5 million, of course, is only 0.02 percent of the OpenAI Foundation’s on-paper $180 billion windfall. How the foundation will eventually spend the other 99.98 percent remains to be seen, though the foundation has said that at least $25 billion will ultimately go to scientific research and what it’s calling “technical solutions for AI resilience.” The company plans to announce a second wave of grants directed at organizations using AI to work across issues like health in the coming months.
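The percentages above are easy to check by hand. The short sketch below redoes the arithmetic using only the dollar figures quoted in this article; the variable and function names are illustrative, and the $180 billion is the on-paper value of a largely illiquid stake, so the results are rough shares rather than a spending forecast.

```python
# Dollar figures as quoted in the article (on-paper values, not cash on hand).
GRANTS_USD = 40.5e6           # first round of grants
FOUNDATION_USD = 180e9        # on-paper value of the foundation's stake
PLEDGED_RESEARCH_USD = 25e9   # "at least $25 billion" for research and AI resilience


def share_percent(part: float, whole: float) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


granted = share_percent(GRANTS_USD, FOUNDATION_USD)
remaining = 100.0 - granted
pledged = share_percent(PLEDGED_RESEARCH_USD, FOUNDATION_USD)

print(f"granted so far:       {granted:.2f}% of the stake")
print(f"not yet granted:      {remaining:.2f}%")
print(f"pledged for research: {pledged:.1f}% (a floor, per the foundation)")
```

Under these figures, the research pledge covers only a minority of the stake, which leaves most of the eventual spending unspecified.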

“We are doing the important work of engaging with experts, learning from communities, and shaping a point of view of where Foundation investments can make the greatest difference,” the OpenAI Foundation’s board of directors said in response to a request for clarity on where future funding will go. “We look forward to sharing more soon.”

But so far, critics remain skeptical. OpenAI has done little to prove that its newfound philanthropy is more than just “a smoke and mirrors show,” argued one member of the Coalition for AI Nonprofit Integrity (CANI) — a coalition composed largely of AI insiders, including former OpenAI employees, furiously opposed to the restructuring. He spoke on the condition of anonymity because he feared retaliation from OpenAI, which has accused CANI of being a front funded by Musk. (CANI has denied receiving any such funds — though not for lack of trying. If you scroll to the bottom of OpenTheft, a website created by CANI, you’ll find a direct plea to Musk for donations.)

While a spokesperson for OpenAI said that the foundation is in the process of building a dedicated team, and has sought the input of both nonprofit leaders and experts in how society can adapt to AI, the company has yet to make any major staffing announcements for its grantmaking arm. For now, with the exception of Zico Kolter, the head of the nonprofit’s safety committee, the foundation board still shares the same members as the corporate board, including CEO Sam Altman. The idea is that these board members can put on different hats when meeting about nonprofit versus corporate priorities, asserting the foundation’s oversight when needed. But it has created the appearance of a conflict of interest.

When asked for mechanisms and examples for how the foundation has responded to situations where its mission conflicts with shareholder interests, given the overlapping board membership, the spokesperson said that OpenAI has conflict-of-interest policies and governance procedures in place to ensure its directors only consider the mission when they meet, as they regularly do, about nonprofit issues.

The company also said the foundation board constantly exercises its oversight role, including for all new major product releases, like the release of GPT‑5.3‑Codex, an advanced agentic coding model, last month. The AI watchdog group the Midas Project, a frequent thorn in OpenAI’s side, accused the company of violating safety standards, an allegation that OpenAI fervently denied.

In any case, since the OpenAI Foundation is not a separate entity with its own independent board, some critics have compared it to other feel-good corporate social responsibility ventures, like McDonald’s Ronald McDonald House, Walmart’s healthy foods program, and Home Depot’s work with veterans.

Corporate social responsibility has its place, and it can do real good. But given the OpenAI Foundation’s structure and how it has conducted its grantmaking so far, Bracy believes it will probably never fund anything “they see as a threat to the growth of the company,” despite the fact that the need for guardrails on unrestricted AI development featured prominently in the company’s original mission. “They’re going to do what’s best for the bottom line of the for-profit,” she said.

Critics like Bracy also doubt that the OpenAI Foundation can fulfill its other main prerogative, which is to govern all safety and ethics-related issues for the broader organization, including the responsibility to review new products.

While the nonprofit and its mission do legally retain control over the OpenAI corporation — particularly when it comes to safety issues — that may add up to little, given that the OpenAI Foundation doesn’t seem to be an independently governed foundation. It is not, in fact, even technically a foundation, but a public charity, which means it is not required to pay out a certain percentage of its assets each year under IRS requirements.

And while the nonprofit retains significant oversight powers on paper — including the authority to halt AI releases it deems unsafe — in practice, critics say, it’s unclear whether it would ever use them.

Increasingly, OpenAI has also been wading into political lobbying efforts that seem at odds with its mission to promote long-term safety in AI development. When California lawmakers were debating SB 53, a law requiring transparency reports from leading AI companies, OpenAI lobbied against it. And the company has come under intense scrutiny in recent weeks for its contract with the Pentagon, which has blacklisted its rival company Anthropic for raising ethical concerns about the use of its technology.

Why the fight is not over

OpenAI’s new corporate arrangement is very, very new. It’s still possible that OpenAI’s grantmaking arm really does staff up, and the nonprofit builds an independent board that has the power to enforce hard ethical decisions for the company, even when it hurts investors’ returns.

“They have a lot of freedom to continue to do good,” said Tyler Johnston, executive director of the Midas Project, but that would require them to “actually shake things up” and “show that they’ve created the scaffolding that will enable them to actualize their mission.”

But so far, “there’s nothing I’ve seen that gives me reassurance that they’ll catch the important safety issues when they come up,” he said. “Or that they’ll be doing a thorough investigation of the grantmaking opportunities.”

If OpenAI does not abide by the terms of its new contract — if the company, for example, tries to thwart an attempt to roll back a dangerous new tool — then California’s attorney general does have the power to demand answers from the company, and in theory, revisit the agreement’s terms.

Beyond the agreement, there are a few quite public means by which OpenAI’s former lovers, skeptics, and nemeses are still trying to press rewind on the restructuring.

Chief among them is Elon Musk, OpenAI’s most prominent original donor and co-founder. In between trading embarrassing jabs with Altman on X, Musk took OpenAI to court last year over claims that he was “assiduously manipulated” into donating tens of millions of dollars to a nonprofit research lab that turned into an “opaque web of for-profit OpenAI affiliates.”

A judge has found enough cause for the case to proceed to trial this April. Musk is suing for up to $134 billion in damages, though OpenAI has told its investors that it believes it would only be on the hook for Musk’s $38 million in original donations. OpenAI, for its part, has accused Musk of an “unlawful campaign of harassment.”

Meanwhile, CANI is still holding out hope that it can convince the people of California to vote for a hyperspecific ballot measure, the California Charitable Assets Protection Act, which could reverse the decision to allow OpenAI — or any other “organizations developing transformative technologies” — to go corporate.

“They’re cutting corners on safety because of the race to artificial general intelligence that they just want to win,” said the member of CANI. “Instead of a vehicle to serve humanity, it’s become a vehicle to serve one individual and a few of his friends and investors.”

So maybe the fight over OpenAI’s restructuring isn’t completely over — but it’s probably on its last legs. And if they continue on the same path, it’s unlikely that the public will ever really benefit in the way they ought to, given the charitable benefits OpenAI enjoyed in its early days. At the very least, $40.5 million is just not going to cut it. Even $180 billion might fall far short.

“I think it’s them saying, ‘Listen, I dare you to enforce this,’” said Bracy, who believes OpenAI is “banking on the fact that they’re worth almost a trillion dollars, and they have endless resources — and the state of California does not.”

Tech

Testing Suggests Google’s AI Overviews Tells Millions of Lies Per Hour

A New York Times analysis found Google’s AI Overviews now answer questions correctly about 90% of the time, which might sound impressive until you realize that roughly 1 in 10 answers is wrong. “[F]or Google, that means hundreds of thousands of lies going out every minute of the day,” reports Ars Technica. From the report: The Times conducted this analysis with the help of a startup called Oumi, which itself is deeply involved in developing AI models. The company used AI tools to probe AI Overviews with the SimpleQA evaluation, a common test to rank the factuality of generative models like Gemini. Released by OpenAI in 2024, SimpleQA is essentially a list of more than 4,000 questions with verifiable answers that can be fed into an AI.

Oumi began running its test last year when Gemini 2.5 was still the company’s best model. At the time, the benchmark showed an 85 percent accuracy rate. When the test was rerun following the Gemini 3 update, AI Overviews answered 91 percent of the questions correctly. If you extrapolate this miss rate out to all Google searches, AI Overviews is generating tens of millions of incorrect answers per day.

The report includes several examples of where AI Overviews went wrong. When asked for the date on which Bob Marley’s former home became a museum, AI Overviews cited three pages, two of which didn’t discuss the date at all. The final one, Wikipedia, listed two contradictory years, and AI Overviews confidently chose the wrong one. The benchmark also prompts models to produce the date on which Yo-Yo Ma was inducted into the Classical Music Hall of Fame. While AI Overviews cited the organization’s website that listed Ma’s induction, it claimed there’s no such thing as the Classical Music Hall of Fame. “This study has serious holes,” said Google spokesperson Ned Adriance. “It doesn’t reflect what people are actually searching on Google.” The search giant likes to use a test called SimpleQA Verified, which uses a smaller set of questions that have been more thoroughly vetted.
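The extrapolation from a benchmark miss rate to a volume of wrong answers is simple multiplication, sketched below. The two accuracy figures come from the report; the daily answer volume is a purely hypothetical placeholder, since the excerpt does not say how many AI Overviews Google serves, so the output illustrates the method only.

```python
def wrong_answers(accuracy: float, answers_per_day: float) -> dict:
    """Scale a benchmark miss rate to an assumed daily answer volume."""
    miss_rate = 1.0 - accuracy
    per_day = miss_rate * answers_per_day
    return {
        "miss_rate": miss_rate,
        "per_day": per_day,
        "per_hour": per_day / 24,
        "per_minute": per_day / (24 * 60),
    }


# ASSUMPTION: placeholder volume chosen for illustration, not taken from the report.
HYPOTHETICAL_ANSWERS_PER_DAY = 1_000_000_000

# Accuracy rates reported for the two benchmark runs.
for label, accuracy in [("Gemini 2.5 run", 0.85), ("Gemini 3 run", 0.91)]:
    result = wrong_answers(accuracy, HYPOTHETICAL_ANSWERS_PER_DAY)
    print(
        f"{label}: {result['miss_rate']:.0%} miss rate, "
        f"{result['per_day']:,.0f} wrong per day, "
        f"{result['per_minute']:,.0f} per minute"
    )
```

The estimate scales linearly with the assumed volume. It also inherits Google's objection quoted above: a benchmark miss rate may not match the miss rate on real searches.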

Tech

Earthset and eclipse, oh my! NASA releases magnificent images from Artemis mission’s moon flyby

A day after the Artemis 2 mission’s historic lunar flyby, NASA has released a stunning set of high-resolution images documenting Earthset and Earthrise, a solar eclipse that set the moon aglow, and other views of the lunar far side and the astronauts who took the pictures.

The photographs were taken during a seven-hour lunar observation period at the farthest point of the Orion space capsule’s 10-day odyssey. The mission marked the first crewed trip around the moon since Apollo 17 in 1972, and the farthest-ever voyage by space travelers (252,756 miles from Earth, and more than 4,000 miles beyond the moon).

The Earthset photo was captured just as our home planet was sinking beneath the lunar horizon, followed about 40 minutes later by a picture of Earth rising above the horizon on the other side of the moon. The pictures rekindled the spirit of NASA’s original Earthrise photo, taken by astronaut Bill Anders during Apollo 8’s round-the-moon mission in 1968.

As Artemis 2’s astronauts prepared to take their own Earthrise photo, NASA astronaut Christina Koch said she was inspired by the original. “I had the photo up in my room as a kid, and it was part of what inspired me to keep working hard to achieve things I dreamed about,” she said.

The original Earthrise is one of the best-known photos from the Apollo era, but it took decades to confirm who actually took the shot. Anders wasn’t the sort of person to make a fuss over attribution. After a long career at NASA, at the Nuclear Regulatory Commission, in the diplomatic corps and in private industry, he settled down in Western Washington and founded the Heritage Flight Museum in Burlington, Wash. Two years ago, he died in a plane crash in waters off the San Juan Islands at the age of 90.

Anders and the original Earthrise aren’t the only connections linking Artemis 2 with the Pacific Northwest. The success of the mission depends in part on components built in the Seattle area. L3Harris’ Aerojet Rocketdyne facility in Redmond worked on Orion’s main engine and built some of its thrusters, while Karman Space Systems’ Mukilteo facility provided mechanisms for Orion’s parachute deployment system and emergency hatch release system.

Artemis 2’s four astronauts — Koch, NASA mission commander Reid Wiseman, pilot Victor Glover and Canadian astronaut Jeremy Hansen — were scheduled for off-duty periods today as Orion coasted toward Friday’s Pacific Ocean splashdown. The astronauts took questions from the crew of the International Space Station during a ship-to-ship chat.

“Basically, every single thing we learned on ISS is up here,” Koch said. The big difference? “I found myself noticing not only the beauty of the Earth, but how much blackness there was around it,” she said. “It just made it even more special. It truly emphasized how alike we are, how the same thing keeps every single person on planet Earth alive. … We have some shared things about how we love and live that are just universal. The specialness and preciousness of that really is emphasized when you notice how much else there is around it.”

Meanwhile, NASA’s image-processing team put in long hours overnight to work on the pictures taken by Artemis 2’s astronauts during the flyby. Pictures are being posted to NASA’s lunar flyby gallery.

Tech

Netflix launches Playground, a kid-friendly gaming app with no ads or extra fees

The newly announced Netflix Playground is an all-in-one app designed to give children a curated gaming experience built around familiar cartoon characters. The streaming giant describes it as an ever-growing library of instantly playable games for kids aged 8 and under.

Tech

Buick’s Rarest ’70s Muscle Car Was Only Produced For Three Years

At the height of the muscle car era, Buick built a very rare car with an engine powerful enough to set it apart from most others of its type: the Buick GSX. The GSX was a higher-performance evolution of the GS, or Gran Sport, moniker that Buick had used since it first shoehorned a 401-cubic-inch “nailhead” engine from the larger Wildcat into the intermediate-sized Skylark in 1965. The Buick GSX definitely qualified as having one of the classic muscle car engines that made tons of torque.

Without a doubt, the 455-cubic-inch engine in the GSX did make a huge amount of torque. Even though the base 455 in the GSX was rated at 350 horsepower, which has generally been acknowledged as severely underrated to keep the car insurance underwriters calm, it was also rated at 510 lb-ft of torque, the highest-listed torque rating during the muscle-car heyday.

The Buick GSX was made during a three-year period, during which the fortunes of the muscle cars would both rise and fall. The GSX’s run started in 1970, which could be considered the peak year for American muscle cars, particularly those from General Motors, and ended in 1972. A total of 678 GSX examples were produced in 1970, with just 124 in 1971 and an even lower 44 in 1972. And then the GSX was done, with only 846 units having ever been produced.
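As a quick sanity check on the production figures, the sketch below sums the three model years quoted above and shows each year's share of the run. The numbers are the article's; the variable names are illustrative.

```python
# GSX production by model year, as quoted in the article.
production = {1970: 678, 1971: 124, 1972: 44}

total = sum(production.values())

for year, units in production.items():
    share = 100 * units / total
    print(f"{year}: {units:>3} units ({share:.1f}% of the run)")

print(f"total: {total} units")
```

The yearly figures add up to the stated total of 846, with the 1970 model year accounting for roughly four out of five cars built.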

What was so special about the GSX?

In reality, the 1970 Buick GSX was essentially a package of appearance items that was applied to the 1970 GS model, which came with either the standard 350-horsepower 455 or the 360-horsepower Stage 1 engine. The buyer had a choice of two exterior colors: the unique Saturn Yellow and the non-exclusive Apollo White.

The GSX also received a front chin spoiler in black, a Buick-branded hood tach originally popularized on Pontiac’s GTO and Grand Prix, a rear spoiler that sat atop the trunk lid, body-colored racing-type mirrors and headlight bezels, a padded steering wheel, and, of course, the two distinctive broad hood stripes, complemented by the narrower stripe running along the body sides from front to rear and crossing at the rear spoiler. A firmed-up suspension called the “Rallye ride package” used gas shocks, sway bars, stiffer springs and bushings, and power front discs to improve the Buick GSX’s handling.

The 455-cubic-inch Buick GSX motor could be upgraded with the Stage 1 package, which added larger valves, a higher-compression cylinder head, a more aggressive camshaft, an upgraded four-barrel Rochester Quadrajet carburetor, and a retimed distributor. This made it one of the most powerful Buick engines, ranked by horsepower. Transmission options were either a three-speed Turbo Hydramatic or a four-speed manual. Performance numbers for the 1970 Buick GSX Stage 1, as tested by Motor Trend, were a quarter-mile time of 13.38 seconds at 105.5 mph. Pretty fast.

What happened to the Buick GSX in 1971 and 1972?

The 1971 Buick GSX saw some changes, as both emission regulations and the heavy hand of the insurance industry began to rein in performance. General Motors required all of its vehicles to run on regular gasoline, which lowered the standard 455’s compression ratio from 10:1 to 8.5:1, while the Stage 1 lost a full two points of compression. Horsepower dropped accordingly, from 350 to 315 in the standard 455 and from 360 to 345 in the Stage 1. One more change that Buick made to the GSX for 1971 and 1972 was the availability of a smaller, lower-powered engine: a 350-cubic-inch mill with a four-barrel carburetor producing 260 horsepower in 1971. Beyond the original two colors of Saturn Yellow and Apollo White, an additional nine colors became available.

1972 marked the final year for this fading muscle car, now available in 12 colors, even though total production amounted to just 44 cars. Power was also down, thanks to the use of “net” horsepower numbers, which lowered the output of the Stage 1 455 engine to 270 horsepower, the standard 455 engine to 250 horsepower, and the 350 engine to just 195 horsepower.

The Buick GSX is a distinctive muscle car that lived during both the best and the worst times of the muscle car era. Its rarity makes it one of the classic American muscle cars worth every penny.

Tech

AI joins the 8-hour work day as GLM ships 5.1 open source LLM, beating Opus 4.6 and GPT 5.4 on SWE-Bench Pro

Is China picking back up the open source AI baton?

Z.ai, also known as Zhupai AI, a Chinese AI startup best known for its powerful, open source GLM family of models, has unveiled GLM-5.1 today under a permissive MIT License, allowing enterprises to download, customize and use it for commercial purposes. The model is available on Hugging Face.

This follows its release last month of GLM-5 Turbo, a faster version offered only under a proprietary license.

The new GLM-5.1 is designed to work autonomously for up to eight hours on a single task, marking a definitive shift from vibe coding to agentic engineering.

The release represents a pivotal moment in the evolution of artificial intelligence. While competitors have focused on increasing reasoning tokens for better logic, Z.ai is optimizing for productive horizons.

GLM-5.1 is a 754-billion parameter Mixture-of-Experts model engineered to maintain goal alignment over extended execution traces that span thousands of tool calls.

“agents could do about 20 steps by the end of last year,” wrote z.ai leader Lou on X. “glm-5.1 can do 1,700 rn. autonomous work time may be the most important curve after scaling laws. glm-5.1 will be the first point on that curve that the open-source community can verify with their own hands. hope y’all like it^^”

In a market increasingly crowded with fast models, Z.ai is betting on the marathon runner. The company, which listed on the Hong Kong Stock Exchange in early 2026 with a market capitalization of $52.83 billion, is using this release to cement its position as the leading independent developer of large language models in the region.

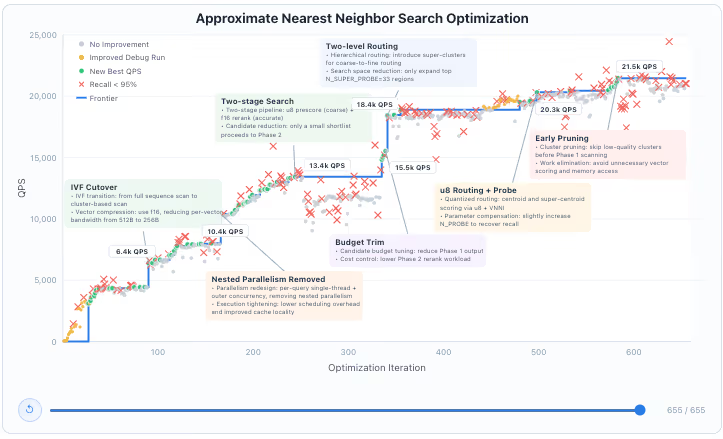

Technology: the staircase pattern of optimization

GLM-5.1’s core technological breakthrough isn’t just its scale, though its 754 billion parameters and 202,752-token context window are formidable, but its ability to avoid the plateau effect seen in previous models.

In traditional agentic workflows, a model typically applies a few familiar techniques for quick initial gains and then stalls. Giving it more time or more tool calls usually results in diminishing returns or strategy drift.

Z.ai research demonstrates that GLM-5.1 operates via what they call a staircase pattern, characterized by periods of incremental tuning within a fixed strategy punctuated by structural changes that shift the performance frontier.

In Scenario 1 of their technical report, the model was tasked with optimizing a high-performance vector database, a challenge known as VectorDBBench.

The model is provided with a Rust skeleton and empty implementation stubs, then uses tool-call-based agents to edit code, compile, test, and profile. While previous state-of-the-art results from models like Claude Opus 4.6 reached a performance ceiling of 3,547 queries per second, GLM-5.1 ran through 655 iterations and over 6,000 tool calls. The optimization trajectory was not linear but punctuated by structural breakthroughs.

At iteration 90, the model shifted from full-corpus scanning to IVF cluster probing with f16 vector compression, which reduced per-vector bandwidth from 512 bytes to 256 bytes and jumped performance to 6,400 queries per second.
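The bandwidth figure follows from the storage width: halving each component from 32 bits to 16 bits halves the bytes read per vector. The sketch below illustrates that arithmetic with Python’s standard `struct` module. The 128-dimension vector size is an assumption chosen so the numbers line up with the 512-byte and 256-byte figures (the article does not state the dimensionality), and the helper names are hypothetical rather than anything from Z.ai’s code.

```python
import math
import struct

DIM = 128  # assumed dimensionality: 128 float32 values occupy 512 bytes


def pack_f32(vec):
    """Serialize a vector as little-endian 32-bit floats (4 bytes each)."""
    return struct.pack(f"<{len(vec)}f", *vec)


def pack_f16(vec):
    """Serialize a vector as little-endian IEEE 754 half floats (2 bytes each)."""
    return struct.pack(f"<{len(vec)}e", *vec)


def unpack_f16(buf):
    """Decode a half-precision buffer back into Python floats."""
    return list(struct.unpack(f"<{len(buf) // 2}e", buf))


# Illustrative data: components in [-1, 1], well inside the f16 range.
vec = [math.sin(i) for i in range(DIM)]
full, half = pack_f32(vec), pack_f16(vec)

# Worst-case rounding error introduced by storing the vector in f16.
max_err = max(abs(a - b) for a, b in zip(vec, unpack_f16(half)))
print(len(full), len(half), max_err)
```

The trade is precision for bandwidth: each component keeps roughly three significant decimal digits, which is often acceptable for ranking candidates but is why a recall check still matters.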

By iteration 240, it autonomously introduced a two-stage pipeline involving u8 prescoring and f16 reranking, reaching 13,400 queries per second. Ultimately, the model identified and cleared six structural bottlenecks, including hierarchical routing via super-clusters and quantized routing using centroid scoring via VNNI. These efforts culminated in a final result of 21,500 queries per second, roughly six times the best result achieved in a single 50-turn session.
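A two-stage pipeline of this kind can be sketched in a few lines: score every vector cheaply on coarse 8-bit codes, then spend higher-precision arithmetic only on a short list of survivors. The code below is a minimal illustration under stated assumptions, not the model’s Rust implementation: it uses squared Euclidean distance, a simple affine quantizer over [-1, 1], and hypothetical function names, and it emulates f16 storage by rounding through `struct`.

```python
import heapq
import random
import struct


def to_u8(vec):
    """Quantize components in [-1, 1] to integers in 0..255 (1 byte each)."""
    return [min(255, max(0, round((x + 1.0) * 127.5))) for x in vec]


def to_f16(vec):
    """Round components to half precision, emulating f16 storage (2 bytes each)."""
    fmt = f"<{len(vec)}e"
    return list(struct.unpack(fmt, struct.pack(fmt, *vec)))


def sq_dist(a, b):
    """Squared Euclidean distance; smaller means more similar."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def two_stage_search(query, corpus_u8, corpus_f16, k=5, shortlist=50):
    """Prescore every vector on u8 codes, then rerank only the shortlist in f16."""
    q8, q16 = to_u8(query), to_f16(query)
    # Stage 1: integer-only distances over the whole corpus.
    coarse = heapq.nsmallest(shortlist, range(len(corpus_u8)),
                             key=lambda i: sq_dist(q8, corpus_u8[i]))
    # Stage 2: higher-precision distances over the survivors only.
    return heapq.nsmallest(k, coarse, key=lambda i: sq_dist(q16, corpus_f16[i]))


# Illustrative random corpus (not benchmark data).
rng = random.Random(0)
corpus = [[rng.uniform(-1, 1) for _ in range(16)] for _ in range(500)]
corpus_u8 = [to_u8(v) for v in corpus]
corpus_f16 = [to_f16(v) for v in corpus]

query = corpus[123]  # a stored vector, so its own index should rank first
top = two_stage_search(query, corpus_u8, corpus_f16)
print(top)
```

The `shortlist` size is the tuning knob: a larger value costs more reranking work but lowers the chance that a true neighbor is discarded by the coarse pass.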

This demonstrates a model that functions as its own research and development department, breaking complex problems down and running experiments with real precision.

The model also managed complex execution tightening, lowering scheduling overhead and improving cache locality. During the optimization of the Approximate Nearest Neighbor search, the model proactively removed nested parallelism in favor of a redesign using per-query single-threading and outer concurrency.

When the model encountered iterations where recall fell below the 95 percent threshold, it diagnosed the failure, adjusted its parameters, and implemented parameter compensation to recover the necessary accuracy. This level of autonomous correction is what separates GLM-5.1 from models that simply generate code without testing it in a live environment.
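A recall gate of this sort is straightforward to express. The sketch below assumes recall is measured as the share of exact nearest neighbors that the approximate search also returns, averaged over queries; the article does not spell out how the benchmark computes it, and the function names are hypothetical.

```python
RECALL_FLOOR = 0.95  # threshold quoted in the article


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true nearest neighbors that the approximate search returned."""
    exact = set(exact_ids)
    return len(exact & set(approx_ids)) / len(exact)


def mean_recall(approx_results, exact_results):
    """Average recall over a batch of queries."""
    scores = [recall_at_k(a, e) for a, e in zip(approx_results, exact_results)]
    return sum(scores) / len(scores)


def passes_recall_gate(approx_results, exact_results, floor=RECALL_FLOOR):
    """True when a candidate optimization keeps mean recall at or above the floor."""
    return mean_recall(approx_results, exact_results) >= floor


# Illustrative ids for two queries (not benchmark data).
exact = [[1, 2, 3, 4], [5, 6, 7, 8]]
good = [[1, 2, 3, 4], [8, 7, 6, 5]]   # same neighbors, order does not matter
bad = [[1, 2, 3, 9], [5, 6, 7, 8]]    # one true neighbor missed

print(passes_recall_gate(good, exact), passes_recall_gate(bad, exact))
```

A speedup that fails the gate would be rejected or retuned, for example by probing more clusters, before the next iteration.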

KernelBench: pushing the machine learning frontier

The model’s endurance was further tested in KernelBench Level 3, which requires end-to-end optimization of complete machine learning architectures like MobileNet, VGG, MiniGPT, and Mamba.

In this setting, the goal is to produce a faster GPU kernel than the reference PyTorch implementation while maintaining identical outputs. Each of the 50 problems runs in an isolated Docker container with one H100 GPU and is limited to 1,200 tool-use turns. Correctness and performance are evaluated against a PyTorch eager baseline in separate CUDA contexts.

The results highlight a significant performance gap between GLM-5.1 and its predecessors. While the original GLM-5 improved quickly but leveled off early at a 2.6x speedup, GLM-5.1 sustained its optimization efforts far longer. It eventually delivered a 3.6x geometric mean speedup across 50 problems, continuing to make useful progress well past 1,000 tool-use turns.
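A geometric mean is the usual way to summarize speedups across problems, because it averages ratios multiplicatively and is less dominated by a single outlier than an arithmetic mean. The short sketch below shows the calculation; the timings are hypothetical and are not taken from the technical report.

```python
import math


def geometric_mean_speedup(baseline_ms, optimized_ms):
    """Geometric mean of per-problem speedups (baseline time / optimized time)."""
    ratios = [b / o for b, o in zip(baseline_ms, optimized_ms)]
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical timings in milliseconds for three problems.
baseline = [8.0, 9.0, 3.0]
optimized = [2.0, 3.0, 3.0]  # per-problem speedups of 4x, 3x and 1x

gm = geometric_mean_speedup(baseline, optimized)
am = sum(b / o for b, o in zip(baseline, optimized)) / len(baseline)
print(round(gm, 3), round(am, 3))
```

For any set of speedups that are not all equal, the geometric mean comes out below the arithmetic mean, so it is the more conservative headline number.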

Although Claude Opus 4.6 remains the leader in this specific benchmark at 4.2x, GLM-5.1 has meaningfully extended the productive horizon for open-source models.

This capability is not simply about having a longer context window; it requires the model to maintain goal alignment over extended execution, reducing strategy drift, error accumulation, and ineffective trial and error. One of the key breakthroughs is the ability to form an autonomous experiment-analyze-optimize loop, in which the model proactively runs benchmarks, identifies bottlenecks, adjusts strategies, and continuously improves results through iterative refinement.

All solutions generated during this process were independently audited for benchmark exploitation, ensuring the optimizations did not rely on specific benchmark behaviors but worked with arbitrary new inputs while keeping computation on the default CUDA stream.

Product strategy: subscription and subsidies

GLM-5.1 is positioned as an engineering-grade tool rather than a consumer chatbot. To support this, Z.ai has integrated it into a comprehensive Coding Plan ecosystem designed to compete directly with high-end developer tools.

The product offering is divided into three subscription tiers, all of which include free Model Context Protocol tools for vision analysis, web search, web reader, and document reading.

The Lite tier at $27 USD per quarter is positioned for lightweight workloads and offers three times the usage of a comparable Claude Pro plan. The Pro tier at $81 per quarter is designed for complex workloads, offering five times the Lite plan usage and 40 to 60 percent faster execution.

The Max tier at $216 per quarter is aimed at advanced developers with high-volume needs, ensuring guaranteed performance during peak hours.

For those using the API directly or through platforms like OpenRouter or Requesty, Z.ai has priced GLM-5.1 at $1.40 per million input tokens and $4.40 per million output tokens. Cached input tokens are billed at a discounted $0.26 per million.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Total Cost |
| --- | --- | --- | --- |
| Grok 4.1 Fast | $0.20 | $0.50 | $0.70 |
| MiniMax M2.7 | $0.30 | $1.20 | $1.50 |
| Gemini 3 Flash | $0.50 | $3.00 | $3.50 |
| Kimi-K2.5 | $0.60 | $3.00 | $3.60 |
| MiMo-V2-Pro (≤256K) | $1.00 | $3.00 | $4.00 |
| GLM-5 | $1.00 | $3.20 | $4.20 |
| GLM-5-Turbo | $1.20 | $4.00 | $5.20 |
| GLM-5.1 | $1.40 | $4.40 | $5.80 |
| Claude Haiku 4.5 | $1.00 | $5.00 | $6.00 |
| Qwen3-Max | $1.20 | $6.00 | $7.20 |
| Gemini 3 Pro | $2.00 | $12.00 | $14.00 |
| GPT-5.2 | $1.75 | $14.00 | $15.75 |
| GPT-5.4 | $2.50 | $15.00 | $17.50 |
| Claude Sonnet 4.5 | $3.00 | $15.00 | $18.00 |
| Claude Opus 4.6 | $5.00 | $25.00 | $30.00 |
| GPT-5.4 Pro | $30.00 | $180.00 | $210.00 |
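To turn the per-million GLM-5.1 rates into a figure for a given workload, a small estimator like the one below is enough. It is a sketch under assumptions: the prices are the ones quoted in this article, cached input tokens are assumed to be billed at the discounted rate instead of the standard input rate, and the function name is hypothetical. Actual invoices depend on the provider’s own accounting.

```python
# USD per one million tokens, as quoted in the article for GLM-5.1.
PRICE_PER_MILLION = {"input": 1.40, "cached_input": 0.26, "output": 4.40}


def estimate_cost(input_tokens, output_tokens, cached_input_tokens=0):
    """Estimated USD cost; cached_input_tokens is the cached portion of input_tokens."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_input_tokens
    total = (fresh * PRICE_PER_MILLION["input"]
             + cached_input_tokens * PRICE_PER_MILLION["cached_input"]
             + output_tokens * PRICE_PER_MILLION["output"])
    return total / 1_000_000


# Hypothetical agent run: 40M input tokens (30M of them cached), 2M output tokens.
print(round(estimate_cost(40_000_000, 2_000_000, cached_input_tokens=30_000_000), 2))
```

Long agentic runs resend much of the same context on every tool call, so the cached share tends to dominate the input side of the bill.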

Notably, the model consumes quota at three times the standard rate during peak hours, which are defined as 14:00 to 18:00 Beijing Time daily, though a limited-time promotion through April 2026 allows off-peak usage to be billed at a standard 1x rate. Complementing the flagship is the recently debuted GLM-5 Turbo.

While 5.1 is the marathon runner, Turbo is the sprinter, proprietary and optimized for fast inference and tasks like tool use and persistent automation.

At $1.20 per million input tokens and $4 per million output tokens, it is more expensive than the base GLM-5 but cheaper than the new GLM-5.1, positioning it as a commercially attractive option for high-speed, supervised agent runs.
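For teams budgeting Coding Plan quota around GLM-5.1’s peak-hour rule described earlier, the window is easy to check in code. The sketch below assumes the window is half-open (14:00 inclusive, 18:00 exclusive) and that off-peak usage counts at 1x, as under the promotion; the constant and function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

BEIJING = timezone(timedelta(hours=8))   # China Standard Time, no daylight saving
PEAK_START_HOUR, PEAK_END_HOUR = 14, 18  # 14:00 to 18:00 Beijing Time
PEAK_MULTIPLIER, OFF_PEAK_MULTIPLIER = 3, 1


def quota_multiplier(moment):
    """Return the quota multiplier that applies at a timezone-aware datetime."""
    if moment.tzinfo is None:
        raise ValueError("moment must be timezone-aware")
    hour = moment.astimezone(BEIJING).hour
    if PEAK_START_HOUR <= hour < PEAK_END_HOUR:
        return PEAK_MULTIPLIER
    return OFF_PEAK_MULTIPLIER


# 06:30 UTC is 14:30 in Beijing, which falls inside the peak window.
print(quota_multiplier(datetime(2026, 4, 8, 6, 30, tzinfo=timezone.utc)))
```

A scheduler could use a check like this to defer long unattended runs until the window closes.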

The model is also packaged for local deployment, supporting inference frameworks including vLLM, SGLang, and xLLM. Comprehensive deployment instructions are available at the official GitHub repository, allowing developers to run the 754 billion parameter MoE model on their own infrastructure.

For enterprise teams, the model includes advanced reasoning capabilities that can be accessed via a thinking parameter in API requests, allowing the model to show its step-by-step internal reasoning process before providing a final answer.

Benchmarks: a new global standard

The performance data for GLM-5.1 suggests it has leapfrogged several established Western models in coding and engineering tasks.

On SWE-Bench Pro, which evaluates a model’s ability to resolve real-world GitHub issues using an instruction prompt and a 200,000 token context window, GLM-5.1 achieved a score of 58.4. For context, this outperforms GPT-5.4 at 57.7, Claude Opus 4.6 at 57.3, and Gemini 3.1 Pro at 54.2.

Beyond standardized coding tests, the model showed significant gains in reasoning and agentic benchmarks. It scored 63.5 on Terminal-Bench 2.0 when evaluated with the Terminus-2 framework and reached 66.5 when paired with the Claude Code harness.

On CyberGym, it achieved a 68.7 score based on a single-run pass over 1,507 tasks, demonstrating a nearly 20-point lead over the previous GLM-5 model. The model also performed strongly on the MCP-Atlas public set with a score of 71.8 and achieved a 70.6 on the T3-Bench.

In the reasoning domain, it scored 31.0 on Humanity’s Last Exam, which jumped to 52.3 when the model was allowed to use external tools. On the AIME 2026 math competition benchmark, it reached 95.3, while scoring 86.2 on GPQA-Diamond for expert-level science reasoning.

The most impressive anecdotal benchmark was the Scenario 3 test: building a Linux-style desktop environment from scratch in eight hours.

Unlike previous models that might produce a basic taskbar and a placeholder window before declaring the task complete, GLM-5.1 autonomously filled out a file browser, terminal, text editor, system monitor, and even functional games.

It iteratively polished the styling and interaction logic until it had delivered a visually consistent, functional web application. This serves as a concrete example of what becomes possible when a model is given the time and the capability to keep refining its own work.

Licensing and the open segue

The licensing of these two models tells a larger story about the current state of the global AI market. GLM-5.1 has been released under the MIT License, with its model weights made publicly available on Hugging Face and ModelScope.

This follows Z.ai’s historical strategy of using open-source releases to build developer goodwill and ecosystem reach. However, GLM-5 Turbo remains proprietary and closed-source. This reflects a growing trend among leading AI labs toward a hybrid model: using open-source models for broad distribution while keeping execution-optimized variants behind a paywall.

Industry analysts note that this shift arrives amidst a rebalancing in the Chinese market, where heavyweights like Alibaba are also beginning to segment their proprietary work from their open releases.

Z.ai CEO Zhang Peng appears to be navigating this by ensuring that while the flagship’s core intelligence is open to the community, the high-speed execution infrastructure remains a revenue-driving asset.

The company is not explicitly promising to open-source GLM-5 Turbo itself, but says the findings will be folded into future open releases. This segmented strategy helps drive adoption while allowing the company to build a sustainable business model around its most commercially relevant work.

Community and user reactions: crushing a week’s work

The developer community response to the GLM-5.1 release has been overwhelmingly focused on the model’s reliability in production-grade environments.

User reviews suggest a high degree of trust in the model’s autonomy.

One developer noted that GLM-5.1 shocked them with how good it is, stating it seems to do what they want more reliably than other models with less reworking of prompts needed. Another developer mentioned that the model’s overall workflow from planning to project execution performs excellently, allowing them to confidently entrust it with complex tasks.

Specific case studies from users highlight significant efficiency gains.

A user from Crypto Economy News reported that a task involving preprocessing code, feature selection logic, and hyperparameter tuning solutions, which originally would have taken a week, was completed in just two days. Since getting the GLM Coding plan, other developers have noted being able to operate more freely and focus on core development without worrying about resource shortages hindering progress.

On social media, the launch announcement generated over 46,000 views in its first hour, with users captivated by the eight-hour autonomous claim. The sentiment among early adopters is that Z.ai has successfully moved past the hallucination-heavy era of AI into a period where models can be trusted to optimize themselves through repeated iteration.

The ability to build four applications rapidly through correct prompting and structured planning has been cited by multiple users as a game-changing development for individual developers.

The implications of long-horizon work

The release of GLM-5.1 suggests that the next frontier of AI competition will not be measured in tokens per second, but in autonomous duration.

If a model can work for eight hours without human intervention, it fundamentally changes the software development lifecycle.

However, Z.ai acknowledges that this is only the beginning. Significant challenges remain, such as developing reliable self-evaluation for tasks where no numeric metric exists to optimize against.

Escaping local optima earlier when incremental tuning stops paying off is another major hurdle, as is maintaining coherence over execution traces that span thousands of tool calls.

For now, Z.ai has placed a marker in the sand. With GLM-5.1, they have delivered a model that doesn’t just answer questions, but finishes projects. The model is already compatible with a wide range of developer tools including Claude Code, OpenCode, Kilo Code, Roo Code, Cline, and Droid.

For developers and enterprises, the question is no longer, “what can I ask this AI?” but “what can I assign to it for the next eight hours?”

The focus of the industry is clearly shifting toward systems that can reliably execute multi-step work with less supervision. This transition to agentic engineering marks a new phase in the deployment of artificial intelligence within the global economy.

Tech

‘I wouldn’t bet against Elon’: Cisco CEO Chuck Robbins ‘absolutely’ wants to put data centers in space

- Cisco CEO Chuck Robbins says he’s already exploring how to send data centers to space

- OpenAI’s Sam Altman sees it as a “pipe dream,” Elon Musk is optimistic

- Space-bound data centers would tackle a lot of the current issues

Cisco CEO Chuck Robbins has revealed his company execs are already discussing plans to put data centers in space.

Robbins clearly backs the idea, noting that space could remove some of Earth’s key constraints like power, cooling and land availability. Abundant solar energy and fewer community objections are among the highlights (though a different type of objection would likely occur).

And Robbins isn’t the only person with influence over data centers who believes this: “Sam Altman is one who says, ‘I don’t think they should be in their backyards’,” he told Nilay Patel of The Verge.

Cisco is actively exploring putting data centers in space

Although Altman may be sceptical of locating data centers in space, SpaceX’s Elon Musk is a major supporter. When asked whether he would believe Altman, who claims space-bound data centers are a “pipe dream,” or Elon Musk, Robbins stated: “I wouldn’t bet against Elon.”

Data center campuses are generally seen as noisy, energy-intensive operations that are especially unpopular locally. Hyperscalers face increasing public opposition and concerns over environmental impacts, yet soaring usage keeps demanding new buildouts.

However, Cisco is still figuring out some of the technical challenges relating to temperature, atmospheric conditions and launch logistics.

There’s also a growing demand for data sovereignty, and it’s unclear at best how space-located data centers would play into this with infrastructure design shifting from global systems to localized deployments.

As for the next steps, there are clearly a lot of them. “We’re in the early stages of just making sure the atmospheric issues, the temperatures, all of those things are taken into consideration,” the CEO stated, noting “we don’t even know everything we need to do yet.”

“Absolutely,” Robbins concluded when asked whether we should put data centers in space.

Tech

Our Favorite Video Doorbell Is $40 Off

Tired of solicitors knocking on your front door trying to sell you junk while you’re relaxing? You can grab the Google Nest Doorbell, our favorite video doorbell, from Amazon for just $140, a $40 discount from its usual price, and turn them away without getting off the couch. This attractive and elegant video doorbell has a variety of smart features, full Google integration, and hooks up to a powered source so you never have to charge the battery. I have the wireless version at home and found it extremely useful for spotting packages, talking to neighbors, or just snooping on my house while I’m away.

The video quality is excellent, with a huge 166-degree field of view that easily captures both your front yard and any packages that might be sitting on the ground close to the door. If you have other Nest displays, like the Nest Hub, they’ll show video alerts, and you can even turn on automatic picture-in-picture on your Google TV. When people speak to the doorbell the quality is nice and crisp, and you can even talk to delivery drivers or friends who stop by when you aren’t home.

You don’t need a subscription to use the basic video capture and doorbell features on the Nest Doorbell, but there is an upgraded plan available that adds in a longer video history, as well as more advanced detection features. While it hasn’t been the most consistent for me, it attempts to differentiate between familiar and unfamiliar faces, so it doesn’t bother pinging my phone when it sees me getting home. Depending on which plan you choose, you can get up to 60 days of video history, so I’ve been able to look back weeks into the past to look for packages, or spot if something happened to my neighbor’s car.

For the $40 discount on the wired Google Nest Doorbell, head over to Amazon to grab one in Snow, Hazel, or Linen. If you aren’t sure about the Nest Doorbell, or you aren’t invested in the Google Home ecosystem, we have a full roundup of the best video doorbells from brands like Google, Arlo, and Eufy.

Tech

Joby and Air Space Intelligence team up to manage US electric air taxi skies

In short: Joby Aviation and Air Space Intelligence have announced a partnership to integrate AI-driven airspace management into U.S. electric air taxi operations, using ASI’s Flyways AI platform to model high-density eVTOL traffic before commercial flights begin later this year.

The electric air taxi race has long centred on the aircraft itself: wing count, battery range, noise footprint. Now, with Joby Aviation weeks away from completing FAA type certification and the White House’s eVTOL Integration Pilot Programme clearing the way for early commercial operations across 10 U.S. states, the harder question is finally being asked out loud. The skies may be ready for one or two electric air taxis. They are almost certainly not ready for hundreds of them, all manoeuvring simultaneously through the same congested corridors above Manhattan, Miami, and Dallas. Joby and Air Space Intelligence (ASI) announced on 7 April 2026 that they intend to fix that, before it becomes a problem.

The partnership tasks the two companies with accelerating the integration of advanced air mobility into the U.S. National Airspace System (NAS), using ASI’s AI-powered Flyways platform as the core coordination layer. Joint demonstrations, including live operational exercises, are expected before the end of 2026, a timeline that aligns directly with Joby’s own commercial launch ambitions.

A new operating system for the sky

ASI, founded in Boston in 2018 and backed by a $34 million Series B led by Andreessen Horowitz in December 2023, has spent years solving a version of this problem for conventional aviation. Its flagship PRESCIENCE platform provides a four-dimensional digital twin of the operating environment, ingesting live traffic data, weather feeds, and demand forecasts to simulate airspace conditions hours in advance. Flyways AI, ASI’s commercial product layer built on PRESCIENCE, translates those simulations into decision-ready recommendations for air traffic controllers, allowing them to proactively reroute flows before congestion sets in rather than reacting after the fact.

Alaska Airlines and the U.S. Department of Defense are among ASI’s confirmed customers. The company’s existing work with legacy aviation gives it a dataset and a regulatory credibility that most newer entrants in the advanced air mobility space cannot easily replicate. Applying that platform to eVTOL is, in ASI’s framing, a natural extension. “Scaling advanced air mobility requires more than new aircraft,” said Bernard Asare, President of Civil Aviation at Air Space Intelligence. “It requires a new operating system for the airspace. Our Flyways AI platform gives operators and controllers the predictive awareness to coordinate high-density operations proactively, not reactively. This partnership brings that same capability to eVTOL operations from day one.”

What Joby brings to the table

Joby’s contribution is operational experience and institutional relationships that no software company can substitute. The Santa Cruz-based manufacturer has conducted more than 1,000 test flights of its S4 aircraft, completed Stage 4 of the FAA’s five-stage type certification process, and, in March 2026, was selected to participate in five projects under the White House-backed eVTOL Integration Pilot Programme, giving it the legal pathway to begin passenger operations in states including New York, Florida, Texas, North Carolina, and Utah before full certification is granted.

Joby has also built a commercial ecosystem that few of its rivals can match: a partnership with Delta Air Lines that includes vertiport infrastructure at JFK and LAX, a $250 million strategic investment from Toyota, a 25-site vertiport deal with Metropolis, and an active Dubai operation that represents the company’s first revenue-generating international market. Its SuperPilot autonomy stack, developed with Nvidia’s IGX Thor platform, is designed to progressively reduce cockpit dependency as regulatory confidence grows, part of a broader AI infrastructure build-out that mirrors a year of rapid enterprise AI expansion across sectors.

“America has long set the global standard for aviation, and modernising our airspace is key to maintaining that leadership,” said Greg Bowles, Chief Policy Officer at Joby Aviation. “By combining Joby’s operational capabilities with ASI’s advanced AI-driven Flyways platform, we’re helping build the intelligent infrastructure needed to integrate electric air taxis seamlessly into the NAS.”

The BNATCS window

The timing is not accidental. The FAA’s Brand New Air Traffic Control System (BNATCS) is now under active development, a $32.5 billion overhaul of the U.S.’s ageing telecommunications, radar, and automation infrastructure. Congress has committed $12.5 billion, with a further $20 billion still required. Peraton has been named as system integrator. The programme will introduce 5,170 new high-speed network connections across fibre, satellite, and wireless, and is expected to include automated decision-support tools specifically designed for the influx of new traffic categories, including drones and eVTOLs, that current systems were never built to handle.

The Joby-ASI partnership positions both companies to influence how those tools are designed. By running live operational exercises with Flyways AI ahead of the BNATCS rollout, the two companies will be able to generate real-world data on how AI-mediated coordination performs alongside human controllers. That data is precisely what the FAA needs to define the standards that will govern every eVTOL operator in the country. Joby and ASI are, in other words, not merely preparing their own operations; they are helping to write the rulebook. This kind of infrastructure investment at scale echoes broader AI infrastructure deals reshaping technology’s physical footprint, with companies moving quickly to own the foundational layers before standards harden.

The governance gap eVTOL must cross

The challenge ASI is addressing sits at the intersection of aviation safety and AI governance, an area that regulators globally are still working to define. Autonomous or AI-assisted systems operating in safety-critical environments require a level of explainability and auditability that most machine learning architectures were not originally designed to provide. PRESCIENCE’s 4D simulation approach, which generates human-interpretable lookahead scenarios rather than black-box outputs, is partly a product of this regulatory reality. Making AI legible to air traffic controllers is not a nice-to-have; it is a certification prerequisite. The broader question of governed AI in high-stakes environments is one the entire industry is grappling with, and the Joby-ASI model may offer a template.

What sets this partnership apart from earlier eVTOL airspace initiatives, which tended to focus on unmanned traffic management (UTM) for drones rather than manned commercial aircraft, is the integration of existing air traffic control workflows. Flyways AI is not a parallel system that operates alongside the NAS; it is designed to slot into the controller’s existing interface, augmenting rather than replacing human judgement. That design philosophy may prove decisive as the FAA works to define what AI assistance in the cockpit and in the tower is, and is not, permitted to do.

What comes next

Both companies have indicated that live operational exercises will begin in 2026, though neither has specified which markets or corridors will be used for the initial demonstrations. Given Joby’s eIPP designations, New York and Florida are the likeliest candidates. The exercises are expected to produce data that can be submitted to the FAA as part of the ongoing NAS integration process, contributing to the regulatory record that will define how all future eVTOL operators handle airspace coordination at scale.

The partnership carries no disclosed financial terms. It is framed as a technical and operational collaboration, with both companies sharing data and co-developing protocols rather than exchanging capital. Whether that structure changes as the relationship matures will depend in part on how quickly Joby’s commercial operations scale, and how central Flyways AI becomes to running them. The question that defined much of last year’s AI conversation, whether AI tools can move from demonstration to durable operational infrastructure, is about to be tested in one of the most demanding environments imaginable: the U.S. National Airspace System, at altitude, with passengers on board.

The aircraft are almost ready. The question now is whether the sky itself can keep up.

Tech

KEF Muo (2nd Gen) Review

Verdict

Taken on its own terms there’s a whole lot to like about the KEF Muo and not a great deal to take issue with. But nothing happens in isolation – and the little shortcomings this speaker demonstrates mean it’s under threat from some slightly more well-rounded alternatives…

- Insightful, rhythmically positive sound of impressive scale

- Impressive all-round specification

- Extremely well-made and -finished

- Midrange reproduction is relatively blunt and approaching strident

- Plenty of very capable alternatives

- Rather brief control app

Key Features

- Power: 40 watts of Class D

- Connections: aptX Adaptive and USB-C

- Water resistance: IP67 rating

Introduction

It’s been a full 10 years since KEF launched its original Muo Bluetooth speaker, a wireless speaker that, back then, promised high-end performance at a premium price.

Since 2016 the company has enjoyed an enviable strike-rate where its new products are concerned – so does the 2nd Gen Muo chalk up another hit?

Design

Its dimensions, relatively light weight and very promising IP rating would tend to indicate the KEF Muo is a go-anywhere, do-anything kind of Bluetooth speaker. And it’s true, it’s built to survive in any realistic environment and to be no kind of hindrance when it comes to getting there or coming back again.

But bear in mind the majority of the Muo is built from smooth, tactile and exquisitely finished aluminium. The sort of material, in fact, that it’s not especially difficult to mark or scratch or even dent. So if you do intend to take your speaker with you into the Great Outdoors, be aware that there are devices that lend themselves much more readily to being slung into a backpack and bounced around in there than this one.

And you’ll want to keep it pristine, because in any of the available finishes the Muo (to my eyes, at least) looks the business. I wouldn’t necessarily choose the Midnight Black of my review sample, but I’d happily take any of the Silver Dusk, Moss Green, Blue Aura, Cocoa Brown or Orange Moon alternatives.

There are some physical controls integrated into the rubber end-cap at the top of the speaker – they cover power on/off and volume up/down, and there’s a multifunction button that takes care of skip forwards/backwards, play/pause and answer/end/reject call (the mic that turns this into a speakerphone features noise- and echo-cancellation technology). There’s also a button to initiate Bluetooth pairing at the rear of the speaker – it’s just next to the USB-C slot.

Features

- Bluetooth 5.4 with aptX Adaptive

- 40 watts of Class D power

- Auracast-enabled

There are a couple of ways of getting audio information on board the Muo. The USB-C slot at the rear of the cabinet can be used for data transfer as well as charging the battery, and wireless connectivity is dealt with by Bluetooth 5.4 that’s compatible with the SBC, AAC and aptX Adaptive codecs. USB-C can deal with resolutions of up to 16-bit/48kHz, while aptX Adaptive can handle 24-bit/48kHz.
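For a sense of scale, the uncompressed PCM data rates behind those two resolutions can be worked out directly. The sketch below assumes two-channel (stereo) audio, which the spec sheet doesn't state, and `pcmKbps` is just an illustrative helper name.

```typescript
// Uncompressed PCM bit rate in kilobits per second.
// `channels` defaults to 2 (stereo), which is an assumption, not a KEF figure.
function pcmKbps(bitDepth: number, sampleRateHz: number, channels: number = 2): number {
  return (bitDepth * sampleRateHz * channels) / 1000;
}

const usbRate = pcmKbps(16, 48000); // 1536
const aptxSourceRate = pcmKbps(24, 48000); // 2304
```

On that assumption, the 16-bit/48kHz stream works out at 1,536kbps and the 24-bit/48kHz one at 2,304kbps before any codec compression is applied.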

And there are further connectivity options. The Muo is Auracast-enabled, so can be part of an extremely expansive system as long as it’s partnered correctly. Two Muo (Muos?) can form a stereo pair. And both Microsoft Swift Pair and Google Fast Pair are available, too.

Once the digital audio information is on board, it’s delivered by a two-driver array powered by a total of 40 Class D watts. A 20mm tweeter takes 10 of those watts and the other 30 go to a 117mm x 58mm racetrack mid/bass driver that features the company’s P-Flex technology – this arrangement, says KEF, results in a frequency response of 43Hz – 20kHz.

There’s an accelerometer built into the Muo which allows it to detect its orientation and adjust its sound output accordingly. In portrait position, the tweeter is above the mid/bass driver; put the speaker into landscape orientation (it is fitted with four small rubber feet for this purpose) and obviously the drivers are now side-by-side.

You can also exert control over the Muo by using the KEF Connect app. In this guise it deals only with input selection and volume control, but it does at least give access to five EQ presets and an indication of battery life too.

Battery life is quoted at 24 hours from a single charge (at moderate volume levels, naturally), and should the worst happen you can go from flat to full in around two hours via the USB-C input. A quick 15-minute burst should be enough to get another three hours of playback (again, provided you’re not going for it where volume levels are concerned).

Sound Quality

- Nicely shaped and varied low-frequency response

- Sizeable and detailed presentation

- Can sound slightly strident, especially through the midrange

For a relatively compact speaker in physical terms, the sound the Muo makes is anything but discreet. No matter if you give it a bog-standard 320kbps MP3 file of Private Life by Grace Jones to deal with or a bigger 24-bit/44.1kHz FLAC file of By Storm’s Dead Weight, the KEF sounds big and spacious, and delivers a presentation that easily escapes the confines of its cabinet.

It extracts and reveals plenty of detail, both broad and fine, at every stage of the frequency range – which goes a long way to convincing you, as the listener, that you’re getting a full account of what’s going on.

Down at the bottom end there’s a lot of information regarding texture made available, and bass sounds are nicely shaped and controlled too – so as well as an impressive amount of variation at the low end, rhythms are expressed with genuine positivity. It’s a similar story at the opposite end, inasmuch as treble sounds have shape and substance to go along with a fair amount of bite – and harmonic variation is apparent at every turn.

As well as the more understated dynamics of harmonic fluctuations, the Muo is also quite adept at dealing with the big dynamic variations that come when a recording ramps up the volume or the intensity. It has no problem tracking changes in attack, and maintains the distance between quiet and loud even if you’re listening quite loud in the first place.

Turning the volume up doesn’t alter the evenness of the frequency response or harm the natural, neutral tonality the speaker demonstrates at either end of the frequency range, either.

In the midrange, though, things aren’t quite so clear-cut. There’s still an admirable amount of detail available, and the transition from the midrange to the stuff going on either side of it is smoothly and naturalistically achieved.

But there’s not a huge amount in common where tonality is concerned – the way the KEF hands over the midrange in general, and voices in particular, isn’t in absolute sympathy with the bass or treble reproduction. There’s a mild abrasiveness to the tonality here, which can result in voices becoming slightly strident or, in extremis, actually rather hard-edged and unyielding.

Should you buy it?

You value the look and the feel of your Bluetooth speaker as much as you value the sound

You’re after the best sound

You’re after an entirely even-handed and uncoloured account of your music

Final Thoughts

KEF has been out of the Bluetooth speaker conversation for quite a while – but the quality of the products it has launched since it last had a Bluetooth speaker in its line-up made me very optimistic about the new Muo’s chances.

I’m in no doubt that it’s one of the more covetable and more desirable designs around – but the question of whether it sounds like £249-worth is not quite so straightforward to answer, especially not if you’ve heard the Bang & Olufsen A1 3rd Gen in action…

How We Test

I listen to the Muo on my desk, in the kitchen, and in the garden (during those few moments when it isn’t raining sideways around here). I connect it wirelessly to an Apple iPhone 14 Pro, and to a FiiO M15S which allows the use of the aptX codec.

I also hard-wire it to an Apple MacBook Pro (running Colibri software) using its USB-C slot.

FAQs

Kind of, sort of – aptX Adaptive can operate at a lossy 24-bit/48kHz and the USB-C slot can deal with 16-bit/48kHz

No, it can only be charged via its USB-C input

Full Specs

| Specification | KEF Muo (2nd Gen) |
|---|---|
| UK RRP | £249 |
| USA RRP | $249 |
| EU RRP | €269 |
| CA RRP | CA$349 |
| AUD RRP | AU$449 |
| Manufacturer | KEF |
| IP rating | IP67 |
| Battery Hours | 24 |
| Fast Charging | Yes |
| Size (Dimensions) | 82 x 59 x 216 mm |
| Weight | 740 g |
| Release Date | 2026 |
| Audio Resolution | SBC, AAC, aptX Adaptive |
| Driver(s) | 20mm tweeter, 58 x 117mm mid/bass |
| Ports | USB-C |
| Connectivity | Bluetooth 5.4 |
| Colours | Midnight Black, Silver Dusk, Moss Green, Blue Aura, Cocoa Brown, Orange Moon |
| Frequency Range | 43 – 20000 Hz |
| Speaker Type | Portable Speaker |

Tech

Max severity Flowise RCE vulnerability now exploited in attacks

Hackers are exploiting a maximum-severity vulnerability, tracked as CVE-2025-59528, to execute arbitrary code on Flowise, an open-source platform for building custom LLM apps and agentic systems.

The flaw allows injecting JavaScript code without any security checks and was publicly disclosed last September, with the warning that successful exploitation leads to command execution and file system access.

The problem lies in the Flowise CustomMCP node, which accepts configuration settings for connecting to an external Model Context Protocol (MCP) server and unsafely evaluates the user-supplied mcpServerConfig input. During this process, it can execute JavaScript without first validating its safety.
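To make this class of bug concrete, here is a minimal TypeScript sketch. It is not Flowise's actual code or its patch: the function names, the `ServerConfig` shape and the sample payload are all hypothetical, chosen only to contrast evaluating a config string as JavaScript with parsing it strictly as data.

```typescript
// Hypothetical shape of an MCP server config; not taken from Flowise's source.
interface ServerConfig {
  command: string;
  args: string[];
}

// UNSAFE pattern (illustration only): the input is evaluated as JavaScript,
// so any expression embedded in it runs with the server's privileges.
function parseConfigUnsafe(input: string): unknown {
  return new Function(`return (${input});`)();
}

// Safer pattern: treat the input strictly as JSON data and validate its shape.
function parseConfigSafe(input: string): ServerConfig {
  let parsed: unknown;
  try {
    parsed = JSON.parse(input);
  } catch {
    throw new Error("config is not valid JSON");
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    throw new Error("config must be a JSON object");
  }
  const { command, args } = parsed as Record<string, unknown>;
  if (typeof command !== "string") {
    throw new Error("command must be a string");
  }
  if (!Array.isArray(args) || !args.every((a) => typeof a === "string")) {
    throw new Error("args must be an array of strings");
  }
  return { command, args: args as string[] };
}

// A harmless stand-in for an attacker payload: it contains an expression, not just data.
const samplePayload = "{ command: 'x', args: [String(1 + 1)] }";
```

Passing `samplePayload` to `parseConfigUnsafe` runs the embedded `String(1 + 1)` expression, whereas `parseConfigSafe` rejects it because it is not valid JSON. A real attacker would substitute something far less benign for the arithmetic.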

The developer addressed the issue in Flowise version 3.0.6. The latest version is 3.1.1, released two weeks ago.

Flowise is an open-source, low-code platform for building AI agents and LLM-based workflows. It provides a drag-and-drop interface that lets users connect components into pipelines powering chatbots, automation, and AI systems.

It is used by a broad range of users, including developers working in AI prototyping, non-technical users working with no-code toolsets, and companies that operate customer support chatbots and knowledge-based assistants.

Caitlin Condon, security researcher at vulnerability intelligence company VulnCheck, announced on LinkedIn that exploitation of CVE-2025-59528 has been detected by their Canary network.

“Early this morning, VulnCheck’s Canary network began detecting first-time exploitation of CVE-2025-59528, a CVSS-10 arbitrary JavaScript code injection vulnerability in Flowise, an open-source AI development platform,” Condon warned.

Although the activity appears limited at this time, originating from a single Starlink IP, the researchers warned that there are between 12,000 and 15,000 Flowise instances exposed online right now.

However, it is unclear what percentage of those are vulnerable Flowise servers.

Condon notes that the activity targeting CVE-2025-59528 comes on top of CVE-2025-8943 and CVE-2025-26319, two other Flowise vulnerabilities that have also been actively exploited in the wild.

Currently, VulnCheck provides exploit samples, network signatures, and YARA rules only to its customers.

Flowise users are advised to upgrade to version 3.1.1, or at least 3.0.6, as soon as possible. They should also consider removing their instances from the public internet if external access is not needed.
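For anyone auditing several deployments, a version comparison along these lines could flag instances that predate the fix. This is a sketch under stated assumptions: `isPatched` and `parseVersion` are hypothetical helpers, version strings are assumed to follow a plain `major.minor.patch` pattern, and pre-release suffixes are simply ignored. The 3.0.6 threshold is the fixed release named above.

```typescript
// First release containing the fix for CVE-2025-59528, per the report above.
const FIXED_VERSION = "3.0.6";

// Parse "major.minor.patch" into numbers; an optional leading "v" and any
// pre-release suffix after "-" are dropped (a simplifying assumption).
function parseVersion(version: string): number[] {
  const core = version.trim().replace(/^v/, "").split("-")[0] ?? "";
  const parts = core.split(".").map((p) => parseInt(p, 10));
  if (parts.length !== 3 || parts.some((n) => isNaN(n))) {
    throw new Error(`unrecognised version: ${version}`);
  }
  return parts;
}

// True when `version` is at or above the fixed release.
function isPatched(version: string, fixed: string = FIXED_VERSION): boolean {
  const current = parseVersion(version);
  const target = parseVersion(fixed);
  for (let i = 0; i < 3; i++) {
    const c = current[i] ?? 0;
    const t = target[i] ?? 0;
    if (c !== t) return c > t; // compare numerically, so 3.0.10 > 3.0.6
  }
  return true; // identical to the fixed release
}
```

Comparing the parts numerically rather than as strings matters here: a plain string comparison would wrongly rank 3.0.10 below 3.0.6.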

-

NewsBeat5 days ago

NewsBeat5 days agoSteven Gerrard disagrees with Gary Neville over ‘shock’ Chelsea and Arsenal claim | Football

-

Business5 days ago

Business5 days agoNo Jackpot Winner and $194 Million Prize Rolls Over

-

Fashion4 days ago

Fashion4 days agoWeekend Open Thread: Spanx – Corporette.com

-

Crypto World6 days ago

Crypto World6 days agoGold Price Prediction: Worst Month in 17 Years fo Save Haven Rock

-

Business2 days ago

Business2 days agoThree Gulf funds agree to back Paramount’s $81 billion takeover of Warner, WSJ reports

-

Business4 days ago

Business4 days agoExpert Picks for Every Need

-

Sports3 days ago

Sports3 days agoIndia men’s 4x400m and mixed 4x100m relay teams register big progress | Other Sports News

-

Business6 days ago

Business6 days agoLogin and Checkout Issues Spark Merchant Frustration

-

Crypto World7 days ago

Crypto World7 days agoBitcoin enters the public bond market as Moody’s gives a first-of-its-kind crypto deal a rating

-

Business2 days ago

Business2 days agoNo Jackpot Winner, Prize to Climb to $231 Million

-

Crypto World7 days ago

Bitcoin stalls below key resistance as technical signals skew bearish

-

Tech5 days ago

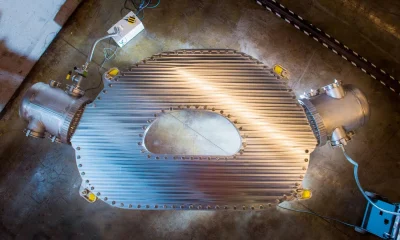

Tech5 days agoCommonwealth Fusion Systems leans on magnets for near-term revenue

-

Politics7 days ago

Politics7 days agoStarmer’s centre has collapsed, and the left was right all along

-

Fashion1 day ago

Fashion1 day agoMassimo Dutti Offers Inspiration for Your Summer Mood Board

-

Crypto World6 days ago

Crypto World6 days agoRipple rolls out enterprise crypto treasury platform for corporates

-

Crypto World6 days ago

Crypto World6 days agoWhy It’s Partnering, Not Issuing

-

Crypto World7 days ago

AI Memory Rout Wipes 9% Off Nvidia Stock: Chart Says More Pain Ahead

-

Business3 days ago

Business3 days agoAkebia Therapeutics, Inc. (AKBA) Discusses Pipeline Progress and Strategic Focus on Kidney Disease Treatments at R&D Day – Slideshow

-

Tech7 days ago

Tech7 days agoSolo Leveling: Ranking All Sung Jinwoo Shadows by Power

-

Tech6 days ago

Tech6 days agoDrawing Tablet Controls Laser In Real-Time

You must be logged in to post a comment Login