Tech

Today’s NYT Mini Crossword Answers for April 22

Looking for the most recent Mini Crossword answer? Click here for today’s Mini Crossword hints, as well as our daily answers and hints for The New York Times Wordle, Strands, Connections and Connections: Sports Edition puzzles.

Today’s Mini Crossword features some Earth Day-specific clues. Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

If you’re looking for today’s Wordle, Connections, Connections: Sports Edition and Strands answers, you can visit CNET’s NYT puzzle hints page.

Let’s get to those Mini Crossword clues and answers.

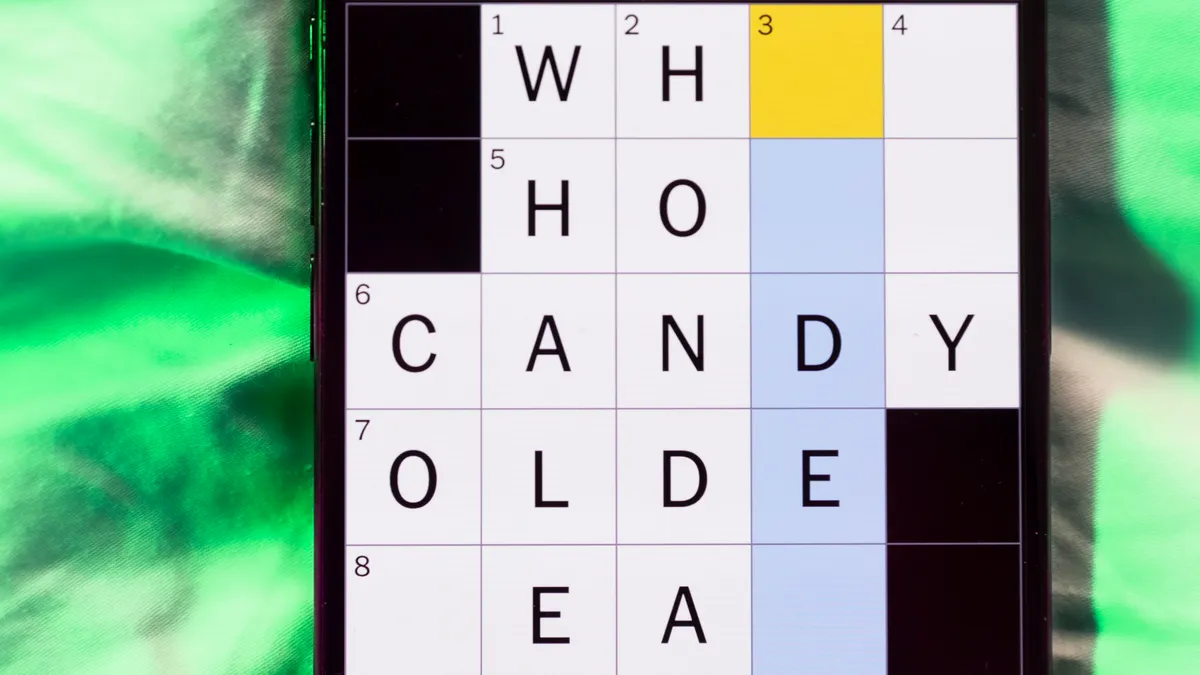

The completed NYT Mini Crossword puzzle for April 22, 2026.

Mini across clues and answers

1A clue: It’s clearly recyclable!

Answer: GLASS

6A clue: ___ Day (April 22nd observance)

Answer: EARTH

7A clue: Thick, underground part of a plant stem

Answer: TUBER

8A clue: Small cluster of trees

Answer: GROVE

9A clue: Rowed, as a boat

Answer: OARED

Mini down clues and answers

1D clue: From the ___ (right at the beginning)

Answer: GETGO

2D clue: Author Ingalls Wilder who wrote “Little House on the Prairie”

Answer: LAURA

3D clue: ___ Day, observance on the last Friday of April

Answer: ARBOR

4D clue: Actor Buscemi

Answer: STEVE

5D clue: Rip into bits, as paper

Answer: SHRED

The $8 Billion Economy Inside Counter-Strike 2

In addition to being a hugely popular first-person shooter, Counter-Strike 2 is home to one of the most developed economies ever built into a game. At its center is the trade in virtual items, which are collectively worth more than $8 billion. That is not a typo: the items in circulation really are valued at around $8 billion, more than the GDP of many countries, even though none of them has any effect on gameplay. So how did cosmetic in-game items come to support a multi-billion-dollar economy, and what keeps it running? Let’s break it down.

How a Digital Skin Gets Its Price Tag

Every skin in CS2 has a set of properties that determine its value, and understanding them is the first step to making sense of this economy.

Rarity tier is the most obvious one. Skins are categorized from Consumer Grade (white, the most common) all the way up to Covert (red, the rarest non-knife items) and Contraband (the ultra-rare category with only one item — the M4A4 Howl). Knives and gloves sit in their own Extraordinary tier, which is part of why they command such premium prices.

Then there’s float value — a number between 0.00 and 1.00 that determines a skin’s visual condition. A float of 0.01 means the skin looks virtually brand new (Factory New), while 0.85 means it’s scratched up and Battle-Scarred. Two AK-47 Redlines might look similar at a glance, but a 0.01 float Factory New will sell for significantly more than a 0.15 Minimal Wear.
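To make the float-to-condition mapping concrete, here is a minimal Python sketch. The band boundaries (0.07, 0.15, 0.38, 0.45) are the commonly cited community values and are an assumption here rather than something stated above, as is treating each band's lower bound as inclusive; the function name `wear_tier` is hypothetical.

```python
from bisect import bisect_right

# Assumed (commonly cited) upper boundaries for each wear band, lowest first.
# Each band's lower bound is treated as inclusive: 0.07 counts as Minimal Wear.
WEAR_BOUNDS = [0.07, 0.15, 0.38, 0.45]
WEAR_NAMES = [
    "Factory New",
    "Minimal Wear",
    "Field-Tested",
    "Well-Worn",
    "Battle-Scarred",
]


def wear_tier(float_value: float) -> str:
    """Return the wear condition name for a float between 0.0 and 1.0."""
    if not 0.0 <= float_value <= 1.0:
        raise ValueError("float value must be between 0.0 and 1.0")
    # bisect_right counts the boundaries that are <= float_value,
    # which is exactly the index of the matching wear band.
    return WEAR_NAMES[bisect_right(WEAR_BOUNDS, float_value)]


print(wear_tier(0.01))  # Factory New
print(wear_tier(0.85))  # Battle-Scarred
```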

And finally, there are pattern-based factors. Certain skins like Case Hardened and Fade have pattern indexes that produce unique visual results. A Case Hardened AK-47 with a full blue gem pattern can sell for tens of thousands of dollars, while the same skin with a standard pattern might go for $40. Doppler knives have distinct phases, each with its own pricing tier. Even sticker placements matter — a skin with rare Katowice 2014 stickers in the right positions can multiply the base price several times over.

The Marketplace Ecosystem

Here’s where things get interesting from a tech perspective. Unlike most games where you buy skins from a single in-game store, CS2 has an entire ecosystem of competing marketplaces.

Steam Community Market is Valve’s own platform and the default option for most players. It’s integrated directly into the Steam client, making it convenient, but it comes with a 15% transaction fee and locks your earnings in Steam Wallet — you can’t cash out to real money.

That limitation gave rise to a large ecosystem of third-party marketplaces, including Skinport, DMarket, CSFloat, Buff163, and many others. These sites let users sell skins for real money and get paid through PayPal, bank transfer, or cryptocurrency. Their fee structures vary considerably, from zero up to 10% or more.

This price fragmentation is exactly why analytics and comparison tools have become essential for anyone who takes CS2 trading seriously. Experienced traders routinely check CS2 prices across multiple platforms before making a move, because the price gap between the cheapest listing and the most expensive one for the same skin can easily be 15-30%.
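A minimal Python sketch of that cross-platform comparison. The 15% Steam fee comes from the text above; the other marketplace names, all prices, and the remaining fees are hypothetical placeholders, not real quotes. It compares what a seller would net on each platform and how wide the listing spread is.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    marketplace: str
    price: float       # listing price in USD
    seller_fee: float  # fraction of the sale price kept by the marketplace


def net_proceeds(listing: Listing) -> float:
    """Amount the seller keeps after the marketplace fee."""
    return listing.price * (1 - listing.seller_fee)


def price_spread(listings: list[Listing]) -> float:
    """Gap between the highest and lowest price, as a fraction of the lowest."""
    prices = [item.price for item in listings]
    return (max(prices) - min(prices)) / min(prices)


# Hypothetical quotes for the same skin on three platforms.
listings = [
    Listing("Steam Community Market", 120.00, 0.15),  # proceeds stay in Steam Wallet
    Listing("Marketplace A", 100.00, 0.05),
    Listing("Marketplace B", 95.00, 0.00),
]

cheapest = min(listings, key=lambda item: item.price)
best_sale = max(listings, key=net_proceeds)
print(f"Buy on {cheapest.marketplace}, spread {price_spread(listings):.0%}")
print(f"Highest net proceeds: {best_sale.marketplace} ({net_proceeds(best_sale):.2f})")
```

In this made-up example Steam pays the seller the most, but only as Steam Wallet credit, which is why the cash-out question matters as much as the headline price.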

Market Cap Tracking — Like Crypto, But For Skins

One of the more fascinating developments in the CS2 economy has been the adoption of financial tracking concepts borrowed from traditional and crypto markets.

The total CS2 market capitalization (the combined estimated value of every tradeable item in the ecosystem) is tracked in real time, much as CoinMarketCap tracks cryptocurrency values. By late 2025, CS2’s market capitalization had peaked at more than $6 billion, but it fell by roughly 30% in a single move after a Valve update (discussed below).
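The market-cap figure itself is simple arithmetic: estimated price times circulating supply, summed over every tracked item. A minimal Python sketch with made-up items and supplies; only the roughly $6 billion peak and the ~30% drop come from the paragraph above.

```python
def market_cap(items: dict[str, tuple[float, int]]) -> float:
    """Sum of estimated unit price times circulating supply for each item."""
    return sum(price * supply for price, supply in items.values())


def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


# Hypothetical items: name -> (estimated price in USD, circulating supply)
tracked = {
    "Item A": (10.00, 100),
    "Item B": (2.50, 40),
}
print(market_cap(tracked))  # 1100.0

# A roughly 30% fall from about $6 billion wipes out about $1.8 billion.
peak = 6e9
after_update = peak * 0.7
print(f"{pct_change(peak, after_update):.1f}%, {peak - after_update:,.0f} USD")
```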

This kind of tracking shows users whether the overall market is expanding or contracting. A rising market cap usually points to growing demand and investment, while a sharp drop may signal a Valve update, a seasonal effect, or a major event in the wider gaming economy.

Data-driven analytics platforms now collect information from more than 20 marketplaces and offer dashboards with trend analysis, trading volumes, and price movements that would not look out of place on a professional stock-trading platform. The CS2 economy has matured to the point where calling it “just a game” no longer fits.

Trade-Up Contracts: The Economy’s Built-In Upgrade Path

Valve didn’t just build a marketplace; it built game mechanics directly into the economy. The most important of these is the Trade-Up Contract, which lets a player exchange ten skins of one rarity tier for a single skin of the next tier up.

The concept sounds simple, but the math behind it is not. Which skin you receive depends on the collections your input skins come from, while the output’s float is calculated from the average float of all the inputs, scaled to the output skin’s float range. That means a skilled player can shift the odds toward a particular result by choosing inputs carefully.
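The float rule described above can be sketched in a few lines of Python. It follows the paragraph's description (average input float, scaled into the output skin's minimum-to-maximum range); Valve's exact in-game formula may differ or may have changed, and the example floats and range are hypothetical.

```python
from statistics import fmean


def trade_up_output_float(input_floats: list[float], out_min: float, out_max: float) -> float:
    """Project the output float: average input float scaled into [out_min, out_max]."""
    if not input_floats:
        raise ValueError("need at least one input skin")
    if not 0.0 <= out_min < out_max <= 1.0:
        raise ValueError("output range must satisfy 0 <= min < max <= 1")
    avg = fmean(input_floats)
    return out_min + avg * (out_max - out_min)


# Ten hypothetical inputs averaging 0.10, output skin with a 0.00-0.80 float range.
contract_floats = [0.06, 0.14] * 5
print(round(trade_up_output_float(contract_floats, 0.00, 0.80), 4))  # 0.08
```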

For example, if seven input skins come from one collection and three come from another, the output will most likely come from the first collection. If that collection’s next-tier skin is worth $500 and the second collection’s is worth $30, you can engineer a gamble heavily weighted in your favor.
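The collection weighting can be sketched as follows. It assumes the commonly described community model in which each input skin adds one "ticket" to every next-tier skin in its own collection, and each skin's odds are its share of all tickets; treat that model, the function name, and the collection and skin names as assumptions. With one next-tier skin per collection, as in the example above, it gives 70% and 30%.

```python
from collections import Counter


def outcome_probabilities(
    input_collections: list[str], next_tier: dict[str, list[str]]
) -> dict[str, float]:
    """Odds of each possible output skin under the assumed ticket model."""
    tickets = Counter()
    for collection, count in Counter(input_collections).items():
        outputs = next_tier.get(collection)
        if not outputs:
            raise ValueError(f"{collection} has no skins at the next tier")
        for skin in outputs:
            tickets[skin] += count  # each input gives one ticket per possible output
    total = sum(tickets.values())
    return {skin: n / total for skin, n in tickets.items()}


# Hypothetical contract: seven inputs from one collection, three from another.
contract_inputs = ["Collection A"] * 7 + ["Collection B"] * 3
next_tier = {
    "Collection A": ["Skin X ($500)"],
    "Collection B": ["Skin Y ($30)"],
}
print(outcome_probabilities(contract_inputs, next_tier))
# {'Skin X ($500)': 0.7, 'Skin Y ($30)': 0.3}
```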

But here’s the catch — the math only works if you actually run the numbers. The inputs might cost $80 in total, but if the expected value of the output is only $60, you’re making a bad bet regardless of the potential upside. That’s why experienced traders simulate their contracts using a CS2 trade-up calculator before committing any skins. These tools predict every possible outcome with exact probabilities, float projections, and expected profit or loss.
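The expected-value check described above reduces to a weighted sum. A minimal Python sketch using the paragraph's $80 input cost; the two outcomes and their odds are hypothetical values chosen so the expected output value comes to $60.

```python
def expected_value(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted average price of the possible outputs."""
    total_p = sum(p for p, _ in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("outcome probabilities must sum to 1")
    return sum(p * price for p, price in outcomes)


def chance_of_profit(outcomes: list[tuple[float, float]], input_cost: float) -> float:
    """Probability that the output is worth more than the inputs cost."""
    return sum(p for p, price in outcomes if price > input_cost)


# Hypothetical contract: (probability, output price in USD)
outcomes = [(0.25, 120.0), (0.75, 40.0)]
input_cost = 80.0

ev = expected_value(outcomes)
print(ev - input_cost)                         # -20.0
print(chance_of_profit(outcomes, input_cost))  # 0.25
```

Here there is a one-in-four chance of landing the $120 skin, yet the contract loses $20 on average, which is the trap the paragraph warns about.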

The trade-up system was further shaken in October 2025 when Valve added the ability to trade up Covert skins into knives and gloves — something that was previously impossible. Players could suddenly turn five Covert skins worth roughly $5-10 each into knives that were previously selling for $1,000+. The result? Knife prices crashed overnight, the total market cap dropped by hundreds of millions, and the entire pricing hierarchy had to readjust.

The Tech Infrastructure Behind It All

Underneath all these charts and calculations sits a good deal of technology. Real-time data feeds, APIs, and aggregators pull pricing information from many marketplaces at once.
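To illustrate what pulling prices from several marketplaces at once can look like, here is a minimal Python asyncio sketch. The marketplace identifiers, stub prices, and the `fetch_price` function are hypothetical stand-ins; a real aggregator would call each platform's actual API and also deal with authentication, rate limits, and currency conversion.

```python
import asyncio

# Hypothetical stub responses standing in for real marketplace APIs.
# None simulates a marketplace that is temporarily unreachable.
STUB_PRICES = {
    "marketplace_a": 101.50,
    "marketplace_b": 97.25,
    "marketplace_c": None,
}


async def fetch_price(marketplace: str, item: str) -> float:
    """Pretend API call; a real client would request `item` from the platform."""
    await asyncio.sleep(0.01)  # simulated network latency
    price = STUB_PRICES[marketplace]
    if price is None:
        raise ConnectionError(f"{marketplace} is unavailable")
    return price


async def aggregate(item: str) -> dict[str, float]:
    """Query every marketplace concurrently and keep the ones that answered."""
    results = await asyncio.gather(
        *(fetch_price(name, item) for name in STUB_PRICES),
        return_exceptions=True,  # one failing source should not sink the rest
    )
    return {
        name: result
        for name, result in zip(STUB_PRICES, results)
        if not isinstance(result, Exception)
    }


quotes = asyncio.run(aggregate("AK-47 | Redline (Field-Tested)"))
print(quotes)                       # {'marketplace_a': 101.5, 'marketplace_b': 97.25}
print(min(quotes, key=quotes.get))  # marketplace_b
```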

Third parties use marketplace APIs to build automated trading bots, monitoring services, portfolio management tools, and similar applications. Some platforms offer their own developer APIs with endpoints for price recommendations, market analytics, and cross-platform price comparison, in effect creating the financial infrastructure layer the CS2 economy needed to operate at scale.

Steam itself provides API access for inventory data, market listings, and transaction history, which third-party services use to power everything from inventory valuation tools to automated trading systems.

The sophistication has reached a point where the CS2 economy has its own version of Bloomberg terminals — dashboards that track market-wide trends, individual item price histories, trading volumes, liquidity scores, and even volatility metrics. Professional traders monitor these tools the same way a Wall Street analyst watches stock tickers.
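One common way a dashboard could define a volatility metric (an assumption here, since the text does not say how any specific tool computes it) is the standard deviation of day-over-day log returns. A minimal Python sketch with made-up price histories:

```python
import math
from statistics import stdev


def daily_volatility(prices: list[float]) -> float:
    """Sample standard deviation of day-over-day log returns."""
    if len(prices) < 3:
        raise ValueError("need at least three prices (two returns)")
    if any(p <= 0 for p in prices):
        raise ValueError("prices must be positive")
    returns = [math.log(today / yesterday) for yesterday, today in zip(prices, prices[1:])]
    return stdev(returns)


# Hypothetical daily prices (USD) for two skins.
steady = [100.0, 101.0, 100.0, 101.0, 100.0]
swingy = [100.0, 110.0, 100.0, 110.0, 100.0]
print(f"steady: {daily_volatility(steady):.4f}")
print(f"swingy: {daily_volatility(swingy):.4f}")
```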

Why It Matters Beyond Gaming

The CS2 skin economy isn’t just a curiosity — it’s a case study in how digital ownership, market dynamics, and community-driven value creation work at scale.

A few lessons stand out. First, scarcity drives value. The CS2 skin market shows that items do not need to be tangible or useful to carry real economic value.

Second, platform decisions have outsized economic impact. Valve’s single update in October 2025 erased over a billion dollars in virtual item value. No other company has that kind of direct influence over a player-driven economy of this scale.

And third, the line between gaming economies and financial markets is dissolving. When your hobby comes with real-time price tracking, market cap analytics, trade-up calculators, and cross-platform arbitrage opportunities, you’re not just playing a game anymore. You’re participating in a micro-economy that happens to live inside one.

Whether you’re a casual CS2 player who’s never sold a skin or a veteran trader running profit calculations on every drop, the scale and sophistication of what’s been built here is worth paying attention to. An $8 billion economy that runs on cosmetic pixels, community trust, and a few really good APIs — that’s the kind of thing you only find in gaming.

NHS England gives Palantir contractors broader access to patient data

A leaked internal briefing note describes a new admin role on the £330m Federated Data Platform that lets external staff bypass case-by-case data approvals. Patient groups and Labour MPs have called the change dangerous.

NHS England has decided to allow external personnel from contractors, including Palantir, to access identifiable patient data through a new administrative role on its main data platform, the Guardian reported on Sunday, citing an internal briefing note.

The change applies to the National Data Integration Tenant, a controlled environment that NHS England describes as a “haven” for identifiable patient data before that data is pseudonymised and passed into other systems connected to the Federated Data Platform (FDP).

Under existing rules, anyone working on the platform has to apply for approval to access specific datasets, a process known as a Controlled Data Access (CDA) request.

The briefing note seen by the Guardian says NHS England is creating a new “admin” role that grants broader permissions to approved external staff in a single approval, on the basis that applying for individual CDAs had become “too inconvenient”.

Palantir Technologies won the £330m FDP contract in 2023 and is the primary external contractor on the platform. NHS England has said that anyone external requiring access under the new arrangement must hold government security clearance and be approved by an NHS England director or more senior official. The list of contractors with potential access also includes consultancy firms supporting the programme.
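The difference between the two access routes can be sketched as a toy permission check in Python. This is a hypothetical simplification for illustration only, not NHS England's or Palantir's actual implementation, and the class, function, and dataset names are invented. Per the reporting, the existing route needs a CDA approval for each dataset, while the new role grants broader access through a single approval that requires security clearance and sign-off by a director or more senior official.

```python
from dataclasses import dataclass, field


@dataclass
class PlatformUser:
    name: str
    # One entry per approved Controlled Data Access (CDA) request.
    cda_approvals: set[str] = field(default_factory=set)
    # Hypothetical flag for the new broad "admin" role.
    is_admin: bool = False


def grant_admin(user: PlatformUser, has_clearance: bool, director_approved: bool) -> None:
    """Single approval step for the admin role (conditions as reported)."""
    if not (has_clearance and director_approved):
        raise PermissionError("admin role needs security clearance and director sign-off")
    user.is_admin = True


def can_access(user: PlatformUser, dataset: str) -> bool:
    if user.is_admin:
        return True  # in this toy model, one approval covers every dataset
    return dataset in user.cda_approvals  # otherwise, one CDA per dataset


contractor = PlatformUser("external engineer", cda_approvals={"dataset_1"})
print(can_access(contractor, "dataset_2"))  # False
grant_admin(contractor, has_clearance=True, director_approved=True)
print(can_access(contractor, "dataset_2"))  # True
```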

The Federated Data Platform is designed to pull operational data from trusts across the NHS into a single environment for planning, waiting-list management and resource allocation.

Identifiable patient information is meant to remain inside the National Data Integration Tenant, with only pseudonymised or aggregate data passed to downstream FDP modules. The new admin role applies to staff working inside the tenant itself.

Patient groups and several Labour MPs criticised the change. Labour MP Rachael Maskell told the Guardian the move was “dangerous” and urged ministers to intervene to halt the broader FDP project. medConfidential, a patient-data-rights group, said the new role represented a material shift in how identifiable data is governed inside NHS England’s largest data programme.

NHS England said the change had been internally approved by its information-governance team and that all access remained subject to existing legal and clinical-safety frameworks.

Palantir declined to comment on the specifics of the access arrangement. The company has previously said it processes NHS data only on instructions from NHS England and does not own or commercialise the underlying data.

The change reignites a long-running political dispute over the FDP contract. Critics have argued from the start of the procurement process that giving a single US-headquartered defence and intelligence contractor central access to the NHS data spine creates concentration risk and undermines public trust.

NHS England and the Department of Health have defended the contract on the basis that it improves operational efficiency and reduces clinical-safety risk by consolidating fragmented data systems.

Wes Streeting, the Health Secretary, has not made a public statement on the latest disclosure. The government’s broader position has been that the FDP is essential to NHS modernisation and that data-access processes are subject to robust controls.

The briefing note captures the trade-off at the heart of the new admin role: faster onboarding of external staff versus tighter case-by-case oversight of which datasets they access.

The Information Commissioner’s Office has not formally commented on whether the new role triggers any additional regulatory review. The Guardian’s reporting did not indicate when the role was scheduled to take effect; NHS England did not provide a date when asked.

Hantavirus Conspiracy Theories Are Already Spreading Online

Conspiracy theorists, wellness influencers, and grifters have already started promoting wild claims about the hantavirus outbreak that began aboard the MV Hondius, a cruise ship on the Atlantic.

Some conspiracy theorists compared the outbreak to the Covid-19 pandemic, claiming it was another effort to control the global population, while others pushed a false narrative that the Covid-19 vaccine caused hantavirus. Many others promoted ivermectin as a treatment, using the incident as a way to sell emergency medical kits featuring the antiparasitic drug typically used as a horse dewormer.

In more recent days, many of these same people spreading conspiracy theories have promoted the baseless and antisemitic claims that the entire incident is a false flag orchestrated by Israel.

Conspiracy theories flooding social media in response to breaking news are nothing new, but what is notable about those being pushed around the hantavirus outbreak is just how closely they echo the conspiracy theories promoted during the Covid-19 pandemic.

“One of the most striking shifts since the Covid pandemic is how rapidly misinformation narratives now organize themselves around emerging outbreaks,” Katrine Wallace, an epidemiologist at the University of Illinois Chicago School of Public Health, tells WIRED.

“Within hours of the first hantavirus headlines, social media accounts were already promoting ivermectin, attributing the outbreak to Covid vaccines, and warning about a hantavirus vaccine that does not exist. The claims themselves were often contradictory, but that contradiction no longer appears to limit their spread.”

Once the hantavirus outbreak started making headlines around the world, conspiracy theorists and grifters jumped into action, spreading dangerously ill-informed claims and, of course, trying to sell people ivermectin.

“Ivermectin should work against it,” Mary Talley Bowden wrote on X. Bowden, a doctor and a prominent source of medical misinformation, has promoted ivermectin as a treatment for Covid-19 and prescribed it to a Covid-19 patient. Hours after her first post on hantavirus went viral, she followed up to say that she is selling ivermectin to Texans. Bowden did not respond to a request for comment.

Her post, which has been viewed 4 million times, was shared by former Congresswoman Marjorie Taylor Greene, who added that vitamin D and zinc would help fight the infection. Greene even claimed that not getting the Covid-19 vaccine had somehow allowed her to “develop natural immunity” against hantavirus.

Greene separately claimed, without evidence, that the pharmaceutical company Moderna had deliberately manipulated the virus so that it could cash in by developing a hantavirus vaccine. Greene did not respond to a request for comment.

Other prolific health disinformation promoters boosted the ivermectin claims, including Simone Gold, the founder of Covid denial group America’s Frontline Doctors, and Peter McCullough, a disinformation peddler who promoted the “sudden death” conspiracy theory about the Covid-19 vaccine, which falsely claimed that those who received the shot were at risk of dropping dead without any warning.

McCullough is also the chief scientific officer for The Wellness Company, which has been described as “Goop for the GOP.” The company has used the hantavirus outbreak to promote a $325 “Contagion Emergency Kit” which includes both ivermectin and hydroxychloroquine.

All the false claims and posts about ivermectin gained enough traction online that the World Health Organization responded to say that there is no research to suggest ivermectin is an effective treatment for hantavirus.

Conspiracy theorists have, meanwhile, been pushing the baseless idea that a side effect of Covid vaccines includes a hantavirus infection.

Sony has yet to decide on a PS6 release date and price

Sony is still keeping things wide open when it comes to its next-generation console.

According to the company’s latest earnings call, the PlayStation 6 still has no confirmed release date or price. Sony says it is closely monitoring rising memory costs and wider market conditions before locking anything in.

The update came during Sony’s annual corporate strategy and investor Q&A session. At this session, CEO Hiroki Totoki addressed concerns about how global supply pressures could shape the company’s next console. His message was simple: nothing is decided yet.

As shared by Eurogamer, Totoki explained that the ongoing memory shortage is driving up manufacturing costs. This is increasing the overall bill of materials (BoM) for hardware like the PS6. While Sony has already secured enough components for 2026 and has some pricing agreements in place, the outlook beyond that is far less stable.

Looking further ahead, he warned that memory prices will likely remain high into FY2027, and Sony is still evaluating how that will affect production costs and retail pricing. That uncertainty is now feeding directly into PS6 planning.

“We have not yet decided at what timing we will launch the new console, or at what prices,” Totoki said, adding that Sony is running multiple scenarios to figure out its next move. That includes, notably, the possibility of adjusting its business model. However, the company didn’t expand on what that could actually mean for players.

For now, it’s all positioning rather than specifics. Sony isn’t confirming features, pricing tiers, or even a launch window. The company is just acknowledging that external pressures, particularly AI-driven demand for memory, are reshaping its hardware strategy.

The comments echo wider industry concerns, with companies across the tech space warning about supply bottlenecks affecting future products. Within PlayStation itself, former executives and hardware leads have also hinted that the next generation may need a different approach compared to the PS5 era.

As it stands, the PS6 remains firmly in the planning phase. And while speculation around features and timelines continues to build, Sony’s message is clear: the final shape of its next console will depend as much on the global market as it does on its own roadmap.

Waymo issues recall to deal with a flooding problem

Waymo has issued a software update to its fleet of nearly 4,000 vehicles to help them avoid flooded roads as part of a recall announced by the National Highway Traffic Safety Administration (NHTSA) on Tuesday.

But the company hasn’t fully solved the problem of how its vehicles behave in these conditions. In documents released by NHTSA, the federal safety regulator says Waymo is still “developing the final remedy for this recall.”

The issue appears to be that Waymo’s robotaxis were slowing, but not stopping, when encountering flooded roads that they could not traverse, according to NHTSA. Robotaxis using both Waymo’s fifth- and sixth-generation autonomous vehicle systems are affected.

The regulator said the recall applies to 3,791 vehicles — giving us a more up-to-date understanding of just how many vehicles Waymo has on the roads in around a dozen U.S. cities.

Waymo has now issued multiple recalls for its self-driving cars. The company’s first recall came in February 2024 after it discovered two robotaxis in Phoenix had separately crashed into the same towed vehicle. Since then, Waymo has issued recalls to fix low-speed crashes with parking gates and telephone poles, as well as to address illegal driving in the vicinity of school buses.

Waymo decided to issue the recall in late April after its robotaxis struggled to navigate flooding in central Texas; in one incident, an empty robotaxi was swept away in San Antonio. The company has also paused operations in the city.

The initial update sent to its fleet places “restrictions at times and in locations where there is an elevated risk of encountering a flooded, higher-speed roadway,” according to NHTSA.

“We have identified an area of improvement regarding untraversable flooded lanes specific to higher-speed roadways, and have made the decision to file a voluntary software recall with NHTSA related to this scenario,” Waymo said in a statement. “We are working to implement additional software safeguards and have put mitigations in place, including refining our extreme weather operations during periods of intense rain, limiting access to areas where flash flooding might occur.”

ABC Shows A Backbone In FCC Fight, Shows FCC Manufactured A Controversy Surrounding James Talarico

from the censorial-fascist-weirdos dept

ABC/Disney, like most major media companies, has spent much of its time during America’s bout with authoritarianism being a feckless wimp. The company was quick to ditch its already fleeting embrace of civil rights to please our dim, racist president, and was just as quick to pay Trump a $15 million bribe to settle a baseless Trump lawsuit it could have easily won.

But as Trump’s health and power become shakier, ABC appears to be showing the faint outline of a backbone.

ABC/Disney execs are now more directly accusing the Trump administration of violating the First Amendment with its endless threats to pull the company’s broadcast licenses if it platforms journalists, comedians, or talk show hosts who refuse to kiss the administration’s ass.

Quick background: we’ve noted repeatedly how Trump FCC boss Brendan Carr has been abusing the FCC’s dated “equal opportunity” (or “equal time”) rule to try and threaten daytime and late night talk shows with government retribution if they refuse to enthusiastically coddle Republicans.

Recently, the Carr FCC took the unprecedented step of demanding that ABC-owned Houston affiliate KTRK file a petition for declaratory ruling to the FCC, explaining to the agency why it didn’t file the appropriate paperwork for a February 2nd appearance by Democrat James Talarico on The View (the traction Talarico is making among Christians clearly seems to worry the administration).

So KTRK last week filed its petition for declaratory ruling. And it shows slightly more backbone than we’ve become used to, directly stating that the Trump FCC’s actions violate the First Amendment and are having a “chilling effect” on free speech. While the petition is technically on behalf of KTRK, it was signed by Paul Clement, a former Bush-era solicitor general and a very experienced Supreme Court litigator.

Talk shows have historically been exempt from these dated, golden-era-of-television rules, which require that any airing of a political candidate on “publicly owned” airwaves be countered with an appearance by a candidate from the opposing party. But Carr isn’t interested in equilibrium; he’s interested in abusing FCC authority to try and silence critics of Donald Trump and his increasingly unpopular policies.

ABC’s notice to the FCC notes that the target of the administration’s censorial rage, The View, was clearly granted a Bona Fide Exemption to the rule back in 2002. Most talk shows have broadly been viewed as exempt since 1984 or so (and increasingly so, as the Internet challenged TV’s supremacy). From the ABC filing:

“The View has been broadcasting under a bona fide news exemption granted to it more than twenty years ago, consistent with longstanding Commission interpretations designed to minimize the serious First Amendment problems inherent in the equal time regime.

The View’s exemption remains valid and the constitutional infirmities in the equal time doctrine are even more pronounced today, when the broadcast airwaves account for a slice of the numerous media options through which Americans get their political information.”

Carr’s FCC has also been threatening to pull ABC’s broadcast licenses in the wake of Jimmy Kimmel making fun of the president’s wife; but as we’ve previously reported, ABC itself holds only eight broadcast licenses in total. Most ABC affiliates are actually owned by right-wing companies already loyal to Trumpism.

Here’s the interesting part: it appears the Carr FCC worked with those affiliates in advance to stage things so that ABC-owned KTRK would look like it had done something wrong.

First, the FCC tried to tell ABC and KTRK that The View being widely viewed as exempt is “not a position uniformly held by broadcasters that air the program” (it is).

But on pages 3-4 of ABC’s filing, the company notes that not only did those other affiliates not originally file paperwork for the appearance (because there was no need to, given the exemption), but the FCC personally reached out to a number of non-ABC-owned affiliates to have them file paperwork late, so that ABC-owned KTRK would appear to be an outlier that did something wrong. From ABC’s filing:

“The Bureau neglected to note, however, that while certain ABC affiliates documented Talarico’s appearance in their online public inspection files, the filings were made more than two weeks after Talarico’s appearance and apparently at the request of the FCC, which reportedly promised to eschew enforcement for the late filing. KTRK Television received no such request and no such offer, despite the Bureau specifically contacting it about the Talarico appearance less than 10 days after it occurred.”

That’s really profoundly greasy behavior. The other affiliates that the FCC pressured to file late notices of Talarico’s appearance would be companies like Sinclair, Tegna, Nexstar, Gray Media, or Scripps, most of which are owned by Trump loyalists and/or are seeking FCC approval for mergers that illegally ignore the country’s last remaining media consolidation limits.

So again, the FCC accused ABC and its directly owned affiliate of something false, then got non-ABC-owned affiliates, many of them eager for merger approval, to file paperwork they otherwise never would have filed, to make it seem like KTRK did something wrong. And since a lot of these affiliates are already very Trump-friendly propaganda mills, the FCC likely didn’t have to apply much pressure.

While it’s always possible the Trump-stocked Supreme Court makes an insane ruling in Trump’s favor, these threats to pull broadcast licenses are not fights the Trump FCC actually wants to litigate. They’re designed simply as a form of harassment that makes life so costly and difficult for companies that the targets (and everybody else) pre-emptively bow to pressure and censor themselves.

Trump and Carr expect companies to pre-emptively quiver and not put up a costly fight. And while these threats have worked for a while (because our corporate media is broadly opportunistic and pathetic), Trump’s abysmal poll numbers in the wake of the Iran war and soaring gas prices are likely instilling new confidence even among the most weak-kneed companies.

Filed Under: affiliates, brendan carr, censorship, equal time rule, fcc, first amendment, free speech, james talarico

Companies: abc, disney

Google just made Gemini for Home a lot better at running your smart home

If you have a Google smart display or speaker at home, there are new updates you should know about. Google has rolled out a fresh batch of improvements to Gemini for Home, making the assistant noticeably smarter and faster across smart speakers and displays.

Gemini for Home is getting smarter and more personal

The most interesting addition is how Gemini now uses information you’ve saved in Ask Home to answer camera-related questions. If you’ve saved a note saying your nanny’s name is Alice, you can ask Gemini when Alice arrived, and it will pull up the relevant camera footage automatically.

You can also ask for a Home Brief on your speaker or display to get a quick summary of everything that happened at home while you were away. On smart displays, Gemini will now show thumbs-up and thumbs-down buttons after most voice interactions, making it easier to give Google quick feedback.

Response times have also improved across the board. Backend processing for common commands has been optimized, so things like turning on lights, setting alarms, and managing timers should feel noticeably quicker than before.

Adult users will also now get more helpful responses to general queries, including things like cocktail recipes, while parental controls remain in place to protect younger users.

The Google Home app is getting useful upgrades too

These updates arrive alongside version 4.16 of the Google Home app, which brings its own set of improvements. Device setup is now simpler thanks to a new QR code discovery flow that automatically directs you to the right setup path for your device.

Nest Thermostat users can now pause outdoor temperature settings with a single tap, without affecting their long-term schedule. Thermostat schedule banners also now display more relevant and timely information.

Lastly, iPhone users can now manage compatible third-party thermostats and air conditioners directly in the app, catching up with what Android users could already do.

This Fire TV Soundbar Plus deal could be the easiest TV upgrade you make

Most TV speakers treat dialogue as an afterthought, burying it beneath a wall of compressed sound that makes you reach for the subtitles before the first scene is done.

The Fire TV Soundbar Plus is the answer to that frustration. With the 3.1-channel system now down from $249.99 to $174.99, a built-in subwoofer and spatial audio come at a price that makes upgrading feel like an obvious decision rather than an extravagant one.

Save 30% on Amazon’s Fire TV Soundbar Plus and enjoy films with the clarity and depth they’re supposed to have


The dedicated centre dialogue channel and Dialogue Enhancer work together to lift spoken word above background sound, so conversations stay intelligible even when a scene’s mix is working against you, without requiring any manual adjustment on your part.

That clarity sits alongside Dolby Atmos and DTS:X, which between them create three-dimensional audio with genuine movement and depth, meaning an action sequence or a concert film feels like it has physical space around it rather than arriving flat from a single point in the room.

Inside the bar, three full-range speakers, three tweeters, and two woofers distribute sound across the frequency range, and the four EQ modes – Movie, Music, Sports, and Night – let you shift the balance to match what you are actually watching without overcomplicating the process.

Setup involves a single HDMI cable into your TV’s eARC or ARC port, after which the Fire TV Soundbar Plus syncs automatically and can be controlled through your existing Fire TV remote if your device supports it, removing the need for a second remote entirely.

Bluetooth support also means the bar doubles as a speaker for music from your phone or tablet, extending its usefulness well beyond film nights and into everyday listening without any additional pairing complexity.

For anyone who has been tolerating flat, dialogue-swallowing TV speakers for longer than they should, the Fire TV Soundbar Plus at $174.99 is a clean, uncomplicated upgrade with a wall mount kit included, so the whole thing is ready to go from a single purchase.


Tech

Vapi raises $50m Series B led by Peak XV for enterprise voice AI

Peak XV leads. M12, Kleiner Perkins, and Bessemer Venture Partners join; earlier investors return. Amazon Ring, Intuit, and New York Life are named enterprise customers; total funding now stands at $72m.

Vapi, the San Francisco-based enterprise voice-AI platform, has raised $50m in a Series B round to scale its voice-agent infrastructure, the company said on Tuesday.

The round was led by Peak XV, with participation from M12 (Microsoft’s Venture Fund), Kleiner Perkins, Bessemer Venture Partners, and earlier investors. The investment brings Vapi’s total funding to $72m.

Vapi reports that its voice agents have handled more than one billion calls to date, with over one million developers and 2.7 million unique agents built on the platform. The company said it has grown its enterprise annual recurring revenue tenfold since the prior round.

Named enterprise customers include Amazon Ring, Kavak, Instawork, New York Life, UnityAI, Cherry, and Intuit. Amazon Ring uses Vapi to handle inbound customer support calls about its smart-home security devices. Jason Mitura, vice-president of software development at Ring, said in a statement that the company evaluated dozens of vendors before selecting Vapi.

“We went from zero to production in two weeks, and 100% of our inbound volume now runs through Vapi,” Mitura said, adding that customer-satisfaction scores improved following deployment.

The platform is API-native and designed to let teams build, deploy, and manage voice agents without engineering involvement in the configuration of the agent’s behaviour.

Vapi supports inbound customer service, outbound collections, candidate screening, sales-coaching simulations, and autonomous navigation of third-party IVR systems.

The company says the strongest traction has been in financial services, healthcare, insurance, automotive, and workforce management.

Co-founders Jordan Dearsley and Nikhil Gupta met at the University of Waterloo and previously built a Y Combinator-backed calendar product together.

Vapi began in mid-2023 when Dearsley wired together a voice-based AI walking companion; the product itself did not take off, but the underlying latency-optimised infrastructure became the basis for Vapi, which launched publicly on Product Hunt in March 2024.

Arnav Sahu, partner at Peak XV, framed the investment thesis in a statement. “Vapi has built a differentiated self-serve product for developers and enterprises in the massive voice-AI revolution,” Sahu said.

“In 10 years, it’s likely most calls will not have a human behind the phone. With its bottom-up, PLG approach, we believe Vapi is the next Zapier and N8N for voice-AI workflows.”

Dearsley said in the announcement that the company’s focus is on production-grade customer outcomes rather than chatbot-style automation.

“Most businesses have spent decades of time and effort, only to make their customer experience worse,” Dearsley said. “Vapi gives teams the platform to deploy voice agents that actually solve problems for customers, millions of them, every day.”

Vapi cited industry estimates that nearly $3tn of global sales are at risk in 2026 from poor customer experience, and pointed to a 2% drop in customer-satisfaction scores since 2022 as the backdrop to its enterprise traction.

The company said the next phase of its product roadmap will focus on uptime guarantees, predictable latency under load, call-level monitoring, guardrails to keep agents within defined boundaries, and escalation paths to human operators.

Tech

This Radeon RX 9070 XT deal brings the 16GB GPU down to $680

A graphics card upgrade is one of those decisions that tends to sit on a list for months, waiting for a price drop significant enough to finally make it feel justified.

That moment has arrived for the PowerColor Red Devil AMD Radeon RX 9070 XT, now down from $849.99 to $680, a saving of nearly $170 on one of the most capable mid-to-high-end cards on the market.

Save close to $170 on the AMD Radeon RX 9070 XT and unlock a new level of gaming power


The 16GB of GDDR6 memory is the headline figure here, and in practice, it means that demanding titles, high-resolution textures, and multitasking across applications all have the headroom they need without the card struggling at the edges of its capabilities.

A 2520MHz clock speed keeps frame delivery consistent under load, and the three-fan cooling arrangement is built around ring-blade technology and a direct-contact copper plate that pulls heat away from the GPU and VRAM before it has the chance to affect performance.

PowerColor has also included a Dual BIOS switch, which lets you choose between an OC mode that pushes the fans harder for lower temperatures and a Silent mode that keeps noise down while maintaining a stable thermal balance, giving you meaningful control over how the card behaves in your system.

The AMD Radeon RX 9070 XT offers three DisplayPort 2.1 outputs alongside HDMI 2.1, which means it is ready for high-refresh-rate gaming at 4K and can drive resolutions up to 7680 × 4320 for anyone running an 8K display or planning to.

Backing the hardware is a 12-layer PCB built from high thermal conductivity material and Honeywell PTM7950 phase-change compound filling the microscopic gaps between the GPU die and heatsink, all of which adds up to a card that is engineered to stay stable across long sessions rather than throttle when things get demanding.

A 900W minimum system power requirement means this card asks something of your existing setup, so it is worth confirming that your PSU meets that threshold before ordering. For a system that can support it, though, the PowerColor Red Devil AMD Radeon RX 9070 XT at $680 is a serious card at a price that is hard to argue with.
