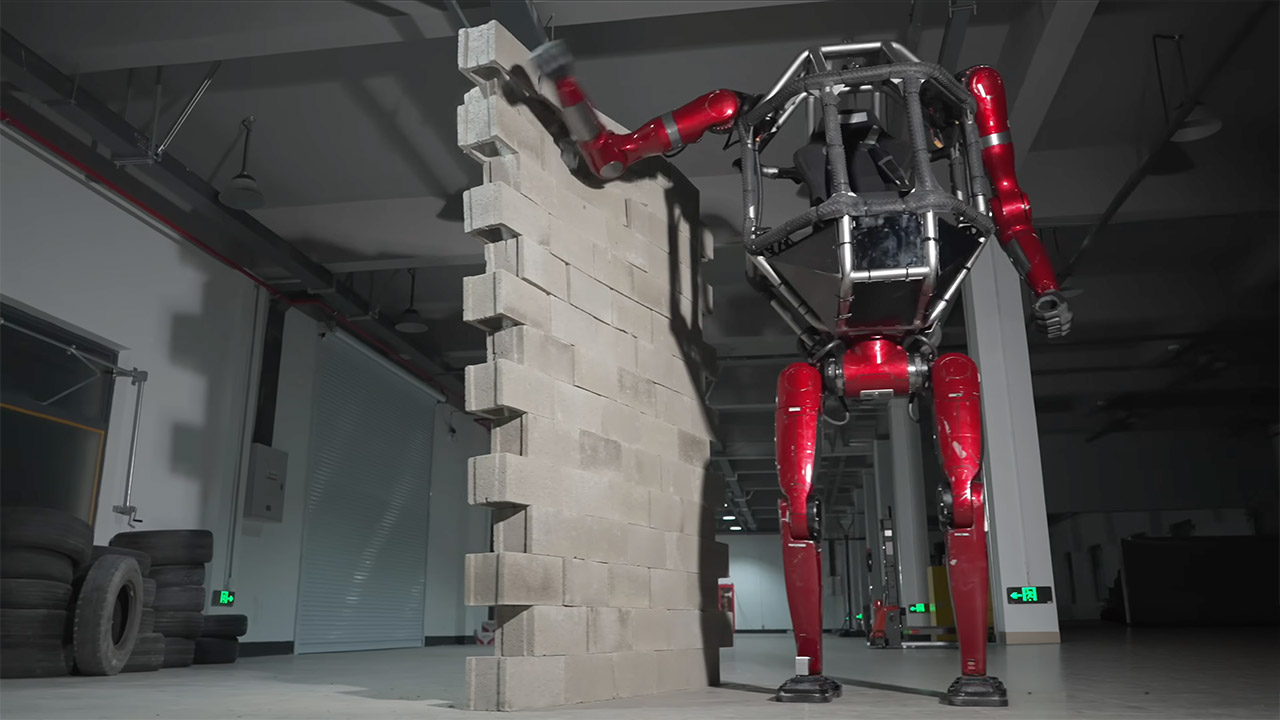

A doctor in a hospital exam room watches as a medical transcription agent updates electronic health records, prompts prescription options, and surfaces patient history in real time. A computer vision agent on a manufacturing line is running quality control at speeds no human inspector can match. Both generate non-human identities that most enterprises cannot inventory, scope, or revoke at machine speed.

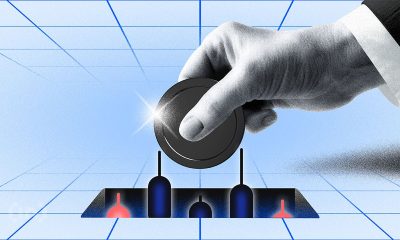

That is the structural problem keeping agentic AI stuck in pilots. Not model capability. Not compute. Identity governance.

Cisco President Jeetu Patel told VentureBeat at RSAC 2026 that 85% of enterprises are running agent pilots while only 5% have reached production. That 80-point gap is a trust problem. The first questions any CISO will ask: which agents have production access to sensitive systems, and who is accountable when one acts outside its scope? IANS Research found that most businesses still lack role-based access control mature enough for today’s human identities, and agents will make it significantly harder. The 2026 IBM X-Force Threat Intelligence Index reported a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery.

Why the trust gap is architectural, not just a tooling problem

Michael Dickman, SVP and GM of Cisco’s Campus Networking business, laid out a trust framework in an exclusive interview with VentureBeat that security and networking leaders rarely hear stated this plainly. Before Cisco, Dickman served as Chief Product Officer at Gigamon and SVP of Product Management at Aruba Networks.

Dickman said that the network sees what other telemetry sources miss: actual system-to-system communications rather than inferred activity. “It’s that difference of knowing versus guessing,” he said. “What the network can see are actual data communications … not, I think this system needs to talk to that system, but which systems are actually talking together.” That raw behavioral data, he added, becomes the foundation for cross-domain correlation, and without it, organizations have no reliable way to enforce agent policy at what he called “machine speed.”

The trust prerequisite that most AI strategies skip

Dickman argues that agentic AI breaks a pattern he says defined every prior technology transition: deploy for productivity first, bolt on security later.

“I don’t think trust is one of those things where the business productivity comes first, and the security is an afterthought,” Dickman told VentureBeat. “Trust actually is one of the key requirements. Just table stakes from the beginning.”

Observing data and recommending decisions carries consequences that stay contained. Execution changes everything. When agents autonomously update patient records, adjust network configurations, or process financial transactions, the blast radius of a compromised identity expands dramatically.

“Now more than ever, it’s that question of who has the right to do what,” Dickman said. “The who is now much more complicated because you have the potential in our reality of these autonomous agents.”

Dickman breaks the trust problem into four conditions. The first is secure delegation, which starts by defining what an agent is permitted to do and maintaining a clear chain of human accountability. The second is cultural readiness; he pointed to alert fatigue as a case study. The traditional fix, Dickman noted, was to aggregate alerts, so analysts see fewer items. With agents capable of evaluating every alert, that logic changes entirely.

“It is now possible for an agent to go through all alerts,” Dickman said. “You can actually start to think about different workflows in a different way. And then how does that affect the culture of the work, which is amazing.”

The third is token economics: every agent action carries a real computational cost. Dickman sees hybrid architectures as the answer, where agentic AI handles reasoning while traditional deterministic tools execute actions. The fourth is human judgment. For example, his team used an AI tool to draft a product requirements document. The agent produced 60 pages of repetitive filler, which immediately showed how technically responsive the architecture was, yet the output needed extensive fine-tuning to become relevant. “There’s no substitute for the human judgment and the talent that’s needed to be dextrous with AI,” he said.

What the network sees that endpoints miss

Most enterprise data today is proprietary, internal, and fragmented across observability tools, application platforms, and security stacks. Each domain team builds its own view. None sees the full picture.

Network telemetry, Dickman argued, fills in that picture because it records which systems are actually talking to each other, not which ones a team assumes need to talk.

That telemetry grows more valuable as IoT and physical AI proliferate. Computer vision agents analyzing shopper behavior and running factory-floor quality control generate highly sensitive data that demands precise access controls.

“All of those things require that trust that we started with, because this is highly sensitive data around like who’s doing what in the shop or what’s happening on the factory floor,” Dickman said.

Why siloed agent data misses the signal

“It’s not only aggregation, but actually the creation of knowledge from the network,” Dickman said. “There are these new insights you can get when you see the real data communications. And so now it becomes what do we do first versus second versus third?”

That last question reveals where Dickman’s focus lands: the strategic challenge is sequencing, not capability.

“The real power comes from the cross-domain views. The real power comes from correlation,” Dickman said. “Versus just aggregation and deduplication of alerts, which is good, but it’s a little bit basic.”

This is where he sees the most common pitfall. Team A builds Agent A on top of Data A. Team B builds Agent B on top of Data B. Each silo produces incrementally useful automation. The cross-domain insight never materializes.

Independent practitioners validate the pattern. Kayne McGladrey, an IEEE senior member, told VentureBeat that organizations are defaulting to cloning human user profiles for agents, and permission sprawl starts on day one. Carter Rees, VP of AI at Reputation, identified the structural reason. “A significant vulnerability in enterprise AI is broken access control, where the flat authorization plane of an LLM fails to respect user permissions,” Rees told VentureBeat. Etay Maor, VP of Threat Intelligence at Cato Networks, reached the same conclusion from the adversarial side. “We need an HR view of agents,” Maor told VentureBeat at RSAC 2026. “Onboarding, monitoring, offboarding.”

Agentic AI trust gap assessment

Use this matrix to evaluate any platform or combination of platforms against the five trust gaps Dickman identified. Note that the enforcement approaches in the third column reflect Cisco’s framework.

| Trust gap | Current control failure | What network-layer enforcement changes | Recommended action |
| --- | --- | --- | --- |
| Agent identity governance | IAM built for human users cannot inventory, scope, or revoke agent identities at machine speed | Agentic IAM registers each agent with defined permissions, an accountable human owner, and a policy-governed access scope | Audit every agent identity in production. Assign a human owner. Define permitted actions before expanding the scope |
| Blast radius containment | Host-based agents and perimeter controls can be bypassed; flat segments give compromised agents lateral movement | Microsegmentation enforces least-privileged access at the network layer, limiting blast radius independent of host-level controls | Implement microsegmentation for every agent-accessible system. Start with the highest-sensitivity data (PHI, financial records) |
| Cross-domain visibility | Siloed observability tools create fragmented views; Team A’s agent data never correlates with Team B’s security telemetry | Network telemetry captures actual system-to-system communications, feeding a unified data fabric for cross-domain correlation | Unify network, security, and application telemetry into a shared data fabric before deploying production agents |
| Governance-to-enforcement pipeline | No formal process connecting business intent to agent policy to network enforcement | Policy-to-enforcement pipeline translates governance decisions into machine-speed network rules | Establish a formal pipeline from business-intent definition to automated network policy enforcement |
| Cultural and workflow readiness | Organizations automate existing workflows rather than redesigning for agent-scale processing | Network-generated behavioral data reveals actual usage patterns, informing workflow redesign | Run a 30-day telemetry capture before designing agent workflows. Build around observed data, not assumptions |

A broken ankle and a microsegmentation lesson

Dickman grounded his framework in a scenario from his own life. A family member recently broke an ankle, which put him in a hospital exam room watching a medical transcription agent update the EHR, prompt prescription options, and surface patient history in real time. The doctor approved each decision, but the agent handled tasks that previously required manual entry across multiple systems.

The security implications hit differently when it is a loved one’s records on the screen.

“I would call it do governance slowly. But do the enforcement and implementation rapidly,” he said. “It must be done in machine speed.”

It starts with agentic IAM, where each agent is registered with defined permitted actions and a human accountable for its behavior.

“Here’s my set of agents that I’ve built. Here are the agents. By the way, here’s a human who’s accountable for those agents,” Dickman said. “So if something goes wrong, there’s a person to talk to.”
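
To make the registration idea concrete, here is a minimal sketch of what such an inventory could look like. It is an illustration only, not Cisco's implementation: the `AgentRecord` and `AgentRegistry` names, the action strings, and the in-memory storage are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One registered agent identity (hypothetical schema)."""
    agent_id: str
    owner: str                         # the accountable human
    permitted_actions: frozenset[str]  # everything else is denied
    revoked: bool = False


class AgentRegistry:
    """In-memory stand-in for an agentic IAM inventory (illustrative only)."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, agent_id: str, owner: str,
                 permitted_actions: set[str]) -> AgentRecord:
        # Refuse to register an agent nobody is accountable for.
        if not owner:
            raise ValueError("every agent needs an accountable human owner")
        record = AgentRecord(agent_id, owner, frozenset(permitted_actions))
        self._agents[agent_id] = record
        return record

    def is_allowed(self, agent_id: str, action: str) -> bool:
        record = self._agents.get(agent_id)
        # Unknown or revoked agents are denied by default.
        if record is None or record.revoked:
            return False
        return action in record.permitted_actions

    def revoke(self, agent_id: str) -> None:
        # Revocation takes effect on the very next permission check.
        self._agents[agent_id].revoked = True

    def owner_of(self, agent_id: str) -> str:
        # "If something goes wrong, there's a person to talk to."
        return self._agents[agent_id].owner
```

In this sketch, a transcription agent registered with only a hypothetical `"ehr.update_note"` action would be refused a prescription order, and revoking it blocks every later request without touching the agent itself.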

That identity layer feeds microsegmentation — a network-enforced boundary Dickman says enforces least-privileged access and limits blast radius.

“Microsegmentation guarantees that least-privileged access,” Dickman said. “You’re not relying on a bunch of host agents, which can be bypassed or have other issues.”
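
The least-privileged idea behind microsegmentation can be reduced to a default-deny allow-list of flows. The sketch below is a toy model under stated assumptions: the segment names, the port, and the `Flow` and `is_flow_permitted` names are hypothetical, and a real deployment would enforce the policy in network infrastructure, not in application code.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    source: str       # segment the caller sits in, e.g. an agent's segment
    destination: str  # segment of the target system
    port: int


# Hypothetical allow-list: any flow not listed here is denied.
ALLOWED_FLOWS: frozenset[Flow] = frozenset({
    Flow("transcription-agents", "ehr-api", 443),
})


def is_flow_permitted(flow: Flow,
                      allowed: frozenset[Flow] = ALLOWED_FLOWS) -> bool:
    """Default-deny check: only explicitly listed flows pass."""
    return flow in allowed
```

Because the check lives outside the host, a compromised agent in the `transcription-agents` segment still cannot reach, say, a billing database: that flow was never listed.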

If the governance model works for a medical transcription agent handling patient records in an emergency department, it scales to less sensitive enterprise use cases.

Five priorities before agents reach production

1. Force cross-functional alignment now. Define what the organization expects from agentic AI across line-of-business, IT, and security leadership. Dickman sees the human coordination layer moving more slowly than the technology. That gap is the bottleneck.

2. Get IAM and PAM governance production-ready for agents. Dickman called out identity and access management and privileged access management specifically as not mature enough for agentic workloads today. Solidify the governance before scaling the agents. “That becomes the unlock of trust,” he said. “Because when the technology platform is ready, you then need the right governance and policy on top of that.”

3. Adopt a platform approach to networking infrastructure. A platform strategy enables data sharing across domains in ways fragmented point solutions cannot. That shared foundation is what makes the cross-domain correlation in the trust gap assessment above operationally real.

4. Design hybrid architectures from the start. Agentic AI handles reasoning and planning. Traditional deterministic tools execute the actions. Dickman sees this combination as the answer to token economics: it delivers the intelligence of foundation models with the efficiency and predictability of conventional software. Do not build pure-agent systems when hybrid systems cost less and fail more predictably.

5. Make the first use cases bulletproof on trust. Pick two or three high-value use cases and build them with role-based access control, privileged access management, and microsegmentation from day one. Even modest deployments delivered with best practices intact build the organizational confidence that accelerates everything after.

“You can guarantee that trust to the organization, and that will unleash the speed,” Dickman said.
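
The hybrid pattern in priority 4 can be sketched as a split between a reasoning step and a fixed table of deterministic tools. Everything here is assumed for illustration: `plan` is a hard-coded stand-in for a model call, and the tool names and alert strings are invented.

```python
from typing import Callable


# Deterministic tools: plain functions with predictable behavior and cost.
def restart_service(name: str) -> str:
    return f"restarted {name}"


def open_ticket(summary: str) -> str:
    return f"ticket opened: {summary}"


# The only actions the system can ever take, whatever the agent proposes.
TOOLS: dict[str, Callable[[str], str]] = {
    "restart_service": restart_service,
    "open_ticket": open_ticket,
}


def plan(alert: str) -> tuple[str, str]:
    """Stand-in for the agent's reasoning step (a model call in practice)."""
    if "unresponsive" in alert:
        return ("restart_service", "billing-api")
    return ("open_ticket", alert)


def execute(tool_name: str, argument: str) -> str:
    """Run a proposed action only if it maps to a known deterministic tool."""
    tool = TOOLS.get(tool_name)
    if tool is None:
        raise PermissionError(f"unknown tool: {tool_name}")
    return tool(argument)


def handle(alert: str) -> str:
    tool_name, argument = plan(alert)
    return execute(tool_name, argument)
```

The design choice is that tokens are spent only in `plan`. Execution stays in conventional code, so a bad proposal fails loudly at the tool lookup and never reaches a production system.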

That is the structural insight running through every section of this conversation. The 85% of enterprises stuck in pilot mode are not waiting for better models. They are waiting for the identity governance, the cross-domain visibility, and the policy enforcement infrastructure that makes production deployment defensible. Whether they build on Cisco’s platform or assemble their own, Dickman’s framework holds: identity governance, cross-domain visibility, policy enforcement. None of those prerequisites is optional.

The organizations that satisfy them first will deploy agents at a pace the rest cannot match, because every new agent inherits the trust architecture the first ones required. The ones still debating whether to start will watch that gap widen. Theoretical trust does not ship.
