This sponsored article is brought to you by Applied Materials.

At pivotal moments in history, progress has required more than individual brilliance. The most consequential breakthroughs — such as those achieved under the Human Genome Project — required a new operating paradigm: Concentrate the world’s best talent around a single mission, establish a common platform, share critical infrastructure, and collapse feedback loops. When stakes are high and timelines are compressed, sequential and siloed innovation simply cannot keep pace.

Today’s AI era is creating an engineering race with similar demands. Every company is pushing to deliver higher-performance AI systems, faster. But performance is no longer defined by compute alone. AI workloads are increasingly dominated by the movement of data: In many cases, moving bits consumes as much — or more — energy than compute itself. As a result, reducing energy per bit can extend system‑level performance alongside gains in peak compute.

The path to energy‑efficient AI therefore runs through system‑level engineering, spanning three tightly interconnected domains:

- Logic, where performance per watt depends on efficient transistor switching, low‑loss power, and signal delivery through dense wiring stacks.

- Memory, where surging bandwidth and capacity demands expose the memory wall, with processor capability advancing faster than memory access.

- Advanced packaging, where 3D integration, chiplet architectures, and high‑density interconnects bring compute and memory closer together — enabling system designs monolithic scaling can no longer sustain.

These domains can no longer be optimized independently. Gains in logic efficiency stall without sufficient memory bandwidth. Advances in memory bandwidth fall short if packaging cannot deliver proximity within thermal and mechanical constraints. Packaging, in turn, is constrained by the precision of both front‑end device fabrication and back‑end integration processes.

In the angstrom era, the hardest problems arise at the boundaries — between compute and memory in the package, front‑end and back‑end integration, and the tightly coupled process steps needed for precise 3D fabrication. And it is precisely this boundary‑driven complexity where the traditional innovation model breaks down.

The Traditional R&D Workflow Is Too Slow for Angstrom‑Era AI

For decades, the semiconductor industry’s R&D model has resembled a relay race. Capabilities are developed in one part of the ecosystem, handed off downstream through integration and manufacturing, evaluated by chip and system designers, and only then fed back for the next iteration. That model worked when progress was dominated by relatively modular steps that could be scaled independently and simply dropped into the manufacturing flow.

But the AI timeline has upended these rules. At angstrom‑scale dimensions, the physics enforces inescapable coupling across the entire stack: materials choices shape integration schemes; integration defines design rules; design rules dictate power delivery; wiring sets thermal budgets; and thermals ultimately constrain packaging scaling. System architects simply cannot wait 10–15 years for each major semiconductor technology inflection to mature.

Representing a roughly $5 billion investment, EPIC is the largest commitment to advanced semiconductor equipment R&D in U.S. history.

A long‑term perspective is essential to align materials innovation with emerging device architectures — and to develop the tools and processes required to integrate both with manufacturable precision. At Applied Materials, together with our customers, we are charting a course across the next 3–4 generations, extending as far as 10 years down the roadmap.

The angstrom era demands that we break down silos and bring together the industry’s best minds — from leading companies to leading academic institutions. If the problem is coupled, the solution must be coupled. If the timeline is compressed, the learning loop must be compressed. It’s not enough to just innovate — we must innovate how we innovate.

EPIC: A Center and Platform for High‑Velocity Co‑Innovation

This is the challenge that Applied Materials EPIC Center is designed to solve.

Representing a roughly US $5 billion investment, EPIC is the largest commitment to advanced semiconductor equipment R&D in U.S. history. When it opens in 2026, it will deliver state‑of‑the‑art cleanroom capabilities built from the ground up to shorten the path from early‑stage research to full‑scale manufacturing. But the facilities are only one component of the model. EPIC is also a platform, an operating system for high-velocity co‑innovation that revolutionizes how ideas move from the lab to the fab.

EPIC is a platform, an operating system for high-velocity co‑innovation that revolutionizes how ideas move from the lab to the fab.Applied Materials

EPIC is a platform, an operating system for high-velocity co‑innovation that revolutionizes how ideas move from the lab to the fab.Applied Materials

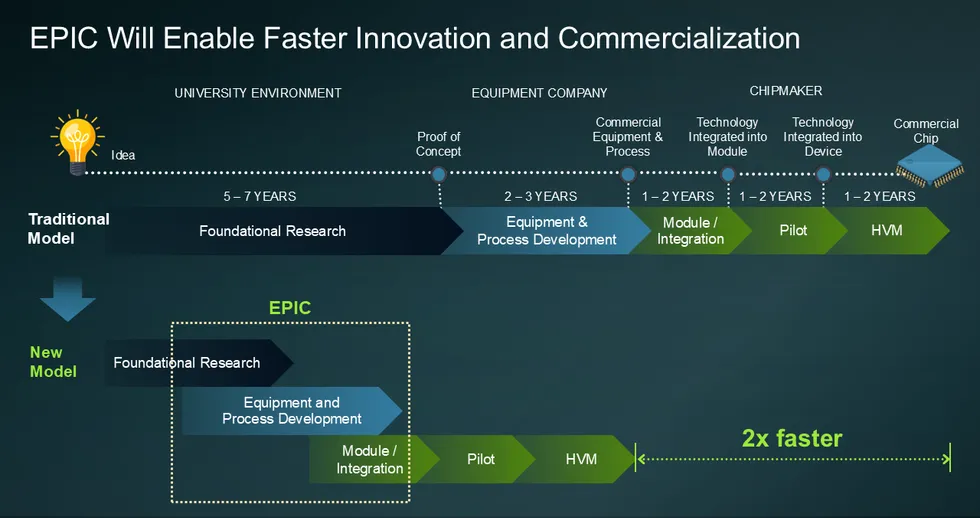

The EPIC model compresses the traditional workflow. Customer engineers work side‑by‑side with Applied technologists from day one — moving beyond isolated process optimization and downstream handoffs. Within a shared, secure environment, EPIC tightly integrates atomistic modeling, test vehicles, process development, validation, and metrology feedback. Constraints that once surfaced late in development are identified and addressed early.

The result is a potentially 2x faster path that benefits the entire ecosystem under one roof:

- Chipmakers gain earlier access to Applied’s R&D portfolio, faster learning cycles, and accelerated transfer of next‑generation technologies into high‑volume manufacturing.

- Ecosystem partners gain earlier access to advanced manufacturing technology and collaboration opportunities that expand what is possible through materials innovation.

- Academic institutions gain opportunities to strengthen the lab‑to‑fab pipeline and help develop future semiconductor talent.

Building on decades of co‑development, we are reinventing the innovation pipeline with our partners across logic, memory, and advanced packaging to deliver the next leap in energy‑efficient AI.

Accelerating Advanced Logic

Logic remains the engine of AI compute. In the angstrom era, however, system‑level gains are increasingly constrained by power and energy. Extending AI performance now depends on architectures that deliver more performance per watt — accelerating the move to 3D devices such as gate‑all‑around (GAA) transistors, which boost density within a compact footprint while preserving power efficiency.

These architectural shifts are unfolding at unprecedented scale, with the logic roadmap already extending beyond first‑generation GAA toward more advanced designs. One key example is GAA with backside power delivery, which relocates thick power lines to the backside of the wafer, reducing resistive losses and freeing front‑side routing for tighter logic cell integration. Another example brings adjacent GAA PMOS and NMOS transistors closer together while inserting a dielectric isolation wall between them to minimize electrical interference. Further out, complementary FETs (CFETs) push density scaling even more by stacking PMOS and NMOS devices directly atop one another.

While these architectures deliver compelling gains in performance per watt and logic density without relying solely on tighter lithography, they significantly raise integration complexity. Manufacturing a single GAA device today can involve more than 2,000 tightly interdependent process steps. At the same time, wiring stacks continue to grow taller and denser to connect these advanced logic devices. Modern leading‑edge GPUs now in development pack more than 300 billion transistors into an area little larger than a postage stamp, interconnected by over 2,000 miles of wiring.

At this level of complexity, the process steps used to create these precise 3D devices and wiring stacks cannot be optimized independently. Design and process must evolve in lockstep, and materials innovation and fabrication methods must advance alongside device architecture. EPIC’s co‑innovation model is designed to accelerate exactly this convergence — enabling logic compute to continue advancing the frontiers of AI at the pace the roadmap demands.

Powering the Memory Roadmap

At the same time, the AI computing era is fundamentally reshaping how data is generated, moved, and processed — making memory technologies, especially DRAM, central to delivering the energy‑efficient performance AI systems require. As models grow larger and more data‑hungry, the DRAM roadmap is shifting toward architectures that deliver higher density, greater bandwidth, and faster access per watt.

At the DRAM cell level, this shift is driving a transition from 6F² buried‑channel array transistors (BCAT) to more compact 4F² architectures, which orient the transistor vertically to boost density and reduce chip area. Looking beyond 4F², sustaining gains in performance per watt will require moving past what 2D scaling alone can deliver. The industry is therefore turning to 3D DRAM, stacking memory cells vertically to add capacity within a constrained footprint. As these structures grow taller and aspect ratios intensify, high-mobility materials engineering in three dimensions becomes increasingly critical to performance and reliability.

Beyond the memory cell array, another powerful lever for DRAM scaling is shrinking the peripheral circuitry, which includes logic transistors and interconnect wiring. One emerging approach places select periphery functions beneath the DRAM array by bonding two wafers — one optimized for the DRAM cells and the other for CMOS logic — using multiple wiring layers.

In parallel, DRAM performance is being extended by leveraging logic‑proven enhancers in the memory periphery. These include mobility boosters such as embedded silicon germanium and stress films, along with wiring upgrades like improved low‑k dielectrics and advanced copper interconnects. Memory manufacturers are also transitioning periphery transistors from planar devices to FinFET architectures, following the logic roadmap to further improve I/O speed. These valuable inflections are central to EPIC’s mission — where they can be co-developed and rapidly validated for next‑generation memory systems.

Driving System Scaling With Advanced Packaging

As data movement becomes the dominant energy cost in AI systems, advanced packaging has emerged as a critical lever for improving system‑level efficiency—shortening interconnect distances, increasing bandwidth density, and reducing the power required to move data between logic and memory.

High‑bandwidth memory (HBM) marks a major inflection along this path. By stacking DRAM dies — scaling to 16 layers and beyond — and placing memory much closer to the processor, HBM enables rapid access to ever‑larger working datasets. This delivers step‑function gains in both bandwidth and energy efficiency.

More broadly, the rise of 3D packages such as HBM underscores why advanced packaging is becoming central to the AI era. Packaging now addresses system‑level constraints that logic and memory device scaling alone can no longer overcome. It also enables a move away from monolithic systems‑on‑chip toward chiplet‑based architectures, as AI workloads increasingly demand flexible designs that combine logic, memory, and specialized accelerators optimized for specific tasks.

A vital technology powering this roadmap is hybrid bonding. With interconnect pitches approaching those of on‑chip wiring, conventional bumps and microbumps run into fundamental limits in density, power, and signal integrity. Hybrid bonding removes these barriers by allowing dramatically higher interconnect and I/O density, supporting a broad range of chiplet architectures — from memory stacking to tighter compute‑memory integration.

As bonded structures like HBM stacks grow larger and more complex, warpage control, die placement, stack alignment, and thermal management become first‑order challenges. EPIC tackles these and other high‑value advanced‑packaging challenges through early, parallel co‑innovation across materials, integration, and manufacturing.

Bringing It All Together

Across logic, memory, and advanced packaging, our industry faces an ambitious roadmap that promises significant gains in energy efficiency for AI systems. But realizing that potential demands breakthrough materials innovation at a time when feature sizes are shrinking, interfaces are multiplying, and process interdependencies are escalating. These challenges cannot be solved on 10–15‑year timelines under the traditional relay‑race model. We must break down silos, align earlier across the ecosystem, and parallelize learning to keep pace with AI’s demands.

In the AI era, progress will be defined by the speed at which lightbulb moments turn into manufacturing and commercialization reality. The only viable path forward is a new innovation model — and EPIC is how we are driving it.

EPIC is a platform, an operating system for high-velocity co‑innovation that revolutionizes how ideas move from the lab to the fab.Applied Materials

EPIC is a platform, an operating system for high-velocity co‑innovation that revolutionizes how ideas move from the lab to the fab.Applied Materials

You must be logged in to post a comment Login