Getting AI agents to perform reliably in production — not just in demos — is turning out to be harder than enterprises anticipated. Fragmented data, unclear workflows, and runaway escalation rates are slowing deployments across industries.

“The technology itself often works well in demonstrations,” said Sanchit Vir Gogia, chief analyst with Greyhound Research. “The challenge begins when it is asked to operate inside the complexity of a real organization.”

Burley Kawasaki, who oversees agent deployment at Creatio, and his team have developed a methodology built around three disciplines: data virtualization to work around data lake delays; agent dashboards and KPIs as a management layer; and tightly bounded use-case loops to drive toward high autonomy.

In simpler use cases, Kawasaki says these practices have enabled agents to handle up to 80-90% of tasks on their own. With further tuning, he estimates they could support autonomous resolution in at least half of use cases, even in more complex deployments.

“People have been experimenting a lot with proof of concepts, they’ve been putting a lot of tests out there,” Kawasaki told VentureBeat. “But now in 2026, we’re starting to focus on mission-critical workflows that drive either operational efficiencies or additional revenue.”

Why agents keep failing in production

Enterprises are eager to adopt agentic AI in some form or another, often out of fear of being left behind, and sometimes before they have even identified tangible, real-world use cases. But they run into significant bottlenecks around data architecture, integration, monitoring, security, and workflow design.

The first obstacle almost always has to do with data, Gogia said. Enterprise information rarely exists in a neat or unified form; it is spread across SaaS platforms, apps, internal databases, and other data stores, some structured and some not.

But even when enterprises overcome the data retrieval problem, integration remains a big challenge. Agents rely on APIs and automation hooks to interact with applications, but many enterprise systems were designed long before this kind of autonomous interaction was a reality, Gogia pointed out.

This can result in incomplete or inconsistent APIs, and systems can respond unpredictably when accessed programmatically. Organizations also run into snags when they attempt to automate processes that were never formally defined, Gogia said.

“Many business workflows depend on tacit knowledge,” he said. That is, employees know how to resolve exceptions they’ve seen before without explicit instructions, but those missing rules and instructions become startlingly obvious when workflows are translated into automation logic.

The tuning loop

Creatio deploys agents in a “bounded scope with clear guardrails,” followed by an “explicit” tuning and validation phase, Kawasaki explained. Teams review initial outcomes, adjust as needed, then re-test until they’ve reached an acceptable level of accuracy.

That loop typically follows this pattern:

- Design-time tuning (before go-live): Performance is improved through prompt engineering, context wrapping, role definitions, workflow design, and grounding in data and documents.
- Human-in-the-loop correction (during execution): Devs approve, edit, or resolve exceptions. Where humans have to intervene most often (escalations or approvals), users establish stronger rules, provide more context, and update workflow steps, or they narrow the agent’s tool access.
- Ongoing optimization (after go-live): Devs continue to monitor exception rates and outcomes, then tune repeatedly as needed, helping to improve accuracy and autonomy over time.
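The review, adjust, and re-test cycle described above amounts to a loop that stops once accuracy on a fixed set of test cases clears a threshold. The sketch below is a minimal illustration under stated assumptions, not Creatio’s tooling: `run_agent`, `adjust`, the rule-table config, the 0.9 target, and the toy test cases are all hypothetical, and `adjust` merely simulates the human tuning step.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical shapes: a test case pairs an input with the expected outcome,
# and an agent "config" is a dict of settings (here, just a rule table).
TestCase = Tuple[str, str]
Config = Dict[str, dict]


def accuracy(run_agent: Callable[[Config, str], str], config: Config,
             cases: List[TestCase]) -> float:
    """Fraction of test cases where the agent's output matches the expected one."""
    hits = sum(1 for inp, expected in cases if run_agent(config, inp) == expected)
    return hits / len(cases)


def tune_until_acceptable(run_agent, adjust, config: Config, cases: List[TestCase],
                          target: float = 0.9, max_rounds: int = 5):
    """Test, review, adjust, re-test until accuracy reaches `target`.

    Returns the last evaluated config and the accuracy recorded each round."""
    history: List[float] = []
    for round_no in range(max_rounds):
        score = accuracy(run_agent, config, cases)
        history.append(score)
        if score >= target or round_no == max_rounds - 1:
            break
        # `adjust` stands in for human tuning: new rules, more context, etc.
        config = adjust(config, cases)
    return config, history


# Toy usage: the "agent" answers from its rule table and escalates otherwise.
def toy_agent(config: Config, inp: str) -> str:
    return config["rules"].get(inp, "escalate")


def toy_adjust(config: Config, cases: List[TestCase]) -> Config:
    # Simulates a reviewer adding one rule for the first failing case.
    for inp, expected in cases:
        if toy_agent(config, inp) != expected:
            return {"rules": {**config["rules"], inp: expected}}
    return config


cases = [("refund under $50", "approve"),
         ("refund over $5,000", "escalate"),
         ("address change", "approve")]
final_config, history = tune_until_acceptable(toy_agent, toy_adjust, {"rules": {}}, cases)
```

The loop only returns configs it has actually evaluated, so the last entry in `history` always describes the config that goes live.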

Kawasaki’s team applies retrieval-augmented generation to ground agents in enterprise knowledge bases, CRM data, and other proprietary sources.
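Retrieval-augmented generation fetches relevant passages and places them in the prompt so the model answers from them rather than from memory alone. The sketch below is a deliberately simplified stand-in: it ranks documents by word overlap instead of the embedding search a production system would typically use, and the document IDs, contents, and prompt wording are hypothetical.

```python
import re
from typing import Dict, List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: Dict[str, str], k: int = 2) -> List[str]:
    """IDs of up to k documents sharing the most words with the query."""
    q = tokenize(query)
    overlap = {doc_id: len(q & tokenize(text)) for doc_id, text in docs.items()}
    ranked = sorted(docs, key=lambda doc_id: overlap[doc_id], reverse=True)
    return [doc_id for doc_id in ranked[:k] if overlap[doc_id] > 0]


def grounded_prompt(query: str, docs: Dict[str, str], k: int = 2) -> str:
    """Prompt that restricts the model to the retrieved, ID-tagged sources."""
    context = "\n".join(f"[{d}] {docs[d]}" for d in retrieve(query, docs, k))
    return ("Answer using only the sources below and cite their IDs. "
            "If they do not contain the answer, say so.\n"
            f"{context}\n\nQuestion: {query}")


# Toy knowledge base mixing a policy document and a CRM note (hypothetical).
docs = {
    "kb-1": "Renewal notices are sent 60 days before the contract end date.",
    "crm-7": "Customer Acme has a contract ending in June.",
    "kb-2": "Support hours are 9 to 5 on weekdays.",
}
prompt = grounded_prompt("When are renewal notices sent?", docs, k=1)
```

Tagging each passage with its source ID is what lets reviewers trace an answer back to an approved document.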

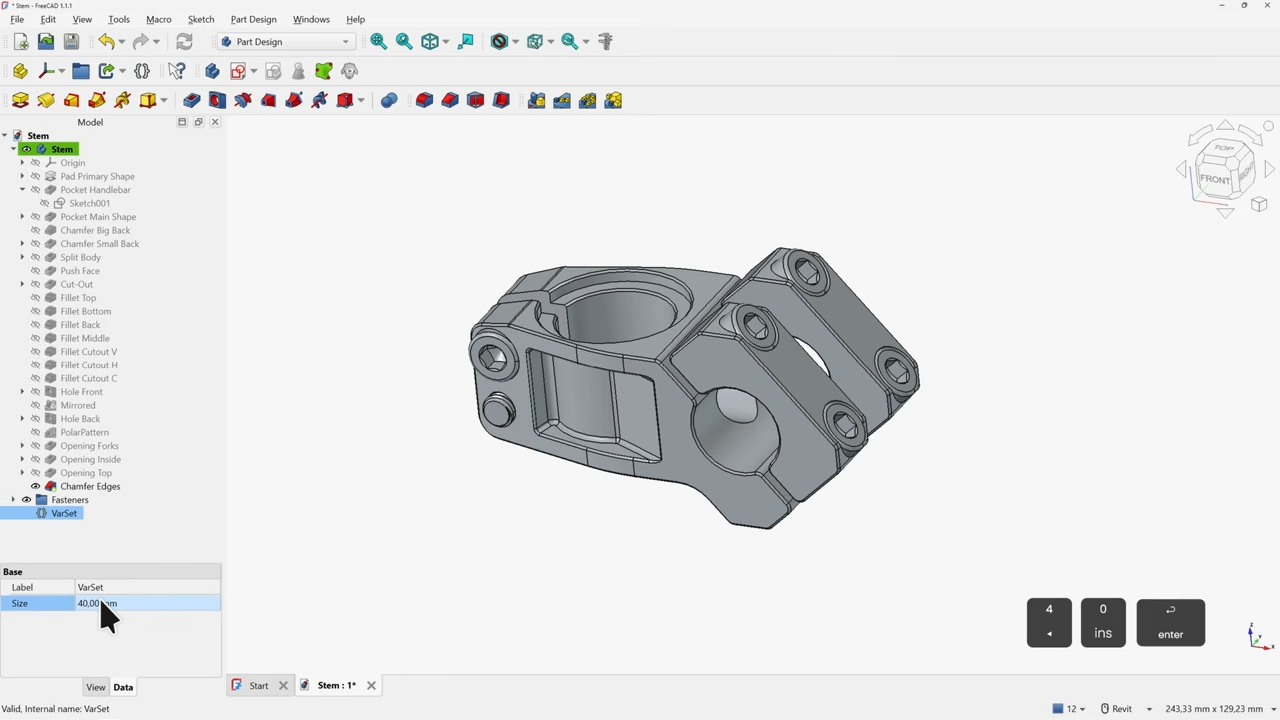

Once agents are deployed in the wild, they are monitored with a dashboard providing performance analytics, conversion insights, and auditability. Essentially, agents are treated like digital workers, with their own management layer of dashboards and KPIs.
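Treating agents as digital workers implies tracking them with worker-style KPIs. As a hedged illustration, the sketch computes per-agent task counts, autonomous-resolution rates, and escalation rates from a list of task outcomes; the record format, outcome labels, and agent names are assumptions, not Creatio’s schema.

```python
from collections import Counter, defaultdict
from typing import DefaultDict, Dict, Iterable, Tuple

# Hypothetical task record: (agent name, outcome), where outcome is
# "resolved" (finished autonomously), "escalated" (handed to a human), or "failed".
Record = Tuple[str, str]


def agent_kpis(records: Iterable[Record]) -> Dict[str, Dict[str, float]]:
    """Per-agent task count, autonomous-resolution rate, and escalation rate."""
    counts: DefaultDict[str, Counter] = defaultdict(Counter)
    for agent, outcome in records:
        counts[agent][outcome] += 1
    kpis: Dict[str, Dict[str, float]] = {}
    for agent, c in counts.items():
        total = sum(c.values())
        kpis[agent] = {
            "tasks": total,
            "autonomy_rate": c["resolved"] / total,    # Counter returns 0 if absent
            "escalation_rate": c["escalated"] / total,
        }
    return kpis


records = ([("onboarding", "resolved")] * 8 + [("onboarding", "escalated")] * 2
           + [("renewals", "resolved"), ("renewals", "failed")])
dashboard = agent_kpis(records)
```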

For instance, an onboarding agent is surfaced through a standard dashboard interface that provides agent monitoring and telemetry. This is part of the platform layer (orchestration, governance, security, workflow execution, monitoring, and UI embedding) that sits “above the LLM,” Kawasaki said.

Users see a dashboard of agents in use and each of their processes, workflows, and executed results. They can “drill down” into an individual record (like a referral or renewal) that shows a step-by-step execution log and related communications to support traceability, debugging, and agent tweaking. The most common adjustments involve logic and incentives, business rules, prompt context, and tool access, Kawasaki said.
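A drill-down of this kind depends on each record carrying an ordered, timestamped execution log. The following is a minimal sketch of such a log, assuming hypothetical step names and a made-up record ID; it is not the platform’s actual data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Step:
    name: str
    detail: str
    at: datetime


@dataclass
class ExecutionLog:
    record_id: str  # e.g. a referral or renewal record (hypothetical ID)
    steps: List[Step] = field(default_factory=list)

    def log(self, name: str, detail: str) -> None:
        """Append a UTC-timestamped step in execution order."""
        self.steps.append(Step(name, detail, datetime.now(timezone.utc)))

    def first(self, name: str) -> Optional[Step]:
        """First step with the given name, e.g. to debug why an escalation fired."""
        return next((s for s in self.steps if s.name == name), None)

    def trace(self) -> List[str]:
        """Numbered, human-readable view of the steps for drill-down."""
        return [f"{i}. {s.name}: {s.detail}" for i, s in enumerate(self.steps, 1)]


log = ExecutionLog("renewal-1042")
log.log("retrieve", "loaded contract and account history")
log.log("draft", "generated renewal email")
log.log("escalate", "renewal date missing in CRM")
```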

The biggest issues that come up post-deployment:

- Exception-handling volume: Early spikes in edge cases are common until guardrails and workflows are tuned.
- Data quality and completeness: Missing or inconsistent fields and documents can cause escalations; teams can identify which data to prioritize for grounding and which checks to automate.
- Auditability and trust: Regulated customers in particular require clear logs, approvals, role-based access control (RBAC), and audit trails.

“We always explain that you have to allocate time to train agents,” Creatio’s CEO Katherine Kostereva told VentureBeat. “It doesn’t happen immediately when you switch on the agent, it needs time to understand fully, then the number of mistakes will decrease.”

“Data readiness” doesn’t always require an overhaul

When enterprises look to deploy agents, “Is my data ready?” is a common early question. They know data access is important, but the prospect of a massive data consolidation project can put them off.

But virtual connections can give agents access to underlying systems and sidestep the delays typical of data lake, lakehouse, or warehouse projects. Kawasaki’s team built a platform that integrates with those underlying data sources and is now working on an approach that will pull data into a virtual object, process it, and use it like a standard object for UIs and workflows. This way, they don’t have to “persist or duplicate” large volumes of data in their database.
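One way to picture a virtual object is as an object that reads its fields from the source system at access time instead of copying rows into the platform’s own database. The sketch below illustrates that general idea under loudly stated assumptions; it is not Creatio’s implementation, and the `fetch` callable is a hypothetical stand-in for whatever connector queries the system of record.

```python
from typing import Any, Callable, Dict


class VirtualObject:
    """Exposes source-system data through attribute access without storing it.

    `fetch` is a hypothetical connector returning the current record for a key."""

    def __init__(self, key: str, fetch: Callable[[str], Dict[str, Any]]):
        self._key = key
        self._fetch = fetch

    def __getattr__(self, name: str) -> Any:
        # Invoked only for attributes not found on the instance: read through
        # to the source on every access, so nothing is persisted or duplicated.
        record = self._fetch(self._key)
        if name not in record:
            raise AttributeError(name)
        return record[name]


# Usage with an in-memory dict standing in for a core banking system.
source = {"acct-1": {"balance": 100, "currency": "USD"}}
account = VirtualObject("acct-1", lambda key: source[key])
first_read = account.balance
source["acct-1"]["balance"] = 250  # the source of truth changes...
second_read = account.balance      # ...and the next read reflects it
```

The trade-off is latency: every read hits the source system, which is why a real design would likely add caching or batching where freshness allows.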

This technique can be helpful in areas like banking, where transaction volumes are simply too large to copy into a CRM but are “still valuable for AI analysis and triggers,” Kawasaki said.

Once integrations and virtual objects are established, teams can evaluate data completeness, consistency, and availability, and identify low-friction starting points (like document-heavy or unstructured workflows).
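A first pass at evaluating completeness can be as simple as measuring, per field, the share of records in which a value is present. A minimal sketch, assuming records arrive as dictionaries, that `None` and empty strings count as missing, and that the field names and 95% threshold are hypothetical:

```python
from typing import Any, Dict, List


def completeness(records: List[Dict[str, Any]], fields: List[str]) -> Dict[str, float]:
    """Share of records in which each field is present and non-empty."""
    if not records:
        return {f: 0.0 for f in fields}

    def present(value: Any) -> bool:
        return value is not None and value != ""

    n = len(records)
    return {f: sum(present(r.get(f)) for r in records) / n for f in fields}


def weakest_fields(report: Dict[str, float], threshold: float = 0.95) -> List[str]:
    """Fields below the threshold: candidates for cleanup or automated checks
    before agents are grounded on them."""
    return sorted(f for f, share in report.items() if share < threshold)


customers = [
    {"name": "Acme", "tax_id": "12-345", "email": ""},
    {"name": "Globex", "tax_id": None, "email": "ops@globex.example"},
    {"name": "Initech", "email": "it@initech.example"},
    {"name": "Umbrella", "tax_id": "98-765", "email": "x@umbrella.example"},
]
report = completeness(customers, ["name", "tax_id", "email"])
```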

Kawasaki emphasized the importance of “really using the data in the underlying systems, which tends to actually be the cleanest or the source of truth anyway.”

Matching agents to the work

The best candidates for autonomous (or near-autonomous) agents are high-volume workflows with “clear structure and controllable risk,” Kawasaki said. Examples include document intake and validation in onboarding or loan preparation, and standardized outreach such as renewals and referrals.

“Especially when you can link them to very specific processes inside an industry — that’s where you can really measure and deliver hard ROI,” he said.

Financial institutions, for example, are often siloed by nature: Commercial lending teams operate in their own environment, wealth management in another. An autonomous agent, however, can look across departments and separate data stores to identify commercial customers who might be good candidates for wealth management or advisory services.

“You think it would be an obvious opportunity, but no one is looking across all the silos,” Kawasaki said. Some banks that have applied agents to this very scenario have seen “benefits of millions of dollars of incremental revenue,” he claimed, without naming specific institutions.
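At its simplest, the cross-silo opportunity amounts to joining customer lists that live in separate systems. The hedged sketch below flags commercial-lending customers above a hypothetical deposit threshold who have no wealth-management relationship; the data, the threshold, and the assumption that both systems share a customer key are all illustrative.

```python
from typing import Dict, List, Set


def wealth_candidates(lending: Dict[str, float], wealth_clients: Set[str],
                      min_deposits: float = 1_000_000) -> List[str]:
    """Commercial customers with large deposits but no wealth relationship.

    Assumes (hypothetically) that both silos identify customers by the same key."""
    return sorted(customer for customer, deposits in lending.items()
                  if deposits >= min_deposits and customer not in wealth_clients)


# Toy data from two siloed systems.
lending_book = {"Acme Corp": 2_500_000, "Globex": 400_000, "Initech": 1_200_000}
wealth_book = {"Initech"}
candidates = wealth_candidates(lending_book, wealth_book)
```

In practice, matching the same customer across systems with different identifiers is often the harder step; the shared-key assumption here sidesteps it.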

In other cases, however, particularly in regulated industries, longer-context agents are not only preferable but necessary. This applies to multi-step tasks such as gathering evidence across systems, summarizing, comparing, drafting communications, and producing auditable rationales.

“The agent isn’t giving you a response immediately,” Kawasaki said. “It may take hours, days, to complete full end-to-end tasks.”

This requires orchestrated agentic execution rather than a “single giant prompt,” he said. This approach breaks work down into deterministic steps performed by sub-agents, with memory and context maintained across steps and time intervals. Grounding with RAG helps keep outputs tied to approved sources, and users can choose to extend that grounding to file shares and other document repositories.
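The contrast with a single giant prompt can be sketched as a fixed sequence of steps, each handled by its own sub-agent, that read from and write to a shared memory carried between runs. In this minimal sketch the sub-agents are plain Python functions standing in for LLM calls, and the step names, memory keys, and resume behavior are hypothetical design choices rather than Creatio’s orchestration engine.

```python
from typing import Callable, Dict, List, Tuple

Memory = Dict[str, object]
SubAgent = Callable[[Memory], Memory]


def orchestrate(steps: List[Tuple[str, SubAgent]], memory: Memory) -> Memory:
    """Run sub-agents in a fixed order over shared memory.

    Each sub-agent returns new entries that are merged in for later steps.
    Steps listed in memory["completed"] are skipped, so a task paused for
    hours or days can resume where it left off."""
    memory = dict(memory)
    done = list(memory.get("completed", []))
    for name, agent in steps:
        if name in done:
            continue  # finished in an earlier run
        memory.update(agent(memory))
        done.append(name)
        memory["completed"] = list(done)  # persisted progress marker
    return memory


# Stand-in sub-agents (hypothetical).
def gather(mem: Memory) -> Memory:
    return {"evidence": ["invoice 42 unpaid", "contract renews 2026-07-01"]}


def summarize(mem: Memory) -> Memory:
    return {"summary": "; ".join(mem["evidence"])}


def draft(mem: Memory) -> Memory:
    return {"draft": f"Dear customer, our records show: {mem['summary']}."}


pipeline = [("gather", gather), ("summarize", summarize), ("draft", draft)]
partial = orchestrate(pipeline[:2], {"case_id": "C-1"})  # paused after two steps
final = orchestrate(pipeline, partial)                   # resumes with "draft" only
```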

This model typically doesn’t require custom retraining or a new foundation model. Whatever model enterprises use (GPT, Claude, Gemini), performance improves through prompts, role definitions, controlled tools, workflows, and data grounding, Kawasaki said.

The feedback loop puts “extra emphasis” on intermediate checkpoints, he said. Humans review intermediate artifacts (such as summaries, extracted facts, or draft recommendations) and correct errors. Those corrections can then be converted into better rules and retrieval sources, narrower tool scopes, and improved templates.
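Intermediate checkpoints can be modeled as pauses after selected steps, where a reviewer either accepts the artifact or corrects it before later steps consume it. Each correction is kept so it can later feed rules or templates. A minimal sketch; the `review` callback simulates a human reviewer, and the steps and amounts are hypothetical.

```python
from typing import Callable, Dict, List, Set, Tuple

Artifact = str
Step = Callable[[Artifact], Artifact]
Reviewer = Callable[[str, Artifact], Artifact]


def run_with_checkpoints(steps: List[Tuple[str, Step]], start: Artifact,
                         review: Reviewer, checkpoints: Set[str]
                         ) -> Tuple[Artifact, List[Dict[str, str]]]:
    """Chain steps; at checkpoint steps a reviewer may replace the output.

    Returns the final artifact and a log of corrections for later tuning."""
    corrections: List[Dict[str, str]] = []
    artifact = start
    for name, step in steps:
        artifact = step(artifact)
        if name in checkpoints:
            reviewed = review(name, artifact)
            if reviewed != artifact:
                corrections.append({"step": name, "before": artifact, "after": reviewed})
                artifact = reviewed
    return artifact, corrections


steps = [
    ("extract", lambda text: text.split()[-1]),  # naive: takes the last figure
    ("recommend", lambda amount: f"Approve credit line of {amount}"),
]
# Simulated human catches the extraction error before the recommendation step.
reviewer = lambda step, art: "$50,000" if step == "extract" else art
result, fixes = run_with_checkpoints(
    steps, "Requested $50,000, previously $5,000", reviewer, {"extract"})
```

Because the correction happens at the checkpoint, the downstream recommendation is built on the fixed figure rather than the extraction error.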

“What is important for this style of autonomous agent, is you mix the best of both worlds: The dynamic reasoning of AI, with the control and power of true orchestration,” Kawasaki said.

Ultimately, agents require coordinated changes across enterprise architecture, new orchestration frameworks, and explicit access controls, Gogia said. Agents must be assigned identities to restrict their privileges and keep them within bounds. Observability is critical; monitoring tools can record task completion rates, escalation events, system interactions, and error patterns. This kind of evaluation must be a permanent practice, and agents should be tested to see how they react when encountering new scenarios and unusual inputs.

“The moment an AI system can take action, enterprises have to answer several questions that rarely appear during copilot deployments,” Gogia said. Among them: What systems is the agent allowed to access? What types of actions can it perform without approval? Which activities must always require a human decision? How will every action be recorded and reviewed?
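Gogia’s questions map naturally onto a per-agent policy that is checked before every action. The sketch below is one hedged way to encode them: an agent identity with an allow-list of systems, actions permitted without approval, actions that always require a human, and an append-only audit record of each decision. All identities, systems, and action names are hypothetical, and defaulting unlisted actions to human approval is a design choice, not something the source prescribes.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str                        # the agent's own identity, not a shared account
    systems: FrozenSet[str]              # which systems it may access
    autonomous_actions: FrozenSet[str]   # allowed without approval
    human_only: FrozenSet[str]           # always require a human decision


@dataclass
class Gatekeeper:
    audit: List[Tuple[str, str, str, str]] = field(default_factory=list)

    def check(self, policy: AgentPolicy, system: str, action: str) -> str:
        """Return 'allow', 'needs_approval', or 'deny', and record the decision."""
        if system not in policy.systems:
            decision = "deny"
        elif action in policy.human_only:
            decision = "needs_approval"
        elif action in policy.autonomous_actions:
            decision = "allow"
        else:
            decision = "needs_approval"  # anything unlisted goes to a human
        self.audit.append((policy.agent_id, system, action, decision))
        return decision


policy = AgentPolicy(
    agent_id="agent:renewals-01",
    systems=frozenset({"crm", "email"}),
    autonomous_actions=frozenset({"read_record", "draft_email"}),
    human_only=frozenset({"send_contract", "issue_refund"}),
)
gate = Gatekeeper()
decisions = [
    gate.check(policy, "crm", "read_record"),
    gate.check(policy, "email", "send_contract"),
    gate.check(policy, "billing", "issue_refund"),
]
```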

“Those [enterprises] that underestimate the challenge often find themselves stuck in demonstrations that look impressive but cannot survive real operational complexity,” Gogia said.
