Tech

How AI Assistants Are Moving the Security Goalposts

An anonymous reader quotes a report from KrebsOnSecurity: AI-based assistants or “agents” — autonomous programs that have access to the user’s computer, files, online services and can automate virtually any task — are growing in popularity with developers and IT workers. But as so many eyebrow-raising headlines over the past few weeks have shown, these powerful and assertive new tools are rapidly shifting the security priorities for organizations, while blurring the lines between data and code, trusted co-worker and insider threat, ninja hacker and novice code jockey.

The new hotness in AI-based assistants — OpenClaw (formerly known as ClawdBot and Moltbot) — has seen rapid adoption since its release in November 2025. OpenClaw is an open-source autonomous AI agent designed to run locally on your computer and proactively take actions on your behalf without needing to be prompted. If that sounds like a risky proposition or a dare, consider that OpenClaw is most useful when it has complete access to your entire digital life, where it can then manage your inbox and calendar, execute programs and tools, browse the Internet for information, and integrate with chat apps like Discord, Signal, Teams or WhatsApp.

Other more established AI assistants like Anthropic’s Claude and Microsoft’s Copilot also can do these things, but OpenClaw isn’t just a passive digital butler waiting for commands. Rather, it’s designed to take the initiative on your behalf based on what it knows about your life and its understanding of what you want done. “The testimonials are remarkable,” the AI security firm Snyk observed. “Developers building websites from their phones while putting babies to sleep; users running entire companies through a lobster-themed AI; engineers who’ve set up autonomous code loops that fix tests, capture errors through webhooks, and open pull requests, all while they’re away from their desks.” You can probably already see how this experimental technology could go sideways in a hurry. […] Last month, Meta AI safety director Summer Yue said OpenClaw unexpectedly started mass-deleting messages in her email inbox, despite instructions to confirm those actions first. She wrote: “Nothing humbles you like telling your OpenClaw ‘confirm before acting’ and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb.”

Krebs also noted the many misconfigured OpenClaw installations users had set up, leaving their administrative dashboards publicly accessible online. According to pentester Jamieson O’Reilly, “a cursory search revealed hundreds of such servers exposed online.” When those exposed interfaces are accessed, attackers can retrieve the agent’s configuration and sensitive credentials. O’Reilly warned attackers could access “every credential the agent uses — from API keys and bot tokens to OAuth secrets and signing keys.”

“You can pull the full conversation history across every integrated platform, meaning months of private messages and file attachments, everything the agent has seen,” O’Reilly added. “And because you control the agent’s perception layer, you can manipulate what the human sees. Filter out certain messages. Modify responses before they’re displayed.”
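The misconfiguration described above comes down to a dashboard listening on an address that other machines can reach. As a minimal sketch of how an operator might sanity-check that, the Python snippet below tests whether a bind address is loopback-only. The function name `is_exposed_bind` and the sample addresses are hypothetical and are not taken from OpenClaw or from the report, and "exposed" here means reachable from any other machine, not necessarily from the public Internet.

```python
import ipaddress


def is_exposed_bind(host: str) -> bool:
    """Return True if binding to `host` would accept non-local connections.

    Only literal IP addresses and the name "localhost" are handled; any other
    hostname is treated as exposed so the check fails closed.
    """
    cleaned = host.strip()
    if cleaned.lower() == "localhost":
        return False
    try:
        addr = ipaddress.ip_address(cleaned)
    except ValueError:
        return True  # unrecognized hostname: assume the worst
    # Loopback addresses (127.0.0.0/8 and ::1) are reachable only from this machine.
    return not addr.is_loopback


if __name__ == "__main__":
    # Hypothetical bind addresses, for illustration only.
    for candidate in ("127.0.0.1", "::1", "0.0.0.0", "192.168.1.20"):
        status = "exposed" if is_exposed_bind(candidate) else "local only"
        print(f"{candidate}: {status}")
```

A check like this only covers the listening address. Firewalls, reverse proxies and port forwarding can still expose a loopback-bound service, so it should be read as one signal rather than a full audit.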

Tech

Why the Wyze Cam Pan V4 4K Security Camera Earns a Spot on Your Wall or Desk

Security cameras have become commonplace in many neighborhoods, but their footage frequently leaves us scratching our heads and wondering what actually happened. That’s where the Wyze Cam Pan V4, priced at $45 (was $60), comes in: its straightforward goal is to provide footage that still makes sense later on. The camera records in sharp 4K (3840 by 2160 pixels per frame), so you can expect clear images of faces, clothes, license plates, and so on.

Movement tracking feels just as practical, with a 360-degree pan and 180-degree tilt that let one camera scan a whole room or yard with no blind spots. The base glides smoothly and the head tilts up and down on demand, and you can define ‘waypoints’ that make the camera sweep over crucial locations at set intervals. The AI tracking adds another dimension by following people, dogs, and vehicles while ignoring things like shifting leaves. Tests show that it can detect a person crossing the driveway and keep them in view long enough to see all of their features and actions.

Night vision doesn’t let you down either: conventional infrared provides a basic view, and the built-in flashlight switches on when motion is detected so the scene is recorded in full color. A built-in starlight sensor helps keep the image from becoming hazy even before the light turns on. Clips shot in the evening or at night are just as clear as those made during the day, and users who put the camera near the front entrance or garage were able to identify delivery drivers and visitors without having to guess who they were.

Storage is versatile and inexpensive: simply insert a microSD card (up to 512GB) and the camera will record continuously. The good news is that there is no monthly fee for this option, and a card holds several weeks of footage depending on the size you choose. The Wyze app is also simple to use: you can scroll through your timeline and use quick filters for event replay. With two-way audio, you can quickly talk to the person being recorded and even sound a loud siren if you notice something wrong.
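How long a card lasts depends on how much data the camera writes per second, which the review does not state. The rough Python sketch below shows the arithmetic under an assumed average bitrate. The function name `days_of_recording` and the sample bitrates are hypothetical placeholders, not Wyze specifications.

```python
def days_of_recording(card_gb: float, bitrate_mbps: float) -> float:
    """Estimate days of continuous recording that fit on a card.

    card_gb      -- labeled capacity in decimal gigabytes (1 GB = 10**9 bytes)
    bitrate_mbps -- ASSUMED average video bitrate in megabits per second;
                    the review gives no figure for this camera
    """
    if card_gb <= 0 or bitrate_mbps <= 0:
        raise ValueError("capacity and bitrate must be positive")
    # Megabits per second -> bytes per second -> bytes per day.
    bytes_per_day = bitrate_mbps * 1_000_000 / 8 * 86_400
    return card_gb * 1_000_000_000 / bytes_per_day


if __name__ == "__main__":
    for bitrate in (2.0, 4.0, 8.0):  # hypothetical bitrates, not Wyze specs
        days = days_of_recording(512, bitrate)
        print(f"{bitrate} Mbps -> {days:.1f} days on a 512 GB card")
```

The estimate is an upper bound, since usable capacity is somewhat lower than labeled capacity. Doubling the bitrate halves the recording time, so "several weeks" only holds for modest average bitrates.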

Setup is fast: simply plug in the 6ft USB-C cable, connect to Wi-Fi, and follow the app steps via Bluetooth. It works equally well indoors and outside when combined with the optional outdoor power adaptor, and its IP65 rating lets it withstand some rain and dust. It can also withstand temperatures ranging from minus 10 to plus 40 degrees, so you can place it anywhere without worrying about it melting or freezing.

Tech

Are Employers Using Your Data To Figure Out the Lowest Salary You’ll Accept?

MarketWatch looks at “surveillance wages,” pay rates “based not on an employee’s performance or seniority, but on formulas that use their personal data, often collected without employees’ knowledge.”

According to Nina DiSalvo, policy director at labor advocacy group Towards Justice, some systems use signals associated with financial vulnerability — including data on whether a prospective employee has taken out a payday loan or has a high credit-card balance — to infer the lowest pay a candidate might accept. Companies can also scrape candidates’ public personal social-media pages, she said…

A first-of-its-kind audit of 500 labor-management artificial-intelligence companies by Veena Dubal, a law professor at University of California, Irvine, and Wilneida Negrón, a tech strategist, found that employers in the healthcare, customer service, logistics and retail industries are customers of vendors whose tools are designed to enable this practice. Published by the Washington Center for Equitable Growth, a progressive economic think tank, the August 2025 report… does not claim that all employers using these systems engage in algorithmic wage surveillance. Instead, it warns that the growing use of algorithmic tools to analyze workers’ personal data can enable pay practices that prioritize cost-cutting over transparency or fairness…

Surveillance wages don’t stop at the hiring stage — they follow workers onto the job, too. The vendors that provide such services also offer tools that are built to set bonus or incentive compensation, according to the report. These tools track their productivity, customer interactions and real-time behavior — including, in some cases, audio and video surveillance on the job. Nearly 70% of companies with more than 500 employees were already using employee-monitoring systems in 2022, such as software that monitors computer activity, according to a survey from the International Data Corporation. “The data that they have about you may allow an algorithmic decision system to make assumptions about how much, how big of an incentive, they need to give to a particular worker to generate the behavioral response they seek,” DiSalvo said.

The article notes that Colorado introduced the “Prohibit Surveillance Data to Set Prices and Wages Act” to ban companies from setting pay rates with algorithms that use payday-loan history, location data or Google search behavior.

Thanks to long-time Slashdot reader sinij for sharing the article.

Tech

Ursula K. Le Guin’s blog has been turned into a podcast

For those who will never tire of the words of Ursula K. Le Guin, a special treat is on the way. The esteemed late author’s blog, which she started in 2010 at the age of 81, is being rereleased as a podcast, In Your Spare Time. Le Guin’s blog ran until 2017, and a book collecting a selection of those posts was published that year. But the podcast will include everything: essays, poems and “even the ones that are mostly cat pictures,” according to the announcement. The first episode will be released April 8 on Apple Podcasts, Spotify and other platforms.

From Le Guin’s official Instagram account, which is managed by her estate:

We always wanted to hear a version of the blog that includes every single post, even the ones that are mostly cat pictures. So for the next two years and change, we’ll release an episode every Wednesday. Each episode features a different reader of Ursula’s text, and each reader adds their own thoughts—about their relationship with Ursula and her work, or about the specific topic of the post, or whatever catches their fancy.

A trailer is available ahead of the first episode’s release this week. Post zero, “A Note at the Beginning,” will be read by David Mitchell.

Tech

The Social Media Addiction Verdicts Are Built On A Scientific Premise That Experts Keep Telling Us Is Wrong

from the we-keep-seeing-this-over-and-over dept

Last week, I wrote about why the social media addiction verdicts against Meta and YouTube should worry anyone who cares about the open internet. The short version: plaintiffs’ lawyers found a clever way to recharacterize editorial decisions about third-party content as “product design defects,” effectively gutting Section 230 without anyone having to repeal it. The legal theory will be weaponized against every platform on the internet, not just the ones you hate. And the encryption implications of the New Mexico decision alone should terrify everyone. You can read that post for more details on the legal arguments.

But there’s a separate question lurking underneath the legal one that deserves its own attention: is the scientific premise behind all of this even right? Are these platforms actually causing widespread harm to kids? Is “social media addiction” a real thing that justifies treating Instagram like a pack of Marlboros? We’ve covered versions of this debate in the past, mostly looking at studies. But there are other forms of expert analysis as well.

Long-time Techdirt reader and commenter Leah Abram pointed us to a newsletter from Dr. Katelyn Jetelina and Dr. Jacqueline Nesi that digs into exactly this question with the kind of nuance that’s been almost entirely absent from the mainstream coverage. Jetelina runs the widely read “Your Local Epidemiologist” newsletter, and Nesi is a clinical psychologist and professor at Brown who studies technology’s effects on young people.

And what they’re saying lines up almost perfectly with what we’ve been saying here at Techdirt for years, often to enormous pushback: social media does not appear to be inherently harmful to children. What appears to be true is that there is a small group of kids for whom it’s genuinely problematic. And the interventions that would actually help those kids look nothing like the blanket bans and sweeping product liability lawsuits that politicians and trial lawyers are currently pursuing. And those broad interventions do real harm to many more people, especially those who are directly helped by social media.

Let’s start with the “addiction” question, since that’s the framework on which these verdicts were built. Here’s Nesi:

There is much debate in psychology about whether social media use (or, really, any non-substance-using behavior outside of gambling) can be called an “addiction.” There is no clear neurological or diagnostic criteria, like a blood test, to make this easy, so it’s up for debate:

- On one hand, some researchers argue that compulsive social media use shares enough features (loss of control, withdrawal-like symptoms, continued use despite harm) to warrant the diagnosis for treatment.

- Others say the evidence for true neurological dependency is still weak and inconsistent because research relies on self-reported data, findings haven’t been replicated, and many heavy users don’t show true clinical impairment without pre-existing issues.

Her bottom line is measured and careful in a way that you almost never hear from the politicians and lawyers who claim to be acting on behalf of children:

Here’s my current take: There are a small number of people whose social media use is so extreme that it causes significant impairment in their lives, and they are unable to stop using it despite that impairment. And for those people, maybe addiction is the right word.

For the vast majority of people (and kids) using social media, though, I do not think addiction is the right word to use.

That’s a leading expert on technology and adolescent mental health, someone who has personally worked with hospitalized suicidal teenagers, telling you that for the vast majority of kids, “addiction” is the wrong word. And she has a specific, evidence-based reason for why that distinction matters — one that should be of particular interest to anyone who actually wants platforms held accountable for the kids who are being harmed.

Nesi argues that overusing the addiction label doesn’t just lack scientific precision. It actively weakens the case for meaningful platform accountability:

Preserving the precision of the addiction label — reserving it for the small number of kids whose use is genuinely compulsive and impairing — actually strengthens the case for platform accountability, rather than weakening it. It’s that targeted claim that has driven legal action and regulatory pressure. Expanding it to average use shifts focus from systemic design fixes to individual diagnosis, and dilutes the very argument that holds platforms responsible.

This is a vital point that runs counter to the knee-jerk reactions of both the trial lawyers and the moral panic crowd. If you say every kid using social media is an addict, you’ve made the concept of addiction meaningless, and you’ve made it dramatically harder to identify and help the kids who are actually suffering. You’ve also given platforms an easy out: if everyone’s addicted, then it’s just a feature of how humans interact with technology, and nobody is specifically responsible for anything. Precision is what creates accountability. Vagueness destroys it.

We highlighted something similar back in January, when a study published in Nature’s Scientific Reports found that simply priming people to think about their social media use in addiction terms — such as using language from the U.S. Surgeon General’s report — reduced their own perceived control, increased their self-blame, and made them recall more failed attempts to change their behavior. The addiction framing itself was creating a feeling of helplessness that made it harder for people to change their habits. As the researchers in that study put it:

It is impressive that even the two-minute exposure to addiction framing in our research was sufficient to produce a statistically significant negative impact on users. This effect is aligned with past literature showing that merely seeing addiction scales can negatively impact feelings of well-being. Presumably, continued exposure to the broader media narrative around social media addiction has even larger and more profound effects.

So we’re stuck with a situation where the dominant public narrative — “social media is addicting our children” — appears to be both scientifically imprecise and actively counterproductive for the people it claims to help. That’s a real problem. And it would be nice if the moral panic crowd would start to recognize the damage they’re doing.

None of this means there are no risks. Nesi is quite clear about that, drawing on her own clinical work:

A few years ago, I ran a study with adolescents experiencing suicidal thoughts in an inpatient hospital unit. Many of the patients I spoke to had complex histories of abuse, neglect, bullying, poverty, and other major stressors. Some of these patients used social media in totally benign, unremarkable ways. A few of them, though, were served with an endless feed of suicide-related posts and memes, some romanticizing or minimizing suicide. For those patients, it would be very hard to argue that social media did not contribute to their symptoms, even with everything else going on in their lives.

Nobody who has paid serious attention to this issue disputes that. There are kids for whom social media is a contributing factor in genuine mental health crises. The question has always been whether that reality justifies treating social media as an inherently dangerous product that harms all children — the premise on which these lawsuits and legislative bans are built.

The evidence consistently says no. When it comes to whether social media actually causes mental health issues, the newsletter is direct:

The scientific community has substantial correlational evidence and some, but not much, causal evidence of harm. Studies that randomly assigned people to stop using social media show mixed results, depending on how long they stopped, whether they quit entirely or just reduced use, and what they were using it for.

And:

It is still the case that if you take an average, healthy teen and give them social media, this is highly unlikely to create a mental illness.

This is consistent with what we’ve been reporting on for years, including two massive studies covering 125,000 kids that found either a U-shaped relationship (where moderate use was associated with the best outcomes and no use was sometimes worse than heavy use) or flat-out zero causal effect on mental health. Every time serious researchers go looking for the inherent-harm story that politicians keep telling, they come up empty.

One of the most fascinating details in the newsletter is the Costa Rica comparison. Costa Rica ranks #4 in the 2026 World Happiness Report. Its residents use just as much social media as Americans. And yet:

It doesn’t necessarily have fewer mental illnesses. And it certainly doesn’t have less social media use. What it has is a deep social fabric, and that may mean social media use reinforces real-world connections in Costa Rica, whereas in English-speaking countries, it may be replacing them.

In other words, cultural factors appear to be protective. The underlying challenges to social foundations — trust, connection, belonging, and safety — are what drive happiness. Friendships, being known by someone, the sense that you belong somewhere: these are the actual load-bearing pillars of mental health, more predictive of wellbeing than income, and more protective against mental illness than almost any intervention we have.

If social media were inherently harmful — if the “addictive design” of infinite scroll and autoplay and algorithmic recommendations were the core problem — Costa Rica would be suffering the same outcomes as the United States. They have the same platforms, same features, and same engagement mechanics. What actually differs is the strength of the social fabric, not the tools themselves.

This is similar to a point I raised in my review of Jonathan Haidt’s book two years ago. If you go past his cherry-picked data, you can find tons of countries with high social media use where rates of depression and suicide have gone down. There are clearly many other factors at work here, and little evidence that social media is a key factor at all.

That realization completely changes how we should think about policy. If the problem is weak social foundations — not enough connection, not enough belonging, not enough adults showing up for kids — then banning social media or suing platforms into submission won’t fix it. You’ll have addressed the wrong variable. And in the process, you’ll have made the platforms worse for the many kids (including LGBTQ+ teens in hostile communities, kids with rare diseases, teens in rural areas) who rely on them for the connection and community that their physical environment doesn’t provide.

Nesi’s column has some practical advice that is pretty different from what that best-selling book might tell you:

If you know your teen is vulnerable, perhaps due to existing mental health challenges or social struggles, you may want to be extra careful.

If your teen is using social media in moderation, and it does not seem to be affecting them negatively, it probably isn’t.

That sounds so obvious it feels almost silly to type out. And yet it is the exact opposite of the approach we see in the lawsuits and bans currently dominating the policy landscape, which assume social media is a universally dangerous product requiring universal restrictions.

The newsletter closes with a key line that highlights the nuance that so many people ignore:

Social media may be one piece of the puzzle, but it’s certainly not the whole thing.

We’ve been making this point at Techdirt for a long time now, often in the face of considerable hostility from people who are deeply invested in the simpler narrative. I’ve written about Danah Boyd’s useful framework of understanding the differences between risks and harms, and how moral panics confuse those two things. I’ve covered so many studies that find no causal link that I’ve lost count. I’ve pointed out how the “addiction” framing may be doing more damage than the platforms themselves.

That’s why it’s encouraging to see credentialed, independent researchers — people who work directly with the most vulnerable kids — end up in the same place through their own work. Because this conversation desperately needs more voices willing to acknowledge both realities: that some kids are genuinely harmed and need targeted help, and that the sweeping narrative of universal social media harm is not supported by the science and leads to policy responses that may hurt far more people than they help.

The kids who are in that small, genuinely vulnerable group deserve interventions designed for them — better mental health funding and access along with better identification of at-risk youth. What they don’t deserve is to have their suffering used as a blunt instrument and a prop to reshape the entire internet through lawsuits built on a scientific premise that the actual scientists keep telling us is wrong.

Filed Under: addiction, experts, jacqueline nesi, katelyn jetelina, mental health, social media

Companies: meta, youtube

Tech

Minnesota Kicks Off Legal Battle With Trump Administration To Hold ICE Shooters Accountable

from the occupied-territories dept

This story was originally published by ProPublica. Republished under a CC BY-NC-ND 3.0 license.

They asked nicely at first.

After an Immigration and Customs Enforcement agent shot and killed Renee Good, a 37-year-old mother of three who’d recently moved to Minneapolis, local law enforcement officials requested a partnership with the federal government to investigate the case, as they’d done in past shootings involving federal agents.

When the Trump administration refused to cooperate, Minnesota prosecutors ratcheted up their efforts. They sent a series of strongly worded legal letters demanding evidence in the Good shooting as well as the shootings of Julio Cesar Sosa-Celis, a Venezuelan immigrant who was wounded a week after Good was shot, and Alex Pretti, who was killed on Jan. 24.

Still, the administration rebuffed the requests.

This week, prosecutors from Hennepin County and the state of Minnesota took the next step to force the Trump administration’s hand. They filed a federal lawsuit against the departments of Homeland Security and Justice over the evidence in the shootings, an action that Hennepin County Attorney Mary Moriarty, whose jurisdiction covers Minneapolis, characterized as “unprecedented in American history.”

The Trump administration has declined to release the names of the agents involved in the shootings, even after the Minnesota Star Tribune and ProPublica identified the officers involved in the Good and Pretti incidents.

“The federal government has refused to cooperate with state law enforcement, which is unique, rare and simply cannot be tolerated,” Minnesota Attorney General Keith Ellison told reporters. “[We] can’t sit around and let them do it.”

In the standoff over evidence, the case has already become a game of constitutional chicken over states’ rights versus federal immunity, a battle that will have implications for others who wish to hold agents in the president’s immigration surge criminally accountable.

So far, neither side is showing signs of backing down, foreshadowing a fight that could take years. If prosecutors do eventually file charges against federal agents involved in the shootings, legal experts said the path to trial, much less winning convictions, will be filled with legal and procedural challenges.

“State prosecutors across the country are going to be watching what happens in Minnesota really closely,” said Alicia Bannon, director of the judiciary program at the nonprofit Brennan Center for Justice.

The first test for prosecutors, if they file charges, would be to prove the agents don’t qualify for immunity through the Constitution’s supremacy clause, a rarely invoked legal doctrine that protects federal officers from state prosecutions if they’re acting lawfully and within the scope of their duties.

Failing to pass that test would likely end the case.

The U.S. Supreme Court hasn’t taken up a case involving supremacy clause immunity in over 100 years, Bannon said, and judges have come down differently on legal issues related to its application.

There’s no easy answer as to whether Minnesota will be able to get past a supremacy clause defense, said Jill Hasday, a constitutional law professor at the University of Minnesota.

“That depends on the facts, but probably the odds are stacked against it,” she said.

Even if they survive such a fight, the cases could be dogged by a series of logistical challenges. Moriarty, who has been leading the investigations, has decided not to seek reelection and will leave office at the end of the year. That means whoever wins the election for her seat in November could inherit the prosecutions.

In addition to not having the names of the agents, prosecutors don’t know where those agents are now. Minnesota may need to extradite them, potentially from a MAGA-leaning state that may balk at sending them to Hennepin County to stand trial.

“Will the federal government or other states cooperate with that? I think the answer to that is sort of iffy,” said Ilya Somin, a law professor at George Mason University in Virginia. (Indeed, in a case involving a doctor charged with illegally mailing abortion medication to a Louisiana woman, the state of California has rejected an extradition request, citing its own laws protecting doctors from prosecution elsewhere.)

The fight is focused on three shootings. But Moriarty’s office has opened criminal investigations into 14 additional cases of potentially unlawful behavior by federal agents during Operation Metro Surge, which started in early December and has wound down over the past few weeks.

The other cases Moriarty is examining involve allegations of excessive force or other misconduct by federal agents, such as an incident in early January in which agents allegedly used force on staff and students on the grounds of a high school.

Prosecutors are also investigating Gregory Bovino, the outgoing Border Patrol commander who helped to lead immigration surges into several American cities and who was seen on video lobbing green-smoke canisters into crowds at a park in Minneapolis. A Department of Homeland Security spokesperson said at the time that Bovino and other agents were responding to a “hostile crowd.”

The tension has played out in a series of demand letters sent by Moriarty to the Justice and Homeland Security departments. “Public transparency is vitally important in these cases — not just for the people of Hennepin County and Minnesota, but for the public nationwide,” Moriarty wrote in one of the letters. “The only way to achieve transparency is through investigation conducted at a local level.”

In January, after the shooting of Good, federal officials had agreed to participate in a joint investigation with the Bureau of Criminal Apprehension — Minnesota’s state police agency tasked with examining use of deadly force cases — according to the letters signed by Moriarty.

State officials presumed they’d be able to examine evidence, such as the car Good was driving and the guns used to shoot her and the other victims. But the investigators later learned through public statements by high-ranking Trump administration officials that federal agents were no longer planning to share evidence, the letter states.

Local and state prosecutors don’t have the authority to subpoena federal agencies for evidence as they would in a typical criminal investigation. The demand letters, called Touhy letters, are formal written requests, used as an alternative to a subpoena, asking a federal agency to provide evidence or testimony in a case in which the government is not a party. Moriarty sought an extensive list of evidence in the shootings, from the guns fired by the agents in all three cases to official reports, agent GPS devices and witness statements. The Touhy letters asked for a response by Feb. 17.

Normally, the federal government complies with Touhy letters as a matter of protocol, as long as releasing the information doesn’t violate an internal policy, said Timothy Johnson, a political science and law professor at the University of Minnesota.

But on Feb. 13, the FBI told BCA investigators that it won’t share investigative materials in the Pretti case, BCA Superintendent Drew Evans said in a statement. Evans said the police agency had reiterated its requests for evidence in the Good and Sosa-Celis cases.

More than a month after the deadline set by prosecutors, the Trump administration still hasn’t turned over the materials.

“There has been no cooperation from federal authorities,” BCA spokesperson Michael Ernster said.

The agents involved in the shootings have not spoken publicly, but a spokesperson for the Department of Homeland Security defended Good’s shooting, saying the agent acted in self-defense. They said the Pretti shooting was under investigation by the FBI and the Department of Homeland Security, with the Border Patrol conducting its own investigation. Those investigations could result in discipline or charges, including for civil rights violations.

The Department of Homeland Security spokesperson said federal officials found that, after Sosa-Celis’ shooting, officers made false statements. But the agency did not say whether it would cooperate with the local authorities or follow a court ruling requiring it to do so.

The Justice Department did not respond to a request for comment or to questions. Neither agency has responded to the lawsuit.

Moriarty called the lawsuit “critically important” to investigating the shooting cases but also said she had not made any decisions on whether her office will file charges.

“There has to be an investigation anytime a federal agent or a state agent takes the life of a person in our community,” she said. “And ultimately the decision may be it was lawful. You don’t know, but that’s why you do the investigation. You are transparent with the results of that investigation, and you are public with your transparency about the decision and how you got there.”

But a lawsuit does not guarantee that prosecutors will get all they want. “The question then becomes, even if Hennepin County or Minneapolis wins the suit, will they comply then?” Johnson asked. “And the answer is probably no.”

If the Trump administration did eventually defy a judge’s order, he said, prosecutors could try to appeal up to the U.S. Supreme Court. As far as what could happen next: “It’s anyone’s guess.”

Filed Under: alex pretti, doj, ice, investigations, julio cesar sosa-celis, keith ellison, minnesota, murder, renee good

Tech

Game Jam Winner Spotlight: CARAMENTRAN

from the gaming-like-it’s-1930 dept

It’s time for the second in our series of spotlight posts looking at the winners of our eighth annual public domain game jam, Gaming Like It’s 1930! We’ve already covered the Best Adaptation winner, and this week we’re looking at the winner of Best Deep Cut: CARAMENTRAN by RedSPINE and poymakes.

Sometimes, we get entries that were designed for more than one game jam, and this is one of them. In this case, the game was also created for the Themed Horror game jam in which one of the themes was “macabre carnival”. CARAMENTRAN delves specifically into a Provençal carnival tradition from France, in which the “King of Carnival” or Caramentran is put on trial for all the year’s ills then burned at the stake in punishment. As the player, you are Caramentran himself, trying to ward off accusations from the villagers while extinguishing the flames at your feet in a grimy, unsettling horror arcade game.

It’s a fitting premise for a horror game, but what makes it special for this game jam is its visual assets, drawn from a variety of public domain sources. The game’s hauntingly hideous aesthetic comes from a collage of archive images and postcards of actual carnivals in Southern France, combined with figures taken from American magazines, ads, and fashion plates.

Many of the materials are from 1930, while many others are from earlier, and the combination of wildly different styles is viscerally jarring in a way that amplifies the horror. There are no widely recognized images or famous works of art here, only fragments of visual language plucked piece by piece from the vast sea of imagery in the public domain, and for that it’s this year’s Best Deep Cut.

Congratulations to RedSPINE and poymakes for the win! You can play CARAMENTRAN in your browser on Itch. We’ll be back next week with another winner spotlight, and don’t forget to check out the many great entries that didn’t quite make the cut. And stay tuned for next year, when we’ll be back for Gaming Like It’s 1931!

Filed Under: game jam, games, gaming, gaming like it’s 1930, public domain, winner spotlight

Tech

OCSF explained: The shared data language security teams have been missing

The security industry has spent the last year talking about models, copilots, and agents, but a quieter shift is happening one layer below all of that: Vendors are lining up around a shared way to describe security data. The Open Cybersecurity Schema Framework (OCSF) is emerging as one of the strongest candidates for that job.

It gives vendors, enterprises, and practitioners a common way to represent security events, findings, objects, and context. That means less time rewriting field names and custom parsers and more time correlating detections, running analytics, and building workflows that can work across products. In a market where every security team is stitching together endpoint, identity, cloud, SaaS, and AI telemetry, a common infrastructure long felt like a pipe dream, and OCSF now puts it within reach.

OCSF in plain language

OCSF is an open-source framework for cybersecurity schemas. It’s vendor neutral by design and deliberately agnostic to storage format, data collection, and ETL choices. In practical terms, it gives application teams and data engineers a shared structure for events so analysts can work with a more consistent language for threat detection and investigation.

That sounds dry until you look at the daily work inside a security operations center (SOC). Security teams have to spend a lot of effort normalizing data from different tools so that they can correlate events. For example, detecting an employee logging in from San Francisco at 10 a.m. on their laptop, then accessing a cloud resource from New York at 10:02 a.m. could reveal a leaked credential.
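
The San Francisco and New York example above is often called an "impossible travel" check. A minimal sketch of the idea follows, assuming both events have already been normalized to the same shape and carry a location and a timezone-aware timestamp. The `Event` class, its field names, and the 1,000 km/h speed threshold are illustrative assumptions, not part of OCSF.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from math import asin, cos, radians, sin, sqrt


@dataclass
class Event:
    """Hypothetical, already-normalized event (not an OCSF class)."""
    user: str
    time: datetime
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points in kilometers."""
    earth_radius_km = 6371.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_km * asin(sqrt(a))


def is_impossible_travel(a: Event, b: Event, max_kmh: float = 1000.0) -> bool:
    """Flag two events for the same user whose implied speed exceeds max_kmh."""
    if a.user != b.user:
        return False
    distance = haversine_km(a.lat, a.lon, b.lat, b.lon)
    hours = abs((b.time - a.time).total_seconds()) / 3600
    if hours == 0:
        return distance > 0
    return distance / hours > max_kmh


# Laptop login in San Francisco, cloud access from New York two minutes later.
login = Event("alice", datetime(2026, 1, 5, 10, 0, tzinfo=timezone.utc), 37.7749, -122.4194)
access = Event("alice", datetime(2026, 1, 5, 10, 2, tzinfo=timezone.utc), 40.7128, -74.0060)
suspicious = is_impossible_travel(login, access)
```

The check itself is simple. The hard part, as the article goes on to explain, is getting both events into a comparable shape in the first place.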

Setting up a system that can correlate those events, however, is no easy task: Different tools describe the same idea with different fields, nesting structures, and assumptions. OCSF was built to lower this tax. It helps vendors map their own schemas into a common model and helps customers move data through lakes, pipelines, and security information and event management (SIEM) tools without requiring time-consuming translation at every hop.
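
To make the "different fields, nesting structures, and assumptions" point concrete, here is a rough sketch of two per-vendor mappers that emit one shared shape. Both vendor formats and the target keys (`user`, `src_ip`, `time_ms`) are hypothetical and chosen for illustration. They are not the actual OCSF attribute names, which should be taken from the published schema.

```python
from datetime import datetime, timezone


def normalize_vendor_a(raw: dict) -> dict:
    """Hypothetical vendor A: flat camelCase fields, ISO 8601 timestamps."""
    ts = datetime.fromisoformat(raw["eventTime"])
    return {
        "user": raw["userName"],
        "src_ip": raw["clientIp"],
        "time_ms": int(ts.timestamp() * 1000),
    }


def normalize_vendor_b(raw: dict) -> dict:
    """Hypothetical vendor B: nested fields, epoch seconds."""
    return {
        "user": raw["actor"]["login"],
        "src_ip": raw["network"]["source"],
        "time_ms": int(raw["ts"]) * 1000,
    }


# The same user seen by two tools, two minutes apart.
a = normalize_vendor_a({
    "userName": "alice",
    "clientIp": "198.51.100.7",
    "eventTime": "2026-01-05T10:00:00+00:00",
})
b_epoch = int(datetime(2026, 1, 5, 10, 2, tzinfo=timezone.utc).timestamp())
b = normalize_vendor_b({
    "actor": {"login": "alice"},
    "network": {"source": "203.0.113.9"},
    "ts": b_epoch,
})

# Once both records share keys and units, correlation is a plain comparison.
same_user = a["user"] == b["user"]
gap_seconds = (b["time_ms"] - a["time_ms"]) // 1000
```

Without a shared schema, every consumer writes and maintains mappers like these for every source. With one, the vendor does the mapping once.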

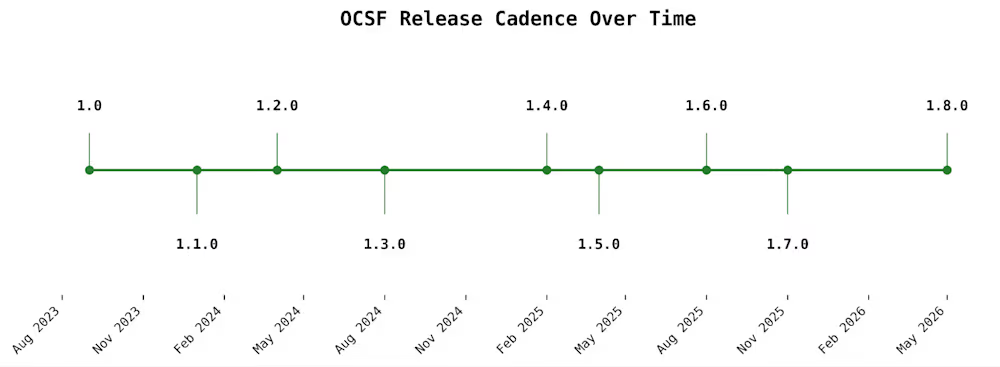

The last two years have been unusually fast

Most of OCSF’s visible acceleration has happened in the last two years. The project was announced in August 2022 by AWS and Splunk, building on work contributed by Symantec, Broadcom, and other well-known infrastructure giants, including Cloudflare, CrowdStrike, IBM, Okta, Palo Alto Networks, Rapid7, Salesforce, Securonix, Sumo Logic, Tanium, Trend Micro, and Zscaler.

The OCSF community has kept up a steady cadence of releases over the last two years

The community has grown quickly. AWS said in August 2024 that OCSF had expanded from a 17-company initiative into a community with more than 200 participating organizations and 800 contributors, which expanded to 900 when OCSF joined the Linux Foundation in November 2024.

OCSF is showing up across the industry

In the observability and security space, OCSF is everywhere. AWS Security Lake converts natively supported AWS logs and events into OCSF and stores them in Parquet. AWS AppFabric can output OCSF-normalized audit data. AWS Security Hub findings use OCSF, and AWS publishes an extension for cloud-specific resource details.

Splunk can translate incoming data into OCSF with Edge Processor and Ingest Processor. Cribl supports seamlessly converting streaming data into OCSF and compatible formats.

Palo Alto Networks can forward Strata Logging Service data into Amazon Security Lake in OCSF. CrowdStrike positions itself on both sides of the OCSF pipe, with Falcon data translated into OCSF for Security Lake and Falcon Next-Gen SIEM positioned to ingest and parse OCSF-formatted data. OCSF is one of those rare standards that has crossed the chasm from an abstract standard into standard operational plumbing across the industry.

AI is giving the OCSF story fresh urgency

When enterprises deploy AI infrastructure, large language models (LLMs) sit at the core, surrounded by complex distributed systems such as model gateways, agent runtimes, vector stores, tool calls, retrieval systems, and policy engines. These components generate new forms of telemetry, much of which spans product boundaries. Security teams across the SOC are increasingly focused on capturing and analyzing this data. The central question often becomes what an agentic AI system actually did, rather than only the text it produced, and whether its actions led to any security breaches.

That puts more pressure on the underlying data model. An AI assistant that calls the wrong tool, retrieves the wrong data, or chains together a risky sequence of actions creates a security event that needs to be understood across systems. A shared security schema becomes more valuable in that world, especially when AI is also being used on the analytics side to correlate more data, faster.

For OCSF, 2025 was all about AI

Imagine a company uses an AI assistant to help employees look up internal documents and trigger tools like ticketing systems or code repositories. One day, the assistant starts pulling the wrong files, calling tools it should not use, and exposing sensitive information in its responses.

Updates in OCSF versions 1.5.0, 1.6.0, and 1.7.0 help security teams piece together what happened by flagging unusual behavior, showing who had access to the connected systems, and tracing the assistant’s tool calls step by step. Instead of only seeing the final answer the AI gave, the team can investigate the full chain of actions that led to the problem.

What’s on the horizon

Imagine a company uses an AI customer support bot, and one day the bot begins giving long, detailed answers that include internal troubleshooting guidance meant only for staff. With the kinds of changes being developed for OCSF 1.8.0, the security team could see which model handled the exchange, which provider supplied it, what role each message played, and how the token counts changed across the conversation.

A sudden spike in prompt or completion tokens could signal that the bot was fed an unusually large hidden prompt, pulled in too much background data from a vector database, or generated an overly long response that increased the chance of sensitive information leaking. That gives investigators a practical clue about where the interaction went off course, instead of leaving them with only the final answer.
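
As a rough illustration of the token-spike clue, the sketch below flags any message whose token count far exceeds the median of the messages before it. The function name, the `factor` and `min_history` parameters, and the sample counts are assumptions made for this example. The article does not specify how the proposed OCSF fields would be analyzed.

```python
from statistics import median


def find_token_spikes(counts: list[int], factor: float = 5.0, min_history: int = 3) -> list[int]:
    """Return indexes of messages whose token count exceeds factor x the prior median."""
    spikes = []
    for i, count in enumerate(counts):
        history = counts[:i]
        if len(history) < min_history:
            continue  # not enough earlier messages to form a baseline
        baseline = median(history)
        if baseline > 0 and count > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical completion-token counts for one support conversation.
completion_tokens = [120, 135, 110, 128, 4200, 130]
spike_indexes = find_token_spikes(completion_tokens)
```

Using the median rather than the mean keeps one oversized message from inflating the baseline for everything that follows it.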

Why this matters to the broader market

The bigger story is that OCSF has moved quickly from being a community effort to becoming a real standard that security products use every day. Over the past two years, it has gained stronger governance, frequent releases, and practical support across data lakes, ingest pipelines, SIEM workflows, and partner ecosystems.

In a world where AI expands the security landscape through scams, abuse, and new attack paths, security teams can rely on OCSF to connect data from many systems without losing context along the way, which makes it easier to keep that data safe.

Nikhil Mungel has been building distributed systems and AI teams at SaaS companies for more than 15 years.


Tech

AT&T’s New OneConnect Bundles Mobile and Home Internet but There’s a Catch

It’s easier now to stay connected wherever you are, but getting to that point is still complicated. Wireless plans for phones and home internet plans are typically two separate things, with some crossover or discounts if you get them from the same provider.

AT&T OneConnect puts wireless and home service together in one bundle, with unlimited mobile data for up to 10 voice lines and gigabit broadband at home. However, it’s limited to new AT&T customers only. Here’s how the details break down.

OneConnect offers three pricing tiers, billed monthly:

- Individual ($90): One member, one voice line, up to three data devices and one household with 1Gbps internet.
- Duo ($120): Two members, two voice lines, up to six data devices and one household with 1Gbps internet.
- Family ($225): Unlimited members, up to 10 voice lines, up to 10 data devices and one household with 1Gbps internet.

One notable detail is that the OneConnect subscription prices listed above include taxes and fees, a practice that’s becoming increasingly rare among major carriers. On many plans, including AT&T’s newest wireless plans, those costs are added on top.

For comparison, an AT&T bundle for two people with unlimited wireless and gigabit-speed home internet would cost about $225, including two lines on the AT&T Premium 2.0 plan and AT&T Internet 1000 fiber at $65. For one person, a single Premium 2.0 wireless plan costs $90, plus $65 for home fiber. (It’s also important to note that speeds and availability vary depending on your location.)
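
The comparison can be worked through with the figures quoted in this article. This is a back-of-the-envelope sketch only: the variable names are made up for illustration, and because standalone prices may not include taxes and fees while OneConnect prices do, the real gap could be larger.

```python
# Monthly prices in dollars, as quoted in the article.
FIBER_1000 = 65
PREMIUM_ONE_LINE = 90
STANDALONE_TWO_PEOPLE = 225  # two Premium 2.0 lines plus fiber ("about $225")
ONECONNECT = {"Individual": 90, "Duo": 120}

# One person: standalone wireless plus fiber versus OneConnect Individual.
standalone_one_person = PREMIUM_ONE_LINE + FIBER_1000
individual_gap = standalone_one_person - ONECONNECT["Individual"]

# Two people: the article's standalone estimate versus OneConnect Duo.
duo_gap = STANDALONE_TWO_PEOPLE - ONECONNECT["Duo"]

print(f"One person: ${standalone_one_person} standalone, ${individual_gap} more than OneConnect")
print(f"Two people: ${STANDALONE_TWO_PEOPLE} standalone, ${duo_gap} more than OneConnect")
```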

As with any new connection plan, you’ll want to scrutinize the details so you know what you’re getting into.

For instance, OneConnect is currently limited to new customers; existing AT&T customers have no migration path to combine their broadband and wireless services under this digital umbrella. According to an AT&T spokesperson, “Once we gather customer feedback and validate the experience with our initial cohort, we will make OneConnect available to as many customers as possible.”

It’s also entirely BYOD — or ‘bring your own device’: “Limited to bring your own eSIM compatible, unlocked smartphones, tablets, and wearables,” reads the fine print on AT&T’s press statement. There are no phone deals tied to OneConnect, although the spokesperson didn’t rule out that possibility in the future.

Unlike AT&T’s standalone wireless plans, OneConnect follows a one-size-fits-all model. One benefit of AT&T mobile service is that each person on an account can select their own plan. For instance, a parent might choose AT&T Premium 2.0, while a teen could opt for the cheaper but more limited AT&T Value 2.0.

Other major carriers offer home internet and mobile service bundles, but they’re not packaged in the same way. Verizon and T-Mobile, for example, provide discounts if you’ve signed up for both types of plans.

AT&T is betting that account owners will want a simpler, bundled service instead of two separate plans. With unlimited talk, texting, data and AT&T’s ActiveArmor service for filtering out unwanted calls and texts, that’s a size that does seem to fit all.

Tech

Lucid blames dip in Q1 sales on seat supplier issue

Lucid Group finished 2025 on an upswing — building twice as many EVs as the previous year and reporting a 55% uptick in sales. Then the first quarter of 2026 arrived.

The company, which makes the Air sedan and Gravity SUV, reported Friday that it sold 3,093 vehicles in the first quarter, a 42% drop from the previous quarter and about 0.5% lower than the same period last year. It had built many more, about 5,500 in total.

Lucid said the sales dip, and the gap between production and deliveries, is not a demand problem. Instead, the company blames a supplier quality issue with its second-row seats, which disrupted deliveries of the Lucid Gravity for 29 days.

The supplier issue also prompted Lucid to recall more than 4,000 Gravity SUVs. Lucid told the National Highway Traffic Safety Administration that it discovered some of the anchors for the SUV’s second-row seat belts were not properly welded.

Lucid spokesperson Nick Twork confirmed to TechCrunch that the decrease in sales was tied to problems with the supplier. He said that due to an unapproved change made by a supplier, the company issued a stop on Gravity sales that lasted most of February to ensure proper vehicle quality before restarting them. Twork made a point of noting Lucid’s more recent success, saying that “following eight record quarters, we showed strong results in both January and March which very nearly achieved year-over-year growth on their own.”

Lucid said in its securities filing Friday that the issue has been addressed, and the company seems confident that the disruption won’t affect its production goals.

Lucid reaffirmed its previously announced production guidance of between 25,000 and 27,000 vehicles this year. Lucid built 18,378 EVs in 2025, so hitting the top of that range would represent an increase of as much as 47% from last year.


Lucid’s seat supplier troubles come as the company prepares to start building its first vehicle on a new lower-cost platform aimed at the mass market. Lucid has said that first vehicle will cost around $50,000, a price point that will put it in direct competition with the upcoming Rivian R2 SUV, as well as existing products like the Tesla Model Y, Tesla Model 3, and Chevrolet Equinox EV.

Tech

Seattle VR gaming studio Polyarc announces ‘significant’ layoffs

Polyarc, the Seattle-based VR gaming developer behind the award-winning Moss series, announced that it’s had to “significantly reduce the size of the company.”

The announcement, via LinkedIn on Monday, notes that the layoffs come after “an unsuccessful team-wide effort to secure funding following the cancellation of a major project.”

The company, which had around 52 employees according to LinkedIn, did not specify how many were affected, but said it plans to share a spreadsheet with information about those who were impacted to help them make connections in new job searches.

Polyarc was founded in 2016 by Tam Armstrong, Danny Bulla, and Chris Alderson, all three of whom had formerly worked on Destiny at Bungie. The studio’s debut project, the fantasy adventure Moss, came out in 2018 to critical and commercial success, which led to both a 2021 sequel and a multiplayer spinoff in 2023.

The Moss series, which began as exclusives for the PlayStation VR platform before going multiplatform, is on the short list of candidates for VR gaming’s “killer app.” In Moss, players take the role of a Reader, an unseen individual who discovers a magical book in a forgotten library. That book allows you to watch and affect events in the fantasy world of Moss, where a young mouse named Quill is on a quest to save her kingdom.

The problem for the VR market, however, is that much of it is driven by Meta, and Meta has been steadily stepping back from its VR endeavors for the better part of the last couple of years. In January, another wave of VR layoffs at Meta closed several studios and dramatically lowered the headcount at Bellevue, Wash.-based Camouflaj.

There are still major players in VR gaming, such as Valve and its upcoming Steam Frame. It’d be a mistake to say the sector is dead or dying, but Meta drove so much of the conversation around VR that its slow abandonment has destabilized the format.

In addition, the last three years have been a tough time to work in the video game industry, as numerous companies have been forced to slim or shut down. Other recently affected studios in the Pacific Northwest include Phoenix Labs, Monolith Productions, and Rec Room.
