If there’s anything that makes people more uncomfortable than highly advanced AI or nuclear weapons technology, it’s the combination of the two. But there’s been a symbiotic relationship between cutting-edge computing and America’s nuclear weapons program since the very beginning.

In the fall of 1943, Nicholas Metropolis and Richard Feynman, two physicists working on the top-secret atomic bomb project at Los Alamos, decided to set up a contest between humans and machines.

- Los Alamos National Laboratory recently partnered with OpenAI to install its flagship ChatGPT AI model on the supercomputers used to process nuclear weapons testing data. It’s the latest in a long history of symbiosis between America’s nuclear program and cutting-edge computing.

- AI tools are already revolutionizing the way scientists are conducting research at Los Alamos, part of a larger program called Genesis Mission that aims to harness the technology to accelerate scientific research at America’s national labs.

- Comparisons of AI to the early days of nuclear weapons abound, both among critics and proponents, but Vox’s reporting trip to the lab found little evidence of the kind of doomsday fears that permeate conversations about AI elsewhere.

In the early days of the Manhattan Project, the only “computers” on site were humans, many of them the wives of scientists working on the project, performing thousands of equations on bulky analog desk calculators. It was painstaking and exhausting work, and the calculators were constantly breaking down under the demands of the lab, so the researchers began to experiment with using IBM punch-card machines — the cutting edge of computer technology at the time. Metropolis and Feynman set up a trial, giving the IBMs and the human computers the same complex problem to solve.

As the Los Alamos physicist Herbert Anderson later recalled, “For the first two days the two teams were neck and neck — the hand-calculators were very good. But it turned out that they tired and couldn’t keep up their fast pace. The punched-card machines didn’t tire, and in the next day or two they forged ahead. Finally everyone had to concede that the new system was an improvement.”

Today, at Los Alamos, a similar dynamic is taking place, as scientists at the lab increasingly rely on artificial intelligence tools for their most ambitious research. Like their punch-card ancestors, today’s AI models have a leg up on human researchers simply by virtue of not having to eat, sleep, or take breaks. Scientists say they’re also approaching tough problems in entirely new and unexpected ways, changing how research is conducted at one of America’s largest scientific institutions.

In recent weeks, in the wake of the feud between the Pentagon and Anthropic, as well as the reported use of AI software for targeting during the war in Iran, the partnership between the US military and leading AI companies has become a highly charged political topic. Less discussed has been the already extensive cooperation between these firms and the country’s nuclear weapons complex, under the supervision of the Department of Energy.

Last year, the Los Alamos National Lab (LANL) entered a partnership with OpenAI allowing it to install the company’s popular ChatGPT AI system on Venado, one of the world’s most powerful supercomputers. As of August, Venado was placed on a classified network, meaning that the AI chatbot now has access to some of the country’s most sensitive scientific data on nuclear weapons.

Supercomputers at Los Alamos’s high-performance computing center. Provided by Los Alamos National Laboratory/Joey Montoya, photographer


That wasn’t all. Later last year, the Department of Energy, which oversees Los Alamos and the country’s 16 other national laboratories, announced a $320 million initiative known as the Genesis Mission, which aims to “harness the current AI and advanced computing revolution to double the productivity and impact of American science and engineering within a decade.”

Few people are in a better position to think about the upsides and downsides of revolutionary new technologies than the people who today populate the mesa once occupied by Robert Oppenheimer, Feynman, and the other pioneers of the nuclear age. But when I visited the lab in January, I found that the researchers there were remarkably sanguine about the more existential risks that often come up in conversation about AI, even as they worked on the production of the world’s most dangerous weapons.

“They think we’re building Skynet; that’s not what’s going on here at all,” LANL’s deputy director of weapons, Bob Webster, said, referring to the superintelligent system from the Terminator movies. Geoff Fairchild, deputy director for the National Security AI Office, volunteered that he does not have a “p(doom),” the Silicon Valley shorthand for how likely one believes it is that AI will lead to globally catastrophic outcomes, and doesn’t believe most of his colleagues do either. “We don’t talk about it. I don’t think I’ve ever had that conversation,” he added.

For Alex Scheinker, a physicist who uses AI for the maintenance and operation of LANL’s massive particle accelerator, AI is an extraordinarily useful tool, but a tool nonetheless. “It’s just more math,” he said. “I don’t like to think about it like it’s magic.”

Still, the nuclear-AI comparison is unavoidable. Given the technology’s transformative potential, the dangers it could pose to humanity, and the potential for an innovation “arms race” between the United States and its international rivals, the current state of AI has frequently been compared to the early days of the nuclear age. And how people feel about the Manhattan Project — a triumphant union between the national security state and scientific visionaries? Or humanity opening Pandora’s box? — likely has a lot to do with how they view their work now.

Those making the comparison include OpenAI CEO Sam Altman, who is fond of quoting Oppenheimer and expressed disappointment that the 2023 biopic of the Los Alamos founder wasn’t the kind of movie that “would inspire a generation of kids to be physicists.” One of the film’s central conflicts is how a guilt-stricken Oppenheimer spent much of the second half of his life in an unsuccessful quest to control the spread of his creation. (Disclosure: Vox Media is one of several publishers that have signed partnership agreements with OpenAI. Our reporting remains editorially independent.)

The Trump administration has been explicit about the comparison. In the executive order announcing the mission, the White House invoked the creation of the atomic bomb, writing, “In this pivotal moment, the challenges we face require a historic national effort, comparable in urgency and ambition to the Manhattan Project that was instrumental to our victory in World War II.”

But if we really are in a new “Manhattan Project” moment, you wouldn’t know it in the place where the original Manhattan Project took place.

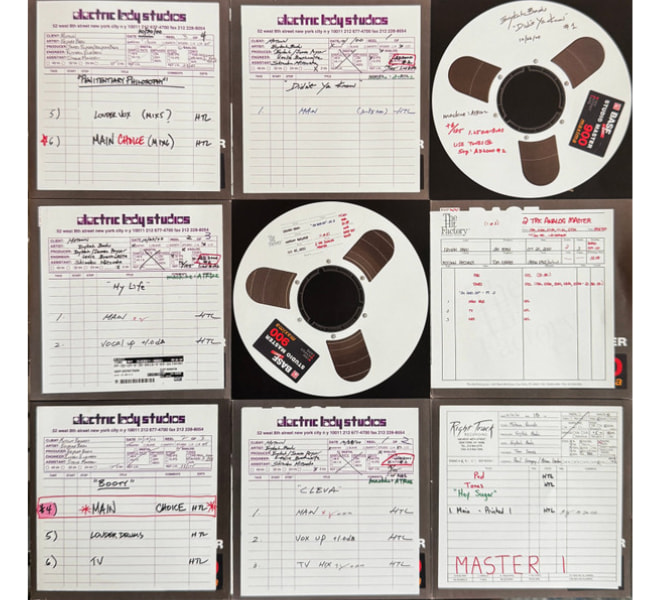

“The world’s nuclear information is right in there. You’re looking at it,” LANL’s director for high performance computing, Gary Grider, told me during my visit to Los Alamos in January.

We were staring through a glass window at a densely packed shelf of magnetic tapes, each of which could be accessed and read via a robotic system that resembled a high-end vending machine more than a hyperintelligent doomsday computer. The machine we were staring into contained nuclear data so sensitive it’s kept on physical drives rather than an accessible network, not that any of the data stored in the room I was standing in is exactly open source.

Magnetic tapes containing nuclear testing information at Los Alamos’s high-performance computing center. Provided by Los Alamos National Laboratory/Joey Montoya, photographer

I was in Los Alamos’s high-performance computing complex, a vast, brightly lit, 44,000-square-foot room in a building named for Nicholas Metropolis, containing six supercomputers with space cleared out for two more. The first things that strike visitors to the computing center are the refrigerator-like temperature and the roar of the overhead fans, both evidence of the gargantuan effort, in money and megawatts, that it takes to keep these machines cool. “Going into high-performance computing, I never thought that I’d be spending this much of my time thinking about power and water,” Grider told me. Computing at Los Alamos is an insatiable beast: The average lifespan of a supercomputer, the cost of which can run into the hundreds of millions of dollars, was once around five to six years. Now it’s around three to five.

Cutting-edge computing has been intertwined with the American nuclear enterprise from the beginning. Los Alamos scientists used the world’s first digital computer, ENIAC, to test the feasibility of a thermonuclear weapon. The lab got its own purpose-built cutting-edge computer, MANIAC, in the early ’50s. In addition to playing a role in the development of the hydrogen bomb, MANIAC was the first computer to beat a human at chess…sort of. It played on a 6×6 board without bishops and took around 20 minutes to make a move. In 1976, the Cray-1, one of the earliest supercomputers, was installed at Los Alamos. Weighing more than 10,000 pounds, it was the fastest and most powerful computer in the world at the time, though it would be no match for a modern iPhone.

Signatures of lab officials and executives, including Nvidia’s Jensen Huang, on the Venado supercomputer. Provided by Los Alamos National Laboratory/Joey Montoya, photographer

I had visited Los Alamos to see MANIAC and Cray’s descendant, Venado, composed of dozens of quietly humming 8-foot-tall cabinets. Currently ranked as the 22nd most powerful computer in the world, Venado was built in collaboration with the supercomputer builder HPE Cray and chip giant Nvidia, which provided some 3,480 of its superchips for the system. It is capable of around 10 exaflops of computing — about 10 quintillion calculations per second. The signatures of executives, including Nvidia’s Jensen Huang, adorn one of the cabinets.

Last May, OpenAI representatives, accompanied by armed security, arrived at Los Alamos bearing locked metal briefcases containing the “model weights” — the parameters an AI system learns from its training data — for its ChatGPT o3 model, for installation on Venado. It was the first time this type of reasoning model had been applied to national security problems on a system of this kind.

LANL’s computers are a closed system not connected to the wider internet, but the OpenAI software installed on Venado brings with it the learning it has acquired since the company started developing it. Officials at the lab were not about to let a visiting reporter start asking the AI itself questions, but by all accounts, its users interface with it from their desktop computers essentially the same way the rest of us have learned to talk to ChatGPT or other chatbots when we’re generating memes or brainstorming weeknight recipes.

Those users include scientists at LANL itself as well as the country’s other main nuclear labs — Sandia, in nearby Albuquerque, and Lawrence Livermore, near San Francisco. Grider says demand for the new tool was immediately overwhelming. “I was surprised how fast people became dependent on it,” he told me.

Initially, the system was used for a wide array of scientific research, but in August, Venado was moved onto a secure network so it could be used on weapons research, in the hope that it can become an invaluable part of the effort to maintain America’s nuclear arsenal.


Since the 1990s, the United States, along with every country other than North Korea, has been out of the live nuclear testing business, notwithstanding Trump’s recent social media posts on the subject. But between the original Trinity detonation in 1945 and the most recent blast at an underground site in 1992, the United States conducted more than 1,000 nuclear tests, acquiring vast stores of information in the process. That information is now training data for artificial intelligence that can help the lab ensure that America’s nukes work without actually blowing one up.

Venado is effectively a massive simulation machine to test how a weapon would respond to being put under unique forms of stress in real-world conditions. We can “take a weapon and give it the disease that we want and then blow it up 1000 different ways,” as Grider puts it.

In some ways this fulfills the vision of Los Alamos’s founder Robert Oppenheimer, who opposed further nuclear tests after Hiroshima and Nagasaki on the grounds that we already knew these weapons worked and any other questions could be answered by “simple laboratory methods.”

Those methods are not so simple today. When Webster, the LANL deputy director of weapons, first got involved in nuclear testing in the 1980s, the “state of computing that we had was extremely primitive,” he said, and not a viable substitute for gathering new data. Today, he says, “we’re doing calculations I could only dream of doing” before.

Mike Lang, director of the lab’s National Security AI Office, suggested that using AI tools to analyze the data kept “behind the fence” could not only ensure the weapons work, but also improve them. “We’re using [the same] materials that we’ve been using for a very long time,” he said. “Could we make a new high explosive that is less reactive, so you can drop it, and nothing happens? [Or] that’s not made with toxic chemicals, so people handling it would be safer from exposures? We can go through and look at some of the components of our nuclear deterrence, and see how we can make it cheaper to manufacture, easier to manufacture, safer to manufacture.”

Whatever your attitude toward nuclear weapons, Los Alamos researchers argue that as long as we have them, we want to make sure they work.

“We don’t build the weapons to do something stupid,” Webster said. “We build them not to do something stupid.”

The Los Alamos lab’s mesa location, an oasis of pines in the midst of a stark desert landscape, is known to locals as “the Hill.” About 45 minutes north of Santa Fe (on today’s roads, that is), it was chosen during World War II for its remoteness, defensibility, and natural beauty. Oppenheimer, who had traveled in the region since his youth, had long expressed a desire to combine his two main loves, “physics and desert country.”

Eight decades after the days of Oppenheimer, the sprawling fenced-off Los Alamos campus feels a bit like a university town without the young people. Los Alamos County is the wealthiest in New Mexico and has the highest number of PhDs per capita in the country. The lab has around 18,000 employees and the population has boomed since the lab resumed production of plutonium pits — the explosive cores of nuclear weapons — as part of America’s ongoing $1.7 trillion nuclear modernization program. Federal officials recently adopted a plan for a significant expansion of the lab, including an additional supercomputing complex, which critics say fails to take account of the environmental impact of the facility’s electricity and water use as well as the hazardous waste caused by pit production.

Gun Site, the facility where the “Little Boy” bomb dropped on Hiroshima was assembled. Provided by Los Alamos National Laboratory/Joey Montoya, photographer

Officials at Los Alamos are quick to point out that despite what the lab is best known for, scientists there are working on more than just weapons of mass destruction. During my tour, I met with chemists using AI to design new targeted radiation therapies to improve cancer treatment and visited the Los Alamos Neutron Science Center, a kilometer-long particle accelerator that, in addition to weapons research, produces isotopes for medical research and pure physics experiments.

Critics point out that the vast majority of its budget is still devoted to weapons research, but still, Los Alamos is one of the best places in the world to observe the seismic impact AI is having on how scientific research is conducted. When the decision was made to move Venado onto a secure network, it cut off a number of ongoing scientific research projects, which is one big reason why two new supercomputers, known as Mission and Vision, are planned to debut this summer. Both are designed specifically for AI applications — one for weapons research, one for less classified scientific work.

AI projects, including at Los Alamos, are often criticized for their power use, but scientists at the lab say their work could ultimately result in safer and more abundant energy. There’s a long-running joke that nuclear fusion technology, which could deliver clean power in vast quantities, is perpetually 20 years away. LANL scientists are hopeful that AI could help deliver the remaining scientific breakthroughs needed to get it off the ground. Several researchers mentioned the potential use of AI tools to design heat-resistant materials for use in nuclear fusion reactors. Scientists at LANL’s sister lab, Livermore, achieved the world’s first fusion ignition reaction a few years ago, though it lasted only a few billionths of a second. “The thing that excites me…is the notion that we can move out of this computational world and start interacting with these experimental facilities,” said Earl Lawrence, chief scientist at the National Security AI Office.

Researchers increasingly use AI for “hypothesis generation,” devising new potential compounds or materials for testing. But the feature of AI that most excited the Los Alamos scientists I spoke with harkens back to what Metropolis and Feynman discovered about using early computers 80 years ago: It can do more work than any human, faster, and without breaks. Increasingly, it can also do the sort of physical real-world experiments that post-docs and junior researchers were once responsible for.

Asked about how he envisioned the future of scientific research in a world of AI, Lawrence quipped, “I hope it’s more coffee shops and walks in the woods.” Grider, a career computer programmer, said, “I hope to hell we can get out of the code business.”

There are downsides to that ease, as well. The sort of grunt work that AI can now do more efficiently is how scientists once learned their craft, assisting senior scientists with research. As in other fields, the pathways to those careers could narrow.

“We need to be intentional about how we train the next generation of scientists,” Lawrence said.

From the atomic age to the AI age

Reminders of Los Alamos’s history are everywhere on the mesa. During my visit to the lab, I toured the sites, now eerie abandoned historical monuments maintained by the National Park Service, where the bomb detonated by Oppenheimer and company in the 1945 Trinity test, and Little Boy, dropped on Hiroshima, were assembled. They’re possibly the only US national park sites where visiting involves a safety briefing on radiation and nearby live explosives testing.

Industrial boilers used in the original Manhattan Project. Provided by Los Alamos National Laboratory/Joey Montoya, photographer

But the heirs to Oppenheimer and Feynman have mixed feelings about the Manhattan Project metaphor when it comes to AI.

Lang felt it was a mistake to characterize AI as a weapon, or frame development as an arms race, with China the main competitor this time instead of Germany. He preferred to think of today’s research as continuing the Manhattan Project’s model of “giving a bunch of multidisciplined scientists a goal to really go after and try to make progress on.” Others pointed to the scientists who were concerned at the time about the risk of a nuclear explosion igniting the earth’s atmosphere as somewhat equivalent to today’s AI “doomers.”

There’s also a fundamental difference between the two in how knowledge is disseminated. “In the very early days of nuclear energy, there were only a handful of people who had the knowledge and understanding to even know what was going on,” said Fairchild, the deputy director for LANL’s National Security AI Office. Plus, supplies of uranium and plutonium could be tightly controlled. “These days, everybody knows what’s going on…and much of it is happening in open source.”

AI is also developing in a very different way from previous technologies with national security implications. In the past, the government and military have often dictated academic research into futuristic tech to meet their own needs, with commercial applications only being found later: The internet may be the prime example. Now, as LANL’s partnership with OpenAI shows, it’s the government and military racing to react to cutting-edge applications developed first by private industry for commercial use.

“For the very first time, I would argue, on a really big scale, we find ourselves not in a leadership role here,” said Aric Hagberg, leader of LANL’s computational sciences division.

There may also be an AI-atomic parallel in the sheer size of the investment that proponents say should be devoted to the advancement of the technology. Ilya Sutskever, OpenAI’s former chief scientist, once remarked (maybe jokingly) that in a world of superintelligent AI “it’s pretty likely the entire surface of the Earth will be covered with solar panels and data centers.” The remark brings to mind another one by the Nobel Prize-winning physicist Niels Bohr, who had been skeptical that the United States would be able to build an atomic bomb “without turning the whole country into a factory.” When Bohr first visited Los Alamos, he was stunned to find that the Americans had “done just that.”

The majority of the Manhattan Project was not the work done on chalkboards on the Hill by physicists, but the industrial-scale efforts to enrich uranium and produce plutonium in Oak Ridge, Tennessee, and Hanford, Washington. The work at the latter site, carried out in large part by chemical firm DuPont — a “public-private partnership” of its era — produced radioactive waste that is still being cleaned up today. Likewise, the work of producing the AI future is as much, if not more, about a massive build-out of data centers and the power needed to keep them cool and humming as it is about the cutting-edge research coming out of Silicon Valley or government labs.

When you visit Los Alamos, it’s hard not to be struck by the amount of ingenuity — in everything from nuclear physics, to explosive design, to revolutionary new techniques in high-speed photography — as well as the sheer industrial output that turned theoretical physics into a workable bomb in just three years.

You can still see the raw intellectual talent and can-do spirit that built the most advanced civilization the world has ever seen at Los Alamos today, and can easily imagine how it might build an even better one tomorrow. But it’s also impossible not to wonder if you’re seeing something else: Humanity’s thirst for power over the material world meeting with its instincts toward fear and aggression to engineer new nightmares. Perhaps we’ll get an answer soon.

This story was produced in partnership with Outrider Foundation and Journalism Funding Partners.
