Artificial intelligence didn’t roll out slowly. In fact, at times it feels like it landed all at once.

In just a few years, systems that began as internal experiments are now embedded in customer support, fraud detection, software development, and even IT infrastructure operations.

But there’s a problem.

CEO and co-founder, Fortanix.

While AI capabilities have advanced, the way we secure them hasn’t kept up.

Most organizations are still applying traditional security models to a fundamentally different kind of workload, and it’s leaving a critical gap at runtime, or the exact moment when AI systems do their work.

The Illusion of Coverage

For years, enterprise security has focused on two primary states of data: when it’s stored and when it’s moving. That has meant encryption for data at rest and in transit, with identity and access controls for both.

These controls still matter. But there’s a third state that’s far more complex and far less protected: data in use.

When an AI model runs, sensitive data is actively processed in memory. Model weights, which are often the most valuable intellectual property an organization owns, are loaded into memory. Prompts, responses and contextual data are generated and transformed in real time.

In most environments, all of that becomes visible to the underlying system. The uncomfortable reality is that even well-secured environments can expose their most valuable assets at the moment they’re being used.

Where AI Security Actually Breaks

When security teams investigate AI-related risks, the root cause rarely traces back to perimeter defenses. The issues tend to emerge deeper in the lifecycle across three key phases:

1. Training: When data quietly leaks into models. Training pipelines span storage systems, shared compute environments, orchestration layers and debugging tools. They can be messy: data moves constantly, intermediate artifacts are created and cached, and logs accumulate quickly.

In this environment, sensitive information might surface in unexpected places. Models themselves may unintentionally retain elements of the sensitive data they were trained on. And model weights, which encapsulate that learning, are often handled more casually than they should be.

This all creates a subtle but serious risk where exposure doesn’t always come from a direct attack. Sometimes it comes from normal development practices.

2. Inference: An overlooked exposure layer. Once a model is deployed, attention shifts to inference, or the point at which inputs become outputs.

On the surface, it looks simple. But in practice, inference workflows involve multiple streams of sensitive data, including user prompts and queries, generated responses, internal enterprise data retrieved to ground outputs, and the model itself.

Much of this data is processed through monitoring tools, logging systems and debugging pipelines, often in plaintext.

Even without a breach, sensitive information can be exposed through routine operations. Troubleshooting dashboards might capture more than intended, or logs could persist longer than expected. Shared infrastructure also introduces more potential for leakage.
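One way to picture the logging risk is a small redaction step that runs before a prompt ever reaches a log line. The sketch below is illustrative only: the `redact` and `log_summary` helpers and the two patterns are assumptions made for this example, not part of any particular monitoring product, and a real deployment would need far broader coverage.

```python
import hashlib
import re

# Hypothetical patterns for illustration; real systems need a much wider set.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def redact(text: str) -> str:
    """Replace obvious identifiers with placeholders before text is logged."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


def log_summary(prompt: str) -> str:
    """Build a log line with a short hash, the length, and redacted text only."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]
    return f"prompt_sha256={digest} len={len(prompt)} text={redact(prompt)!r}"


if __name__ == "__main__":
    print(log_summary("Mail jane@example.com about card 4111 1111 1111 1111 today"))
```

The hash lets operators correlate requests during troubleshooting without the raw prompt being stored. Pattern matching of this kind only reduces exposure in logs; it does nothing for data sitting in memory during execution.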

Inference security isn’t only about blocking access. It’s about controlling what happens during execution, and most organizations aren’t doing that yet.

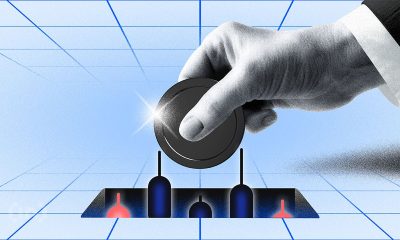

3. Runtime: The blind spot in modern security. The most critical yet least protected phase is the runtime phase. This is where models actually execute, encrypted data is decrypted, and model weights exist in memory. And it’s precisely where traditional security models fall short.

Even in environments with strong identity management controls and encryption policies, runtime assumes a certain level of trust in the underlying system. If that system is compromised, or even simply misconfigured, the protections around it don’t matter because keys are still released, workloads still run, and sensitive assets are still exposed.

This is why runtime is currently the weakest link, and why it has emerged as the true security boundary for AI systems.

Why the Problem Becomes Worse at Scale

As organizations expand their use of AI tools, the risks don’t just increase. They multiply. AI workloads are rarely isolated. They more commonly run across distributed environments, shared accelerators, and multi-tenant infrastructure. They interact with internal systems and external services, and they operate continuously, not intermittently.

This creates a compounding effect:

1. More data flowing through more systems.

2. More models deployed across more environments.

3. More opportunities for exposure during execution.

At the same time, the value of what’s being processed is rising sharply. Proprietary models are becoming core business assets, and sensitive enterprise data is being used to fine-tune outputs and drive decisions.

In this context, a single weak point at runtime becomes a major systemic risk.

Top Priority: Rethinking Trust in AI Systems

The core issue isn’t a lack of security tools. It’s a mismatch in assumptions when it comes to trusting the infrastructure AI runs on.

With traditional security, the assumption has always been that once a workload is inside a trusted environment, it can be relied upon to behave securely. But AI changes this because these systems are dynamic. They process sensitive data continuously, rely on complex stacks that are difficult to fully validate, and often run in environments that organizations don’t fully control.

In other words, crossing the perimeter isn’t the hard part anymore. Staying secure after crossing it is.

To address this, security needs to move closer to the workload itself. So, instead of focusing only on protecting access to systems, organizations need to protect what happens inside them, particularly during execution. That means:

1. Ensuring that data remains protected even while it’s being processed,

2. Preventing unauthorized access to model weights during runtime,

3. Verifying that workloads are running in trusted environments before allowing them to execute.

This is where approaches like Confidential Computing and hardware-based isolation are making a difference. By creating protected execution environments and tying access to cryptographic verification, the industry is moving security from assumption-based trust to proof-based trust.

In simple terms: don’t trust the system. Make it prove it’s secure.
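The idea of proof-based trust can be sketched as a key release that depends on an attestation check. The example below is a simplified model under stated assumptions, not how any specific Confidential Computing platform works: real hardware signs reports with asymmetric keys and vendor certificate chains, whereas this sketch uses an HMAC with a shared `hw_key` as a stand-in, and the names `Report`, `sign_report`, and `release_key` are hypothetical.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Report:
    measurement: str  # hash of the code loaded into the protected environment
    signature: bytes  # stand-in for a hardware-rooted signature (assumption)


def _mac(hw_key: bytes, measurement: str, nonce: bytes) -> bytes:
    return hmac.new(hw_key, measurement.encode() + nonce, hashlib.sha256).digest()


def sign_report(hw_key: bytes, measurement: str, nonce: bytes) -> Report:
    """Simulates the environment producing evidence bound to a fresh nonce."""
    return Report(measurement, _mac(hw_key, measurement, nonce))


def release_key(report: Report, hw_key: bytes, expected: str,
                nonce: bytes, model_key: bytes) -> Optional[bytes]:
    """Release the model key only if the evidence is authentic, fresh, and approved."""
    if not hmac.compare_digest(report.signature, _mac(hw_key, report.measurement, nonce)):
        return None  # forged or replayed evidence
    if not hmac.compare_digest(report.measurement, expected):
        return None  # workload is not the approved one
    return model_key


if __name__ == "__main__":
    hw_key, model_key = secrets.token_bytes(32), secrets.token_bytes(32)
    approved = hashlib.sha256(b"approved-inference-image").hexdigest()
    nonce = secrets.token_bytes(16)
    good = sign_report(hw_key, approved, nonce)
    print(release_key(good, hw_key, approved, nonce, model_key) == model_key)
```

The design point is the ordering: the key that decrypts model weights or data is withheld until the workload has proven what it is, so a compromised or misconfigured host does not receive the key by default.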

Security Has Moved to the Moment of Use

For years, organizations have invested in securing where data lives and how it moves. But with AI, the most important moment is when the model runs, and data, logic and decision-making converge in real time.

That’s where the real risks are, and that’s where security needs to be focused.

The organizations that recognize this shift early will set themselves up to scale AI safely. Those that don’t may find that their most advanced systems, built on outdated trust models, are highly vulnerable.

In modern AI, security isn’t defined by the perimeter. It’s defined by what happens inside it.

This article was produced as part of TechRadar Pro Perspectives, our channel to feature the best and brightest minds in the technology industry today.

The views expressed here are those of the author and are not necessarily those of TechRadarPro or Future plc. If you are interested in contributing, find out more here: https://www.techradar.com/pro/perspectives-how-to-submit
