However you feel about AI writing, it has a few giveaways. According to the writer Imogen West-Knights, “there’s things like negative parallelisms…or excessive use of metaphor and similes, especially ones that don’t quite make sense or that come very rapidly, one after another. Every noun having an adjective attached, certain kinds of repetitive syntactical blocks that appear.”

Tech

Amazon unifies Alexa+ and Rufus as AI rivals move into online shopping

Amazon.com and Alexa are finally talking to each other.

The tech giant on Wednesday announced Alexa for Shopping, a new capability that connects its Rufus e-commerce chatbot with its Alexa+ assistant, aiming to unify product research, user preferences and shopping activities across Amazon’s apps, websites and Echo devices.

The move comes as consumers increasingly turn to popular AI assistants like OpenAI’s ChatGPT and Google’s Gemini for shopping advice. By creating a more integrated AI shopping experience, Amazon is aiming to keep that research and the resulting purchases on its own platforms.

Several of Amazon’s new features reflect the broader push into agentic AI, which takes action on a customer’s behalf. For example, Alexa for Shopping can monitor prices and automatically purchase an item when it hits a target, or restock household essentials on a schedule.

With the integration, Amazon is retiring the “Rufus” name from its shopping interface, replacing the chatbot with Alexa for Shopping branding in its app and on its website. Amazon says Rufus will continue to power parts of the experience behind the scenes.

Broader landscape: ChatGPT, Gemini and Perplexity have all launched shopping features in recent months, with Google enabling in-chat checkout from retailers like Walmart and Wayfair. (OpenAI pulled back on its in-chat checkout feature in March after it failed to gain traction.)

Amazon is also looking to keep rival AI agents off its platform: A federal judge in March blocked Perplexity’s Comet browser from shopping on Amazon on behalf of users, though the order was stayed pending appeal. In a statement at the time, Amazon called it “an important step in maintaining a trusted shopping experience for Amazon customers.”

On the product front, Amazon is betting that a unified and personalized experience will matter more to customers than the ability to compare products across retailers in a general-purpose AI assistant.

Rollout details: Alexa for Shopping will roll out in the U.S. over the coming week, the company says. It will be available for free to customers signed into an Amazon account through the Amazon Shopping app and Amazon.com, with no Prime membership, Echo device or Alexa app required.

The company is also bringing the full Amazon shopping experience to Echo Show devices, starting with Alexa+ customers on the latest Echo Show 15 and 21, with other devices to follow.

Use cases and features: Rajiv Mehta, Amazon’s vice president of Conversational Shopping, said the company saw customers starting shopping “missions” in one place and restarting them somewhere else because Rufus and Alexa didn’t share memory or context.

The idea is that “the customer doesn’t have to think about where they started a discussion with Amazon,” Mehta said in an interview with GeekWire. The feature uses what customers have already told Amazon once, then makes that context available on other Amazon devices, sites, and apps.

For example, citing his own usage, Mehta said a customer could brainstorm a science fair project with Alexa on an Echo device, then open the Amazon app and ask for supplies without re-explaining the project. Or a shopper could research laptops in the Amazon app, set a price alert, get notified on their Echo when the price drops, and then buy the laptop with a voice command.

Other features in Alexa for Shopping include:

- Asking questions directly in the main Amazon search bar, rather than opening a separate chat window.

- Scheduled actions that automate tasks like restocking household essentials, getting alerts when a favorite author releases a new book, or adding a product to a cart when it drops below a set price.

- Custom shopping guides for big purchases that compare features, prices and reviews across Amazon and the web.

- Product price history expanded to a full year, up from 30 and 90 days.
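The price-based scheduled actions described above amount to a one-shot threshold trigger. The following is a minimal sketch of that idea, not Amazon's implementation: the `PriceWatch` class, its fields, and the sample prices are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class PriceWatch:
    """Hypothetical price watch: act once when the price reaches the target."""

    item: str
    target_price: float
    auto_buy: bool = False   # False: just alert; True: purchase automatically
    triggered: bool = False  # set after the first hit so the action fires once

    def on_price(self, price: float) -> str:
        """Return the action to take for a newly observed price."""
        if self.triggered or price > self.target_price:
            return "wait"
        self.triggered = True
        return "buy" if self.auto_buy else "notify"


# Illustrative price history for a laptop with a 900.00 target (made-up numbers).
watch = PriceWatch(item="laptop", target_price=900.0)
actions = [watch.on_price(p) for p in (999.0, 950.0, 899.0, 880.0)]
print(actions)
```

The `triggered` flag is the main design choice here: without it, every later price under the target would raise another alert or place another order.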

Privacy and personalization: Amazon says customers can review and manage their Alexa interactions and conversations through the Alexa Privacy Dashboard. They can also ask Alexa for Shopping what it knows about them and update personal details like family members, pets, interests and dietary needs.

Amazon’s evolution: Rufus launched in 2024 and was used by more than 300 million customers in 2025, according to the company. On Amazon’s most recent earnings call, CEO Andy Jassy said monthly active users of Rufus were up more than 115% and engagement was up nearly 400% year over year.

Jassy compared third-party AI shopping agents to the early days of search engines referring business to e-commerce. Those agents lack personalization features and shopping history and often can’t get pricing or product information right, he said, noting that customers who want to shop at a specific retailer will often start with its own assistant as a result.

Amazon’s ambition, Jassy said, is to develop “the best shopping assistant anywhere.”

Tech

Campfire’s Chimera is a hybrid of premium headphone sound

Campfire Audio has built its most complex in-ear monitor to date with the Chimera, a nine-driver flagship IEM that, like the figure from Greek mythology it’s named after, combines many elements, including dynamic, balanced armature, electrostatic, and bone-conduction drivers.

These drivers are all housed inside a single CNC-machined magnesium shell, which is hand-assembled at the brand’s Portland, Oregon facility.

The drivers look to divide responsibility across the frequency range, with a newly developed 10mm True-Glass dynamic driver covering low and low-mid frequencies. A dual-diaphragm balanced armature handles the midrange, with two dedicated high-frequency balanced armatures for clarity and articulation. Then there are four electrostatic super-tweeters that extend into the uppermost range for air and precision.

Alongside those eight conventional drivers sits a bone-conduction unit embedded directly into the magnesium shell, a first for Campfire Audio. It allows low-frequency energy to be felt physically through the shell as well as heard acoustically, adding tactile weight to bass content that an acoustic driver arrangement supposedly cannot replicate.

Internally, a pressure valve regulates airflow behind the dynamic driver while a final-stage tuning damper sits integrated into the nozzle, two components that work alongside Campfire’s acoustic routing to maintain coherence across a driver array that combines four different transducer technologies operating at the same time.

The shell pairs that magnesium body with a carbon fibre and brass Damascus faceplate, where layers of brass are folded into carbon fibre and then CNC-machined to produce a patterned surface that carries subtle variation between units, with the finish available in black and gold PVD variants.

Each Chimera ships with the ALO Audio Valence-6 cable, which marks the reintroduction of the ALO Audio brand and features four high-purity copper conductors alongside two mixed copper and silver-plated copper conductors, finished with black anodised aluminium hardware throughout.

Pre-sale opens on 16th May 2026 ahead of an expected June shipping window. The Chimera is priced at £6,999 / $7,500, with limited initial quantities available worldwide.

Tech

Is it possible to tell if a book is written by AI?

So naturally, when an author uses AI to write their book, the publishing industry can easily spot it, right? As it turns out, not necessarily. AI models are built using human writing, the good and the bad, which is why it can be hard to tell whether something was written by a chatbot or by a person who loves a bad metaphor. The problem is all the more acute with smaller fragments of text, where there’s less room for AI’s telltale patterns and flatness to emerge.

To find out just how good AI has gotten at imitating human writing, the writer and journalist Vauhini Vara decided to run an experiment on the people who know her writing the best. She thinks there is a misconception among writers and readers that “there’s a certain kind of way that AI generates language and it’s super different from the way writers do.” So could her friends distinguish between her work and an AI-generated imitation of her work? She told Today, Explained co-host Noel King about what happened next.

Below is an excerpt of their conversation, edited for length and clarity. There’s much more in the full podcast, so listen to Today, Explained wherever you get podcasts, including Apple Podcasts, Pandora, and Spotify.

Nothing we love more at Today, Explained than a person running an experiment on herself! Vauhini Vara, writer, journalist, author of Searches, in paperback now, tell me everything.

There’s a researcher named Tuhin Chakrabarty whose work I’ve covered before, and he had already conducted this experiment. He and colleagues basically trained AI models on the work of established, accomplished writers.

What that means is he basically got the AI model to generate language that looked a lot like language from those authors. And then he had readers who were graduate writing students read those passages generated by AI and also read imitations by fellow graduate writing students and say which one they liked better. And they tended to like the ones by the AI models more than the ones by actual human beings.

I had him do the same thing with my work, but a twist on it. I had him train an AI model on my three previous books, on pieces of journalism I’ve written. And then I had him get his AI model to generate passages sounding like something from a forthcoming novel that I haven’t published yet or shared with anyone. I put that alongside passages that I had written. I sent those to people who know my work really well. I’m talking about my best friend since I was 13, writer friends who I’ve known since I was 19, 20 years old. And I asked if they could tell the difference and none of them could.

So the people who know you best in the world don’t know you that well, apparently. Or AI is exceptionally good at what it is doing. Give me some examples of what happened here. Can you read me something that you wrote and then something that the AI wrote, and let’s see if I can tell any differences?

It’s funny, I can’t remember now which ones are mine and which ones are the AI!

Gaia said it seemed to her that we’d been on similar trajectories. We’d both spent many years creating something that we cared deeply about, I with my journalism, she with her startup, and then gone on to focus on empowering others to do the same. She said she’d been surprised to find that mentoring other founders was even more meaningful than running her own startup. In business terms, the ROI was higher if you were willing to count fulfillment as a return.

That’s nice. I like that. Yeah, I would say as writing, that was nice. Beginning, middle, end, lands on a point. I enjoyed it.

That one was actually AI.

Damn. AI, you landed in such a nice spot. Okay. Read me something that you wrote, please.

Okay, now we have a spoiler that I’m going to read you something, something from me.

I’d like to argue that we write because we feel compelled to no matter whether anyone will read them, but is that true? When I was younger, I used to keep a journal for myself. I didn’t want anyone else to ever read it, which meant I didn’t need to describe the people and places I was writing about or explain why they mattered. When my mom did read my journal in the ninth grade, I considered it the biggest betrayal I’d ever experienced. But the saving grace was knowing that she could not have possibly understood most of what I was writing about. I had an audience of one: myself.

I don’t know — I set you up to say that!

No, no, no. Actually, you didn’t. I would be very honest and I did sort of want to curveball you, but that was very pretty. Do me a favor, read the first two sentences of what you wrote one more time for me.

I’d like to argue that we write because we feel compelled to no matter whether anyone will read them, but is that true?

What is the “them” referring to?

It’s an error! It’s a grammatical error on my part. And good job catching it because a lot of people assumed that one was AI, and I think the best indication that it was actually me is that there is that grammatical error. AI wouldn’t have made a grammatical error like that.

This is the thing that I would like us to talk about: AI does not make mistakes. And in the first half of the show, our guest, also a writer, described AI as kind of soulless. And I think that was part of what she was pointing to.

What you read me by the AI wasn’t bad. So here’s a question for you: When all this was said and done [and] people could not tell what was you — people who know you well — how did you feel about that? Did you feel threatened? Did you feel suspicious of your friends and family?

I was of two minds, because on the one hand I didn’t feel threatened, but I found myself questioning my own assumption about myself, which is that I identify as a writer who is very invested in originality, who really wants every new book to be completely different from the previous books. And so the fact that this AI was trained on my previous books and could predict the style of the writing in the new book suggested that I wasn’t as original as I thought, that my new book wasn’t as different from the previous books as I thought.

At the same time, on the other hand, I actually felt vindicated because I disagree with the other author who was your previous guest about the soullessness of AI-generated text. I don’t think that AI-generated text is by definition easily distinguishable from human text because of a kind of soullessness inherent in the text.

Can readers tell something that is AI versus something written by a human?

It seems like they can’t, and I can’t myself. And this actually gets back to what we were discussing earlier about the question of whether AI-generated text is convincing or soulless.

I think the reason a lot of people assume AI writing is going to sound soulless is that AI companies, in their most recent versions of their products, have created these products that are specifically designed to sound a certain way, a certain kind of corporate customer service speak. And so people think that’s just inherently the way AI sounds, but it’s not true. AI can sound any number of ways.

It’s technically very easy, actually, to build an AI, to train an AI model that sounds human-like, even literary. The reason we’re not that familiar with it is that that’s not what the products look like currently.

Ultimately, do you think AI is going to end up changing our relationship to literature, or do you think everybody who reads is going to be as skeptical and skeeved out as you and I are?

Research shows not only that in some cases people prefer AI-generated text to a human-generated text, but also that if they’re told that a piece of text is AI-generated, they become uninterested in it. And so it seems clear that the reading public does not want to read text generated by AI if they know that it’s generated by AI.

I think we focus a lot on this human/technology binary, on “Oh, it’s weird if a machine creates the language.” But I think a big part of it is that we want to be communicating with one another. We don’t want to be receiving our art from enormous tech companies that have a lot of wealth and have a lot of power and want to control us.

Tech

Man who lost Bitcoin wallet password while high recovers $400,000 using Claude AI after 11-year lockout

An X user with the handle cprkrn writes that he was locked out of his wallet over 11 years ago because he got stoned, changed his password, and forgot it.


Tech

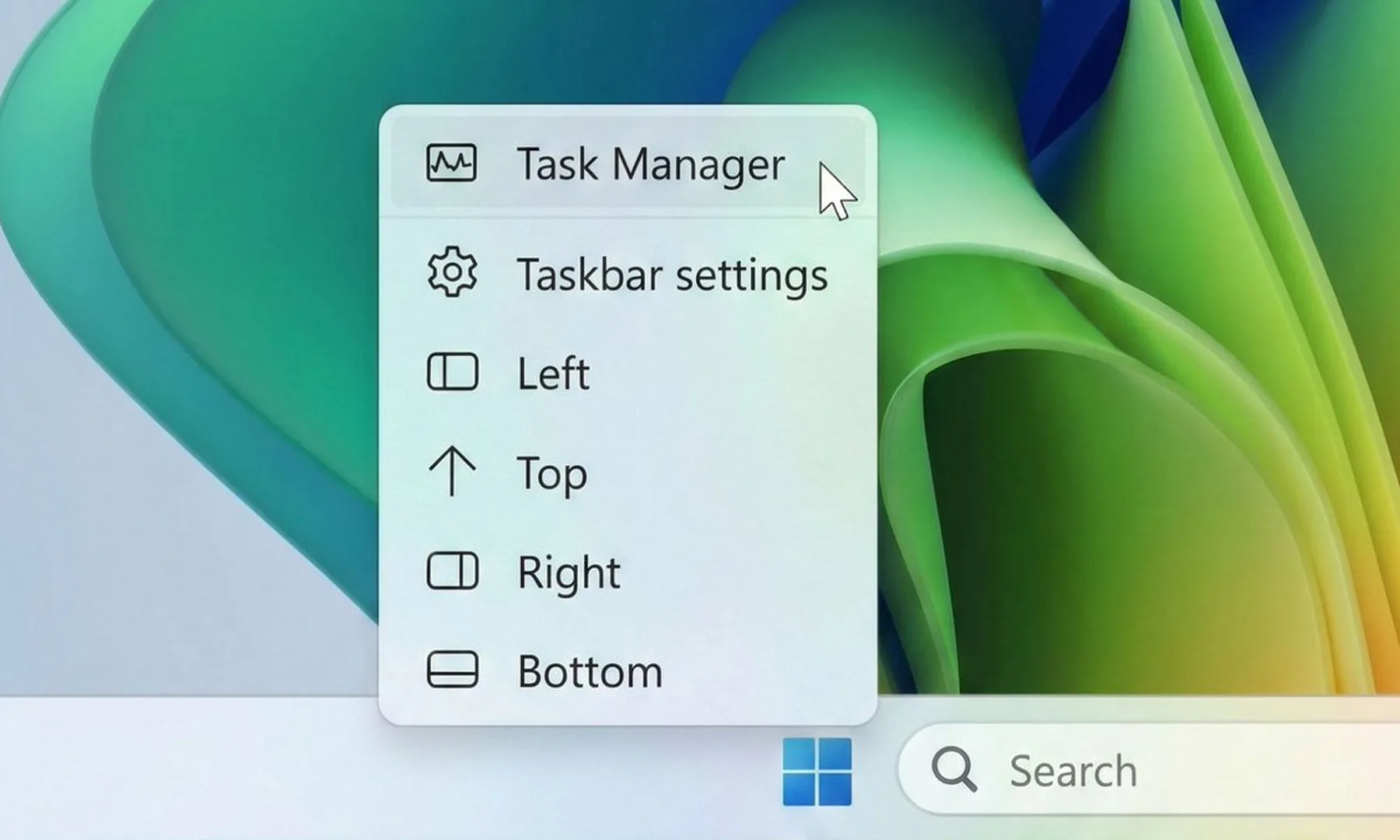

Microsoft is finally fixing the most annoying thing about Windows 11

For many Windows users, the taskbar in Windows 11 has always felt strangely restrictive. Microsoft redesigned the interface with a cleaner, more modern look, but in the process removed several customization options people had been using for years. One of the biggest complaints? The inability to freely move the taskbar around the screen. Now, Microsoft finally seems ready to loosen things up.

The company has started testing a major overhaul of the taskbar and Start menu for Windows 11 Insiders in its Experimental channel. And honestly, this feels like Microsoft acknowledging that users want their PCs to feel personal again.

Windows 11 could soon feel far more flexible

The biggest change here is the return of a movable taskbar. Instead of being locked to the bottom of the screen, users in the test build can now shift it to the top or even place it vertically along either side of the display. That might sound like a small tweak, but for longtime Windows users, it’s a pretty significant reversal. Earlier versions of Windows allowed this kind of flexibility for years before Windows 11 simplified everything into a more rigid layout.

Microsoft is also testing different taskbar sizes, including a compact version that could be especially useful on smaller laptops and tablets where screen space matters more. Even the Start menu is becoming more adjustable. Users will reportedly be able to resize it and switch between smaller and larger layouts, depending on how they prefer to organize apps and shortcuts.

The company is finally listening

Beyond the visual changes, Microsoft is also trying to clean up parts of the Start menu that many people found cluttered or unnecessary. New controls will let users decide which sections appear inside the menu, including areas for pinned apps, recommendations, and app lists. Interestingly, Microsoft is also renaming the “Recommended” section to “Recent,” which honestly makes the feature easier to understand at a glance. The section mainly surfaces recently used files and newly installed apps anyway, so the older name often felt vague.

There are also smaller but thoughtful privacy-focused touches being added. For example, users can hide their profile photo and account name from the Start menu, which could come in handy during presentations or screen-sharing sessions. Microsoft says these changes will roll out to Insider testers over the next few weeks. More importantly, the company openly admits that the Start menu and taskbar are where users judge Windows the hardest. And after years of complaints about Windows 11’s limited customization, this update feels like Microsoft is finally taking that criticism seriously.

Tech

A light laptop that means business

Verdict

The Acer TravelMate P6 14 AI is a capable Windows business laptop with solid internal grunt from its Lunar Lake processor that comes alongside a competent port selection, lightweight chassis and excellent battery life. For the price, it’s a solid option, although I do bemoan the lack of an OLED screen in any guise.

- Lightweight and sturdy chassis

- Solid power inside

- Excellent battery life

- An OLED screen would have been nice

Key Features

- Sub 1kg weight: The TravelMate P6 14 AI tips the scales at less than a kilo, making it one of the lighter laptops of its kind.

- Intel Core Ultra 7 258V processor inside: It also features a potent eight-core processor that provides a solid amount of power for productivity tasks.

- 65Whr battery inside: This Acer laptop also has a capacious battery inside that allows it to power through a working day or two away from the mains.

Introduction

The Acer TravelMate P6 14 AI offers a clever blend of lightness and durability for a humble business laptop.

It’s unique in that it tips the scales at just under a kilo, just like the new Asus Zenbook A14 (2026), but comes with more business-class sensibilities with its ports, solid IPS screen, good software selection and a competent Lunar Lake processor inside.

For the £1599.99 price tag, this feels quite reasonable in the current market, not least given price rises across the board from other manufacturers, which put it up against key rivals such as the Dell XPS 14 (2026).

Other business-class options, such as the Lenovo ThinkPad T14s 2-in-1 Gen 1 and Dell Pro 14 Premium, are some way up the road in price, giving this Acer choice a potentially clear run as one of the best laptops we’ve tested. I’ve been putting it through its paces to find out.

Design and Keyboard

- Lightweight and sturdy chassis

- Solid port selection

- Tactile keyboard and trackpad

It seems that Acer has attempted to replicate the excellent Swift Edge 14 AI with the TravelMate P6 14 AI in some respects, with this laptop coming with cleverly engineered materials to make it sleek and light, not least for a business machine.

It’s got a blend of a carbon fibre lid (as proudly displayed with a little logo) and magnesium-aluminium alloy elsewhere to allow this laptop to tip the scales at 990g without it feeling like it’s much cheaper than the retail price suggests. This is a lightweight laptop without any real flex or bend at the corners or in the keyboard tray, which is excellent.

The ports on the TravelMate P6 14 AI are strong, too, with a pair of Thunderbolt 4-capable USB-C ports on the left alongside a USB-A and an HDMI port. The right side has another USB-A, a headphone jack, and a security slot. For most use cases, this is more than adequate, although pros may wish for an SD card reader to supplement.

The keyboard here is also a reminder of other Acer laptops I’ve tested, with a snappy and short tactile travel in a smaller form factor layout that’s comfortable for extended periods. It’s also backlit with a bright, white light that’s sure to help for after-dark working.

As for the trackpad, it’s more about the width than depth, but nonetheless gives your fingers excellent real estate to work with. It doesn’t feel like a haptic trackpad, instead choosing to actuate with a defined mechanical click under finger.

Display and Sound

- High-res IPS screen is decent

- Okay contrast and black levels

- Reasonable speakers

Acer bundles an IPS panel with the TravelMate P6 14 AI, opting against an OLED for some reason. Nonetheless, it’s a solid 14-inch 3K (or 2880×1800) resolution panel with a refresh rate of up to 120Hz for detailed and smooth action.

In a general sense, this panel performs as you’d expect a decent IPS option to, with reasonably deep blacks (0.10 at 50%, rising to 0.38 at peak brightness) and okay contrast (1260:1 contrast ratio), plus a near-perfect 6600K colour temperature. I also measured 471.2 nits of peak SDR brightness here, making this a punchy panel in that sense.

Colour accuracy here is perfectly cromulent for mainstream workloads, with 97% coverage of the mainstream sRGB gamut, alongside 79% DCI-P3 and Adobe RGB results. This means this panel is okay for general productivity tasks, although it isn’t the best for more creative, colour-sensitive tasks.

The TravelMate P6 14 AI’s speakers are okay, but nothing really to write home about. There are decent mids and some bass, but you’ll want to be using the headphone jack or Bluetooth 5.4 connectivity for much better audio.

Performance

- Tried-and-tested Intel Lunar Lake processor

- Beefy iGPU against Snapdragon alternatives

- Capacious SSD, but a little on the slow side

Inside, this TravelMate P6 14 AI is technically using a last-gen Intel chip, although for the non-X-prefixed Panther Lake chips found in base model ultrabooks for the 2026 model year, such as the Dell XPS 14 (2026), the needle hasn’t moved much beyond Lunar Lake.

If you need a quick refresher, the Core Ultra 7 258V processor inside this laptop is an eight-core and eight-thread chip with a boost clock of up to 4.8GHz. It’s designed to provide a solid amount of grunt without sacrificing too much on endurance and longevity, making it a good choice for laptops such as this one.

The scores this Acer laptop achieved in both the Geekbench 6 and Cinebench R23 tests are in the ballpark for the processor inside, matching well against key rivals. That means strong single-core performance and decent, if a little disappointing, multi-threaded scores, owing to the lack of hyperthreading against AMD’s crop of modern laptop chips.

Both the PCMark 10 and 3DMark Time Spy scores were excellent, too, proving the suitability of the TravelMate P6 14 AI for productivity tasks and how powerful the Arc 140V integrated graphics are. The score it garnered here is several times that of the Adreno iGPU inside the first-gen Snapdragon X-powered laptops, and still remains ahead of the new Snapdragon X2 Elite SoC’s integrated graphics found in the likes of the Asus Zenbook A14 (2026).

Acer has also been quite generous with the TravelMate P6 14 AI’s RAM and storage configuration, given what’s going on at the moment. 32GB of RAM provides ample headroom for multi-tasking and more intensive loads, while a 1TB SSD gives you good room for storin’ stuff. With this in mind, a slight chink in this laptop’s armour is that it isn’t the fastest SSD I’ve come across, with tested read and write speeds of 4794.88MB/s and 3911.05MB/s, respectively.

Software

- Full-fat Windows 11 installed

- Some Acer-specific apps present

- Copilot+ PC functionality is here

The TravelMate P6 14 AI comes running proper Windows 11, although it comes with some unnecessary apps or shortcuts, such as a taskbar one for Booking.com, oddly.

There are more enterprise-centric apps, as you’d expect on a business laptop, such as Acer’s catch-all TravelMateSense app. This provides access to elements such as a file shredder, USB device filter, built-in file encryption and even AI-generated wallpaper in a separate tab.

Elsewhere, this is also a Copilot+ PC and has enough AI power to warrant the inclusion of Microsoft’s tools. Chief among these is the addition of the Copilot assistant, which you can ask to answer questions and undertake tasks, if you so wish.

In addition, there is also generative AI functionality baked into the Photos and Paint apps, if you want it. The most useful set of AI tools is the Windows Studio effects for the webcam, which provides a convenient means of auto framing, blurring the background and even making sure you maintain eye contact.

Battery Life

- Lasted for 17 hours 59 minutes in the battery test

- Capable of lasting for two working days

Acer bundles a solid 65Whr battery inside the TravelMate P6 14 AI, which is quite a large one considering the size and lightness of this laptop. There are no specific claims made about its endurance, although with a decent capacity cell and a Lunar Lake chip in tow, I had quite high hopes.

In dialling the brightness down to the requisite 150 nits, and running the PCMark 10 Modern Office battery benchmark test, this Acer laptop was able to run for 17 hours and 59 minutes, making it a dead cert for two working days away from the mains. With some hypermiling, you may be able to eke out a third. That’s a strong result, and puts this well among its Lunar Lake-powered contemporaries, even if the Dell Pro 14 Premium still remains king with closer to 24 hours runtime.

The TravelMate P6 14 AI comes with a 100W power brick that isn’t as fast as rivals’ at putting go-juice back into the laptop. The 38 minutes to get it back to 50% is good, although the 94 minutes for a full charge is a little more middle of the road.
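To put the battery figures above in context, here is a rough back-of-the-envelope sketch of the average power they imply. It assumes the full rated 65Whr capacity is usable and ignores charging losses, neither of which the review states, so treat the outputs as estimates rather than measurements.

```python
BATTERY_WH = 65.0         # rated capacity quoted in the review
RUNTIME_H = 17 + 59 / 60  # 17 hours 59 minutes in the PCMark 10 battery test

# Average drain during the benchmark, assuming the whole 65 Wh was used.
avg_draw_w = BATTERY_WH / RUNTIME_H


def avg_charge_power_w(fraction: float, minutes: float) -> float:
    """Average power delivered into the battery to reach `fraction` of capacity."""
    return BATTERY_WH * fraction / (minutes / 60)


to_half_w = avg_charge_power_w(0.5, 38)  # 0 to 50% in 38 minutes
to_full_w = avg_charge_power_w(1.0, 94)  # 0 to 100% in 94 minutes

print(f"Average draw in the battery test: {avg_draw_w:.1f} W")
print(f"Average charge power to 50%: {to_half_w:.1f} W")
print(f"Average charge power to 100%: {to_full_w:.1f} W")
```

Under those assumptions the laptop averages roughly 3.6W in the benchmark, and charging averages about 51W up to the halfway mark against about 41W over the whole charge, which fits the review's impression that the first half fills quickly and the rest is slower.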

Should you buy it?

You want a lightweight business laptop

The TravelMate P6 14 AI offers the benefits of a lightweight business laptop without the same hefty cost as some of the dearer alternatives with similar spec sheets.

On consumer and pro-grade laptops, it is more common to get OLED screens with better definition and fidelity than an equivalent IPS.

Final Thoughts

The Acer TravelMate P6 14 AI is a capable Windows business laptop with solid internal grunt from its Lunar Lake processor, a competent port selection, a lightweight chassis, and excellent battery life. For the price, it’s a solid option, although I do bemoan the lack of an OLED screen in any guise.

The Asus Zenbook A14 (2026) is closest in price and offers similar performance and endurance thanks to its Qualcomm Snapdragon X2 Elite processor, while also netting a sub-1kg weight with clever materials and coming with an OLED screen. It is more of a consumer-grade laptop, and enterprise users have different requirements that Acer’s choice is more likely to fulfil.

Dell’s new XPS 14 (2026) in the base model configuration I’ve tested also equals the TravelMate P6 14 AI in price, although it sacrifices portability and ports against either of the above to make it a serious contender. For more choices, check out our list of the best laptops we’ve tested.

How We Test

This Acer laptop has been put through a series of uniform checks designed to gauge key factors, including build quality, performance, screen quality and battery life. These include formal synthetic benchmarks and scripted tests, plus a series of real-world checks, such as how well it runs popular apps.

FAQs

The Acer TravelMate P6 14 AI weighs under 1kg at 990g, making it especially lightweight in any guise.


Full Specs

| Spec | Acer TravelMate P6 14 AI |
|---|---|
| UK RRP | £1599.99 |
| CPU | Intel Core Ultra 7 258V |
| Manufacturer | Acer |
| Screen Size | 14 inches |
| Storage Capacity | 1TB |
| Front Camera | 1080p webcam |
| Battery | 65 Whr |
| Battery Hours | 17 hours 59 minutes |
| Size (Dimensions) | 313.6 x 227.1 x 15.9 mm |
| Weight | 985 g |
| Operating System | Windows 11 |
| Release Date | 2025 |
| First Reviewed Date | 08/05/2026 |
| Resolution | 2880 x 1800 |
| Refresh Rate | 120 Hz |
| Ports | 2x Thunderbolt 4 USB-C, 2x USB-A, 1x HDMI, 1x 3.5mm jack |
| GPU | Intel Arc 140V iGPU |
| RAM | 32GB |
| Colours | Black |
| Display Technology | IPS |
| Touch Screen | No |
| Convertible? | No |

Tech

Silicon Valley startup wants suburban homeowners hosting powerful AI data centers directly beside their homes for free electricity benefits

- SPAN plans to install AI-powered GPU boxes outside ordinary suburban homes

- Homeowners offered subsidized electricity for hosting remote computing infrastructure equipment

- Each neighbourhood node contains sixteen expensive Nvidia GPUs inside compact enclosure

A San Francisco start‑up called SPAN has proposed placing small data center nodes outside suburban houses.

The company says it aims to install thousands of liquid‑cooled boxes called XFRA nodes, each containing powerful Nvidia GPUs.

Homeowners would receive subsidized or even free electricity and internet access in exchange for hosting this equipment on their property.

A quiet box with sixteen GPUs

Each XFRA node attaches to the exterior wall of a house like an additional utility box.

The unit holds sixteen Nvidia RTX Pro GPUs and runs with minimal noise, according to the company’s announcements.

SPAN claims it can install eight thousand such nodes for one-fifth the cost of building a conventional data center with the same computing power.

“Data centers are loud, ugly, and often drive up local electricity bills,” said Chris Lander, vice president of XFRA at SPAN.

“This is quiet, discreet, and makes energy more affordable for the host and community.”

The system taps into excess electrical capacity that already exists in most modern American homes.

“Virtually all homes with 200‑amp utility services have 80 amps available at all times, so we set that as the maximum power consumption for a single XFRA node,” Lander explained.

“This home backup is provided to the host at no cost to them, contributing to greater energy resilience in addition to affordability,” he added.
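Lander's 80-amp ceiling translates into a rough power budget per node. The following is a back-of-envelope sketch only: it assumes 240 V nominal split-phase service (a figure the article does not state) and an even split across the sixteen GPUs, with no allowance for cooling or other overhead. The function names are illustrative.

```python
# Back-of-envelope power budget for one node.
# Assumption (not from the article): 240 V nominal US split-phase service.
SERVICE_VOLTS = 240   # assumed nominal voltage
NODE_AMPS = 80        # maximum current per node, per Lander
GPUS_PER_NODE = 16    # GPUs per XFRA node, per the article


def node_power_watts(amps: float = NODE_AMPS, volts: float = SERVICE_VOLTS) -> float:
    """Upper bound on a node's electrical draw in watts (P = I * V)."""
    return amps * volts


def watts_per_gpu(total_watts: float, gpus: int = GPUS_PER_NODE) -> float:
    """Even split of the budget across GPUs, ignoring cooling and other overhead."""
    return total_watts / gpus


if __name__ == "__main__":
    total = node_power_watts()
    print(f"Node ceiling: {total / 1000:.1f} kW")        # Node ceiling: 19.2 kW
    print(f"Per-GPU share: {watts_per_gpu(total):.0f} W")  # Per-GPU share: 1200 W
```

Under those assumptions, a single node could draw up to about 19.2 kW, which gives a sense of why several nodes on one block could matter to the local grid.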

Benefits for utility companies and communities

SPAN argues distributed nodes help grid operators avoid costly infrastructure upgrades, and that increasing electricity sales over existing grid infrastructure makes power more affordable for everyone.

The approach focuses on AI inference tasks rather than model training, which requires thousands of GPUs working together.

However, not everyone shares SPAN’s optimism. Ari Peskoe, a director at Harvard Law School, cautioned that utility companies may need to adapt their local grid management for neighbourhoods with many such nodes.

“If there’s a block that has several homes with these devices, maxing out compute and energy would force a lot of power to that local area,” Peskoe said.

There are also security concerns: thieves may target these boxes, since each GPU sells for around $10,000.

The company plans a 100‑home pilot deployment in 2026, followed by rapid scaling to 80,000 units across the United States by 2027.

Whether suburban homeowners will accept this arrangement, which many may not understand, remains uncertain.

Meanwhile, the willingness of utility regulators and local zoning boards to approve such a decentralized computing experiment remains to be seen.

The pitch sounds appealing on paper, yet the real test will come when actual residents discover what it means to live next to a box of expensive electronics that strangers control remotely.


Tech

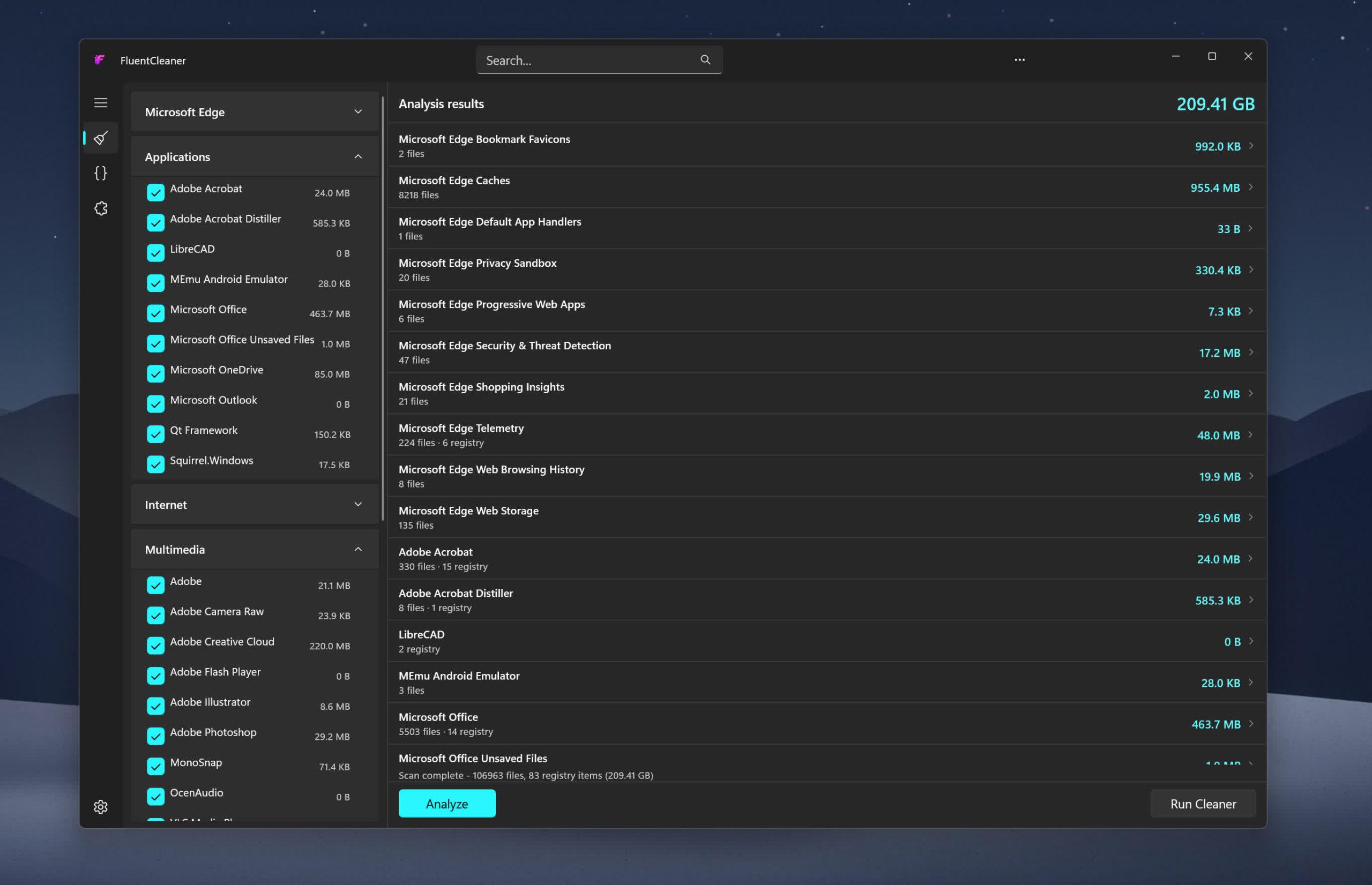

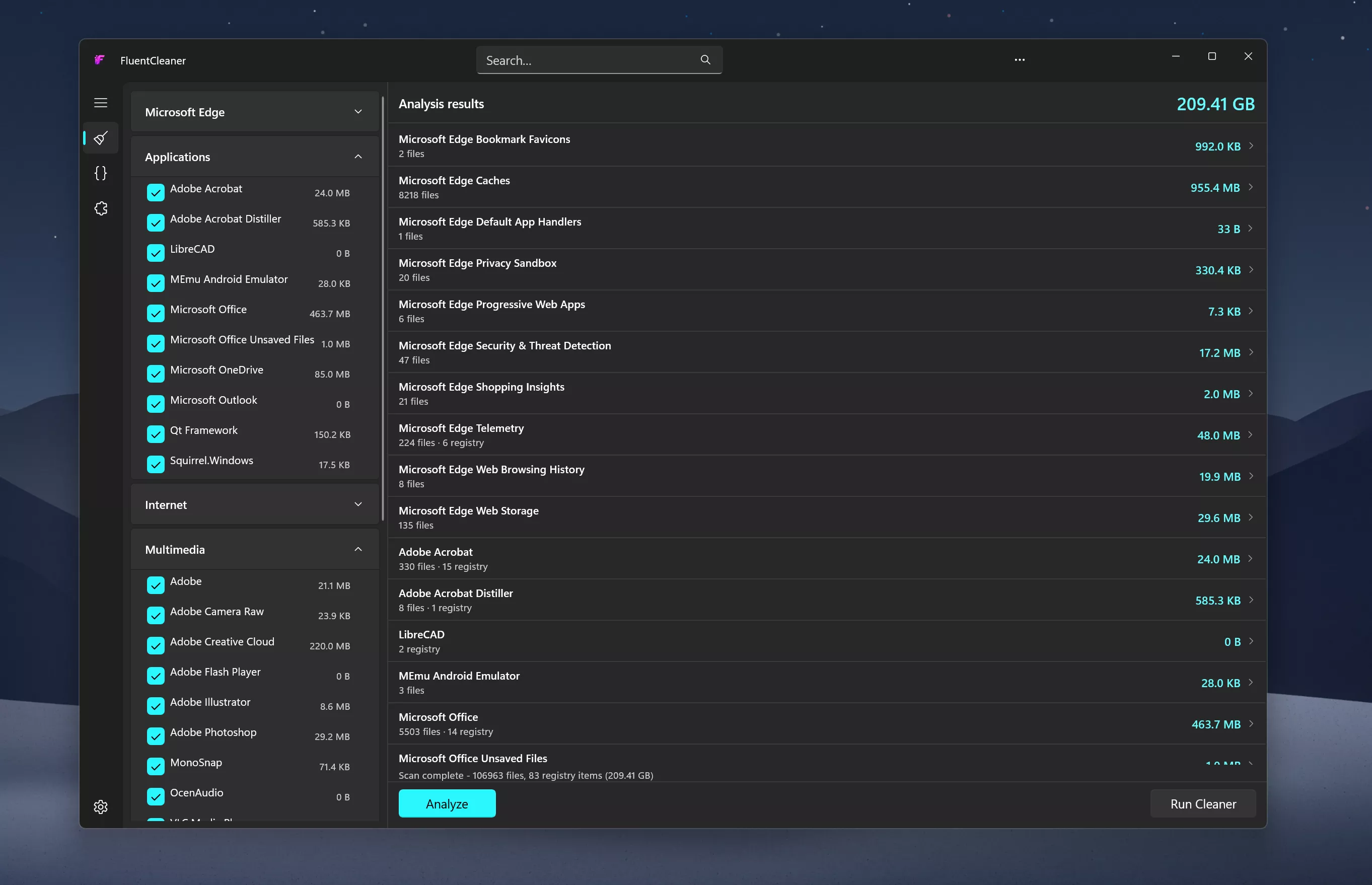

FluentCleaner Download | TechSpot

Inspired by the early CCleaner era, FluentCleaner reimagines the classic PC cleanup utility for modern Windows systems. Built with WinUI 3, it focuses on a cleaner, lightweight experience centered around removing temporary files, cache, logs, and leftover clutter – without aggressive registry tweaks, bundled extras, or scareware-style prompts.

The project also embraces the community-driven spirit that helped make older cleanup tools popular in the first place. FluentCleaner supports the well-known winapp2.ini cleaning database format, allowing it to leverage a large ecosystem of community-maintained cleaning rules while keeping the app fast, transparent, and easy to use.

Features

- Portable design with no installation required

- One-click system analysis and cleanup

- Support for optional maintenance extensions

- Optimized for Windows 11

- Clear and easy-to-understand cleanup results

System Requirements:

- Windows 10 2004 (Build 19041) and later

- Windows 11

No Windows 11 requirement. Despite using WinUI 3, the app is intentionally built to remain compatible with modern Windows 10 systems as well.

What’s New

If I had to give this release a codename, it’d be ~WRL0001.tmp, so you never knew what it was, but deleting it always felt satisfying

- [Added] Cancel button: the Analyze button morphs into Cancel the moment a scan or clean starts. Abort any scan or clean mid-run

- [Added] Junk growth tracker: Settings now keeps a history of past clean runs (date, bytes freed, items removed)

- [Improved] Scan speed significantly improved for entries with multiple file patterns; the directory tree is now traversed once in total instead of once per pattern, cutting scan times by up to 28× on some entries

- [Added] AI explanations for Winapp2 entries via Groq (experimental, requires a free API key in Settings): fully opt-in, nothing is sent anywhere without your own key. The idea was to explain individual Winapp2 entries, file paths and registry keys in plain English before cleanup. Many users asked to keep it in to test it themselves; a local LLM option may come later. Honestly, I built this because I had no idea what half the entries in my own app were doing 😄 Turns out FluentCleaner knew about apps I’d completely forgotten I had installed.

- [Improved] Upgraded from Windows App SDK 1.8 >> 2.0.1 (stable, released April 29 2026)

- [Improved] Scanner no longer loops forever on junction points and symlinks; reparse points are skipped during traversal (fixes the C:\Users\All Users >> C:\ProgramData infinite loop trap)

- [Improved] Search now auto-expands categories so results are actually visible

- [Improved] Tools page got a full visual overhaul inspired by the Windows Clock app widget style; extensions now feel like interactive widgets rather than a plain list

- [Improved] Hamburger button moved into the title bar: native Windows 11 pattern (File Explorer / Settings style)

- [Improved] Settings button is now the built-in NavigationView control, complete with the native rotation animation on click

- [Improved] Navigation cleaned up to follow the official WinUI 3 way; replaced custom workarounds with native patterns

- [Improved] Dropped x86 build target: x64 and ARM64 only

- [Added] New cleanup rules for Microsoft Edge and TeamViewer cache

- [Updated] Winapp2.ini to version 260512
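The two scanner changes in the list above (one traversal for all patterns, and skipping links during traversal) can be sketched together. This is a minimal illustration in Python, not FluentCleaner's actual code, which is a .NET application; `scan_once` is a hypothetical name. It skips symbolic links only. On Windows, junction points would need an additional check, for example `os.path.isjunction` in Python 3.12 and later.

```python
import fnmatch
import os
from collections import defaultdict


def scan_once(root: str, patterns: list[str]) -> dict[str, list[str]]:
    """Walk `root` a single time and bucket files under every pattern they match."""
    matches: dict[str, list[str]] = defaultdict(list)
    stack = [root]
    while stack:
        current = stack.pop()
        try:
            with os.scandir(current) as it:
                entries = list(it)
        except OSError:
            continue  # unreadable directory: skip it rather than abort the scan
        for entry in entries:
            # Never descend through links, so a link that points back up
            # the tree cannot trap the walk in a cycle.
            if entry.is_symlink():
                continue
            if entry.is_dir(follow_symlinks=False):
                stack.append(entry.path)
            elif entry.is_file(follow_symlinks=False):
                # Test each file against all patterns during the same pass.
                for pattern in patterns:
                    if fnmatch.fnmatch(entry.name, pattern):
                        matches[pattern].append(entry.path)
    return dict(matches)
```

Calling `scan_once(r"C:\Temp", ["*.tmp", "*.log"])` would read each directory once regardless of how many patterns are supplied, whereas a per-pattern walk repeats the directory reads for every pattern.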

FluentCleaner 26.05.02

- [Changed] Large parts of the app were refactored to use more native WinUI 3 behavior and APIs. Theme handling, TitleBar integration and system theme detection are now much cleaner and more consistent

- [Compatibility] FluentCleaner officially supports Windows 10 2004 (Build 19041) and later; no Windows 11 required

- [Added] Automatic update checks; the Settings page now silently checks for new versions on load.

- If an update is available, FluentCleaner shows a small red banner with a direct download button. You can also trigger update checks anytime from the […] menu in Settings.

- [Added] Responsive TitleBar search; the search box now collapses into a compact search icon + flyout on smaller window sizes for a cleaner layout.

- [Added] Native-style hamburger menu; moved the pane toggle into the TitleBar to better match modern WinUI apps

- [Added] Terminal startup system info; Windows version, CPU and RAM are now shown when opening the terminal

- [Updated] CommunityToolkit.Mvvm 8.3.2 > 8.4.2

- [Fixed] ARM64 builds not resolving correctly. Turns out MSBuild is case-sensitive and arm64 ≠ ARM64. Added explicit RuntimeIdentifiers so self-contained builds correctly detect their target platform.

- [Changed] Migrated all [ObservableProperty] backing fields to partial properties. This is the new standard for CommunityToolkit.Mvvm 8.4+ on .NET 10. No behavior changes, just cleaner code and no more compiler warnings


AI-generated code is ‘pain waiting to happen’

INTERVIEW Enthusiasm among managers to adopt AI tools has outpaced developers’ ability to learn those tools and use them effectively.

Moshe Sambol, VP of customer solutions at software observability outfit Lightrun, told The Register in an interview that he speaks with a lot of companies. Some of the developers in those organizations, he said, are very comfortable with AI tools.

“But the reality is that a lot of developers are much earlier in the curve,” he said. “The expectations of businesses are getting ahead of where the developers are in terms of their mental model and in terms of the training that they’re providing, the enablement they’re providing to make their teams comfortable with the tools, and the rate at which these tools are evolving.”

Sambol said the degree of AI tool adoption varies.

“I absolutely have customers who’ve told their developers, ‘You don’t write code anymore. You review code. No one should write a line of code unless for some reason you failed after three attempts getting GenAI to do it,’” he said. “I have customers like that. I don’t know if I should name them, but absolutely.”

And he said on the other side of the spectrum, there are organizations like banks that are just starting to roll AI tools out due to compliance obligations and traditional industry caution.

“It’s an exciting time to be adopting these tools and learning these tools, but it puts a lot of pressure on the developer,” he said. “It puts this expectation of being more productive.”

Not everyone manages that, and Sambol said he has a lot of sympathy for developers who have been directed to use AI tools without training and organizational guidance. Generative AI models will produce a lot of code quickly, he said, and because the code seems correct initially, it often gets pushed forward.

“If it’s not creating bugs en masse today, it’s just pain waiting to happen,” he said. “The number one question I think we have to be asking developers is, ‘Can you explain that code? Have you validated that the code actually fits in the context of the broader system?’”

Sambol said the answer isn’t necessarily yes or no because developers have different levels of experience and often work on large projects where they focus only on a specific part of the code base. It’s common in enterprises, he said, that no one person will understand the entire system end-to-end, which is why problem resolution often requires a group of people.

The issue he sees is that generative AI systems don’t help bridge that knowledge gap. They don’t provide the context to understand all the components involved.

Sambol went on to describe an incident in which a developer was using an AI assistant to build an Ansible automated workflow. “The generative AI was creating the Ansible template for him, which seems like a perfect match – it’s drudge work,” he explained. “And it’s much better at getting the syntax exactly right.”

It worked. And then it stopped working.

“The system that he was deploying to, all of a sudden, he could not get the component up,” Sambol said. “It just wouldn’t start. A process that had been going smoothly for a couple of hours in the morning, now all of a sudden, his service is down and it will not run.

“And he’s pulling his hair out trying to unstitch the day’s work so far to figure out what went wrong, why is the service not working,” he said, adding that the AI agent proved unhelpful by going off in the wrong direction, reinstalling the operating system, and undertaking other ineffective steps to effect repairs.

What happened, Sambol explained, is that earlier in the day, the developer had installed the component in a certain way – it was running in a container with a systemd service.

As such, it needed access to the ports on the device, which precluded running the component in Docker.

“So the AI model re-wrapped it, repackaged it, and deployed it in a different way, but kept the original one running,” he explained. “So it was simply a matter of the fact that the one he had initially deployed was still running and it was blocking the port and the second one couldn’t run.

“It’s a fairly simple, easy-to-understand problem once you see it, but he lost the entire afternoon going down all kinds of dead ends with the AI looking at this, looking at that, because the AI model didn’t remember that it had guided him to deploy the system a certain way earlier in the day.”
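The failure Sambol describes, a second deployment that cannot start because the first still holds the port, is the kind of condition a quick pre-deployment check can surface. A minimal sketch in Python follows; `port_is_free` is a hypothetical helper, not part of Ansible or any tool named in the interview, and it only tests TCP on a single address.

```python
import socket


def port_is_free(port: int, host: str = "127.0.0.1") -> bool:
    """Return True if a TCP socket can bind to (host, port) right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        try:
            probe.bind((host, port))
        except OSError:
            return False  # something already holds the port
        return True
```

A deployment script could call `port_is_free(8080)` before starting the service and report the conflict directly instead of leaving the operator to diagnose a service that will not come up. The port number here is only an example.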

Sambol said various studies show a significant percentage of AI-generated code contains errors and creates technical debt.

That’s not to say human developers are without fault. Sambol said developers have their own weaknesses. Many companies, he said, have offshored or globally distributed development teams, so there’s a lot of variation. He argues that it’s important to acknowledge that imperfection and work toward processes that improve results.

One way to do that is to automate the prompting process in a way that makes it more repeatable. “When you do that, you identify where you’re starting to get good results and you don’t expect everybody to come up with a well-structured long prompt.”

Sambol added, “I think these tools are absolutely getting better. And so I’m reluctant to call any of them junk or deeply flawed. They’re getting better shockingly rapidly. If you can take advantage of a couple of different ones – with a human being in the loop – then you are more likely to get output that is at least as good as you were getting before.” ®


AI could steal fingerprints from high-resolution selfies, experts warn

Reports circulating in China this week reignited concerns around the issue after experts claimed that photos showing fingers facing directly toward a camera from within roughly five feet could potentially reveal enough detail to recreate fingerprints. In theory, attackers could use the resulting images to spoof biometric scanners tied to…



Anthropic’s Mythos Helped Build a Working macOS Exploit in Five Days

“The vulnerability is simple in practice,” writes Tom’s Hardware: “run a command as a standard user and gain root (administrator) access to the machine.”

And it was Mythos Preview that helped the security researchers at Palo Alto-based Calif bypass a five-year Apple security effort in just five days. The blog 9to5Mac reports:

Last year, Apple introduced Memory Integrity Enforcement (MIE), a hardware-assisted memory safety system designed to make memory corruption exploits much harder to execute… [The researchers note it’s built into all models of Apple’s iPhone 17 and iPhone Air, and some MacBooks] They explain they have a 55-page technical report on the hack, but they won’t release it until Apple ships a fix for the exploit. But they do note in broad terms that Anthropic’s Mythos Preview model helped them identify the bugs and assisted them throughout the entire collaborative exploit development process.

“Mythos Preview is powerful: once it has learned how to attack a class of problems, it generalizes to nearly any problem in that class. Mythos discovered the bugs quickly because they belong to known bug classes. But MIE is a new best-in-class mitigation, so autonomously bypassing it can be tricky. This is where human expertise comes in. Part of our motivation was to test what’s possible when the best models are paired with experts. Landing a kernel memory corruption exploit against the best protections in a week is noteworthy, and says something strong about this pairing….”

[I]n a time when even small teams, with the help of AI, can make discoveries such as this one, “we’re about to learn how the best mitigation technology on Earth holds up during the first AI bugmageddon.”

-

Crypto World21 hours ago

Crypto World21 hours agoBloFin War of Whales 2026 Grand Prix opens registration for $5M trading championship

-

Fashion1 day ago

Fashion1 day agoWeekend Open Thread: Theory – Corporette.com

-

Fashion5 days ago

Fashion5 days agoCoffee Break: Travel Steam Iron

-

Crypto World1 day ago

Crypto World1 day agoE-Estate Announces 1 Year Live: Washington DC Summit as Real Estate Tokenization Enters Its Next Phase

-

Fashion6 days ago

Fashion6 days agoWhat to Know Before Buying a Curling Wand or Curling Iron

-

Politics5 days ago

Politics5 days agoWhat to expect when you’re expecting a budget

-

Tech7 days ago

Tech7 days agoAuto Enthusiast Carves Functional Two-Stroke Engine from Solid Metal

-

Tech2 days ago

Tech2 days agoTech Moves: Microsoft AI leader jumps to OpenAI; former AI2 exec joins Meta; and more

-

Tech6 days ago

Tech6 days agoGM Agrees To Pay $12.75 Million To Settle California Lawsuit Over Misuse Of Customers’ Driving Data

-

Crypto World7 days ago

Crypto World7 days agoCZ says US crypto rivals tried to block Trump pardon

-

Tech5 days ago

Tech5 days agoGM agrees to $12.75M California settlement over sale of drivers’ data

-

Crypto World4 days ago

Bitcoin Suisse expands with Digital Asset License and Investment Business Act Registration Approval in Bermuda

-

Politics4 days ago

Politics4 days agoPakistan to enter Chinese capital market as war inflation bites

-

Crypto World2 days ago

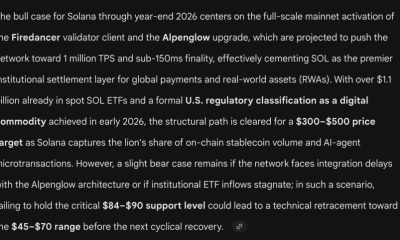

Crypto World2 days agoGoogle’s Gemini AI Predicts Incredible Solana Price by the End of 2026

-

Politics7 days ago

Politics7 days agoSky News Presenter Says Keir Starmer Is Not Waving But Drowning

-

Crypto World4 days ago

Crypto World4 days agoBitcoin Suisse expands with Digital Asset License and Investment Business Act Registration Approval in Bermuda

-

Business1 day ago

Business1 day agoH&R Real Estate Investment Trust (HR.UN:CA) Q1 2026 Earnings Call Transcript

-

Fashion7 days ago

Fashion7 days agoAmazon Sundays: Spring Glassware & Vases

-

Tech21 hours ago

Tech21 hours agoGoogle reimburses Register sources who were victims of API fraud

-

Entertainment6 days ago

Entertainment6 days agoPrime Video’s Forgotten but Brilliant 2-Part Horror Anthology Is a Perfect Binge

You must be logged in to post a comment Login