Tech

48 days of exposed projects, closed bug reports, & the structural failure of vibe coding security

Summary: Lovable, the $6.6 billion vibe coding platform with eight million users, has faced three documented security incidents exposing source code, database credentials, and thousands of user records, with the most recent BOLA vulnerability left open for 48 days after the company closed a bug bounty report without escalation. The incidents are representative of a structural problem across vibe coding: 40-62% of AI-generated code contains vulnerabilities, 91.5% of vibe-coded apps had at least one AI hallucination-related flaw in Q1 2026, and the market’s incentive structure rewards growth over security at a moment when 60% of all new code is projected to be AI-generated by year end.

Lovable, the vibe coding platform valued at $6.6 billion with eight million users, has spent the past two months dealing with security incidents that collectively exposed source code, database credentials, AI chat histories, and the personal data of thousands of users across projects built on its platform. The most recent disclosure, published on 20 April by a security researcher, revealed a broken object-level authorisation vulnerability in Lovable’s API that allowed anyone with a free account to access another user’s profile, public projects, source code, and database credentials in as few as five API calls. The researcher reported the flaw to Lovable’s bug bounty programme on 3 March. Lovable patched it for new projects but never fixed it for existing ones, marked a follow-up report as a duplicate, and closed it. As of reporting, the vulnerability had been open for 48 days.
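For readers unfamiliar with the flaw class, broken object-level authorisation can be sketched in a few lines of Python. This is an illustration only, not Lovable's code: the `Project` record, the in-memory `PROJECTS` store, and both function names are hypothetical. The insecure version returns whatever object ID the caller asks for; the second version adds the missing ownership check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    project_id: str
    owner_id: str
    source_code: str


# Hypothetical in-memory store standing in for a real database.
PROJECTS = {
    "p1": Project("p1", owner_id="alice", source_code="print('hello')"),
    "p2": Project("p2", owner_id="bob", source_code="print('secret')"),
}


class Forbidden(Exception):
    """Raised when the caller is not allowed to read the object."""


def fetch_project_insecure(requester_id: str, project_id: str) -> Project:
    # BOLA: the caller is authenticated, but ownership of the requested
    # object is never checked, so any valid ID returns any project.
    return PROJECTS[project_id]


def fetch_project(requester_id: str, project_id: str) -> Project:
    project = PROJECTS[project_id]
    # Object-level check: compare the object's owner with the caller.
    if project.owner_id != requester_id:
        raise Forbidden(f"{requester_id} may not read {project_id}")
    return project
```

In this sketch, "alice" can read "bob"'s project through the first function but is refused by the second. A real service would also need rules for shared or public objects, which the sketch leaves out.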

Lovable’s response followed a pattern that security researchers found more telling than the vulnerability itself. The company first posted on X that it “did not suffer a data breach,” calling the exposed data “intentional behaviour.” It then blamed its own documentation, saying that what “public” implies “was unclear.” It then blamed its bug bounty partner HackerOne, saying reports were “closed without escalation because our HackerOne partners thought that seeing public projects’ chats was the intended behaviour.” Later that day, it issued a partial apology acknowledging that “pointing to documentation issues alone was not enough.” Cybernews headlined its coverage: “Lovable goes on ego trip denying vulnerability, then blames others for said vulnerability.”

What was exposed

The April incident affected projects created before November 2025. The researcher demonstrated that extracting a user’s source code from Lovable’s API also yielded hardcoded Supabase database credentials embedded in that code. One affected project belonged to Connected Women in AI, a Danish nonprofit. Its exposed data contained real user records including names, job titles, LinkedIn profiles, and Stripe customer IDs, with records linked to individuals at Accenture Denmark and Copenhagen Business School. Employees at Nvidia, Microsoft, Uber, and Spotify reportedly have Lovable accounts tied to affected projects.

This was the third documented security incident involving the platform. In February, a tech entrepreneur named Taimur Khan found 16 vulnerabilities, six of them critical, in a single app hosted on Lovable and featured on its own Discover page with more than 100,000 views. The most severe was an inverted authentication logic that granted anonymous users full access while blocking authenticated users. The app, an AI-powered EdTech tool, exposed 18,697 user records including 4,538 student accounts from institutions including UC Berkeley and UC Davis, with minors likely on the platform. Khan reported his findings through Lovable’s support channel. His ticket was closed without a response.
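The inverted check Khan described can be illustrated with a deliberately small Python sketch. The app's actual code is not public in this reporting, so the function names and the use of `None` to represent an anonymous visitor are assumptions made for the example.

```python
from typing import Optional


def can_access_inverted(user_id: Optional[str]) -> bool:
    # Bug: the condition is flipped, so anonymous callers (no user ID)
    # are let in and signed-in users are turned away.
    return user_id is None


def can_access(user_id: Optional[str]) -> bool:
    # Intended behaviour: only callers with an authenticated ID pass.
    return user_id is not None
```

The two functions differ by a single negation, which is why this kind of error is easy to ship when nobody reads the generated code.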

An earlier study in May 2025 found that 170 out of 1,645 sampled Lovable-created applications had issues allowing personal information to be accessed by anyone. Approximately 70% of Lovable apps had row-level security disabled entirely.

The structural problem

Lovable is not uniquely insecure. It is representatively insecure. The platform generates full-stack applications using React, Tailwind, and Supabase in response to natural language prompts, a process the industry calls vibe coding after Andrej Karpathy coined the term in February 2025. The approach lets anyone describe an application and have it built by an AI model without writing or reviewing code. Collins English Dictionary named it Word of the Year for 2025. Gartner forecasts that 60% of all new code will be AI-generated by the end of this year.

The security data across the entire category is consistent. Between 40 and 62% of AI-generated code contains security vulnerabilities, depending on the study. AI-written code produces flaws at 2.74 times the rate of human-written code, according to an analysis of 470 GitHub pull requests. A first-quarter 2026 assessment of more than 200 vibe-coded applications found that 91.5% contained at least one vulnerability traceable to AI hallucination. More than 60% exposed API keys or database credentials in public repositories. The vulnerability classes are the same across every major vibe coding platform: disabled row-level security, hardcoded secrets, missing webhook verification, injection flaws, and broken access controls.
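Of the classes listed, missing webhook verification is among the simplest to show. The following is a minimal Python sketch of one common approach, an HMAC-SHA256 signature over the raw request body, using only the standard library. The function names are hypothetical, and real providers each define their own header names and signing schemes, so this should be read as a pattern rather than any vendor's API.

```python
import hashlib
import hmac


def sign(secret: bytes, payload: bytes) -> str:
    # Hex-encoded HMAC-SHA256 of the raw request body.
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, payload: bytes, signature_hex: str) -> bool:
    expected = sign(secret, payload)
    # compare_digest runs in constant time, which avoids leaking how many
    # leading characters of the signature were correct.
    return hmac.compare_digest(expected.encode(), signature_hex.encode())
```

The shared secret would normally be loaded from the environment or a secrets manager at runtime rather than written into source code, which is the hardcoded-secrets problem described above.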

Bolt.new ships with row-level security off by default. Cursor has had multiple CVEs patched, including a case-sensitivity bypass enabling persistent remote code execution. Researchers at Pillar Security demonstrated a “rules file backdoor” attack in which hackers inject hidden malicious instructions into configuration files used by Cursor and GitHub Copilot. A separate “Agent Commander” attack in March showed that prompt injection into AI coding agents could convert autonomous coding tools into remotely controlled malware delivery platforms. In January, the vibe-coded social network Moltbook was breached within three days of launch, exposing 1.5 million API authentication tokens and 35,000 email addresses through a misconfigured Supabase database with no row-level security.

The economic incentive problem

Security firms are raising money specifically to address the gap. Escape raised $18 million to replace manual penetration testing with AI agents that scan vibe-coded applications, citing over 2,000 high-impact vulnerabilities and hundreds of exposed secrets found in live production systems. Lovable itself partnered with Aikido to bring automated pentesting to its platform. But the fundamental incentive structure of the market works against security.

Lovable hit $4 million in annual recurring revenue in its first four weeks and $10 million in two months with a team of 15 people. It raised $200 million at a $1.8 billion valuation in July 2025 and $330 million at $6.6 billion in December, more than tripling its valuation in five months. Enterprise adoption of vibe coding grew 340% year over year. Non-technical user adoption surged 520%. Eighty-seven percent of Fortune 500 companies have adopted at least one vibe coding platform. The market rewards speed and accessibility. Security is a cost centre that slows both.

The result is a category in which the dominant platforms generate code that is insecure by default, the users generating that code lack the expertise to identify the vulnerabilities, and the platforms themselves have financial incentives to prioritise growth over remediation. Lovable’s handling of the March and April incidents illustrates the dynamic precisely: a bug bounty report was closed without escalation, a vulnerability affecting thousands of projects was patched for new users but not existing ones, and the public response cycled through denial, deflection, and a partial apology within a single day.

The regulatory gap

The EU AI Act’s high-risk obligations take effect on 2 August, requiring transparency, human oversight, and data governance for AI systems. California’s S.B. 53 and New York’s RAISE Act require frontier AI developers to publish safety frameworks and report incidents. But none of these regulations specifically addresses the security of code generated by AI models for end users, and the adoption data suggests the market is moving faster than regulators can respond. Financial services and healthcare, the two most regulated sectors, show the lowest vibe coding adoption rates at 34% and 28% respectively, which indicates that the market itself recognises the compliance gap even if regulations have not yet caught up.

As Trend Micro framed it: “The real risk of vibe coding isn’t AI writing insecure code. It’s humans shipping code they never had a chance to secure.” The 84% surge in App Store submissions driven by vibe coding tools suggests the volume of unreviewed code entering production is accelerating. Thirty-five CVEs were disclosed in March alone from AI-generated code, up from six in January, and Georgia Tech estimates the actual figure is five to ten times higher than what is detected.

Lovable is the fastest-growing software startup in history by several measures. It is also a company that closed a critical vulnerability report without reading it, left thousands of projects exposed for 48 days, and responded to public disclosure by denying a breach, blaming its documentation, blaming its bug bounty partner, and then apologising for the apology. The pattern is not unique to Lovable. It is the pattern of a category that has built extraordinary tools for creating software and almost nothing for securing it.

Tech

Mars Rover Detects Never-Before-Seen Organic Compounds In New Experiment

NASA’s Curiosity rover has identified a diverse set of organic molecules on Mars, including a nitrogen-bearing compound similar in structure to DNA precursors. The finding strengthens the case that ancient organic material can survive in the Martian subsurface, though it does not prove past life because the compounds could also come from geology or meteorites. Phys.org reports: The study was led by Amy Williams, Ph.D., a professor of geological sciences at the University of Florida and a scientist on the Curiosity and Perseverance Mars rover missions. Curiosity landed on Mars in 2012 to find evidence that ancient Mars had conditions that could support microbial life billions of years ago; the Perseverance rover, which landed in 2021, was sent to look for signs of any ancient life that might have formed.

Among the 20-plus chemicals identified by the experiment, Curiosity spotted a nitrogen-bearing molecule with a structure similar to DNA precursors — a chemical never before spotted on Mars. The rover also identified benzothiophene, a large, double-ringed, sulfurous chemical often delivered to planets by meteorites. “The same stuff that rained down on Mars from meteorites is what rained down on Earth, and it probably provided the building blocks for life as we know it on our planet,” Williams said. The findings have been published in the journal Nature Communications.

Tech

Energy in Motion: Unlocking the Interconnected Grid of Tomorrow


The U.S. power grid was not designed for this moment, when large industrial loads, data centers, renewable buildout, and extreme weather are colliding at scale. Incremental transmission upgrades may no longer be enough to maintain reliability, affordability, and flexibility across regions. This white paper invites engineers, planners, utilities, and policymakers to step back and examine what a more interconnected grid could enable—and what’s at risk if regional systems continue to operate in isolation. It offers a grounded, accessible look at how an Interregional Transmission Overlay could reshape planning assumptions and unlock new options for the decade ahead.

Tech

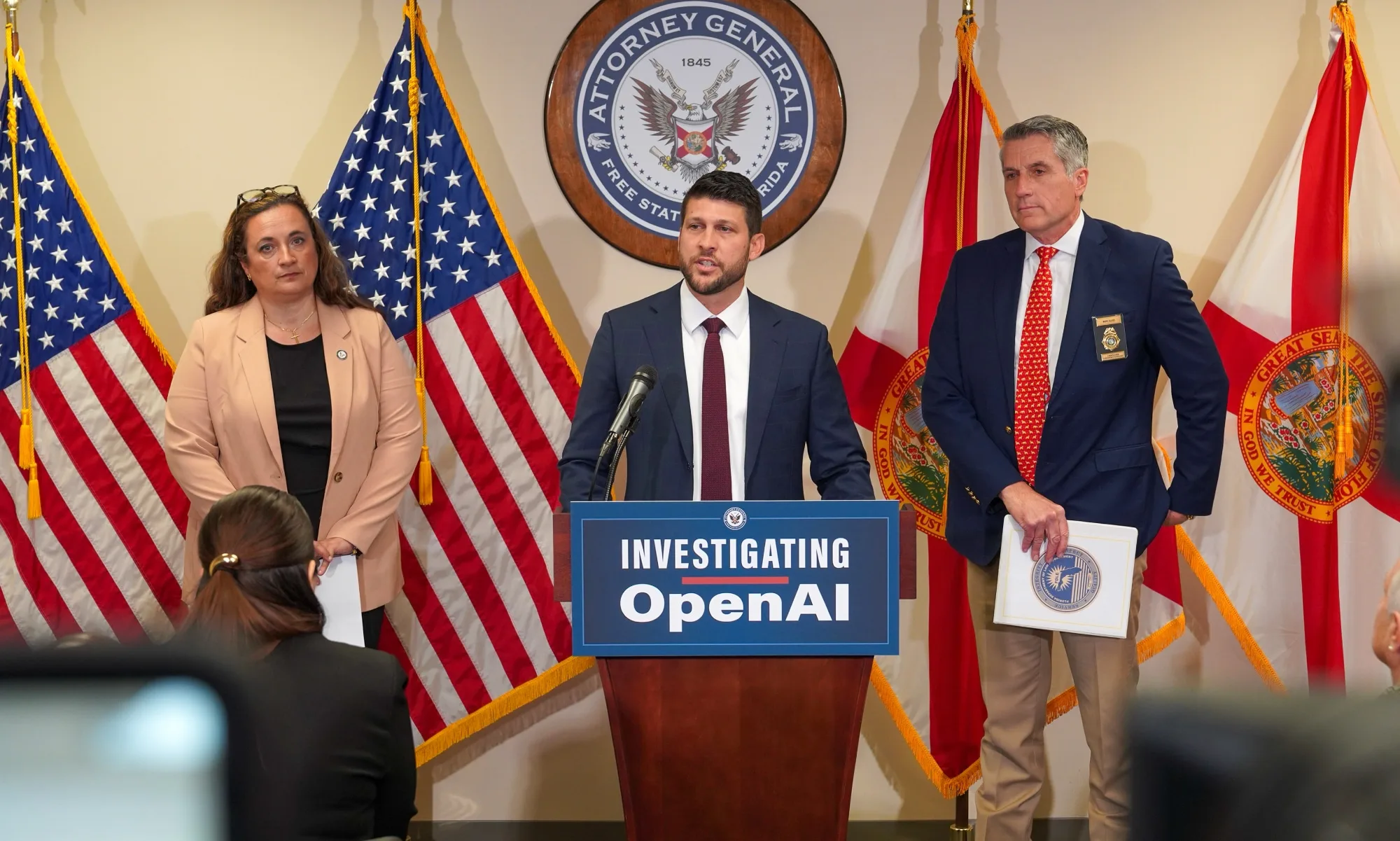

Florida opens first criminal AI probe into OpenAI

Attorney General James Uthmeier said prosecutors reviewed chat logs showing ChatGPT advised the suspect on weapons, ammunition, and timing. The probe is the first criminal investigation into an AI company over an alleged role in a mass shooting in the US.

Florida Attorney General James Uthmeier announced on Tuesday that the state’s Office of Statewide Prosecution has opened a criminal investigation into OpenAI over the alleged role of its ChatGPT chatbot in the April 2025 mass shooting at Florida State University.

The shooting, which killed two people and injured six others near the student union on FSU’s Tallahassee campus, was carried out by Phoenix Ikner, 21, a student at the university at the time. His trial is set to begin on 19 October 2026. More than 200 AI messages have been entered into evidence in the case.

Uthmeier said an initial review of Ikner’s ChatGPT chat logs showed the suspect had used the tool to seek advice before carrying out the attack, including what type of gun to use, what ammunition was appropriate, what time of day to go to campus to encounter more people, and which locations on campus would have a higher population.

“My prosecutors have looked at this and they’ve told me, if it was a person on the other end of that screen, we would be charging them with murder,” Uthmeier said at a press conference in Tampa.

“ChatGPT offered significant advice to the shooter before he committed such heinous crimes. We cannot have AI bots that are advising people on how to kill others.”

OpenAI has been subpoenaed for information about its policies and internal training materials regarding user threats of harm to others and self-harm, as well as its policies for reporting possible crimes.

The company’s spokesperson Kate Waters said: “Last year’s mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime.”

OpenAI said it had proactively shared information about the alleged shooter’s account with law enforcement after the shooting and continues to cooperate with authorities. The company has maintained that ChatGPT provided only general, factual responses based on widely available information.

A criminal investigation into an AI company over an alleged role in a mass shooting is, as multiple legal experts have noted, unprecedented in the United States.

Uthmeier had already announced a civil investigation into ChatGPT’s role in the FSU shooting, which is ongoing. Attorneys representing the family of one of the victims have announced plans to sue OpenAI.

The criminal probe is a significant escalation: it opens the question of whether an AI company could be held criminally liable for responses its system generates, a question with no established legal precedent under current US law.

The Florida investigation is part of a broader pattern of legal pressure on AI chatbot companies over alleged contributions to violent incidents.

OpenAI is already facing a lawsuit from the family of a victim critically injured in a mass shooting in British Columbia in February 2026 that killed eight people and injured dozens more. The alleged gunman, an 18-year-old, had discussed gun violence scenarios with ChatGPT and was banned from the platform months before the shooting, but reportedly evaded detection and created another account.

OpenAI said it had identified and banned the user but did not alert law enforcement at the time. Separately, a wrongful death lawsuit filed against Google in March over the suicide of a Florida man alleges that its Gemini chatbot pushed the man toward planning a mass casualty attack.

OpenAI has said it is working with mental health experts to improve how ChatGPT responds to signs of mental or emotional distress, and that it has taken steps to strengthen its safeguards after the British Columbia case, including changing when it chooses to alert law enforcement about potentially violent activities.

Tech

ChatGPT lawsuit claims it advised a shooter on how and where to strike

Florida’s attorney general has launched a criminal investigation into OpenAI, alleging that ChatGPT helped plan the mass shooting at Florida State University that killed two people last year.

According to The Washington Post, Attorney General James Uthmeier made the announcement at a news conference on Tuesday, claiming the chatbot gave tactical advice to the suspected shooter. “The chatbot advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful at short range,” Uthmeier said.

He didn’t hold back on the implications either: “If it was a person on the other end of that screen, we would be charging them with murder.” His office has also sent subpoenas to OpenAI, asking the company to explain its policies on how it handles user conversations involving threats of violence.

Is OpenAI responsible for what users do with it?

OpenAI has pushed back firmly. Spokesperson Kate Waters said, “Last year’s mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime.”

The company claims that ChatGPT provided factual responses that could be found anywhere on the internet and that it did not encourage or promote illegal activity.

Is this just the beginning?

This investigation is part of a growing concern around AI chatbots. OpenAI is already under scrutiny after a separate mass shooting in Canada and multiple lawsuits from families who claim ChatGPT contributed to the deaths of loved ones by suicide.

AI experts point out that chatbots’ guardrails are imperfect. As Carnegie Mellon professor Ramayya Krishnan put it, “The guardrails are not 100 percent effective.”

Whether OpenAI can be held criminally liable is a question for the courts to answer, but no one can deny that these AI chatbots can have severe implications for a person’s mental health and should only be used with great care.

Tech

Gemini can now look through your Google Photos to create AI images, with a few caveats

In a new Gemini feature tied to Google Photos, the company says its AI can tap into your photo library. The goal is to “use actual images of you and your loved ones” when generating AI content.


Tech

OpenAI shifts ChatGPT ads to cost-per-click as $60 CPM erodes in ten weeks and ad revenue targets hit $2.5 billion

Summary: OpenAI has shifted ChatGPT’s advertising from CPM to cost-per-click pricing, with bids between $3 and $5, after the $60 CPM it charged at launch in February eroded to as low as $25 within ten weeks. The move puts OpenAI in direct competition with Google and Meta for performance ad budgets, while Perplexity and Anthropic have positioned themselves as explicitly ad-free alternatives. OpenAI projects $2.5 billion in ad revenue for 2026, scaling to $100 billion by 2030, as the company faces projected losses of $14 billion this year and an $852 billion valuation that investors are already questioning.

OpenAI has shifted ChatGPT’s advertising model from cost-per-thousand impressions to cost-per-click, a change that puts the company in direct competition with Google and Meta for performance advertising budgets ten weeks after it first placed ads inside the chatbot. Advertisers can now set bids between $3 and $5 per click, according to screenshots of OpenAI’s new ads manager, while the minimum spend has been cut from $250,000 to $50,000. The pivot, reported by The Information on 15 April, was driven by a practical problem: the $60 CPM that OpenAI charged at launch in February had eroded to as low as $25 in some cases, making a volume-dependent impressions model unsustainable for a company projecting $14 billion in losses this year.

The ads appear at the bottom of ChatGPT responses, labelled “sponsored” and visually separated from the answer. Product-led queries can include sponsored product cards similar to those on Google Shopping. Users on the free tier and the $8-per-month Go plan see ads. Paid subscribers on the Plus, Pro, Business, Enterprise, and Education tiers do not. OpenAI says advertisers cannot see user conversations, chat history, names, email addresses, or IP addresses, and receive only aggregated performance data showing total views and clicks. Targeting is contextual, matched to the topic of the current conversation, rather than demographic or third-party data.

From experiment to ad platform in ten weeks

OpenAI first introduced advertising into ChatGPT on 9 February with a CPM model, a $200,000 to $250,000 minimum spend, and a roster of early advertisers that included Target, Ford, Adobe, Mrs. Meyer’s, and Expedia. Within two months, the pilot topped $100 million in annualised revenue with several hundred advertisers participating. OpenAI projects $2.5 billion in advertising revenue for 2026, scaling to $11 billion by 2027 and $100 billion by 2030.

The speed of the buildout has been striking. OpenAI hired Shivakumar Venkataraman, a 21-year Google veteran who led Google’s search ads business, as vice president in June 2024. Since February, the company has partnered with StackAdapt for programmatic placement, built a conversion tracking pixel supporting events including lead creation, order creation, and subscription starts, and launched a self-serve ads manager that opened to global advertisers on 15 April. International expansion to Australia, New Zealand, and Canada followed within 48 hours. OpenAI has also partnered with Smartly and Criteo to build conversational ad formats that go beyond static placements, connecting to Criteo’s network of 17,000 advertisers.

The CPC shift reflects the reality that impressions-based pricing was already softening. A leaked StackAdapt deck, shared with select buyers on 27 March, offered CPMs as low as $15, a quarter of the launch rate. Cost-per-click pricing ties revenue to demonstrable user engagement rather than passive exposure, aligning OpenAI’s model with how advertisers already evaluate performance on Google and Meta. OpenAI is also exploring action-based ad formats designed to drive purchases or app downloads directly from within a conversation.
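The relationship between the two pricing models is simple arithmetic, sketched below in Python. The dollar figures come from the reporting above; the click-through rates are not reported anywhere in the article and are derived here purely for illustration. The function names are hypothetical.

```python
def effective_cpm(cpc: float, ctr: float) -> float:
    """Revenue per 1,000 impressions when billing per click."""
    return cpc * ctr * 1000


def break_even_ctr(cpm: float, cpc: float) -> float:
    """Click-through rate at which CPC billing matches a given CPM."""
    return cpm / (cpc * 1000)


if __name__ == "__main__":
    for cpm in (60, 25, 15):
        for cpc in (3, 5):
            rate = break_even_ctr(cpm, cpc)
            print(f"${cpm} CPM at ${cpc} per click needs a CTR of {rate:.2%}")
```

At a $3 bid, matching the $60 launch CPM would take a click-through rate of 2%; at $5, it would take 1.2%. Matching the $15 rate in the StackAdapt deck would take 0.5% at $3.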

The Altman pivot

Sam Altman spent two years building a public position against advertising. He called it a “momentary industry” in 2024. At Harvard, he described ads as a “last resort.” He told interviewers that “ads-plus-AI is sort of uniquely unsettling to me” and that he liked “that people pay for ChatGPT and know the answers they’re getting are not influenced by advertisers.” In a Stratechery interview, he said Instagram changed his mind, arguing that its ads “added value” to him.

On 9 February, as ads went live, Altman posted on X: “We are starting to test ads in ChatGPT free and Go tiers. Most importantly, we will not accept money to influence the answer ChatGPT gives you, and we keep your conversations private from advertisers.” Chris Lehane, OpenAI’s vice president of global affairs, defended the move by arguing that advertising helps “expand democratic access” to ChatGPT. In response to Anthropic’s Super Bowl commercials, which ran spots titled “Deception,” “Betrayal,” “Treachery,” and “Violation” with the tagline “Ads are coming to AI. But not to Claude,” Altman argued that Anthropic “serves an expensive product to rich people” while OpenAI needs to bring AI to “billions of people who can’t pay for subscriptions.”

The competitive landscape is splitting

The major AI companies are now pursuing divergent monetisation strategies in a way that would have seemed unlikely a year ago. Google is weaving advertising into 25.5% of AI-generated search results, extending its existing ad infrastructure into AI Overviews. OpenAI is building a parallel ad platform from scratch. And the rest of the market is running the other way.

Perplexity tested sponsored follow-up questions in 2024 and 2025, then abandoned advertising altogether, citing user trust concerns. It is now targeting $500 million in subscription revenue as the explicitly ad-free alternative. Anthropic positioned Claude as ad-free before OpenAI even launched its programme, spent millions on Super Bowl spots attacking ChatGPT’s ad decision, and saw an 11% jump in daily active users and a climb to seventh on the App Store as a result.

The split matters because it tests a foundational question about AI products: whether users treat a chatbot more like a search engine, where ads are tolerated, or more like a therapist, where they are not. An Ipsos survey found that nearly two-thirds of US adults say ads in AI search make them trust the results less. A boycott campaign called QuitGPT has gathered more than 200,000 sign-ups since late January.

The privacy question

The day ads launched, Zoe Hitzig, a researcher at OpenAI, resigned. Writing in the New York Times, she described ChatGPT’s conversation logs as “an archive of human candor that has no precedent” and warned that OpenAI risks following “the same path as Facebook.” Her concern was specific: while advertisers do not see user conversations, OpenAI must process conversation content internally to serve contextually relevant ads. Queries about health conditions, financial distress, relationship problems, and personal struggles are being analysed and categorised by the system to determine which ads to show.

OpenAI says it does not build audience segments based on demographics or third-party data and does not show ads to users it identifies as under 18. It updated its privacy policy to coincide with the ads expansion. But the structural tension remains: the same conversations that users treat as private are the signal that makes the ads work.

Why now

The financial pressure behind the pivot is not subtle. OpenAI generated $13 billion in revenue in 2025, a 236% increase from $3.7 billion in 2024, and is currently producing roughly $2 billion per month. It closed a $122 billion funding round at an $852 billion valuation on 31 March, led by Amazon, Nvidia, and SoftBank. But the company is projected to lose approximately $14 billion in 2026 on compute, research, and infrastructure costs. It does not expect to reach profitability until 2030. Internal targets include an IPO filing in the second half of this year and a 2027 listing at a potential valuation of up to $1 trillion.

Advertising is the fastest path to closing the gap between revenue and expenditure without raising subscription prices or conducting another funding round. The US market for AI search advertising is projected to grow from $1 billion in 2025 to $25.9 billion by 2029, representing 13.6% of all search ad spending. OpenAI’s $2.5 billion target for this year would make it a significant player in that market immediately.

The question is whether cost-per-click changes the calculation for advertisers who found the CPM model expensive and difficult to measure. CNBC reported in March that the test was “moving too slowly to meet the hype” and that OpenAI “can’t prove the ads are working” due to the absence of mature measurement tools. CPC at least gives advertisers a metric they understand: someone clicked. Whether that click leads to a purchase, and whether the broader disruption of AI search makes ChatGPT a necessary advertising channel regardless of its current limitations, will determine whether OpenAI’s advertising ambitions prove as transformative as its AI ones or as fleeting as the CPM rates that collapsed in ten weeks.

Tech

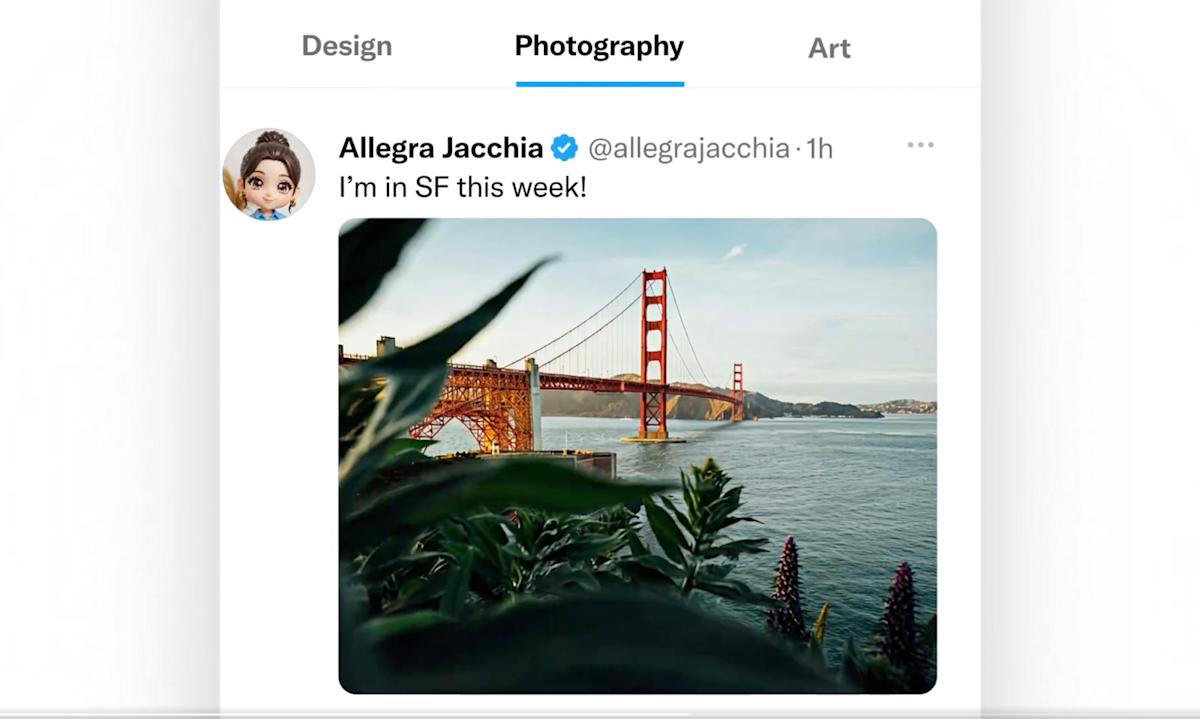

X finally adds custom timelines

Nikita Bier, X’s head of product, has announced the launch of custom timelines, which let you curate what you see on your feed based on your topics of interest. He called the update “one of the biggest changes to X” and a “huge undertaking” that took the team “many months” to develop. The feature lets you pin specific topics to your home tab, so you can switch from one to the other to see the latest discussions about your interests and hobbies.

Bier said that X’s custom timelines is “powered by Grok’s understanding of every post with the algorithm’s personalization.” You have 75 topics to choose from, including food, art, photography, business, finance, movies and TV. As you’d expect, the personalization aspect of the feature works better if it’s a topic you already engage with regularly. X’s new feature is similar to Bluesky’s and Threads’ custom feeds, which also allow you to pin topic-based timelines to the home screens of the apps, and which their users have been enjoying since 2023 and 2024, respectively.

At the moment, X’s custom timelines is still in its early access phase and is only available to Premium subscribers on iOS. It will be rolling out to Premium users on Android “very soon,” as well. Bier has also announced that X has released a tool to snooze topics on the For You tab. With the tool, you’ll be able to hide certain topics, such as politics or sports, for 24 hours from your feed. It’s now available for Premium users on iOS and the web.

Tech

Amazon Prime Broadcast Fails Completely During Several Minutes Of NBA Playoff Game

from the prime-time dept

With the streaming world turning into a wild, chaotic, fractured mess, there is no better example of how terrible this can all be than live sports. We’ve already seen all kinds of issues among streaming services when it comes to sports. Buffering during live games pisses people off. Exclusivity deals worked out among several services for a single league can make finding where a game is being shown a Sherlock-ian experience. Local blackout rules abound and suck for the consumer.

But if there is one thing a streaming service cannot do, it’s got to be buying the exclusive rights to important games and then throwing “technical difficulties” at the viewer. And that’s exactly what happened during part of an overtime period in an NBA playoff game between the Hornets and the Heat. For several minutes at the start of the overtime period, the stream simply cut out.

As reported by ESPN, Prime Video started showing a message that read “technical difficulties” seconds after cutting off the game’s commentator in the middle of a sentence. Viewers missed a Hornets possession that included a score by LaMelo Ball. By the time the stream came back online, 22.1 seconds of playing time had passed, per ESPN, and viewers were dismayed.

“Tell me the game didn’t just cut off?!!? Am I trippin?? WTH,” LeBron James, a Los Angeles Lakers player who previously won two championships with the Heat, said, adding a face-planting emoji, on X.

Prime Video’s fumble is made worse by the fact that the streaming service had exclusive rights to air the game. The only other way to experience the game was in person or by listening to select radio stations.

Imagine someone signed up for Prime because of this deal with the NBA. Sure, that isn’t going to be a huge percentage of the viewership, but it won’t be zero percent of it, either. To have the stream cut out in the opening minutes of overtime is going to be incredibly frustrating.

It’s also worth noting that more traditional broadcasts have had equipment failures too, but they don’t have the resources Amazon has. And, frankly, Amazon’s streaming service doesn’t have the best reputation to begin with.

The latter point is especially concerning because, after four years of this, viewers are still complaining about audio-syncing problems on Prime Video this season. We’ve experienced this firsthand at Ars Technica and have heard commentators announce a completed three-point shot before the stream shows it happening.

“The entire year the audio has been a split second ahead of the video on half of the Amazon games we’ve watched,” Bill Simmons, a former sportswriter and current host of The Bill Simmons Podcast, said in today’s episode: “The three-pointer’s halfway toward the basket. It’s like, ‘BANG! It’s good!’ And you hear the crowd, and it’s, like, the ball hasn’t even gone in yet. How have we not figured this out yet? You guys, [Amazon], have 8 kajillion dollars.”

At some point, the NBA itself is going to have to step in here, because its reputation is going to take a hit along with Amazon’s. The league risks alienating fans who are pissed off that it foisted on them broadcast partners that apparently can’t deliver a product of the quality of cable TV, of all entities. And I refuse to believe that these streaming contracts don’t come with contractual requirements for quality of service.

Streaming is both the present and the future. It isn’t going away. Neither are live sports. This has to be figured out and delivered in a way that ensures fans don’t miss important parts of games. The alternative is lost fans for the leagues, and I can promise you that won’t be stood for.

Filed Under: amazon prime, basketball, live sports, streaming, technical difficulties

Companies: amazon, nba

Tech

SpaceX is working with Cursor and has an option to buy the startup for $60B

SpaceX said it has struck a deal with Cursor to develop a next-generation “coding and knowledge work AI,” which includes a surprising provision — an option to buy the popular software development platform for $60 billion later this year.

Partnering with and potentially purchasing a leader in the hottest AI product category can only be seen in the context of SpaceX’s much-anticipated public offering. Investors seeking more value in the IPO might see its engagement with Cursor as another way to extract value from Elon Musk’s increasingly sprawling tech conglomerate.

The deal won’t shock those who follow the industry closely. Last week, it was reported that xAI would begin renting computing power from its data centers to Cursor, with the coding startup using tens of thousands of xAI chips to train its latest AI model. And last month, two of Cursor’s most senior engineering leaders, Andrew Milich and Jason Ginsberg, left the company to join xAI, where both report directly to Musk.

SpaceX described the partnership as a project combining Cursor’s “product and distribution to expert software engineers” with SpaceX’s Colossus supercomputer, which the company claims has the equivalent compute power of a million Nvidia H100 chips.

SpaceX also said that at some undisclosed point later this year, it will either pay Cursor $10 billion for its work or acquire the company for $60 billion. Last week, TechCrunch reported that Cursor was eyeing a $50 billion valuation in an upcoming private fundraising round. That figure itself reflects an astonishing series of leaps. Cursor was valued at just $2.5 billion in January of last year, climbed to $9 billion by last May, and was assigned a $29.3 billion post-money valuation when it closed on $2.3 billion in Series D funding in November.

Either figure would represent a significant expense for SpaceX, which is widely seen to be losing money following the acquisition of xAI and the social media network X and is planning extensive capital investment. The brief statement did not say if either deal could be paid in SpaceX stock.

In the meantime, the move could shore up weaknesses at each company, but it also reveals them. Neither Cursor nor xAI has proprietary models that can match the leading offerings from Anthropic and OpenAI — the same companies now competing directly with Cursor for the developer market.

Cursor still uses and sells access to Claude and GPT models even as both firms roll out their own coding tools, an awkward arrangement that this new SpaceX partnership may be designed to eventually escape.

Tech

EV batteries that can charge in just over six minutes are here

CATL held its Super Technology Day in Beijing, and if you care about EVs at all, this one is worth paying attention to. As reported by PR Newswire, the company unveiled several new battery technologies, and the headline act is the third-generation Shenxing Superfast Charging Battery.

The numbers are pretty incredible. Charging from 10% to 80% takes 3 minutes and 44 seconds. Charging from 10% to 98% takes 6 minutes and 27 seconds. Even at minus 30 degrees Celsius, the battery can charge from 20% to 98% in about 9 minutes.

For context, that is faster than BYD’s blade battery and Geely’s fast charging technologies. Hell, most people take more time to fill a gas tank and grab a snack than this battery needs to charge.

CATL is also promising that the battery will retain over 90% of its capacity after 1,000 full charging cycles, which addresses the usual trade-off between fast charging and battery longevity.

What else did CATL announce?

CATL did not stop at one announcement. The third-generation Qilin battery targets premium EVs with a cell energy density of 280 Wh/kg and a claimed range of 1,000 km. That should remove any range anxiety EV owners experience.

The entire battery pack weighs 625 kg, which is 255 kg lighter than comparable systems. According to the company, the weight reduction alone improves braking distance, acceleration, and even tyre life by over 30%.

For hybrid drivers, the second-generation Freevoy Super Hybrid battery extends pure electric range to up to 600 km, with total vehicle range exceeding 2,000 km. And for those in extreme climates, the new Naxtra Sodium-ion battery is finally moving into large-scale production by the end of 2026.

What does this mean for you?

Charging and range anxiety have always been the biggest arguments against switching to an EV. CATL is making it increasingly hard to use that excuse. With fast-charging times approaching gas-station speeds and batteries that can go 1,000 kilometers on a single charge and handle extreme cold, the gap between EVs and traditional cars is getting smaller fast.

CATL plans to build 4,000 integrated charge and swap stations across China by the end of 2026. China has come to the forefront of EV technology advancements, and it’s no wonder US customers want access to Chinese cars.
