Microsoft’s professional network becomes the latest name on a list that now includes Meta, Amazon, Oracle, and IBM, even as the same companies are guiding $725 billion of AI capital spending this year.

LinkedIn is cutting roughly 5% of its staff, the latest reduction at a Microsoft-owned business and the most recent entry in a year-long Big Tech contraction that has now displaced more than 100,000 workers across the sector.

Chief executive Dan Shapero, who took over from Ryan Roslansky in late April when Roslansky moved into a new AI role inside Microsoft, set out the cuts in a memo to employees, citing the need to operate “more profitably” and to reinvent how the company works with smaller, more agile teams. Bloomberg reported the memo on Wednesday.

LinkedIn employed roughly 17,500 staff at the start of 2026, so a 5% reduction implies a cut of about 875 roles.

The company has not confirmed an absolute number, but multiple outlets briefed by sources put the figure at about 875 jobs, with engineering, product, marketing, and the Global Business Organization carrying most of the impact.

The bigger number is the one that frames everything else. By 13 May, the global technology sector had announced more than 100,000 layoffs across some 250 separate events, an average of roughly 880 a day, according to industry trackers.

The TrueUp layoffs tracker had logged 286 events affecting 128,270 workers, the highest reading since the 2023 contraction.

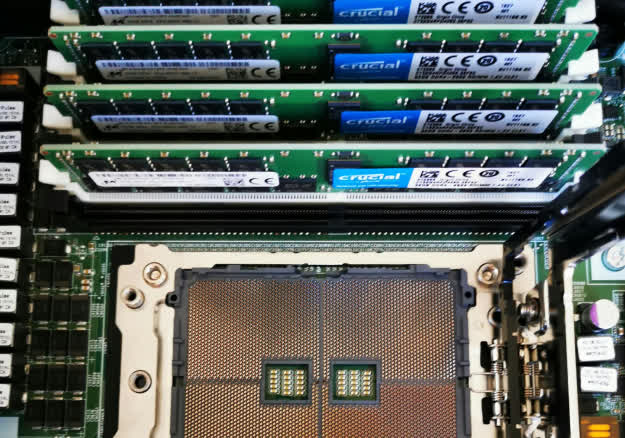

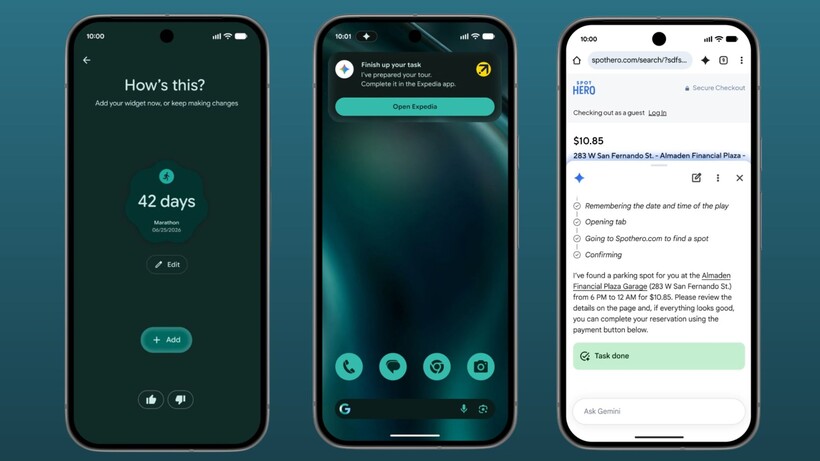

The defining feature is the divergence between payroll and capital expenditure. Amazon, Microsoft, Alphabet, and Meta are collectively guiding to roughly $725 billion of capital spending in 2026, almost all of it directed at AI infrastructure, GPUs, and data centres.

That figure is up from $410 billion in 2025, an increase of roughly 77%, and is rising faster than at any point since the cloud build-out of the late 2010s. Headcount, meanwhile, is going in the other direction at the same firms.

The biggest single tranche still ahead this week is Meta’s. The company will begin companywide layoffs on 20 May, cutting approximately 8,000 employees, or about 10% of its 78,865-person workforce, with further reductions planned for the second half of 2026.

Microsoft has taken a different approach. Rather than making involuntary cuts, the company in April opened a voluntary-separation programme to around 8,750 US employees, roughly 7% of its domestic headcount, structured under a “Rule of 70” formula in which years of service plus age must total at least 70.

It is the first such programme in the company’s 51-year history. Final notifications went out on 7 May, with a 30-day decision window. LinkedIn’s cuts now layer on top of those Microsoft moves.

Amazon has been quieter but is on a larger absolute trajectory. The company confirmed in January that it was cutting 16,000 corporate roles, bringing total reductions since October 2025 to roughly 30,000, the largest workforce contraction in its history.

Chief executive Andy Jassy framed the cuts as a flattening of layers built up during the 2020-2022 hyper-growth phase, not a direct AI substitution.

The smaller players are following the same pattern at a different scale. Oracle has cut roughly 30,000 positions, around 18% of its global workforce. IBM, Salesforce, Cisco, and SAP have all confirmed cuts this year, and defence-adjacent contractors tied to federal technology procurement have shed several thousand roles over the same period.

For LinkedIn, the framing is narrower. Shapero’s memo pointed to slower revenue growth and an organisational flattening rather than an AI substitution, and the cuts are part of a wider Microsoft-group rebalance that began with the April Rule-of-70 programme.

LinkedIn’s revenue still grew 12% year on year in the most recent quarter, which makes the cut a profitability call, not a top-line one.

Whether the AI-substitution reading holds across the rest of the sector will probably be settled by the second-half 2026 round of disclosures, particularly Meta’s.

Until then, the running 2026 total is the only honest summary of the labour story: more than 100,000 jobs out, $725 billion of capex going in, and a widening gap between where the money sits and where the people do.
