Crypto World

What Can We Expect from Digital Healthcare in 2021?

by Gonzalo Wangüemert Villalba

4 September 2025

Introduction

The open-source AI ecosystem reached a turning point in August 2025 when Elon Musk’s company xAI released Grok 2.5 and, in the same month, OpenAI launched two new models under the names GPT-OSS-20B and GPT-OSS-120B. While both announcements signalled a commitment to transparency and broader accessibility, the details of these releases highlight strikingly different approaches to what open AI should mean. This article explores the architecture, accessibility, performance benchmarks, regulatory compliance and wider industry impact of these three models. The aim is to clarify whether xAI’s Grok or OpenAI’s GPT-OSS family currently offers more value for developers, businesses and regulators in Europe and beyond.

What Was Released

Grok 2.5, described by xAI as a 270 billion parameter model, was made available through the release of its weights and tokenizer. These files amount to roughly half a terabyte and were published on Hugging Face. Yet the release lacks critical elements such as training code, detailed architectural notes or dataset documentation. Most importantly, Grok 2.5 comes with a bespoke licence drafted by xAI that has not yet been clearly scrutinised by legal or open-source communities. Analysts have noted that its terms could be revocable or carry restrictions that prevent the model from being considered genuinely open source. Elon Musk promised on social media that Grok 3 would be published in the same manner within six months, suggesting this is just the beginning of a broader strategy by xAI to join the open-source race.

By contrast, OpenAI unveiled GPT-OSS-20B and GPT-OSS-120B on 5 August 2025 with a far more comprehensive package. The models were released under the widely recognised Apache 2.0 licence, which is permissive, business-friendly and qualifies as a free and open-source licence for the purposes of the European Union’s AI Act.
OpenAI shared not only the weights but also architectural details, training methodology, evaluation benchmarks, code samples and usage guidelines. This represents one of the most transparent releases ever made by the company, which has historically faced criticism for keeping its frontier models proprietary.

Architectural Approach

The architectural differences between these models reveal much about their intended use. Grok 2.5 is a dense transformer with all 270 billion parameters engaged in computation. Without detailed documentation, it is unclear how efficiently it handles scaling or what kinds of attention mechanisms it employs.

Meanwhile, GPT-OSS-20B and GPT-OSS-120B use a Mixture-of-Experts design. In practice this means that although the models contain 21 and 117 billion parameters respectively, only a small subset of those parameters is activated for each token: GPT-OSS-20B activates 3.6 billion and GPT-OSS-120B just over 5 billion. This architecture leads to far greater efficiency, allowing the smaller of the two to run comfortably on devices with only 16 gigabytes of memory, including Snapdragon laptops and consumer-grade graphics cards. The larger model requires 80 gigabytes of GPU memory, placing it in the range of high-end professional hardware, yet it remains far more efficient than a dense model of similar size. This is a deliberate choice by OpenAI to ensure that open-weight models are not only theoretically available but practically usable.

Documentation and Transparency

The difference in documentation further separates the two releases. OpenAI’s GPT-OSS models include explanations of their sparse attention layers, grouped multi-query attention, and support for context lengths of up to 128,000 tokens. These details allow independent researchers to understand, test and even modify the architecture. By contrast, Grok 2.5 offers little more than its weight files and tokenizer, making it effectively a black box.
From a developer’s perspective this is crucial: having access to weights without knowing how the system was trained or structured limits reproducibility and hinders adaptation. Transparency also affects regulatory compliance and community trust, making OpenAI’s approach significantly more robust.

Performance and Benchmarks

Benchmark performance is another area where the GPT-OSS models shine. According to OpenAI’s technical documentation and independent testing, GPT-OSS-120B rivals or exceeds the reasoning ability of the company’s o4-mini model, while GPT-OSS-20B achieves parity with o3-mini. On benchmarks such as MMLU, Codeforces, HealthBench and the 2024 and 2025 AIME mathematics tests, the models perform strongly, especially considering their efficient architecture.

GPT-OSS-20B in particular impressed researchers by outperforming much larger competitors such as Qwen3-32B on certain coding and reasoning tasks while using less energy and memory. Academic studies published on arXiv in August 2025 reported that the model achieved nearly 32 per cent higher throughput and more than 25 per cent lower energy consumption per 1,000 tokens than rival models. Interestingly, one paper noted that GPT-OSS-20B outperformed its larger sibling GPT-OSS-120B on some human evaluation benchmarks, suggesting that capability does not always scale linearly with the size of a sparse model.

In terms of safety and robustness, the GPT-OSS models again appear carefully designed. They perform comparably to o4-mini on jailbreak resistance and bias testing, though they display higher hallucination rates on simple factual question-answering tasks. Publishing these results allows researchers to target weaknesses directly, which is part of the value of an open-weight release. Grok 2.5, however, lacks publicly available benchmarks altogether. Without independent testing, its actual capabilities remain uncertain, leaving the community with only Musk’s promotional statements to go by.
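The efficiency argument running through this comparison rests on the Mixture-of-Experts design of GPT-OSS. The sketch below is a generic, minimal illustration of top-k expert routing, not OpenAI’s implementation: the eight toy experts, the sine-based router and k=2 are hypothetical choices made for readability. It then computes the share of parameters active per token from OpenAI’s published figures (3.6B of 21B, and 5.1B of 117B, the "just over 5 billion" cited above).

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and renormalise their weights."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    weights = softmax([logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x: Vector, experts: Sequence[Expert],
                router: Callable[[Vector], List[float]], k: int) -> Tuple[Vector, List[int]]:
    """Weighted sum of the k routed experts' outputs; other experts are never evaluated."""
    out = [0.0] * len(x)
    used = []
    for idx, w in top_k_route(router(x), k):
        y = experts[idx](x)
        out = [o + w * yi for o, yi in zip(out, y)]
        used.append(idx)
    return out, used


# Hypothetical toy layer: 8 experts that each scale the input by a different factor.
experts: List[Expert] = [lambda x, s=s: [s * v for v in x] for s in range(1, 9)]


def router(x: Vector) -> List[float]:
    # Hypothetical router: one score per expert, depending on the token vector.
    return [math.sin(i + sum(x)) for i in range(len(experts))]


output, used = moe_forward([0.5, -1.0, 2.0], experts, router, k=2)
print("experts used for this token:", used)

# Share of parameters active per token, from OpenAI's published figures (billions).
for name, active, total in [("gpt-oss-20b", 3.6, 21.0), ("gpt-oss-120b", 5.1, 117.0)]:
    print(f"{name}: {active / total:.1%} of parameters active per token")
```

The final loop prints 17.1% and 4.4%. Per-token compute therefore resembles that of a much smaller dense model, although all experts must still be held in memory, which is why GPT-OSS-120B still needs an 80 GB GPU.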
Regulatory Compliance

Regulatory compliance is a particularly important issue for organisations in Europe under the EU AI Act. The legislation requires providers of general-purpose AI models to supply detailed technical documentation, information on training and testing datasets, and usage reporting, while models released under genuinely free and open-source licences benefit from lighter documentation duties. For models that exceed systemic risk thresholds, such as those trained with more than 10²⁵ floating point operations, further obligations apply, including risk assessment and registration. Grok 2.5, by virtue of its vague licence and lack of documentation, appears non-compliant on several counts. Unless xAI publishes more details or adapts its licensing, European businesses may find it difficult or legally risky to adopt Grok in their workflows.

GPT-OSS-20B and 120B, by contrast, seem carefully aligned with the requirements of the AI Act. Their Apache 2.0 licence qualifies as a free and open-source licence under the Act, their documentation meets transparency demands, and OpenAI has signalled a commitment to provide usage reporting. From a regulatory standpoint, OpenAI’s releases are the safer bet for organisations operating in, or selling into, the EU.

Community Reception

The reception from the AI community reflects these differences. Developers welcomed OpenAI’s move as a long-awaited recognition of the open-source movement, especially after years of criticism that the company had become overly protective of its models. Some users, however, expressed frustration with the mixture-of-experts design, reporting that it can lead to repetitive tool-calling behaviours and less engaging conversational output. Yet most acknowledged that for tasks requiring structured reasoning, coding or mathematical precision, the GPT-OSS family performs exceptionally well.

Grok 2.5’s release was greeted with more scepticism.
While some praised Musk for at least releasing weights, others argued that without a proper licence or documentation it was little more than a symbolic gesture designed to signal openness while avoiding true transparency.

Strategic Implications

The strategic motivations behind these releases are also worth considering. For xAI, releasing Grok 2.5 may be less about immediate usability and more about positioning in the competitive AI landscape, particularly against Chinese developers and American rivals. For OpenAI, the move appears to be a balancing act: maintaining leadership in proprietary frontier models like GPT-5 while offering credible open-weight alternatives that address regulatory scrutiny and community pressure. This dual strategy could prove effective, enabling the company to dominate both commercial and open-source markets.

Conclusion

Ultimately, the comparison between Grok 2.5 and GPT-OSS-20B and 120B is not merely technical but philosophical. xAI’s release demonstrates a willingness to participate in the open-source movement but stops short of true openness. OpenAI, on the other hand, has set a new standard for what open-weight releases should look like in 2025: efficient architectures, extensive documentation, clear licensing, strong benchmark performance and regulatory compliance. For European businesses and policymakers evaluating open-source AI options, GPT-OSS currently represents the more practical, compliant and capable choice.

While both xAI and OpenAI contributed to the momentum of open-source AI in August 2025, the details reveal that not all openness is created equal. Grok 2.5 stands as an important symbolic release, but OpenAI’s GPT-OSS family sets the benchmark for practical usability, compliance with the EU AI Act, and genuine transparency.

Ethereum price forms double top as market reacts to Iran tensions, will it crash?

Ethereum price retraced some of its gains from Monday after U.S. President Donald Trump brushed off an Iranian proposal to end the war and warned that strikes would target key infrastructure if Tehran fails to reopen the Strait of Hormuz by the deadline.

Summary

- Ethereum price fell 3.4% below $2,100 as Trump rejected Iran’s proposal and warned of strikes if the Strait of Hormuz is not reopened.

- Risk sentiment weakened across crypto and equities, with Asian markets flat to lower and investors pulling capital amid geopolitical uncertainty.

- A breakdown below $2,000 would confirm a double top pattern, and a slide to $2,040 could trigger liquidations of up to $1.41B in long positions.

According to data from crypto.news, Ethereum (ETH) price fell 3.4%, slipping below the $2,100 support, after reports emerged that Trump will not call off strikes on Iranian infrastructure unless Tehran commits to reopening the Strait of Hormuz, a key maritime corridor that handles roughly 20% of global oil trade. This came after the U.S. President dismissed a proposal from the Iranian leadership to end the war while keeping the Strait closed.

Fears of a very aggressive strike on Iran are likely causing investors to withdraw capital as they await the conclusion of this geopolitical standoff. Investors are withdrawing from both crypto and traditionally safe-haven assets such as gold and silver, a sign of broader derisking across global markets as uncertainty intensifies.

Asian benchmarks such as Japan’s Nikkei 225, Hong Kong’s Hang Seng, and the Shanghai Composite were flat or lower as uncertainty weighed on the region.

Beyond these factors weighing on risk sentiment, leveraged traders face the prospect of about $1.41 billion in long positions being liquidated should Ethereum price slide further to $2,040, which may be adding to the selling pressure.

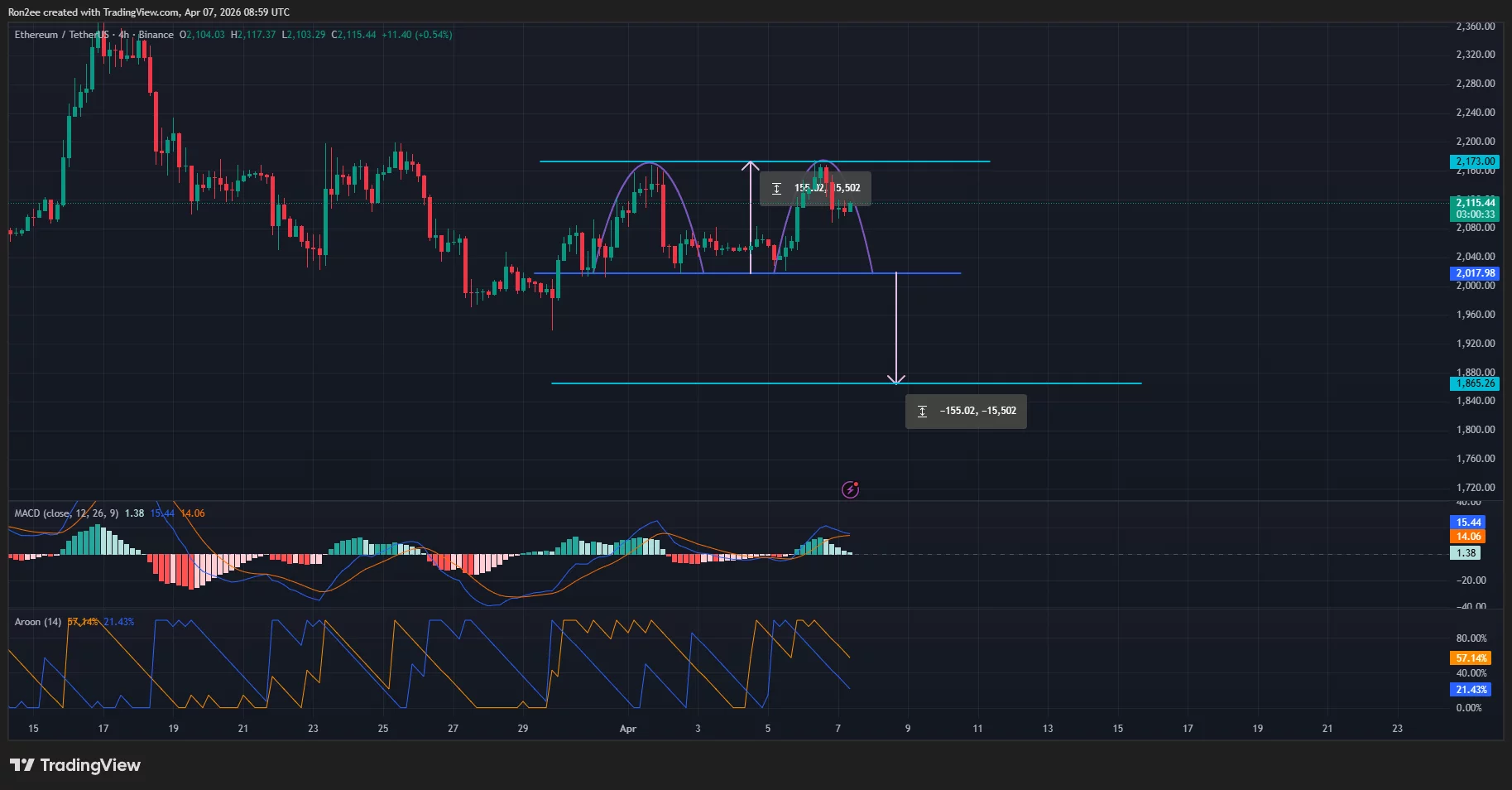

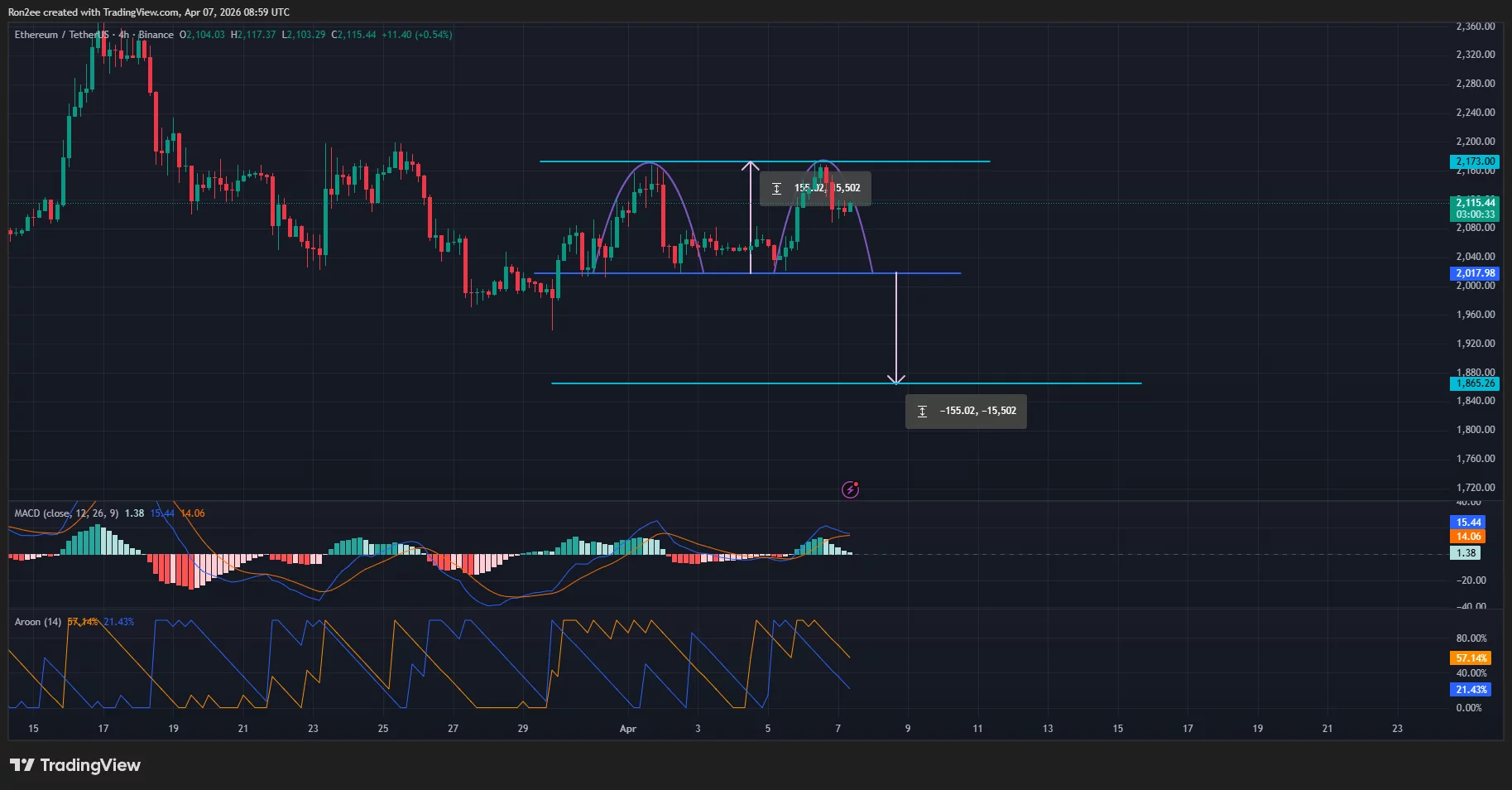

On the 4-hour chart, Ethereum price has been forming a double top pattern, one of the most common bearish signals in technical analysis. The pattern’s neckline lies at around $2,017.

If Ethereum price falls below the $2,000 support, it would confirm the double top pattern and could slide further to under $1,900. That target comes from subtracting the pattern’s height (the distance from the twin peaks down to the neckline) from the neckline level.
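The measured-move rule described above can be written out as a small calculation. In this minimal sketch the $2,017 neckline comes from the article, while the $2,150 peak is a hypothetical value chosen for illustration. Working backwards, any peak above $2,134 (2 × 2,017 − 1,900) puts the projected target below $1,900.

```python
def double_top_target(peak: float, neckline: float) -> float:
    """Measured-move target: project the pattern's height below the neckline."""
    if peak <= neckline:
        raise ValueError("peak must be above the neckline")
    height = peak - neckline
    return neckline - height


def min_peak_for_target(neckline: float, target: float) -> float:
    """Smallest peak whose measured move reaches the given target."""
    return 2 * neckline - target


NECKLINE = 2017.0           # from the article
HYPOTHETICAL_PEAK = 2150.0  # assumption for illustration only

print(double_top_target(HYPOTHETICAL_PEAK, NECKLINE))  # 1884.0
print(min_peak_for_target(NECKLINE, 1900.0))           # 2134.0
```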

Technical indicators show that bears are starting to take control. Notably, the Aroon Up and Down lines have pointed sharply downwards, suggesting a strong downtrend. The MACD lines are close to forming a bearish crossover, a sign that selling pressure is mounting.
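A MACD bearish crossover is the MACD line (a fast EMA minus a slow EMA of closing prices) dropping below its signal line (an EMA of the MACD line itself). The sketch below uses the conventional 12/26/9 periods and seeds each EMA with the first value, a simplification; charting platforms differ in seeding and inputs, so their readings will not match exactly. The price series is synthetic, not actual ETH data.

```python
from typing import List, Sequence, Tuple


def ema(values: Sequence[float], period: int) -> List[float]:
    """Exponential moving average, seeded with the first value."""
    alpha = 2.0 / (period + 1)
    out = [float(values[0])]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes: Sequence[float], fast: int = 12, slow: int = 26,
         signal: int = 9) -> Tuple[List[float], List[float]]:
    """Return the MACD line and its signal line."""
    macd_line = [f - s for f, s in zip(ema(closes, fast), ema(closes, slow))]
    return macd_line, ema(macd_line, signal)


def bearish_crossovers(macd_line: Sequence[float], signal_line: Sequence[float]) -> List[int]:
    """Indices where MACD moves from at/above the signal line to below it."""
    return [i for i in range(1, len(macd_line))
            if macd_line[i - 1] >= signal_line[i - 1] and macd_line[i] < signal_line[i]]


# Synthetic series: a steady climb followed by a pullback.
closes = [2000 + 5 * i for i in range(40)] + [2195 - 8 * i for i in range(1, 21)]
m, s = macd(closes)
print("bearish crossover at bar(s):", bearish_crossovers(m, s))
```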

For now, the immediate support level that decides the trend lies at $2,000. Failure to hold this level would confirm both the double top pattern and the long liquidation cascade.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

NVDA Shares Approach Key Resistance

Nvidia’s chip production is concentrated with Taiwanese contractor TSMC, increasing the company’s exposure to geopolitical risks and US export policy. Restrictions on shipments to China, including decisions related to H20-series chips, have led to significant financial adjustments, which the market estimates at several billion dollars, linked to inventory and expected demand.

At the same time, the revenue structure remains resilient — around 69% of income is generated in the US domestic market, where hyperscalers continue to expand purchases of data centre accelerators. In the fourth quarter of fiscal 2026, revenue reached $68.1 billion, marking a 73% year-on-year increase, while full-year revenue totalled $215.9 billion (+65%).
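The growth rates quoted above also let readers back out the prior-year figures they are measured against: divide the current value by (1 + growth). This short check uses only the article’s numbers. Because the percentages are rounded, the implied bases are approximate.

```python
def implied_prior(current: float, growth_pct: float) -> float:
    """Prior-period value implied by a current value and its growth rate."""
    return current / (1 + growth_pct / 100)


q4 = implied_prior(68.1, 73)    # fourth quarter, $bn
fy = implied_prior(215.9, 65)   # full year, $bn
print(f"implied Q4 FY2025 revenue: ${q4:.1f}bn")
print(f"implied FY2025 revenue: ${fy:.1f}bn")
```

These come out at roughly $39.4 billion and $130.8 billion, in line with the $39.3 billion and $130.5 billion Nvidia reported for the corresponding fiscal 2025 periods.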

In late March, the company announced an expansion of its strategic partnership with Marvell Technology, including a $2 billion investment and integration via the NVLink Fusion ecosystem, strengthening its position in the physical AI and robotics segments. At the same time, the broader macro backdrop remains subdued.

Technical Outlook

After reaching an all-time high near 210 in late October 2025, the stock entered a corrective downtrend. The correction bottomed at 165 on 30 March 2026, followed by a rebound, although prices remain around 177, showing no clear signs of a sustained recovery. The volume profile adds further clarity.

The highest concentration of trading activity during the observed period is located in the 181–183 range, where the Point of Control (POC) is positioned — this is the level where market participants were most active over several months, making it a key reference zone. Above current levels, the volume profile remains dense up to 189, which aligns with local highs from the second half of 2025 and acts as the nearest resistance.
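A volume profile groups traded volume by price level, and the Point of Control is the level with the most volume. This minimal sketch assigns each bar’s whole volume to a single price (for example its close), a simplification; charting platforms typically spread a bar’s volume across its high-low range or use tick data. The bars are hypothetical, not Nvidia’s actual trading history.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def volume_profile(bars: Iterable[Tuple[float, float]], bin_size: float) -> Dict[float, float]:
    """Sum volume into fixed-width price bins keyed by each bin's lower edge."""
    profile: Dict[float, float] = defaultdict(float)
    for price, volume in bars:
        lower_edge = math.floor(price / bin_size) * bin_size
        profile[lower_edge] += volume
    return dict(profile)


def point_of_control(profile: Dict[float, float]) -> float:
    """Price bin with the highest traded volume."""
    return max(profile, key=profile.get)


# Hypothetical (price, volume in millions) bars.
bars = [(181.6, 310.0), (182.3, 250.0), (181.2, 190.0), (177.4, 107.0), (188.9, 120.0)]
profile = volume_profile(bars, bin_size=1.0)
print("POC bin starts at", point_of_control(profile))
```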

The RSI stands at 49.37, remaining in neutral territory and offering no clear directional bias. The latest session’s volume, at 107.11 million, indicates continued market participation. However, it is worth noting that the most pronounced spikes in volume and volatility typically occur around earnings releases — and with the next report scheduled for May 2026, the stock may continue to consolidate within the current range.
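RSI compares the size of recent gains with recent losses on a 0 to 100 scale, so a reading near 50, like the 49.37 quoted above, means the two have roughly balanced. The sketch uses Wilder’s smoothing with the conventional 14-period setting. The price series is synthetic, and platforms may differ slightly in how they seed the averages.

```python
from typing import Sequence


def wilder_rsi(closes: Sequence[float], period: int = 14) -> float:
    """Relative Strength Index of the latest close, using Wilder's smoothing."""
    if len(closes) < period + 1:
        raise ValueError("need at least period + 1 closing prices")
    changes = [b - a for a, b in zip(closes, closes[1:])]
    gains = [max(c, 0.0) for c in changes]
    losses = [max(-c, 0.0) for c in changes]
    # Seed with simple averages over the first `period` changes.
    avg_gain = sum(gains[:period]) / period
    avg_loss = sum(losses[:period]) / period
    # Then apply Wilder's smoothing to each later change.
    for gain, loss in zip(gains[period:], losses[period:]):
        avg_gain = (avg_gain * (period - 1) + gain) / period
        avg_loss = (avg_loss * (period - 1) + loss) / period
    if avg_loss == 0:
        return 100.0  # no losses in the window
    rs = avg_gain / avg_loss
    return 100.0 - 100.0 / (1.0 + rs)


# Synthetic closes drifting sideways around 177 (hypothetical, not NVDA data).
closes = [177.0, 178.2, 176.9, 177.5, 178.8, 177.1, 176.4, 177.9,
          178.3, 177.0, 176.2, 177.6, 178.1, 177.3, 177.8, 176.9]
print(f"RSI(14): {wilder_rsi(closes):.2f}")
```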

Summary

NVIDIA remains in a prolonged consolidation phase, supported by strong operational performance but weighed by a subdued macro environment. The volume profile highlights significant activity above current price levels, while RSI remains neutral. Market participants appear to be assessing incoming signals without rushing to conclusions.

This article represents the opinion of the Companies operating under the FXOpen brand only. It is not to be construed as an offer, solicitation, or recommendation with respect to products and services provided by the Companies operating under the FXOpen brand, nor is it to be considered financial advice.

Anthropic secures multi-gigawatt TPU deal with Google and Broadcom

Anthropic has struck a major infrastructure deal with Google and Broadcom to secure multi-gigawatt computing capacity, as demand for its Claude AI models continues to climb.

Summary

- Anthropic has secured access to roughly 3.5 gigawatts of TPU compute through an expanded partnership with Google and Broadcom.

- Most of the new infrastructure will be built in the United States.

- The AI firm’s annualized revenue has surpassed $30 billion.

Details disclosed in a recent securities filing show that Broadcom will support future iterations of Google’s tensor processing units, with part of that capacity allocated to Anthropic. The arrangement is expected to unlock roughly 3.5 gigawatts of compute, with deployments set to begin scaling from 2027.

Anthropic said its annualized revenue has now crossed $30 billion, up sharply from around $9 billion at the end of last year. The company also reported that more than 1,000 enterprise customers are each spending over $1 million annually, a figure that has doubled within weeks.

“We are making our most significant compute commitment to date to keep pace with our unprecedented growth,” Anthropic’s chief financial officer Krishna Rao said, adding that the partnership would “build the capacity necessary to serve the exponential growth we have seen in our customer base.”

Most of the new infrastructure will be based in the United States, extending an earlier pledge to invest $50 billion into domestic compute capacity. The expansion also builds on Anthropic’s existing relationships with Google Cloud and Broadcom, following earlier TPU capacity announcements.

For Broadcom, the latest deal adds to a quickly growing list of AI infrastructure partnerships. During the company’s March earnings call, Broadcom CEO Hock Tan said it was already supplying roughly 1 gigawatt of compute for Anthropic and added that this was expected to surpass 3 gigawatts by 2027.

Wall Street estimates suggest the partnership could translate into significant earnings. Analysts at Mizuho have projected that Broadcom may generate about $21 billion in AI-related revenue from Anthropic in 2026, potentially doubling to $42 billion the following year.

At the same time, competition across AI infrastructure remains intense. AI developers, including Anthropic and its peers, continue to rely on a mix of hardware platforms, including Nvidia GPUs, Google TPUs, and custom chips.

Broadcom is also working with OpenAI on separate silicon efforts, while cloud providers such as Amazon, Google, and Microsoft remain central to delivering that compute at scale.

Anthropic noted that its Claude models are now deployed across all three major cloud ecosystems, allowing workloads to be distributed depending on performance needs.

Planet Labs (PL) Stock Slides 2.6% Following CFO’s $7M Share Sale Despite Analyst Upgrades

Key Takeaways

- Planet Labs (PL) shares declined 2.6% during Monday’s session, reaching an intraday low of $34.21 before settling near $34.96.

- The company’s CFO and President, Ashley F. Johnson, offloaded 200,000 shares on April 2, generating approximately $7 million in proceeds.

- Fourth quarter fiscal 2026 revenue reached $86.82M—representing a 41.1% annual increase and exceeding analyst projections—though EPS significantly underperformed at ($0.48) versus the ($0.05) consensus.

- Multiple Wall Street firms raised their price targets, with Needham and Wedbush both moving to $40 and Citi to $35.

- The satellite imaging company also disclosed plans to redeem all outstanding public warrants on April 27, 2026, at a price of $0.01 per warrant.

Planet Labs (PL) experienced a 2.6% decline on Monday as market participants weighed a substantial insider transaction from a senior executive against the company’s latest quarterly performance.

Ashley F. Johnson, who serves as both CFO and President, divested 200,000 Class A shares on April 2, collecting approximately $7 million from the transaction. The sale occurred in two separate blocks—51,460 shares were sold at prices ranging from $34.57 to $34.94, while another 148,540 shares moved at prices between $34.585 and $35.87.
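The two blocks can be checked against the headline figures. Multiplying each block by the low and high ends of its reported price range bounds the gross proceeds. This ignores commissions and the exact volume traded at each price, which the ranges alone do not reveal.

```python
# (shares, lowest price, highest price) for each reported block
blocks = [(51_460, 34.57, 34.94), (148_540, 34.585, 35.87)]

total_shares = sum(shares for shares, _, _ in blocks)
low = sum(shares * lo for shares, lo, _ in blocks)
high = sum(shares * hi for shares, _, hi in blocks)

print(f"shares sold: {total_shares:,}")
print(f"gross proceeds between ${low:,.0f} and ${high:,.0f}")
```

The blocks sum to 200,000 shares, and the proceeds fall between about $6.92 million and $7.13 million, consistent with the reported figure of approximately $7 million.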

Additionally, on April 6, Johnson executed a transfer of 525,708 shares to The Johnson Joint Revocable Trust, an entity controlled by Johnson and her spouse as co-trustees.

Insider selling has been notably active in recent months. During the previous quarter, company insiders sold a combined 218,566 shares valued at approximately $5.9 million. Board member Vijaya Gadde also sold 20,000 shares in January.

Such consistent selling activity often triggers caution among investors—regardless of whether underlying business metrics are strengthening.

Top Line Strength, Bottom Line Weakness

Planet Labs delivered fourth quarter fiscal 2026 revenue of $86.82 million, comfortably surpassing the $78.17 million consensus forecast. This represents a robust 41.1% increase compared to the prior year period.

However, the earnings picture proved less encouraging. The company reported an EPS loss of ($0.48), substantially worse than the ($0.05) loss analysts had anticipated. Planet Labs continues to operate at a loss, reflected in a negative net margin of 80.22% and a negative return on equity of 69.61%.

Looking ahead, management issued Q1 fiscal 2027 revenue guidance approximately 5% above Street expectations—a factor that contributed to maintaining positive analyst sentiment despite the earnings shortfall.

Goldman Sachs raised its price target to $18 while maintaining a Neutral rating. Needham lifted its target to $40, citing revenue and EPS beats of 11% and $0.02 respectively, and reaffirmed its Buy rating. Wedbush similarly raised its target from $30 to $40 with an Outperform rating. Citi raised its target from $30 to $35 while maintaining a Buy rating.

Technical indicators show the stock’s 50-day moving average positioned at $26.21, with the 200-day average at $19.64—both substantially below Monday’s closing price.

Satellite Deployments and AI Integration

From an operational standpoint, Planet Labs recently delivered three Pelican satellites to Vandenberg Space Force Base in California, preparing for an upcoming SpaceX rideshare launch. These satellites are equipped with NVIDIA’s Jetson AI platform, enabling onboard data processing capabilities.

Warrant Redemption Notice

The company has declared its intention to redeem all outstanding public warrants for Class A common stock on April 27, 2026, at a redemption price of $0.01 per warrant.

Trading volume on Monday registered approximately 12.5 million shares—roughly 11% below the average daily volume of 14.1 million.

Planet Labs currently maintains a market capitalization of $12.10 billion, operates with a debt-to-equity ratio of 2.37, and exhibits a beta of 1.83.

6 profitable AI crypto quant trading bots of 2026 offering lucrative options

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

AI-powered quant trading bots lead crypto evolution in 2026, automating strategies and boosting efficiency.

Summary

- AI trading bots dominate 2026 crypto markets, automating strategies and driving passive income adoption

- BitsStrategy gains attention for high-frequency trading, using AI to capture rapid market fluctuations

- Automated quant trading tools rise as investors shift from manual trading to data-driven execution

The cryptocurrency market has continued to evolve in 2026, and AI-powered quant trading bots are now at the forefront of this transformation. These bots use cutting-edge artificial intelligence to analyze massive amounts of market data, execute trades at high speed, and maximize returns. For those looking to tap into crypto’s profit potential without constant manual trading, these AI-driven bots can automate trading strategies and generate passive income on their behalf.

In this article, we’ll highlight the 6 most profitable AI crypto quant trading bots of 2026, each offering innovative features and proven strategies that cater to a variety of trading needs.

Why turn to AI quant trading bots for profit?

AI trading bots bring several significant advantages to crypto traders:

- Data-Driven Precision: These bots make decisions based on vast amounts of real-time market data, ensuring that trades are backed by analysis rather than instinct.

- Round-the-Clock Trading: AI bots never rest, executing trades 24/7 so that lucrative market movements are never missed.

- Emotional Discipline: Bots make decisions based on logic and strategy, avoiding emotional biases that can often lead to costly trading mistakes.

- Ease of Use: With many bots requiring no technical skills, even beginners can take advantage of their profit-making potential.

Let’s explore the top 6 AI crypto quant trading bots that are delivering profitable results in 2026.

1. BitsStrategy: Mastering the art of high-frequency trading

Overview: BitsStrategy leads the pack with its specialized focus on high-frequency trading (HFT). Using AI, it can execute a large number of trades in a fraction of a second, capitalizing on minute price fluctuations for consistent profits.

Why it stands out:

- Optimized for high-frequency trades and rapid decision-making

- Continuously adapts to market conditions with real-time strategy adjustments

- Advanced risk management ensures minimal losses

Why choose BitsStrategy?

For traders who want to harness the power of high-frequency trading, BitsStrategy is unbeatable. Its speed and automation allow it to capitalize on even the smallest market movements, creating a reliable stream of income for users who don’t want to manually monitor every trade.

2. Pionex: Capitalizing on arbitrage opportunities

Overview: Pionex excels in arbitrage trading, where its AI bots track price differences between crypto exchanges and exploit these discrepancies for profit. This highly profitable strategy requires little input from the trader, making it perfect for those seeking a passive income stream.
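The catch with cross-exchange arbitrage is that the price gap has to exceed trading costs. This minimal sketch uses hypothetical prices and an assumed 0.1% fee on each side to show how a small gap can turn into a loss. It ignores withdrawal fees, transfer delays and slippage, and it is not a description of how Pionex’s bots actually work.

```python
def arbitrage_pnl(buy_price: float, sell_price: float, qty: float,
                  buy_fee: float, sell_fee: float) -> float:
    """Net result of buying on one exchange and selling on another, after percentage fees."""
    cost = buy_price * qty * (1 + buy_fee)
    proceeds = sell_price * qty * (1 - sell_fee)
    return proceeds - cost


def breakeven_sell_price(buy_price: float, buy_fee: float, sell_fee: float) -> float:
    """Lowest sell price at which the trade stops losing money."""
    return buy_price * (1 + buy_fee) / (1 - sell_fee)


FEE = 0.001  # assumed 0.1% per side
print(arbitrage_pnl(69_000, 69_100, 1.0, FEE, FEE))  # $100 gap
print(arbitrage_pnl(69_000, 69_300, 1.0, FEE, FEE))  # $300 gap
print(breakeven_sell_price(69_000, FEE, FEE))
```

Under these assumptions the $100 gap loses about $38, the $300 gap nets about $162, and the break-even sell price is roughly $69,138.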

Why it stands out:

- Built-in arbitrage bot that scans multiple exchanges for profitable gaps

- Seamless integration with crypto exchanges for instant trade execution

- Low fees and high liquidity ensure optimal profits

Why choose Pionex?

For those looking for a low-risk, high-return strategy, Pionex’s arbitrage trading bot allows them to take advantage of price differences across exchanges, offering a stable source of income with minimal involvement.

3. 3Commas: The portfolio powerhouse

Overview: 3Commas isn’t just a trading bot — it’s an entire portfolio management system that uses AI to manage multiple assets simultaneously. It combines powerful tools like Dollar-Cost Averaging (DCA) and Grid Trading to ensure consistent profits, even in volatile markets.
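Dollar-cost averaging means investing a fixed amount at regular intervals, so more units are bought when prices are low. The resulting average cost is the total spent divided by the units acquired, which equals the harmonic mean of the purchase prices. This is a minimal sketch with hypothetical purchases, not 3Commas’ implementation.

```python
from typing import Sequence, Tuple


def dca_average_cost(buys: Sequence[Tuple[float, float]]) -> float:
    """Average cost per unit for (amount_spent, price) purchases."""
    total_spent = sum(amount for amount, _ in buys)
    total_units = sum(amount / price for amount, price in buys)
    return total_spent / total_units


# Hypothetical: $100 invested at each of four prices.
buys = [(100.0, 50.0), (100.0, 40.0), (100.0, 25.0), (100.0, 50.0)]
avg = dca_average_cost(buys)
simple_mean = sum(p for _, p in buys) / len(buys)
print(f"average cost {avg:.2f} vs simple mean price {simple_mean:.2f}")
```

The DCA average (about 38.10) sits below the simple mean price (41.25) because the cheapest purchase bought the most units.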

Why it stands out:

- Robust portfolio management tools that automatically balance investments

- DCA and Grid bots for steady, long-term profit generation

- Multi-exchange support and seamless integration across platforms

Why choose 3Commas?

3Commas is the go-to platform for portfolio management, making it ideal for traders who want to automate their strategies across multiple exchanges while maintaining a diversified portfolio. The AI tools are designed for long-term success, ensuring a reliable source of passive income.

4. Cryptohopper: Tailoring trading strategy

Overview: Cryptohopper offers the ultimate in customizability. The platform allows users to create personalized trading strategies while still leveraging the power of AI. Whether users are beginners or pros, Cryptohopper adapts to their needs with an easy-to-use interface and powerful optimization tools.

Why it stands out:

- Customizable AI strategies for a personalized trading experience

- AI-powered optimization of existing strategies to improve performance

- Access to a strategy marketplace to purchase or sell pre-built strategies

Why choose Cryptohopper?

For traders who want to customize their strategy while benefiting from AI-powered execution, Cryptohopper provides the perfect blend of control and automation. It is ideal for those who want to experiment with their own strategies and leverage AI to maximize profits.

5. TradeSanta: Simplifying crypto trading for beginners

Overview: TradeSanta is designed for those who want simplicity in their trading experience. Its intuitive platform allows users to set up pre-defined strategies like Grid Trading and DCA, making it ideal for beginners who want to profit without getting into complex setups.
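Grid trading places a ladder of buy orders below the current price and sell orders above it, inside a chosen range. This hypothetical sketch builds either evenly spaced (arithmetic) or constant-ratio (geometric) levels, two spacing schemes commonly offered by grid bots. The range, grid count and price are illustrative assumptions, and order sizing and fees are left out; it is not TradeSanta’s implementation.

```python
from typing import List, Tuple


def grid_levels(lower: float, upper: float, n_grids: int, geometric: bool = False) -> List[float]:
    """Return n_grids + 1 price levels from lower to upper inclusive."""
    if not 0 < lower < upper or n_grids < 1:
        raise ValueError("need 0 < lower < upper and at least one grid")
    if geometric:
        ratio = (upper / lower) ** (1 / n_grids)
        return [lower * ratio ** i for i in range(n_grids + 1)]
    step = (upper - lower) / n_grids
    return [lower + step * i for i in range(n_grids + 1)]


def split_orders(levels: List[float], price: float) -> Tuple[List[float], List[float]]:
    """Buy orders go below the current price, sell orders above it."""
    buys = [lv for lv in levels if lv < price]
    sells = [lv for lv in levels if lv > price]
    return buys, sells


levels = grid_levels(1_800, 2_200, 8)
buys, sells = split_orders(levels, price=2_030)
print("buy at:", buys)
print("sell at:", sells)
```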

Why it stands out:

- Pre-set strategies such as Grid Trading and DCA for beginners

- Cloud-based interface, accessible from any device

- Minimal setup required with automated execution

Why choose TradeSanta?

TradeSanta is perfect for beginners who want to start trading without dealing with complicated configurations. With its easy-to-use interface and pre-set strategies, it makes earning passive income from crypto trading as simple as clicking a button.

6. Coinrule: No-code strategy building

Overview: Coinrule’s standout feature is its no-code strategy builder, allowing users to create personalized AI trading strategies without any technical expertise. The platform’s intuitive interface is accessible to both novice and experienced traders.

Why it stands out:

- No-code strategy builder allows for fully customized trading strategies

- Real-time AI-powered execution of personalized plans

- Backtesting features to test strategies before going live

Why choose Coinrule?

For those who want to create their own tailored trading strategies but don’t have coding skills, Coinrule makes it easy to build and automate their trading plans. It’s perfect for those who want to take a more hands-on approach to their crypto trading while benefiting from AI-powered execution.
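Backtesting, listed among Coinrule’s features, replays a rule against historical prices before any money is at risk. The sketch below tests one IF-THEN rule of the kind a no-code builder produces (buy when the price falls to one threshold, sell when it rises to another). The thresholds and prices are hypothetical, fees and slippage are ignored, and it does not reproduce Coinrule’s own backtester.

```python
from typing import Sequence


def backtest_threshold_rule(closes: Sequence[float], buy_at_or_below: float,
                            sell_at_or_above: float, cash: float = 1_000.0) -> float:
    """Replay a simple all-in/all-out threshold rule and return final portfolio value."""
    units = 0.0
    for price in closes:
        if units == 0 and price <= buy_at_or_below:
            units, cash = cash / price, 0.0   # enter the position
        elif units > 0 and price >= sell_at_or_above:
            cash, units = units * price, 0.0  # exit the position
    return cash + units * closes[-1]          # mark any open position to the last close


prices = [100, 96, 90, 94, 103, 110, 104, 91, 88, 95]
final = backtest_threshold_rule(prices, buy_at_or_below=91, sell_at_or_above=108)
print(f"final value: {final:.2f} from 1000.00")
```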

Conclusion

These 6 most profitable AI crypto quant trading bots of 2026 offer a range of strategies, from high-frequency trading to arbitrage and portfolio management, ensuring that there is a solution for every type of trader. Whether someone is a seasoned professional or a beginner just starting out, these bots provide the tools and automation needed to profit from the dynamic crypto market.

- BitsStrategy offers high-frequency trading for rapid profits.

- Pionex specializes in arbitrage opportunities across exchanges.

- 3Commas provides a comprehensive portfolio management system.

- Cryptohopper allows for customizable AI strategies.

- TradeSanta simplifies trading for beginners.

- Coinrule enables personalized strategies without coding.

By leveraging these AI trading bots, anyone can automate their trading, reduce risk, and increase profitability in 2026’s fast-moving cryptocurrency market. Choose the bot that best fits a particular trading style and start profiting today!

Disclosure: This content is provided by a third party. Neither crypto.news nor the author of this article endorses any product mentioned on this page. Users should conduct their own research before taking any action related to the company.

US Spot Bitcoin ETFs Hit Strongest Gains Since February

US-listed spot Bitcoin exchange-traded funds (ETFs) have renewed the pace of inflows, recording their largest daily flows in weeks.

Spot Bitcoin (BTC) ETFs posted $471 million in inflows on Monday, the largest daily inflow since Feb. 25, when the funds attracted $507 million, according to SoSoValue.

The inflows came as the Bitcoin price briefly approached $70,000 before retreating below $69,000, according to CoinGecko data.

The volatility occurred amid ongoing geopolitical pressure as well as renewed concerns over Bitcoin’s quantum resistance, while the Crypto Fear & Greed Index remained in “Extreme Fear” at 13.

BlackRock’s IBIT leads the inflows at $182 million

BlackRock’s iShares Bitcoin Trust ETF (IBIT) led the inflows with about $182 million, followed by the Fidelity Wise Origin Bitcoin Fund (FBTC) with $147 million, according to Farside data.

The ARK 21Shares Bitcoin ETF (ARKB) ranked third with nearly $119 million, marking its largest daily inflow since July 10, 2025.

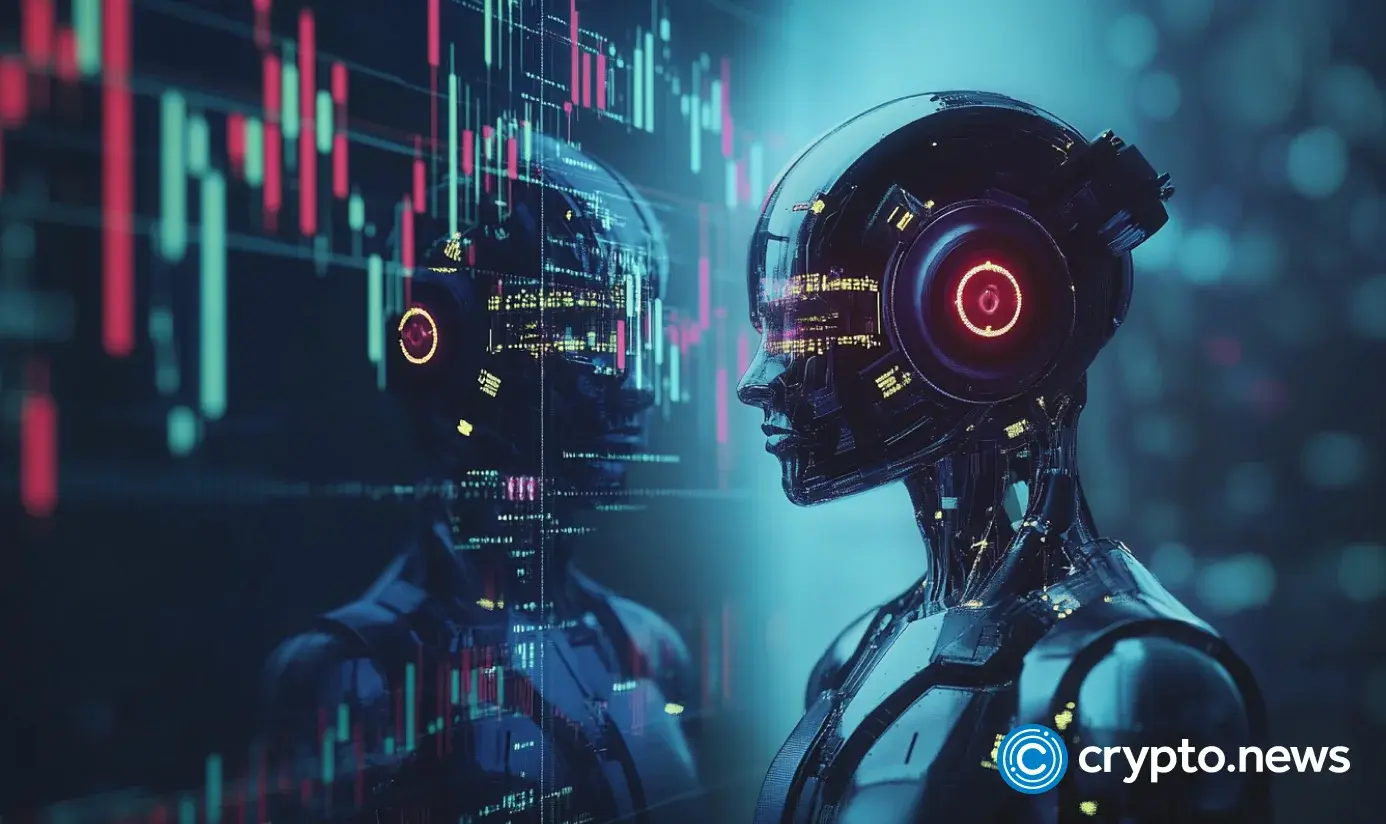

On Monday, blockchain analytics platform Arkham observed that ETF outflows slowed almost to a halt last week, with major issuers selling only about $16.6 million in Bitcoin. ARK Invest’s ARKB ETF bought the most BTC, about $34 million worth over the week, it said.

Over the first three trading sessions of April, US spot Bitcoin ETFs recorded about $307 million in net inflows, bringing total assets under management (AUM) back above $90 billion.

Related: Strategy adds $330M BTC as paper losses top $14.5B in Q1

In March, Bitcoin ETFs posted $1.3 billion in inflows, marking the first monthly gain after outflows of $1.61 billion in January and $207 million in February.

Ether ETFs record $120 million in inflows

US spot Ether (ETH) ETFs followed the recovery in sentiment on Monday, recording $120 million in inflows and offsetting $78 million in outflows from the prior two trading sessions.

Ether ETFs had posted three consecutive months of net outflows, totaling about $770 million over the period.

Other altcoin ETFs saw muted activity, with XRP (XRP) ETFs recording no inflows on Monday, while Solana (SOL) ETFs posted about $247,000 in inflows.

Crypto World

Whale transfers $20M in Bitcoin to Binance as price remains shaky

A whale has transferred nearly $20 million worth of Bitcoin to Binance as the flagship crypto continues to struggle.

Summary

- A Bitcoin whale moved around 300 BTC, worth roughly $20 million, to Binance while still holding about 200 BTC.

- The wallet built its position in early 2025 at an average price of $97,541, so the holder would realize a loss by selling at current prices.

Data from Arkham Intelligence shows that an address labeled “bc1q…kp4n” sent around 300 BTC, valued at over $20 million, to a Binance deposit address on Tuesday. As of press time, the wallet still retains roughly 200 BTC, which is worth about $13.75 million based on prices at the time of writing.

The wallet appears relatively new compared with others seen in recent months, in which decade-old holdings suddenly became active to execute similar transfers.

On-chain data indicates that the address accumulated around 513 BTC between January and March 2025. At the time, the stash was worth close to $50 million, pointing to an average acquisition price of roughly $97,541 per coin.
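A quick way to see how far underwater this position is: multiply the reported holdings by the average cost and by the current price. The minimal Python sketch below assumes the full 513 BTC is valued together (a simplification, since the wallet now holds roughly 200 BTC after the transfer) and uses the "near $69,000" price cited in the article rather than a live quote.

```python
# Rough cost-basis check for the whale wallet described above.
# Inputs are the approximate figures quoted in the article; SPOT_USD is the
# "near $69,000" level mentioned, not a live market price.
BTC_ACCUMULATED = 513   # BTC accumulated between January and March 2025
AVG_COST_USD = 97_541   # reported average acquisition price per BTC
SPOT_USD = 69_000       # approximate BTC price at the time of writing


def position_summary(btc: float, avg_cost: float, spot: float) -> dict:
    """Return cost basis, market value and unrealized P&L for a position."""
    cost_basis = btc * avg_cost
    market_value = btc * spot
    pnl = market_value - cost_basis
    return {
        "cost_basis": cost_basis,
        "market_value": market_value,
        "unrealized_pnl": pnl,
        "pnl_pct": 100 * pnl / cost_basis,  # negative means a loss
    }


summary = position_summary(BTC_ACCUMULATED, AVG_COST_USD, SPOT_USD)
for key, value in summary.items():
    print(f"{key}: {value:,.2f}")
```

With these inputs the cost basis comes to about $50.0 million, matching the "close to $50 million" figure, and the unrealized loss on the full stash is roughly 29%.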

So far, it remains unclear whether the transfer was made with the intent to sell, but movements to exchanges are often linked to potential selling activity. Given that the wallet is currently sitting at a loss, with Bitcoin trading near $69,000, the move could be aimed at limiting further downside.

Alternatively, the transfer could simply reflect portfolio restructuring or internal fund management rather than an immediate sale.

Still, given recent whale activity, it would not come as a surprise if the holder were preparing to sell. Bitcoin is down more than 45% from its all-time high and has faced intense volatility in recent sessions.

Last month a dormant wallet moved 2,100 BTC, worth around $147.7 million, after more than 13 years of inactivity. In another case, roughly $33 million in Bitcoin was sent to Binance by a separate whale.

This comes as the Bitcoin price has remained under pressure from bearish macro catalysts, particularly rising tensions between the U.S. and Iran. The conflict has pushed oil prices higher and aggravated inflation concerns in the U.S. and across global markets. As long as these tensions persist, large holders may be inclined to remain on the sidelines.

On the other hand, institutions and treasury firms like Strategy have continued buying the flagship crypto.

Crypto World

Apple (AAPL) Stock Holds Strong Despite CEO’s $16.5M Share Sale

Key Takeaways

- Tim Cook offloaded $16.5M worth of Apple shares on April 2, selling 5,087 shares between $251.25 and $256.00 each

- Apple stock has declined approximately 4.6% in 2026, hovering near $255, marginally trailing the broader S&P 500

- The company unveiled the MacBook Neo on March 4 with a groundbreaking $599 price tag — every variant sold out within 16 days

- BofA estimates a $32B total addressable market for the MacBook Neo in 2026

- Analyst Wamsi Mohan from Bank of America maintains his Buy recommendation with a $320 target on Apple shares

Apple (AAPL) shares are hovering around $255, reflecting a year-to-date decline of approximately 4.6%.

The tech giant’s chief executive has been methodically reducing his holdings. Tim Cook sold $16.5 million in Apple shares in an April 2 transaction, unloading 5,087 shares at prices ranging from $251.25 to $256.00. The sales were executed under a prearranged Rule 10b5-1 trading plan, a mechanism that schedules insider sales in advance and is designed to address insider-trading concerns.

Despite the recent sale, Cook maintains a substantial position with 3.28 million Apple shares, currently valued at approximately $848 million. While he’s trimming his stake, the CEO remains heavily invested in the company’s future.

Speculation about Cook’s potential departure from the CEO role recently surfaced. He addressed the rumors directly in a recent media appearance, noting that he has given no public indication of leaving the position he has held since 2011.

Apple’s sluggish 2026 performance isn’t happening in isolation. The entire Magnificent 7 cohort is experiencing negative returns this year. Microsoft has plummeted nearly 23%, Tesla is down 21.8%, Meta has declined 12.2%, and Amazon has fallen 7.8%. Against this backdrop, Apple’s 4.6% retreat appears relatively modest.

What distinguishes Apple from other tech behemoths isn’t aggressive AI infrastructure investment — it’s the strategic absence of it. While cloud providers are expected to deploy nearly $700 billion toward AI capabilities in 2025, Apple’s capital expenditure plans hover around $14 billion. The company is wagering that AI technology will become commoditized. Whether this thesis proves correct remains uncertain, but the approach maintains lean operational costs.

MacBook Neo Achieves Instant Sellout

The standout product launch this quarter is undoubtedly the MacBook Neo, introduced March 4 at $599. It is the most affordable laptop in Apple’s history, priced lower than the Apple Watch Ultra 3. The device directly targets the $500–$1,000 notebook category, a segment where Apple previously had a minimal presence, with just 0.6% market share in 2025.

The launch timing appears strategic. Millions of aging PCs cannot be upgraded to Windows 11, creating a significant hardware replacement wave. Dell COO Jeffrey Clarke noted in late 2025 that approximately 500 million Windows 11-compatible PCs had yet to be upgraded, while another 500 million machines could not run the operating system at all.

On March 20, Apple CEO Cook shared on X: “Mac just had its best launch week ever for first-time Mac customers.” Within the same timeframe, all eight MacBook Neo configurations sold out through Apple’s online store until the following month, as reported by 9to5Mac.

BofA Projects $32B Market Opportunity

Bank of America’s Wamsi Mohan analyzed the Neo’s revenue potential. His research team calculated a 2026 total addressable market of $32 billion, derived from 2025 notebook shipment volumes in the $300–$800 price range, reduced by 10% for 2026 and then multiplied by Apple’s competitive education average selling price of $499.

Assuming 10% market penetration and a 19% operating margin, Mohan projects the Neo could contribute an additional $0.03 to earnings per share. While this appears modest in isolation, the strategic value lies in ecosystem expansion: Apple’s iPhone installed base encompasses roughly 1.5 billion devices, compared with just 260 million Macs. Moving iPhone users onto the Mac strengthens overall platform engagement.
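The sizing logic described in the BofA analysis can be reproduced in a few lines. The Python sketch below is illustrative only: the $32 billion TAM, 10% volume reduction, $499 ASP, 10% penetration and 19% operating margin come from the article, while the diluted share count and tax rate are hypothetical placeholders, not BofA's inputs. It backs out the 2025 unit volume implied by the TAM and converts the operating-profit assumption into a per-share figure.

```python
# Sketch of the TAM and EPS arithmetic described in the BofA analysis.
# TAM = 2025 notebook units ($300-$800 band) x 0.90 (2026 adjustment) x $499 ASP.
# SHARES_DILUTED and TAX_RATE are HYPOTHETICAL placeholders, not BofA figures.
ASP_USD = 499          # Apple's education average selling price
VOLUME_ADJ = 0.90      # 2025 volumes reduced by 10% for 2026
TAM_USD = 32e9         # BofA's 2026 total addressable market
PENETRATION = 0.10     # assumed Neo share of the TAM
OP_MARGIN = 0.19       # assumed operating margin
SHARES_DILUTED = 15e9  # hypothetical diluted share count
TAX_RATE = 0.16        # hypothetical effective tax rate


def implied_units(tam_usd: float) -> float:
    """Back out the 2025 unit volume that would produce the given TAM."""
    return tam_usd / (VOLUME_ADJ * ASP_USD)


def incremental_eps(tam_usd: float) -> float:
    """Operating profit from the Neo, after tax, spread over the share count."""
    operating_profit = tam_usd * PENETRATION * OP_MARGIN
    return operating_profit * (1 - TAX_RATE) / SHARES_DILUTED


units = implied_units(TAM_USD)
eps = incremental_eps(TAM_USD)
print(f"implied 2025 units: {units / 1e6:.1f}M")
print(f"incremental EPS: ${eps:.3f}")
```

Under these placeholder assumptions the implied 2025 volume is roughly 71 million notebooks and the incremental EPS works out to about $0.034, in line with the roughly $0.03 figure cited; different share-count or tax assumptions would shift the result slightly.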

Mohan reaffirmed his Buy rating alongside a $320 price objective, calculated using a 32x multiple on his 2027 earnings forecast of $9.94 per share.

Crypto World

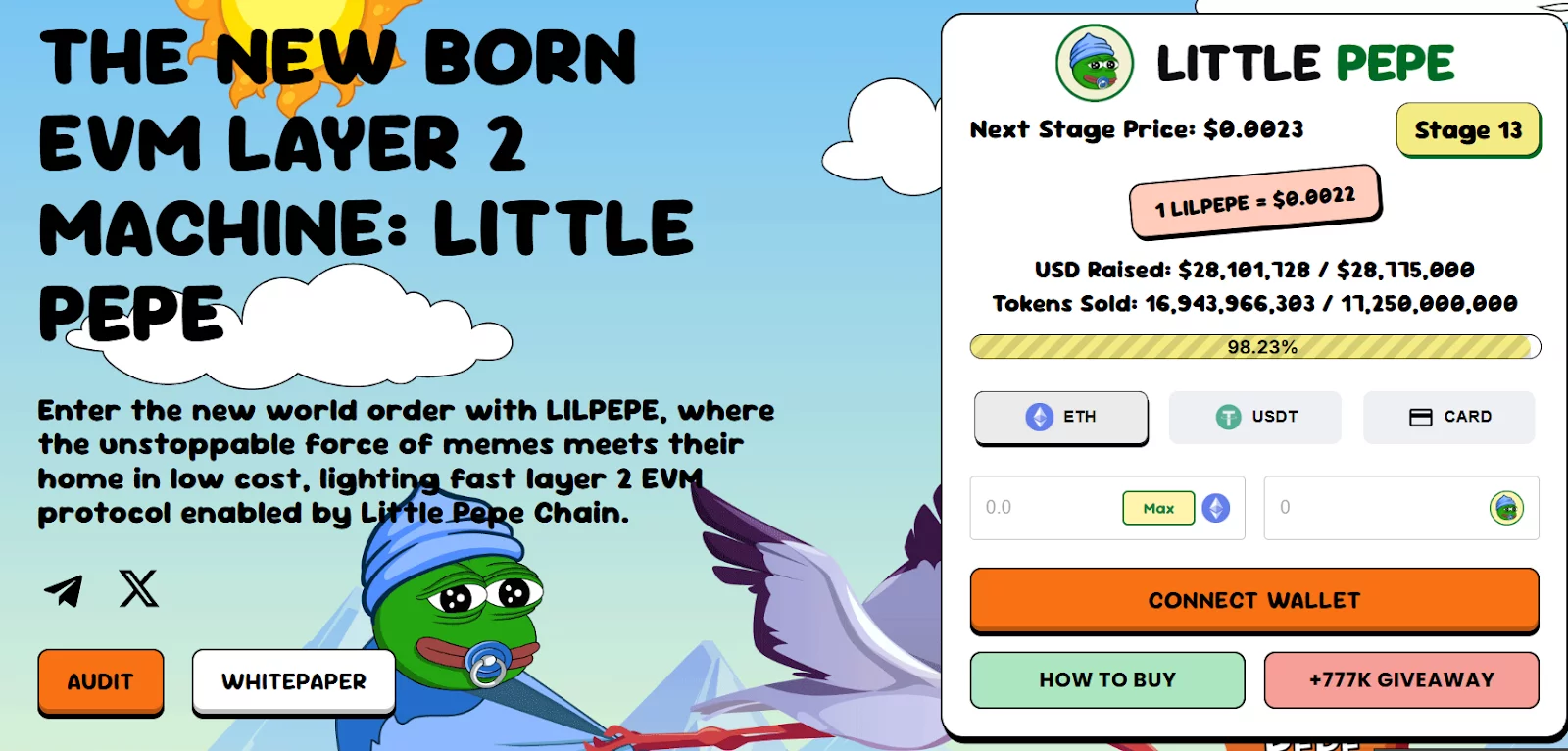

Little Pepe raises $28 million in presale, stage 13 is almost sold out

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

Little Pepe surpasses $28m in presale, advancing to Stage 13 as investor participation grows.

Summary

- Little Pepe presale surpasses $28m, entering Stage 13 as token price rises and supply nears sellout

- LILPEPE gains traction with Layer 2 utility, combining meme appeal with EVM-compatible infrastructure

- Strong presale demand pushes Little Pepe toward next price tier, highlighting early investor momentum

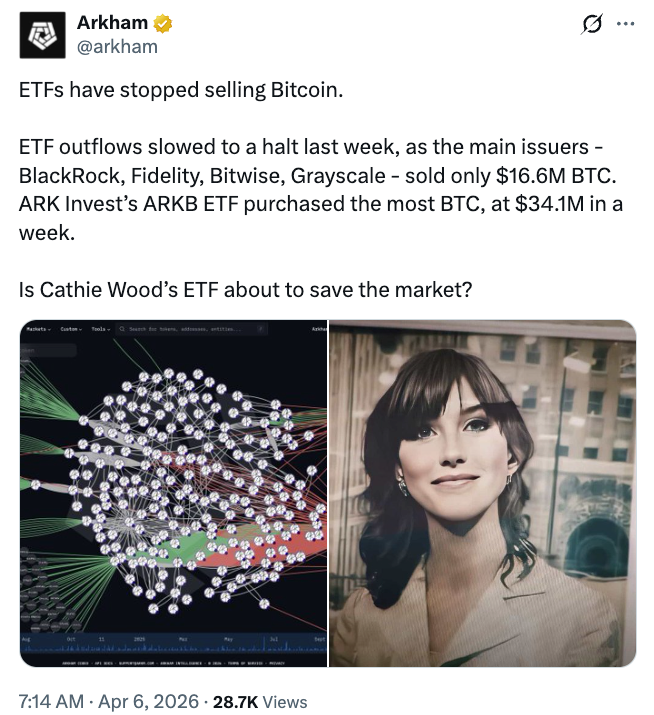

Little Pepe (LILPEPE) has reached another milestone in its ongoing presale, surpassing $28 million in total funds raised. The presale has now moved into Stage 13, where the token is priced at $0.0022, and momentum is building as the number of participants grows.

According to the latest update, the project has raised $28,101,728 of the $28,775,000 Stage 13 target, with 16,943,966,303 of the 17,250,000,000 available tokens sold. This puts pressure on late entrants as the presale moves closer to the next price level.

In the next stage, the token price is expected to rise to $0.0023, in line with the established pattern of price increases. Since Stage 1, when it was priced at $0.001, the token has appreciated by 120%, highlighting the value of an early-mover advantage.
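The presale figures quoted for Stage 13 can be checked with simple ratios. This Python sketch uses only the numbers given in this article to compute how much of the Stage 13 funding target and token allocation has been filled, and the percentage gain from the $0.001 Stage 1 price to the current and next-stage prices.

```python
# Presale progress and price-gain arithmetic, using the figures quoted above.
RAISED_USD = 28_101_728
TARGET_USD = 28_775_000
TOKENS_SOLD = 16_943_966_303
TOKENS_AVAILABLE = 17_250_000_000
STAGE_1_PRICE = 0.001
STAGE_13_PRICE = 0.0022
NEXT_STAGE_PRICE = 0.0023


def pct(part: float, whole: float) -> float:
    """Return part as a percentage of whole."""
    return 100 * part / whole


def pct_gain(start: float, end: float) -> float:
    """Percentage change from a starting price to an ending price."""
    return 100 * (end - start) / start


raised_pct = pct(RAISED_USD, TARGET_USD)
tokens_pct = pct(TOKENS_SOLD, TOKENS_AVAILABLE)
gain_now = pct_gain(STAGE_1_PRICE, STAGE_13_PRICE)
gain_next = pct_gain(STAGE_1_PRICE, NEXT_STAGE_PRICE)

print(f"funds raised vs target: {raised_pct:.2f}%")
print(f"tokens sold vs available: {tokens_pct:.2f}%")
print(f"gain from Stage 1 to Stage 13: {gain_now:.0f}%")
print(f"gain from Stage 1 to next stage: {gain_next:.0f}%")
```

The ratios come to roughly 97.7% of the funding target and 98.2% of the token allocation, and the move from $0.001 to $0.0022 is a 120% gain (130% at the $0.0023 next-stage price).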

Growing interest backed by utility and layer 2 infrastructure

Unlike most memecoins, which rely only on virality, Little Pepe seeks to position itself as a Layer 2 ecosystem with actual functionality. Using an EVM-compatible architecture, the project aims to provide ultra-fast, secure, and cheap transactions in a scalable environment.

The token operates with zero transaction tax, removing additional trading costs for users. Alongside this, staking mechanisms and NFT integrations are planned, adding further utility beyond simple trading activity.

The project aims to develop a wide ecosystem, including various applications and tools dedicated to memes, that merges its community with practical blockchain use cases.

Exchange listings and expansion plans strengthen outlook

The team has set out its future plans, which include listings on top centralized exchanges as well as decentralized exchanges (DEXs). These listings are meant to strengthen the token’s market once the presale is over and to meet the high expectations of the community, which is hoping for a $1 billion market cap.

The project has also drawn attention with its goal of entering the top 100 on CoinMarketCap, further adding to its growth prospects.

Community campaigns drive participation

Little Pepe’s rapid rise has been closely tied to strong community engagement. To celebrate its growth, the project has launched a $777,000 presale giveaway, where 10 winners will receive $77,000 worth of LILPEPE tokens each.

To participate, entrants must contribute at least $100 in the presale and complete the social engagement tasks. This makes the campaign more interactive, and more entries increase a participant’s chances of winning.

In addition, a Mega Giveaway has been introduced for Stages 12 to 17. Both large contributors and randomly selected buyers are eligible to win rewards exceeding 15 ETH, adding another incentive layer for participants.

Momentum builds as presale nears next phase

With the number of tokens available dwindling in Stage 13 and the price set to rise soon, the current phase is one of the last chances for investors to get in before the price jumps again.

Backed by its technical infrastructure, tax-free trading, and community-driven strength, Little Pepe remains a focus of attention in the crypto world. As the presale continues, its combination of meme coin and Layer 2 elements is building a story that goes beyond the hype.

For more information, visit the official website, Telegram, and X.

Disclosure: This content is provided by a third party. Neither crypto.news nor the author of this article endorses any product mentioned on this page. Users should conduct their own research before taking any action related to the company.

Crypto World

Ackman’s Pershing Square Launches $64 Billion Takeover Bid for Universal Music Group (UMG)

Key Takeaways

- Pershing Square, led by Bill Ackman, has unveiled a non-binding $64 billion merger proposal with Universal Music Group through its SPARC Holdings vehicle.

- The bid prices UMG at €30.40 per share, representing a 78% premium over the previous closing price of €17.10.

- Universal Music Group shares surged approximately 13% following the announcement, while major shareholder Bollore Group climbed around 6%.

- The proposed merger would create “Nevada Corporation,” which would trade on the New York Stock Exchange.

- The deal structure includes Michael Ovitz, former Disney president, joining the board as chairman if the transaction proceeds.

Bill Ackman’s investment firm Pershing Square has unveiled a massive $64 billion takeover proposal for Universal Music Group, seeking to combine the Amsterdam-listed music powerhouse with its SPARC Holdings special purpose vehicle in a transaction that would relocate UMG to American markets.

The all-in proposal prices Universal Music Group at €30.40 per share, marking a substantial 78% premium above Monday’s closing price of €17.10. Market reaction was swift, with UMG shares climbing approximately 13% during early Tuesday sessions. Meanwhile, Bollore Group, which maintains the largest ownership position in UMG, experienced a stock increase of roughly 6%.

Universal Music Group has not yet issued a statement regarding the takeover approach.

The offer remains non-binding at this stage. Under the proposed framework, UMG shareholders would receive €9.4 billion in cash in total, plus 0.77 shares of the newly formed Nevada Corporation for each UMG share they own.

Pershing intends to fund the cash component through multiple channels: contributions from SPARC’s rights holders, leveraged financing, and proceeds from liquidating its ownership position in Spotify.

The resulting combined company — tentatively named Nevada Corporation — would obtain a primary listing on the New York Stock Exchange, fulfilling Ackman’s longstanding objective of establishing UMG’s presence in American capital markets.

Ackman’s Strategic Rationale Behind the Proposal

In correspondence addressed to Universal Music Group’s board of directors, Ackman praised management for their “excellent” stewardship of the company’s operations. However, he highlighted persistent underperformance in the stock price since UMG’s 2021 debut on the Amsterdam exchange as the fundamental challenge requiring action.

Ackman identified three primary concerns: market uncertainty surrounding Bollore Group’s 18% ownership stake, postponement of UMG’s previously planned American listing, and what he characterized as insufficient deployment of UMG’s financial resources.

Just last month, Universal Music Group abandoned a previous arrangement with Pershing regarding the pursuit of a U.S. stock exchange listing — a decision that seems to have catalyzed Tuesday’s formal merger proposition.

Pershing holds a 4.7% stake in UMG, making it the fourth-largest institutional shareholder, according to LSEG data.

Major Stakeholders Remain Silent

Bollore Group, controlling an 18% stake in Universal Music Group, has not issued any public statement. Vivendi, holding the second-largest ownership position, similarly declined to provide commentary. Tencent Holdings, ranked as UMG’s third-largest shareholder, has not responded to inquiries.

The stance of these major investors carries significant weight. A transaction of this magnitude requires substantial shareholder consensus to advance toward completion.

Michael Ovitz, renowned talent representative and former president of The Walt Disney Company, is designated to assume the role of board chairman at UMG should the merger receive approval.

Pershing Square has indicated it anticipates finalizing the transaction by the conclusion of 2026.
